At its core, an internet of things solution is an engineered system that translates physical events into digital intelligence to drive business outcomes. It integrates connected devices, secure networks, and robust software platforms to bridge the gap between physical operations and data-driven strategy.

Deconstructing Modern IoT Solutions

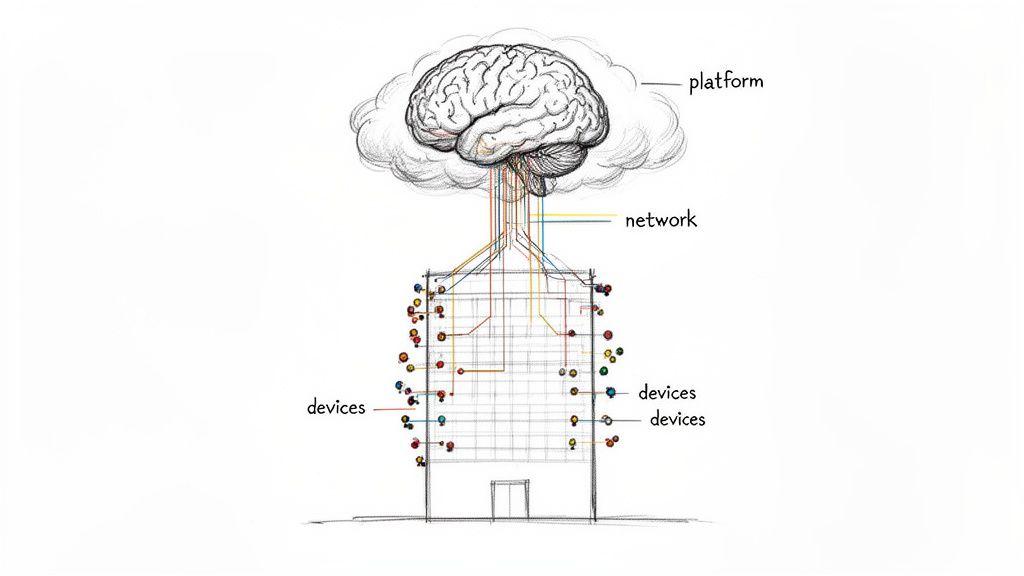

Many technical leaders initially view the Internet of Things through the narrow lens of consumer gadgets, missing its strategic value in B2B contexts. The problem with this perspective is that it overlooks the systemic nature of IoT. A resilient solution is not an assortment of components but a cohesive architecture—a central nervous system for physical operations that enables visibility and control.

Viewing IoT as a complete system is the first step toward achieving measurable results. Without this architectural mindset, initiatives often result in disconnected data silos, security vulnerabilities, and a failure to deliver quantifiable business value.

The Anatomy of an IoT Solution

A well-architected IoT solution functions like a biological sensory network. Each component has a distinct, interdependent role, working in concert to convert raw environmental data into actionable intelligence. This system is invariably built on three foundational layers.

Core Components of a Modern IoT Solution

Every robust IoT system, regardless of its application, is composed of three essential layers. Understanding their interaction is critical to engineering a solution that is both effective and maintainable.

| Component Layer | Primary Function | Key Architectural Considerations |

|---|---|---|

| Devices | Senses specific conditions (e.g., temperature, location) or performs a physical action. | Hardware reliability, power consumption, firmware security, and environmental resilience. |

| Networks | Securely transmits data from devices to the central platform. | Balancing range, bandwidth, and power usage (e.g., LoRaWAN, Cellular IoT, Wi-Fi); protocol security. |

| Platform | Ingests, processes, stores, and analyzes data to generate insights and trigger actions. | Scalability, data processing latency, security protocols, and integration with other enterprise systems. |

This system-level approach ensures every component works in concert, creating a reliable data pipeline from the physical world to your business intelligence tools.

The primary problem that Internet of Things solutions solve is the elimination of operational blind spots. By capturing real-time data from physical assets, companies can shift from reactive problem-solving to proactive optimization. This transition directly impacts efficiency, reduces operational risk, and can enable new service-based revenue models.

For CTOs and product leaders, the objective is not just to connect devices. It is to architect a scalable and secure data infrastructure that delivers a sustainable competitive advantage. The architecture must be planned for longevity, anticipating future device fleet growth and evolving security threats.

You can learn more about how these principles apply in the real world by exploring our work with IoT in the automotive industry.

Architecting for Scalability and Resilience

The long-term viability of an Internet of Things solution is determined by its architecture. A poorly designed foundation results in a brittle system that is difficult to maintain and impossible to scale. A pragmatic design, however, yields a system that can grow with the business while remaining resilient and cost-effective.

There is no single, universally applicable architectural template. The correct design is always a function of specific use-case constraints, carefully balancing processing latency, data volume, security, and operational cost.

Edge vs. Cloud: The Core Architectural Trade-Off

A foundational architectural decision is where data processing occurs. Should computations run locally, on or near the device (edge computing), or should raw data be transmitted to a centralized server (cloud computing)? The answer is dictated by constraints related to latency, connectivity, and cost.

Consider an industrial monitoring system designed to detect equipment failures. It must react in milliseconds to initiate a shutdown and prevent damage. Transmitting sensor data to the cloud for analysis introduces unacceptable latency. In this scenario, edge computing is not merely an option; it is a requirement for immediate, on-site decision-making.

Contrast this with a smart-city platform aggregating traffic data from thousands of sensors. The priority is not millisecond response but large-scale data aggregation and historical analysis. A cloud-native architecture is well-suited for this purpose, providing the ingestion capacity and computational power required for complex trend modeling.

Most mature internet of things solutions employ a hybrid model, executing time-sensitive tasks at the edge while sending aggregated data to the cloud for deeper analysis and long-term storage. We explore this topic in more detail in our guide on on-premises vs cloud computing.

Managing Diverse Data Streams with Proven Patterns

IoT systems generate heterogeneous data streams, from small, infrequent status updates to high-throughput video feeds. A naive, monolithic platform attempting to process all data uniformly will quickly become a bottleneck, unable to handle the varying velocity and volume.

The most significant architectural risk in IoT is constructing a rigid, monolithic platform. Such systems are difficult to modify, expensive to scale, and create single points of failure. A modular, service-oriented architecture is not a luxury; it is essential for resilience and long-term maintainability.

To manage this complexity, successful architectures often implement patterns like the Lambda architecture. This approach segregates data processing into distinct layers:

- Speed Layer: Processes real-time data for immediate actions and low-latency alerts.

- Batch Layer: Manages large historical datasets, executing complex queries to identify long-term trends.

- Serving Layer: Merges results from the speed and batch layers to provide a unified data view.

This separation of concerns ensures that urgent, real-time tasks are not impeded by resource-intensive batch jobs, creating a system that is both responsive and capable of deep analysis.

Designing for Future Growth and Maintainability

As a device fleet grows from ten to ten thousand, so does the complexity of its management. A truly scalable architecture must account for secure device provisioning, over-the-air (OTA) firmware updates, and remote diagnostics from its inception. Ignoring these operational realities accrues significant technical debt.

The demand for robust IoT infrastructure continues to grow. The global IoT market is projected to reach USD 724.1 billion by 2031, with North America remaining a major contributor due to early adoption and mature infrastructure. This is precisely where a well-architected custom solution delivers strategic value.

Ultimately, a well-designed IoT solution is an investment in future agility. It is built on flexible, modular principles that can adapt as business requirements evolve.

Embedding Security and Privacy by Design

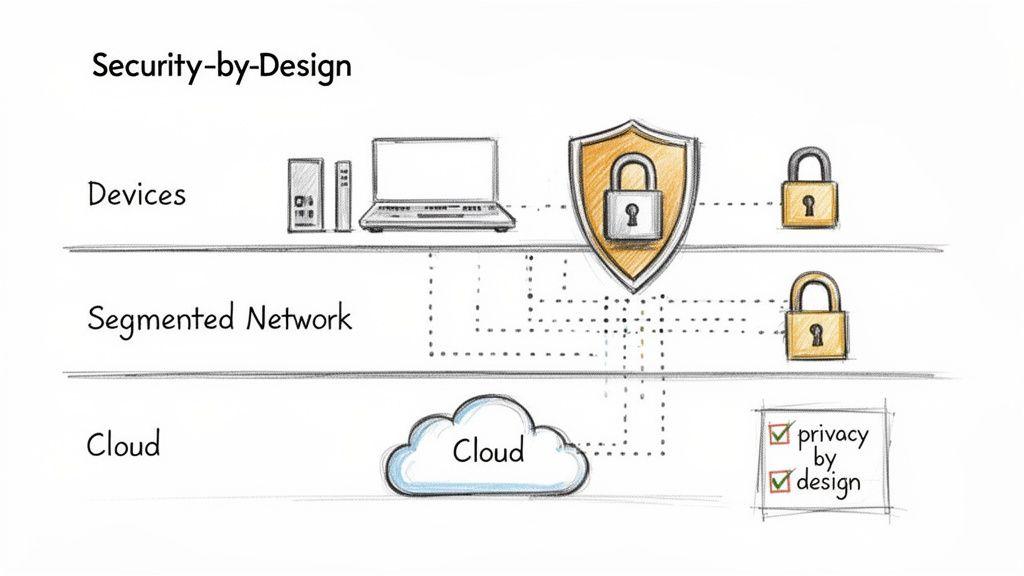

In any professional internet of things solution, security is not a feature to be added at the end of a development cycle. It is a non-negotiable architectural requirement from day one. A common and costly mistake is to treat security as a final pre-launch checklist item, which often leads to critical vulnerabilities, expensive retrofitting, and significant regulatory risk.

A defensible system is built with layered security, where each component is hardened and every communication channel is secured. This principle must extend from the physical device in the field to the cloud backend where data is processed and stored.

A Multi-Layered Security Strategy

The IoT threat surface is expansive, with potential vulnerabilities at every layer of the architecture. A naive approach might focus solely on securing the cloud, leaving thousands of deployed devices as unsecured entry points. A pragmatic strategy addresses risks methodically across the entire system.

This strategy is built on three core pillars:

- Device Hardening: This is the first line of defense. It begins with securing physical hardware through features like secure boot, which ensures a device only runs trusted, signed firmware. It also involves disabling unused ports and services to minimize the attack surface.

- Network Segmentation: Devices should never operate on a flat, open network. Segmentation isolates device groups into distinct virtual networks. If one device is compromised, this containment strategy prevents the breach from propagating across the entire system.

- Cloud Access Control: All access to cloud resources must adhere to the principle of least privilege. This simple yet powerful concept dictates that every user, service, and device is granted only the minimum permissions necessary to perform its function, drastically limiting the potential damage from a compromised credential.

The most pervasive risk in IoT is assuming a secure perimeter. The moment an attacker bypasses this “wall”—often through a weakly secured device—a lack of internal controls allows for lateral movement. A robust security posture assumes breaches will occur and builds in controls to detect and contain them.

Connecting Technical Controls to Compliance

For compliance and IT managers, these technical practices map directly to regulatory requirements. Mandates like GDPR and the NIS2 Directive are not merely legal documents; they provide technical blueprints for building trustworthy systems.

The concept of ‘privacy by design’ is a cornerstone of these regulations, demanding that data protection safeguards are engineered into the system’s architecture from the outset, not applied as a superficial layer. You can find a more detailed breakdown of this philosophy in our complete guide to Privacy by Design.

Practical Threat Modeling for Your IoT Project

Before writing any code, the engineering team should conduct a threat modeling exercise. This is a structured process for identifying potential security threats, assessing their severity, and planning mitigation strategies. The objective is to think like an attacker to identify weaknesses before they can be exploited.

A pragmatic threat modeling process includes these steps:

- Decompose the System: Map every component of the IoT solution, from device sensors to cloud databases and third-party APIs.

- Identify Trust Boundaries: Pinpoint where data moves between components under your control and those that are not. These boundaries are high-risk areas.

- Enumerate Threats: For each component and data flow, brainstorm potential failure modes. Frameworks like STRIDE (Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service, Elevation of Privilege) provide a useful structure.

- Prioritize and Mitigate: Rank threats by potential impact and likelihood. Define concrete technical controls to address the highest-priority risks.

This proactive process transforms security from a source of anxiety into a managed engineering discipline. It ensures your internet of things solution is not only functional but fundamentally secure and compliant.

Integrating AI for Intelligent Automation

A mature IoT solution does not merely collect and display data; it uses that data to drive intelligent, automated actions. This is the role of Artificial Intelligence (AI) and Machine Learning (ML), which transform a passive monitoring tool into a responsive, autonomous engine. This is where the highest value is unlocked, converting terabytes of raw sensor readings into proactive, tangible business outcomes.

The objective is to move beyond dashboards toward systems that can predict failures, optimize processes dynamically, and synthesize complex events for human operators. This requires embedding intelligence directly into operational workflows.

Practical AI Integration Patterns

Integrating AI into an IoT architecture is not about adopting trends but about making deliberate design choices that balance computational cost, latency, and model accuracy. Different use cases require different architectural patterns.

Two common and effective patterns are:

- Edge AI for Real-Time Decisions: For use cases requiring a split-second response, running ML models directly on edge devices is the only viable approach. A classic example is predictive maintenance on a factory floor. A lightweight anomaly detection model running on an edge gateway can analyze vibration data in real-time and trigger a machine shutdown before a catastrophic failure, eliminating the latency of a round trip to the cloud.

- Cloud AI for Complex Analysis: For tasks requiring significant computational power and large datasets, cloud-based AI is the appropriate choice. For example, a Large Language Model (LLM) can process and summarize thousands of operational alerts from a distributed network, delivering a concise, human-readable report instead of overwhelming an operations team with raw data.

The choice between edge and cloud is a critical trade-off. Edge AI provides speed and autonomy but is constrained by device hardware. Cloud AI offers immense computational power but introduces latency and requires reliable connectivity.

Governance and Cost Management for AI Systems

Deploying AI models into a production environment introduces new layers of operational risk and complexity that must be managed. An AI-driven action that is unreliable, unauditable, or cost-prohibitive can cause more harm than good. This is where robust governance becomes non-negotiable.

The greatest risk in AI-driven automation is the “black box” problem. If you cannot explain why an AI model made a particular decision, you cannot trust it with critical operations. Explainable and auditable AI is not a luxury; it is a core requirement for responsible implementation.

To manage these risks, your architecture needs specific, built-in safeguards:

- Guardrails and Fallbacks: Your system requires “guardrails”—pre-defined rules that prevent illogical or dangerous actions if a model produces an aberrant output. It also needs a fallback mechanism, a default safe state to which it can revert if the AI system fails.

- Continuous Monitoring: AI model performance can degrade over time as real-world conditions change, a phenomenon known as “model drift.” Continuous monitoring of model accuracy and operational outcomes is essential to ensure system reliability.

- Cost Controls: AI models, particularly large ones hosted in the cloud, can incur significant operational costs. Implementing rate limiting, caching results, and selecting appropriately sized models are essential engineering practices for economic viability.

The market for these advanced capabilities is growing rapidly. The telecom IoT market in North America, a key area for AI-enabled integrations, was valued at USD 23.16 billion in 2025 and is projected to reach USD 160.54 billion by 2035. You can explore more data on this growth in Precedence Research’s market analysis. This trend highlights the demand for intelligent, automated, and well-governed internet of things solutions.

A Phased Roadmap for IoT Implementation

A successful internet of things solution is not a single, monolithic project but a product of managed, incremental development. Attempting to build a full-scale system without foundational validation is a common cause of budget overruns and technical failure.

A phased approach is the most effective way to mitigate risk, validate assumptions, and ensure the final product delivers business value. This roadmap divides the process into four distinct, manageable stages designed to build momentum and align technical development with clear business objectives.

Phase 1: Focused Discovery and Proof of Concept

This initial phase is not about building technology but about validating the business problem. Before any hardware is procured, you must rigorously define the operational challenge and the specific metrics targeted for improvement.

With this clarity, a Proof of Concept (PoC) can be developed. A PoC is a small-scale, targeted experiment designed to answer a single critical question with minimal investment: is our core technical assumption viable?

For example, a logistics company seeking to reduce spoilage in refrigerated containers would run a PoC focused only on testing whether a specific sensor can reliably transmit temperature data from within a moving, metal-enclosed space. It is an experiment, not a miniature version of the final product.

Phase 2: Prototyping and MVP Development

With the PoC having validated the core technical hypothesis, the next step is to build a Minimum Viable Product (MVP). The MVP is the leanest version of the solution that delivers tangible value to a small group of end-users. Its purpose is to test the end-to-end system flow—from device to data to user action—and gather critical feedback.

An effective MVP includes:

- A small batch of prototype devices: Reliable enough for real-world testing but not necessarily production-grade.

- A basic data platform: Capable of ingesting, storing, and visualizing data from the devices.

- A simple user interface: Sufficient for target users to interact with the data and provide feedback on its utility.

This phase is focused on learning. The feedback gathered here will inform the architecture and feature set of the scaled solution, preventing costly changes later in the development cycle.

The process of turning raw data into intelligence follows a clear, repeatable pattern.

This flow from data collection through AI modeling to automated action is the ultimate goal of any intelligent IoT system.

Phase 3: Scaled Development and Architecture

Armed with insights from the MVP, you can now invest confidently in building the full, secure, and scalable architecture. This is where you engineer for the long term, implementing robust security, resilient network protocols, and a cloud infrastructure designed for growth.

Key activities in this stage include:

- Finalizing hardware design for mass production and supply chain management.

- Building out the full-featured software platform with a focus on maintainability and security.

- Implementing comprehensive security controls from device hardware to the cloud.

- Establishing CI/CD pipelines for automated testing and deployment.

This is the most capital-intensive phase, and its success is contingent on the quality of the preceding discovery and MVP work. The Asia Pacific region, for example, is investing heavily in this area, particularly in smart manufacturing. The IoT market there is projected to grow from USD 218.57 billion in 2025 to USD 392.76 billion by 2030. You can find more insights about the IoT market in the APAC region in recent analyses.

Phase 4: Deployment and Long-Term Maintenance

Deployment is not the end of the project. Once the solution is live, the focus shifts to observability, maintenance, and optimization. A successful IoT system requires ongoing lifecycle management to ensure its health and value.

A common failure point is underestimating the operational overhead of a deployed IoT system. The Total Cost of Ownership (TCO) is dominated not by the initial build, but by the long-term cost of managing firmware updates, monitoring device health, and ensuring system security over a multi-year lifecycle.

This final phase involves establishing robust monitoring and alerting to track system performance and device health. It also requires a clear process for deploying over-the-air (OTA) updates to patch vulnerabilities or introduce new features. This lifecycle management protects the investment and allows the solution to adapt as business needs evolve.

Measuring Success and Ensuring Long-Term Value

The true measure of an internet of things solution is its direct, quantifiable impact on business operations. A solution without clear Key Performance Indicators (KPIs) is a technical exercise, not a strategic asset.

Success is achieved when IoT data is tied directly to core business objectives. For any CTO or product leader, this means moving beyond vanity metrics like device uptime to focus on outcomes that affect the bottom line. The goal is to draw a direct line from a sensor reading to a business result.

Use-Case Specific Metrics

Generic KPIs are insufficient for specialized IoT deployments. To gain meaningful insight, success metrics must be tailored to the specific operational context.

- Manufacturing: The industry standard is Overall Equipment Effectiveness (OEE). Using sensors to track availability, performance, and quality allows a company to pinpoint production bottlenecks. A 5% increase in OEE, driven by IoT data, translates directly to higher output and lower operational costs.

- Logistics: The most critical metric is asset utilization. GPS and sensor data can track not just location but also engine hours, cargo status, and idle time, providing a fleet manager with the intelligence to optimize routes and reduce fuel consumption.

- Smart Buildings: For facility managers, success is measured in energy consumption (kWh) per square meter and occupant comfort. IoT-driven HVAC and lighting controls can reduce energy costs by 10-20% while maintaining a productive environment.

The most common failure in measuring IoT value is treating it as an IT project instead of a business initiative. If the data generated cannot be tied to a financial or operational KPI, the project lacks a clear purpose and will struggle to justify its long-term cost.

Case Study: A Clear Line to Business Outcomes

Consider a logistics company with a fleet of refrigerated trucks, facing challenges with cargo spoilage and high fuel costs. A well-designed IoT solution was implemented with two clear objectives.

First, temperature sensors inside the trailers provided real-time alerts, preventing load spoilage and leading to a 95% reduction in temperature-related incidents. Second, engine diagnostics and GPS data were used to analyze driver behavior and route efficiency.

The result was a 15% reduction in annual fuel costs—an outcome directly traceable to the IoT data. This exemplifies a successful internet of things solution: pragmatic architecture, embedded security, and a relentless focus on delivering measurable, long-term business value.

Questions We Often Hear

When discussing a new IoT initiative with CTOs, founders, and IT managers, several key questions consistently arise. They typically focus on practical concerns: cost, technology choices, and security.

What’s the Biggest Hidden Cost in an IoT Project?

While hardware and cloud services are budgeted for, the most significant long-term cost is almost always maintenance. An IoT solution not architected for maintainability becomes exponentially more expensive to manage, update, and secure as it scales from hundreds to thousands of devices.

The real costs are in managing firmware updates across a large fleet, patching security vulnerabilities in the field, and scaling backend infrastructure without downtime. Partnering with an engineering team that prioritizes a maintainable architecture is the most effective way to control the Total Cost of Ownership (TCO).

Should We Build or Buy an IoT Platform?

This is the classic ‘build vs. buy’ trade-off. The correct answer depends on your core business. Off-the-shelf platforms can accelerate time-to-market for standard use cases, but they can also be rigid and expensive to customize.

Building a custom solution requires a larger upfront investment but provides total control and the ability to develop unique features that create a competitive advantage. A hybrid approach often provides a good balance: use a platform for foundational infrastructure while building your unique, value-adding applications on top.

How Do We Keep Our IoT Data Secure and Compliant?

Security and compliance cannot be afterthoughts; they must be designed into the system from its inception. Several practices are non-negotiable:

- End-to-end encryption for all data, both in transit and at rest.

- A robust identity and access management system for every device and user.

- Adherence to the principle of data minimization—collecting only the data that is absolutely necessary.

- Clear data governance policies that address regulatory requirements like GDPR, including processes for handling data subject requests.

A “privacy by design” philosophy is not a feature but a foundational architectural choice. It means engineering safeguards into the system from the start, not attempting to patch them in after a security incident.

At Devisia, we engineer secure, scalable, and maintainable IoT solutions that are tied directly to measurable business outcomes. If you are looking for a long-term technical partner to translate your vision into a reliable digital product, we should have a conversation.