At their core, Internet of Everything (IoE) sensors are the hardware components that translate physical phenomena into digital data. They are the sensory inputs for complex software systems, capturing real-world information—such as temperature, motion, or chemical composition—and making it machine-readable.

This data is the foundational layer for building the intelligent, automated, and responsive B2B systems that define modern digital architecture.

The Strategic Value of Internet of Everything Sensors

The Internet of Everything (IoE) represents an architectural shift beyond simply connecting devices. It focuses on integrating data, processes, and people into a single, cohesive system. For technical leaders, the problem isn’t just acquiring data—it’s engineering a system that transforms that data into reliable, actionable intelligence that drives specific business outcomes.

A well-defined IoE architecture is the solution to this challenge.

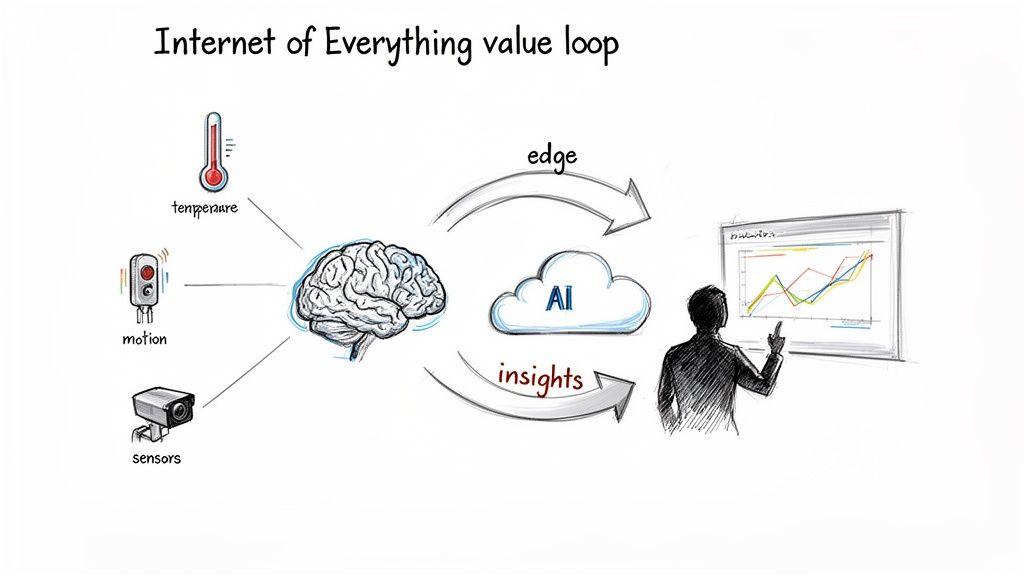

Unlike the more narrowly focused Internet of Things (IoT), IoE aims to create a complete feedback loop. In this ecosystem, Internet of Everything sensors provide the raw telemetry that fuels everything from AI platforms and predictive analytics to automated control systems.

From Data Collection to Value Generation: An Architectural Problem

A naive approach to IoE treats sensors as simple data collectors. This common mistake leads to unmanageable datasets, high cloud storage costs, and a low signal-to-noise ratio, yielding no practical return on investment.

The correct architectural goal is to design a system where data directly informs business processes and enhances human decision-making.

A mature IoE implementation establishes a value loop:

- Sensors capture specific, relevant data about an operational environment.

- Data is processed, often at the edge, to filter out noise and identify meaningful events.

- Insights are generated by cloud-based AI models or analytics platforms.

- Actions are either automated (e.g., adjusting a machine) or presented to human operators, directly impacting business outcomes.

This loop transforms sensor integration from a cost center into a foundational investment for building competitive, scalable software systems. The ability to sense and respond to the physical world is no longer an optional feature.

The Architectural Imperative

For CTOs and product leaders, integrating Internet of Everything sensors is a core architectural decision. It directly influences network protocol selection, data pipeline design, security posture, and regulatory compliance (e.g., GDPR, NIS2).

A system’s intelligence is constrained by the quality and relevance of its inputs. Neglecting the foundational sensor layer guarantees that any subsequent AI or analytics initiative will be built on an unreliable base, limiting its potential from the outset.

The primary objective is to build a system that is not only functional but also scalable, maintainable, and cost-effective across its entire lifecycle. This requires a pragmatic approach that carefully weighs the trade-offs between different sensor types, connectivity options, and data processing strategies.

We explore the practicalities of this in our guide to Internet of Things solutions. A successful IoE strategy turns sensor data from a commodity into a strategic asset that solves tangible business problems.

Selecting the Right Sensors and Communication Protocols

Choosing the correct hardware and connectivity for your Internet of Everything (IoE) system is a critical architectural decision. An incorrect choice can lead to failed deployments, spiraling operational costs, and systems that cannot meet their performance or compliance requirements.

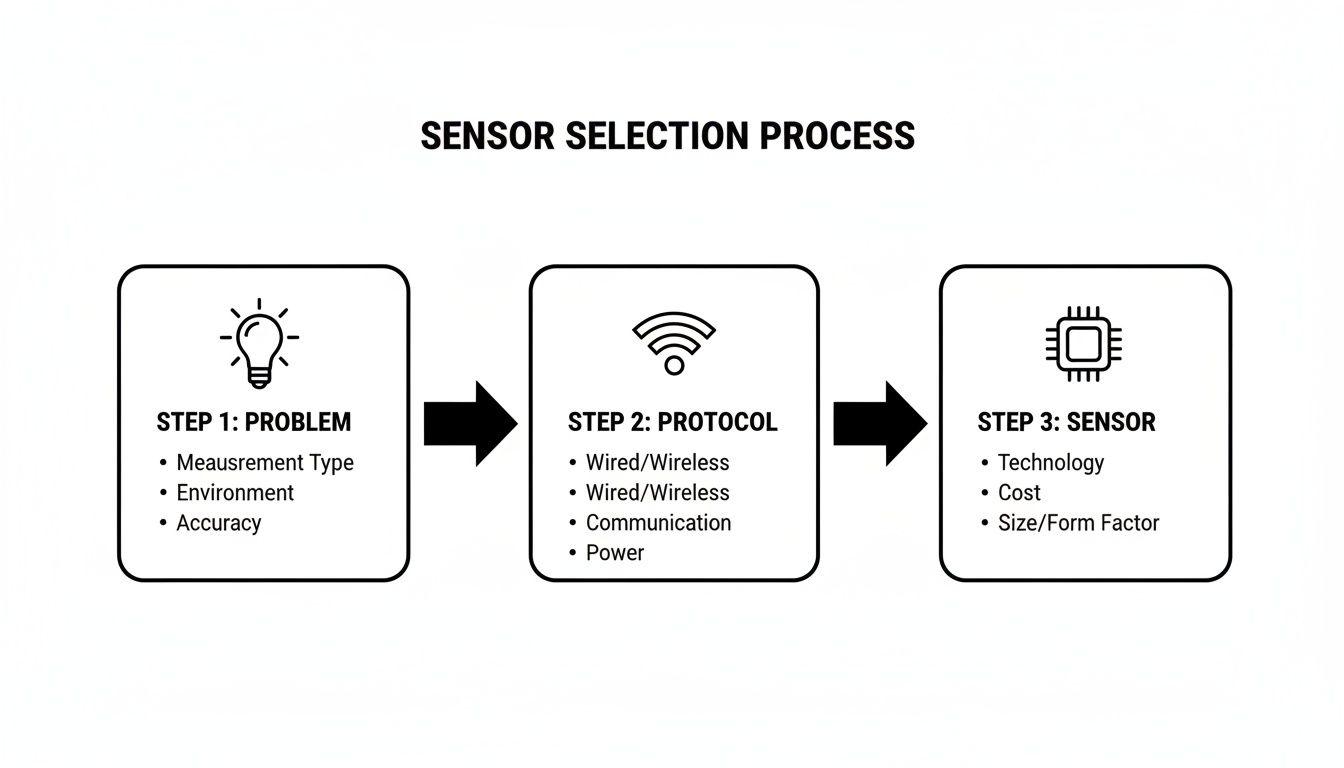

The initial problem is not selecting a sensor, but defining the data required to solve a specific business problem. Are you verifying cold chain integrity for regulatory compliance, detecting unauthorized physical access, or predicting electromechanical failure? The answer to this question defines the necessary sensor specifications.

Categorizing Sensors by Business Function

A practical approach to sensor selection is to categorize them by their function within the business logic. This connects hardware choices directly to outcomes.

- Environmental and Chemical Sensors: These components measure ambient conditions such as temperature, humidity, air quality, or the presence of specific gases. They are fundamental for compliance-driven applications in logistics (refrigerated transport), smart agriculture (soil moisture and nutrients), and industrial safety (hazardous gas detection).

- Motion and Proximity Sensors: This category includes accelerometers, gyroscopes, and passive infrared (PIR) sensors. Their function is to detect movement, orientation, or physical presence. Common use cases include asset tracking in a warehouse, anti-theft systems, and energy optimization in smart buildings through occupancy-based HVAC and lighting control.

- Optical and Biometric Sensors: Image sensors, infrared detectors, and biometric scanners capture visual or biological information. These are essential for automated quality control on manufacturing lines, advanced access control systems, and retail analytics for understanding footfall patterns.

The demand for these components is growing rapidly. In India, for instance, the IoT sensors market was valued at USD 1.2 billion in 2023 and is projected to reach USD 7.5 billion by 2030. You can read the full research about these market dynamics to understand the scale of this trend.

Analyzing Communication Protocol Trade-Offs

Once the required data is defined, the next architectural problem is determining how to transmit it reliably and cost-effectively. The communication protocol is the connective tissue of your IoE network; a mismatch between the protocol and the operational environment is a common failure point.

The decision hinges on a set of key architectural trade-offs. No single protocol is universally “best”; each is optimized for a different set of constraints.

For example, a high-bandwidth protocol will drain batteries in remote deployments, while a low-power protocol will fail to transmit complex data in time.

To make an informed choice, you need a structured evaluation framework. The following table provides a pragmatic comparison of common protocols, highlighting their strengths and weaknesses in B2B contexts.

Protocol Selection Framework for IoE Sensor Deployments

| Protocol | Typical Range | Data Rate | Power Consumption | Best-Fit B2B Scenario |
|---|---|---|---|---|
| Wi-Fi | ~50 m | High (1-600+ Mbps) | High | Smart office/factory floors where power is readily available and high-speed data is needed for video or complex sensor fusion. |
| Bluetooth/BLE | ~10-100 m | Low-Medium (1-3 Mbps) | Very Low | Wearable devices, indoor asset tracking (beacons), and short-range equipment monitoring where battery life is a primary constraint. |
| LoRaWAN | 2-15 km | Very Low (0.3-50 kbps) | Extremely Low | Smart agriculture, city-wide metering, or remote environmental monitoring where devices must operate for years on a single battery. |
| Cellular (4G/5G) | Several km | Very High | High | Vehicle telematics, mobile asset tracking, and remote high-bandwidth applications (e.g., security cameras) requiring ubiquitous coverage. |
| Zigbee/Z-Wave | ~10-100 m | Low (40-250 kbps) | Low | Smart building automation and industrial control systems where a reliable, self-healing mesh network is more critical than high data throughput. |

By aligning protocol characteristics with your specific use case constraints, you can architect an IoE deployment that is both robust and efficient.
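
The comparison above can be turned into a simple shortlisting step. The sketch below is illustrative only: the `Protocol` class and `shortlist` function are hypothetical names, the numeric values are the upper ends of the ranges in the table, and the cellular range and data rate are rough placeholders because the table gives only qualitative values for them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Protocol:
    name: str
    max_range_m: float    # upper end of "Typical Range", in metres
    max_rate_kbps: float  # upper end of "Data Rate", in kbit/s
    power: int            # ordinal: 0 = extremely low, 1 = very low, 2 = low, 3 = high


# Values transcribed from the table; the cellular figures are assumed placeholders.
PROTOCOLS = [
    Protocol("Wi-Fi", 50, 600_000, 3),
    Protocol("Bluetooth/BLE", 100, 3_000, 1),
    Protocol("LoRaWAN", 15_000, 50, 0),
    Protocol("Cellular (4G/5G)", 5_000, 1_000_000, 3),
    Protocol("Zigbee/Z-Wave", 100, 250, 2),
]


def shortlist(range_m: float, rate_kbps: float, battery_powered: bool) -> list[str]:
    """Return the protocols whose table values satisfy the stated constraints."""
    # Assumption: battery-powered devices tolerate at most "low" power draw.
    max_power = 2 if battery_powered else 3
    return [
        p.name
        for p in PROTOCOLS
        if p.max_range_m >= range_m
        and p.max_rate_kbps >= rate_kbps
        and p.power <= max_power
    ]


# A battery-powered soil sensor 5 km from the nearest gateway, sending a few bytes.
print(shortlist(range_m=5_000, rate_kbps=1, battery_powered=True))
```

A filter like this only narrows the field. Licensing, coverage, gateway cost, and interference still need to be assessed for the actual site.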

Architecting Scalable Data Pipelines from Edge to Cloud

Integrating thousands of Internet of Everything sensors is only the first step. The more complex architectural problem is designing a system to process the resulting data volume and velocity without incurring prohibitive costs or latency.

A common but flawed approach is to stream all raw sensor data directly to a central cloud. This naive architecture quickly leads to high bandwidth costs, poor latency for time-sensitive applications, and an ingestion infrastructure that is difficult to scale. For any system requiring a near-real-time response, this model is unworkable.

The solution is a distributed data pipeline that processes information intelligently at different layers—from the network edge to the central cloud. This model filters and aggregates data, ensuring that only relevant information is transmitted, thereby optimizing for cost, latency, and scalability.

The Edge and Fog Computing Paradigm

A robust IoE architecture pushes computation closer to the data source. This is the core principle of edge and fog computing. Instead of a simple sensor-to-cloud data flow, this model introduces intermediate layers to perform real-time processing and reduce the load on centralized resources.

- Edge Computing: Processing occurs directly on or near the sensor device. For example, an intelligent camera on a manufacturing line runs a lightweight ML model to detect product defects. Instead of streaming raw video, it only transmits an alert and a single image when a defect is identified. This drastically reduces bandwidth consumption and enables an immediate response.

- Fog Computing: This layer sits between the edge devices and the cloud, typically on a local gateway or on-premises server. It aggregates data from multiple edge devices and can run more complex analytics. For instance, a fog node in a warehouse could correlate data from motion, temperature, and humidity sensors to optimize the HVAC system in real-time, without a round-trip to a distant cloud data center.
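
One simple form of edge-side filtering is report-by-exception: a device transmits a reading only when it has moved meaningfully since the last transmitted value. The following is a minimal sketch of that idea. The `DeadbandFilter` class and the 0.5-degree threshold are hypothetical choices for illustration, not a recommendation for any particular sensor.

```python
class DeadbandFilter:
    """Suppress readings that stay within `threshold` of the last sent value."""

    def __init__(self, threshold: float) -> None:
        self.threshold = threshold
        self._last_sent = None  # nothing transmitted yet

    def should_send(self, value: float) -> bool:
        # Always send the first reading, then only significant changes.
        if self._last_sent is None or abs(value - self._last_sent) >= self.threshold:
            self._last_sent = value
            return True
        return False


readings = [21.0, 21.1, 21.2, 21.6, 21.7, 20.9]  # made-up temperature samples
deadband = DeadbandFilter(threshold=0.5)
sent = [r for r in readings if deadband.should_send(r)]
print(sent)  # [21.0, 21.6, 20.9]
```

In this made-up series, three of six readings are transmitted. In practice, a periodic heartbeat is usually added so that a silent device can be told apart from a stable one.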

This tiered approach is critical for managing large-scale deployments. The Asia-Pacific region, for example, is projected to see a compound annual growth rate of 39.6% in IoT sensor adoption from 2024-2029. This growth is driven by advanced applications where low-latency edge processing is a strict requirement. You can discover more insights about these global sensor market trends to understand the scale of this shift.

Core Components of a Resilient Data Pipeline

Building a distributed data pipeline requires a stack of specialized technologies, each chosen to handle the unique demands of sensor data. The architecture must be decoupled to ensure maintainability and resilience.

Sensor selection precedes the pipeline architecture itself. Defining the problem dictates the requirements for everything that follows, from communication protocols to the specific hardware needed.

The most resilient IoE data pipelines are not monolithic. They are composed of discrete, decoupled services that can be scaled, updated, and maintained independently, reducing the risk of a single point of failure bringing down the entire system.

Key components in a well-architected pipeline include:

- Message Brokers: MQTT brokers (e.g., Mosquitto, EMQX) function as the messaging backbone. They manage communication between numerous devices and backend services using a lightweight publish-subscribe model ideal for constrained devices on unreliable networks.

- Data Ingestion Services: At the cloud boundary, managed services like AWS IoT Core or Azure IoT Hub handle device authentication, message routing, and integration with other cloud services at scale.

- Time-Series Databases: Sensor data is fundamentally time-series data. Specialized databases such as InfluxDB or TimescaleDB (a PostgreSQL extension) are engineered to store and query this data format efficiently, and typically outperform a general-purpose relational schema for monitoring and analytics workloads.

- Observability Stack: A dedicated observability stack (e.g., Prometheus for metrics, Grafana for visualization, and a logging solution) is non-negotiable for monitoring the health of sensors, gateways, and the data pipeline.
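
The publish-subscribe decoupling described above rests on MQTT topic filters, where `+` matches exactly one topic level and a trailing `#` matches any number of remaining levels. The sketch below reimplements that matching rule in plain Python to show how one published message can reach several independent consumers. The topic layout (`site/<warehouse>/<metric>`) and the consumer names are assumptions for illustration, and the sketch ignores the special handling of topics beginning with `$`. A real deployment would rely on the broker for this.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT-style topic filter matches a concrete topic."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            # '#' is only valid as the last level; it matches the rest,
            # including the parent level itself ("site/#" matches "site").
            return i == len(filter_levels) - 1
        if i >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[i]:
            return False
    return len(filter_levels) == len(topic_levels)


# Hypothetical subscriptions: filter -> consuming service.
SUBSCRIPTIONS = {
    "site/+/temperature": "timeseries-writer",
    "site/wh1/#": "wh1-dashboard",
}


def consumers_for(topic: str) -> list[str]:
    """List the services that would receive a message published on `topic`."""
    return sorted(
        name for flt, name in SUBSCRIPTIONS.items() if topic_matches(flt, topic)
    )


print(consumers_for("site/wh1/temperature"))  # ['timeseries-writer', 'wh1-dashboard']
print(consumers_for("site/wh2/temperature"))  # ['timeseries-writer']
```

Because publishers know only the topic and not the consumers, a new service can be added by registering another filter, without touching device firmware.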

The decision of where to host these components involves a trade-off analysis between on-premises and cloud deployments. To dive deeper into these choices, explore our guide on on-premises vs cloud architectures. The optimal architecture balances real-time operational needs with long-term analytical goals, creating a system that is both powerful and cost-effective.

Embedding Security and Privacy into Your IoE Architecture

In the context of the Internet of Everything, security and privacy are not features; they are foundational architectural requirements. The primary problem is that the IoE attack surface extends into the physical world, creating novel threat vectors that can lead to data breaches, regulatory penalties (e.g., under GDPR or NIS2), and a permanent loss of trust.

Treating security as an afterthought is a critical error. A system that connects to the physical world (monitoring industrial processes, managing building access, or tracking assets) presents risks far beyond simple data loss. An attacker could manipulate sensor data to cause physical damage or disrupt critical operations. Your security model must address the entire system, from physical tampering with Internet of Everything sensors to sophisticated man-in-the-middle attacks.

Building a Defence-in-Depth Strategy

The solution is not a single tool but a multi-layered, defence-in-depth strategy. If one layer of defence is compromised, another is in place to mitigate the threat.

These controls are architectural necessities:

- Secure Device Identity and Provisioning: Each sensor requires a unique, non-forgeable identity, typically implemented using cryptographic certificates. This prevents an attacker from introducing a malicious device into the network to inject false data or exfiltrate legitimate data.

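- End-to-End Encryption (E2EE): Data must be encrypted in transit (e.g., using TLS) and at rest, so that intercepted or exfiltrated data remains confidential and useless to an unauthorized party. Note that TLS protects each network hop individually; where intermediate gateways or brokers are not trusted, payload-level encryption is needed for the protection to be genuinely end-to-end.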

- Secure Boot and Firmware Integrity: Devices must be architected to run only authenticated, cryptographically signed software. A secure boot process ensures that a device with tampered firmware will fail to start, preventing an attacker from establishing a persistent foothold. Over-the-air (OTA) updates must be equally secure.
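
The verify-before-run decision behind firmware integrity can be sketched in a few lines. One substitution should be stated plainly: production secure boot normally verifies an asymmetric signature (for example ECDSA or Ed25519) against a public key anchored in hardware, whereas this sketch uses HMAC-SHA256 with a shared key because it is available in the Python standard library. The function names and the key are hypothetical.

```python
import hashlib
import hmac


def sign_firmware(image: bytes, key: bytes) -> bytes:
    """Build-side step: compute an authentication tag over the firmware image."""
    return hmac.new(key, image, hashlib.sha256).digest()


def verify_firmware(image: bytes, tag: bytes, key: bytes) -> bool:
    """Device-side step: accept the image only if the tag matches."""
    expected = hmac.new(key, image, hashlib.sha256).digest()
    # compare_digest avoids leaking the mismatch position through timing.
    return hmac.compare_digest(expected, tag)


key = b"demo-only-key"            # assumption: real keys live in secure storage
image = b"\x7fFIRMWARE v1.4.2"    # stand-in for a firmware binary
tag = sign_firmware(image, key)

print(verify_firmware(image, tag, key))                # True
print(verify_firmware(image + b"\x00", tag, key))      # False: tampered image
```

The same check applies to OTA updates: the device verifies the downloaded image before writing it to the active partition, and refuses to boot or install anything that fails.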

Aligning Technical Controls with Compliance Mandates

For compliance and IT managers, these technical controls are the implementation mechanisms for legal requirements like GDPR and the NIS2 Directive, which impose strict rules on data protection and system resilience.

Privacy is an architectural choice, not a feature. An IoE system designed without privacy-by-design principles will inevitably lead to compliance failures, as retrofitting controls is often technically infeasible and prohibitively expensive.

An auditable system must demonstrate that these controls are in place and effective. For example:

- GDPR: Strong encryption and robust identity management directly address the “integrity and confidentiality” principle (Article 5(1)(f)).

- NIS2 Directive: A key requirement is to limit the impact of a security incident. Network segmentation—isolating critical IoE devices from the corporate network—is a fundamental control for achieving this.

The market’s push toward more advanced sensors makes these controls even more vital. North America, a leader in IT adoption, saw its IoT sensors market valued at USD 6.19 billion in 2024. Vision sensors, growing at a 29.91% CAGR, are increasingly being paired with LLMs for complex analysis, which only raises the stakes for security and privacy. You can learn more about these IoE sensor market findings to see the scale of what’s at risk.

By architecting an IoE system with a security-first, privacy-by-design mindset, you are not just protecting data. You are building a foundation of trust essential for long-term operational viability.

Real-World IoE Implementation and AI Integration

The problem facing technical leaders is how to move from an architectural blueprint to a functional, value-generating system without succumbing to a “big bang” deployment that is complex and risky. The solution is a phased approach: start with a Minimum Viable Product (MVP) that solves a discrete problem, deliver value quickly, and use the collected data to build more sophisticated, AI-driven capabilities.

The following use cases illustrate this iterative path from simple monitoring to intelligent automation.

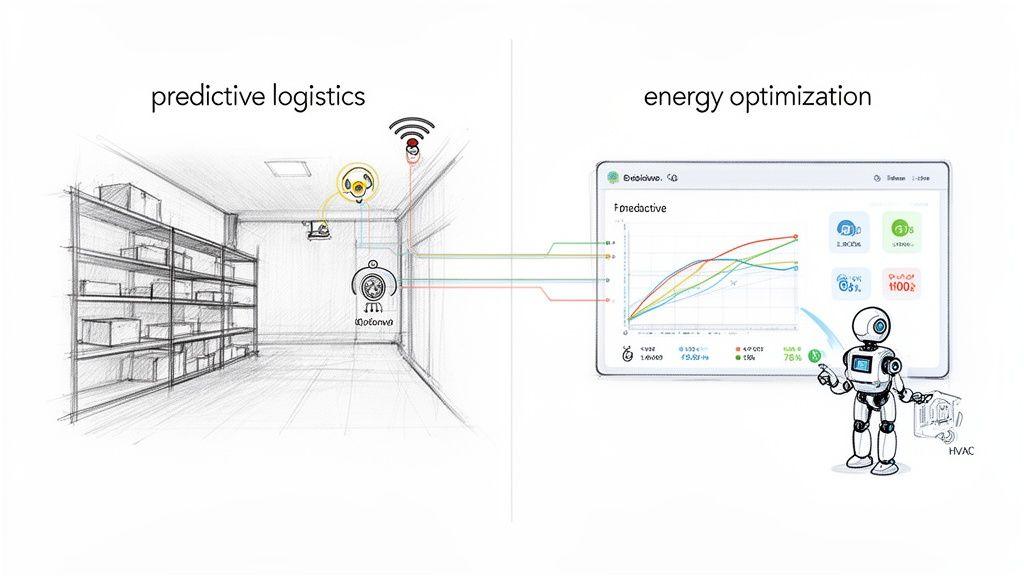

Use Case 1: Predictive Logistics for a SaaS Platform

A SaaS company provides a warehouse management system. To differentiate its product, the company wants to move beyond simple inventory tracking to predict supply chain disruptions caused by environmental conditions. This requires a network of Internet of Everything sensors.

Phase 1: The MVP for Compliance and Monitoring

The initial goal is to provide immediate, tangible value. The team deploys temperature and humidity sensors in a key client’s warehouse.

- Sensor Selection: Low-power, long-range LoRaWAN sensors are chosen for their ease of installation (no new wiring) and multi-year battery life, minimizing maintenance overhead.

- Data Pipeline: Every 15 minutes, sensors transmit data to a LoRaWAN gateway. The data is then published via MQTT to a cloud ingestion service and stored in a time-series database.

- User Value: The client gains a dashboard for real-time environmental monitoring and receives automated alerts if conditions deviate from a pre-defined safe range. This immediately helps the client meet regulatory compliance requirements for sensitive goods.
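
The alerting part of this MVP amounts to a range check per metric. A minimal sketch follows, assuming hypothetical metric names and limits (a 2-8 °C band is used here purely as an example of a pre-defined safe range; the actual limits depend on the goods and the applicable regulation).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafeRange:
    low: float
    high: float


# Assumed limits for illustration only.
LIMITS = {
    "temperature_c": SafeRange(2.0, 8.0),
    "humidity_pct": SafeRange(30.0, 60.0),
}


def check_reading(reading: dict) -> list[str]:
    """Return one alert message per metric that is missing or out of range."""
    alerts = []
    for metric, limits in LIMITS.items():
        value = reading.get(metric)
        if value is None:
            alerts.append(f"{metric}: missing")
        elif not limits.low <= value <= limits.high:
            alerts.append(f"{metric}: {value} outside {limits.low}-{limits.high}")
    return alerts


print(check_reading({"temperature_c": 5.0, "humidity_pct": 45.0}))  # []
print(check_reading({"temperature_c": 9.5, "humidity_pct": 45.0}))
```

Treating a missing metric as an alert is a deliberate choice in this sketch: for compliance monitoring, a gap in the record is often as important as an out-of-range value.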

Phase 2: AI-Powered Predictive Forecasting

After several months of data collection, the system has a valuable historical dataset. The objective now shifts from reactive alerting to proactive prediction of spoilage events.

An effective IoE system evolves. It starts by solving a simple, immediate problem and then uses the data it collects as the fuel to build more advanced, predictive capabilities that deliver compounding value over time.

The architecture is enhanced:

- Edge Processing: Gateway firmware is updated to perform basic data aggregation, such as calculating hourly averages. This reduces data transmission and cloud processing costs.

- Cloud AI Model: A machine learning forecasting model is trained on the historical sensor data, combined with external data sources like weather forecasts and shipping schedules.

- Enhanced Dashboard: The platform now displays a “risk of spoilage” score for the next 72 hours, enabling managers to take pre-emptive action, such as rerouting a shipment or adjusting warehouse climate controls.
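
The gateway-side aggregation in this phase can be sketched as bucketing readings by hour and averaging each bucket. The function name and the input shape (pairs of Unix timestamp in seconds and value) are assumptions for illustration.

```python
from collections import defaultdict
from statistics import fmean


def hourly_averages(samples):
    """Group (unix_ts_seconds, value) pairs by hour and average each group.

    Returns a dict mapping the start of each hour (Unix seconds) to the mean.
    """
    buckets = defaultdict(list)
    for ts, value in samples:
        hour_start = ts - (ts % 3600)  # floor to the start of the hour
        buckets[hour_start].append(value)
    return {hour: fmean(values) for hour, values in sorted(buckets.items())}


# Four 15-minute readings in the first hour, one in the second (made-up values).
samples = [(0, 4.0), (900, 5.0), (1800, 6.0), (2700, 5.0), (3600, 7.0)]
print(hourly_averages(samples))  # {0: 5.0, 3600: 7.0}
```

With readings every 15 minutes, this reduces upstream messages by a factor of four. Averages hide short excursions, so a gateway would typically still forward out-of-range readings immediately alongside the aggregate.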

Use Case 2: AI-Driven Facility Management

A custom software application is being built for a corporate campus to reduce energy consumption. The system will use occupancy data to allow an AI agent to intelligently control the building’s HVAC system while maintaining employee comfort and safety.

Phase 1: The MVP for Occupancy Analytics

The first step is to understand space utilization. A network of passive infrared (PIR) occupancy sensors is installed in meeting rooms and common areas.

- Sensor Selection: BLE-based PIR sensors are chosen for their low cost and simple connectivity to a local BLE-to-Wi-Fi gateway.

- Data Pipeline: The gateway aggregates “occupied/unoccupied” state changes and forwards them to a cloud backend.

- User Value: Facility managers receive a dashboard with heatmaps showing building utilization patterns. This data alone provides significant value for optimizing cleaning schedules and space planning.

Phase 2: The AI Control Agent

With a baseline of occupancy data, an AI agent is introduced to automate HVAC control. This requires careful integration and safety considerations. You can explore more on this topic in our article on the synergy of artificial intelligence and IoT.

- Integration: The cloud application is integrated with the building management system (BMS) via its API, enabling it to send control commands to HVAC units.

- AI Agent Logic: An AI agent is developed using a rules engine combined with a predictive model. It learns the typical occupancy schedule for each zone and begins to pre-cool or pre-heat rooms before occupants arrive, powering down systems in empty areas.

- Safety Guardrails: This is the most critical component. The agent operates within strict, pre-defined safety limits. It cannot disable ventilation entirely or set temperatures outside an approved comfort range. This combination of intelligent automation and robust safety constraints is essential for a successful deployment.
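
A common way to enforce such guardrails is to place a small, deterministic clamping layer between the AI agent and the BMS, so that no agent output reaches the HVAC units unchecked. The sketch below assumes hypothetical limits and class names. The real values would come from the facility's comfort and safety policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    min_temp_c: float = 19.0           # assumed comfort range, for illustration
    max_temp_c: float = 26.0
    min_ventilation_pct: float = 20.0  # ventilation may never be fully disabled


@dataclass(frozen=True)
class HvacCommand:
    setpoint_c: float
    ventilation_pct: float


def apply_guardrails(requested: HvacCommand, limits: Guardrails = Guardrails()) -> HvacCommand:
    """Clamp an agent-proposed command into the approved operating envelope."""
    return HvacCommand(
        setpoint_c=min(max(requested.setpoint_c, limits.min_temp_c), limits.max_temp_c),
        ventilation_pct=min(max(requested.ventilation_pct, limits.min_ventilation_pct), 100.0),
    )


# The agent proposes shutting down an empty zone entirely; the guardrail overrides it.
print(apply_guardrails(HvacCommand(setpoint_c=30.0, ventilation_pct=0.0)))
```

Keeping this layer free of learned components makes it easy to test and audit independently of the agent. Logging every clamped command also gives a useful signal about how often the model proposes out-of-bounds actions.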

Actionable Takeaways for Technical Leaders

For leaders considering an IoE initiative, the path from concept to a reliable, high-value system is defined by deliberate architectural decisions. This should be treated as a core evolution of your software’s capabilities, not an auxiliary project.

Success depends on a pragmatic mindset that continuously balances technical trade-offs with measurable business value.

A Foundation for Long-Term Success

The primary goal is to transform sensor data into a dependable asset. The most common mistake is to treat Internet of Everything sensors as simple collectors. Instead, view their integration as a foundational architectural choice with system-wide implications.

A pragmatic, long-term approach is the only way to transform the promise of sensor data into a genuinely intelligent system. Rushed deployments create technical debt and operational headaches. A strategic implementation builds a durable competitive advantage.

Start with security and privacy as non-negotiable, day-one requirements. Retrofitting security is far more expensive than designing it in, and is often technically infeasible. Embed controls like end-to-end encryption and secure device identities into your initial design.

Execution and Scalability

When selecting technology, conduct a rigorous analysis based on real-world constraints. The choice of sensors, protocols, and data platforms must be justified by power consumption, data throughput, and total cost of ownership.

Finally, design data pipelines for both scalability and cost-efficiency. This is where an edge computing strategy becomes a powerful tool, enabling local data processing to reduce latency and cloud expenditures. By building incrementally and focusing on measurable outcomes, your IoE system will evolve into a powerful, reliable, and intelligent asset.

Frequently Asked Questions About IoE Sensors

When planning an IoE sensor deployment, practical questions regarding long-term management and cost are paramount. Here are common concerns from technical leaders, with answers grounded in real-world implementation experience.

How do we manage thousands of deployed IoE sensors?

Managing a large fleet of Internet of Everything sensors requires a holistic strategy, not a single tool. Architecturally, a robust device management platform is essential from day one. This platform must handle remote diagnostics, secure over-the-air (OTA) firmware updates, and automated device provisioning.

Operationally, success depends on prudent hardware selection and system design. Choose sensors with a high Mean Time Between Failures (MTBF) and, where feasible, design for physical serviceability. On the software side, comprehensive monitoring must be built into your platform to track battery levels, network connectivity, and data integrity. This allows you to address device failures before they impact service delivery.

What is the most significant hidden cost in an IoE project?

While hardware procurement is a visible capital expense, the most significant hidden cost in nearly every IoE project is data management at scale. This is not merely cloud storage fees but the ongoing operational expense (OpEx) and engineering effort required to build, maintain, and evolve the data pipeline.

Ingesting, cleaning, storing, and securing terabytes of sensor data represents a substantial ongoing cost. A naive architecture that funnels all raw data to the cloud will quickly lead to unsustainable bandwidth and platform fees, undermining the project’s ROI.

This is precisely why a well-considered edge computing strategy is critical. By processing data locally and transmitting only high-value, aggregated insights to the cloud, you can effectively manage and control these long-term costs.
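
A back-of-the-envelope calculation makes the effect concrete. All figures below are hypothetical and chosen only to show the arithmetic: a fleet of 10,000 sensors sampling every 10 seconds, compared with the same fleet sending one aggregated message per hour.

```python
SECONDS_PER_DAY = 86_400


def messages_per_day(sensors: int, interval_s: int) -> int:
    """Number of upstream messages per day for a fleet reporting every interval_s."""
    return sensors * (SECONDS_PER_DAY // interval_s)


raw = messages_per_day(sensors=10_000, interval_s=10)           # every raw sample
aggregated = messages_per_day(sensors=10_000, interval_s=3_600)  # hourly summaries

print(raw)               # 86400000
print(aggregated)        # 240000
print(raw // aggregated)  # 360
```

Under these assumptions, hourly aggregation cuts message volume by a factor of 360. Actual savings depend on the platform's pricing model and on how much raw detail the analytics genuinely need.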

How can we ensure IoE sensor data is reliable for AI models?

The performance of an AI model is bounded by the quality of its training data. The principle of “garbage in, garbage out” is especially true for sensor-driven systems. Data reliability begins with hardware selection and calibration. Use industrial-grade sensors for critical applications and implement automated calibration routines to mitigate sensor drift over time.

Your data pipeline must then function as a quality gate. A robust pipeline will include:

- Data Validation: Filter out noise and physically impossible values at the point of ingestion.

- Anomaly Detection: Employ algorithms to flag anomalous readings, which can be an early indicator of sensor malfunction.

- Sensor Fusion: Where possible, combine data from multiple sensor types (e.g., an accelerometer and a gyroscope). This provides a more reliable and context-rich view of an event than a single sensor can offer.
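
The first two stages of this quality gate can be sketched as follows. Validation drops values outside a plausible physical range, and anomaly detection flags outliers using a modified z-score based on the median absolute deviation, which is one robust option among many. The function names, the range limits, and the 3.5 cut-off are assumptions for illustration.

```python
from statistics import median


def validate(values, low, high):
    """Drop readings outside the plausible range for this sensor."""
    return [v for v in values if low <= v <= high]


def flag_anomalies(values, threshold=3.5):
    """Return readings whose modified z-score exceeds `threshold`.

    Uses the median absolute deviation (MAD), which is less distorted
    by the outliers themselves than a mean/standard-deviation approach.
    """
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to measure against
    return [v for v in values if 0.6745 * abs(v - med) / mad > threshold]


raw = [21.0, 21.2, 20.9, -273.9, 21.1, 35.0, 21.0, 150.0]  # made-up samples
clean = validate(raw, low=-40.0, high=85.0)   # assumed sensor operating range
print(clean)                  # [21.0, 21.2, 20.9, 21.1, 35.0, 21.0]
print(flag_anomalies(clean))  # [35.0]
```

Note the difference in handling: impossible values are discarded at ingestion, while the plausible but unusual reading is kept and flagged, since it may be either a sensor fault or a real event worth investigating.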

For mission-critical applications, incorporating a human-in-the-loop validation process is essential for maintaining the accuracy of your AI models’ ground truth.

At Devisia, we help businesses transform their vision into reliable, AI-enabled digital products. From pragmatic architectural design to long-term maintainability, we provide a clear path to building meaningful, scalable software. Discover how we can help you build your next system at https://www.devisia.pro.