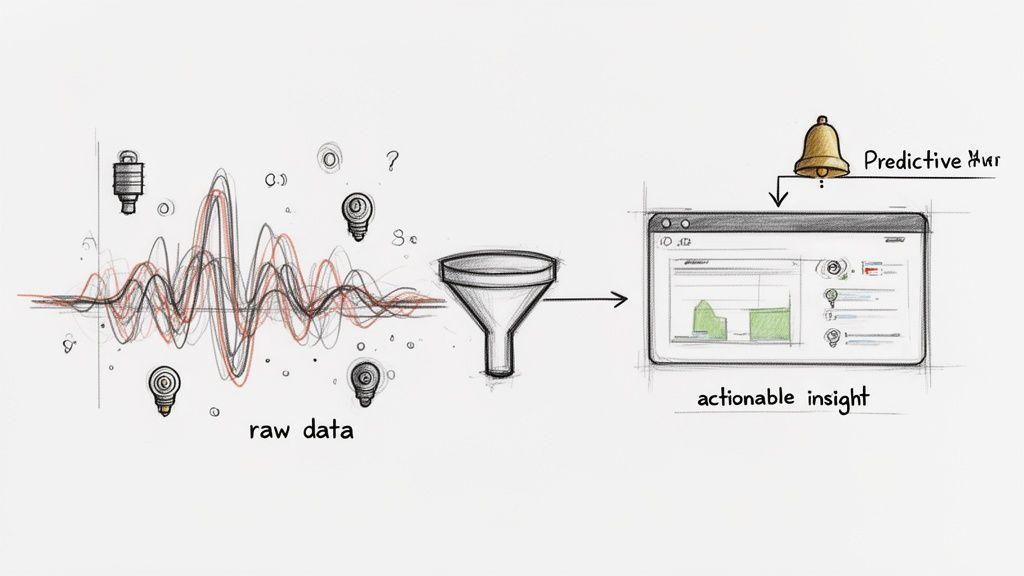

Combining Artificial Intelligence and the Internet of Things (AIoT) is not just about connecting devices; it’s about embedding intelligence into them. This architectural approach is what finally turns the overwhelming flood of raw data from connected hardware into precise, actionable insights.

The problem is that the Internet of Things (IoT) succeeded in connecting billions of devices, but this created a new challenge: organisations are now drowning in a torrent of sensor readings, logs, and telemetry data. On its own, this data has little value. The issue was never a lack of data; it was an inability to extract meaning from it.

An artificial intelligence IoT strategy closes this gap. By integrating AI models into an IoT data pipeline, you build systems that can learn, reason, and act with a degree of autonomy. This isn’t about adding another buzzword to the tech stack. It’s a fundamental architectural decision about how to generate business outcomes from operational data.

From Raw Data to Operational Value

For CTOs and product leaders, this marks a critical shift from passive data collection toward active intelligence generation. The objective is to engineer systems that are not just connected, but also contextually aware and responsive. A naive approach—simply collecting more data—only exacerbates the problem, increasing storage costs without improving outcomes.

This guide provides a pragmatic roadmap for building secure, scalable, and cost-effective AIoT systems. We focus on implementation considerations and architectural trade-offs, covering:

- Key Architectural Patterns: Evaluating edge, cloud, and hybrid models based on latency, cost, security, and compliance constraints.

- Data Governance by Design: How to embed frameworks like GDPR and NIS2 into the system architecture from day one, not as an afterthought.

- Practical Implementation Scenarios: Moving beyond diagrams to examine how to build reliable AIoT workflows that function in real-world operational environments.

The market reflects this operational urgency. The global Artificial Intelligence in IoT market is projected to reach USD 221.77 billion by 2032. This growth is a direct response to the need to manage data from an ever-expanding fleet of connected devices, expected to approach 40 billion by 2030. You can explore the full research on AIoT market trends to understand the scale of this technological shift.

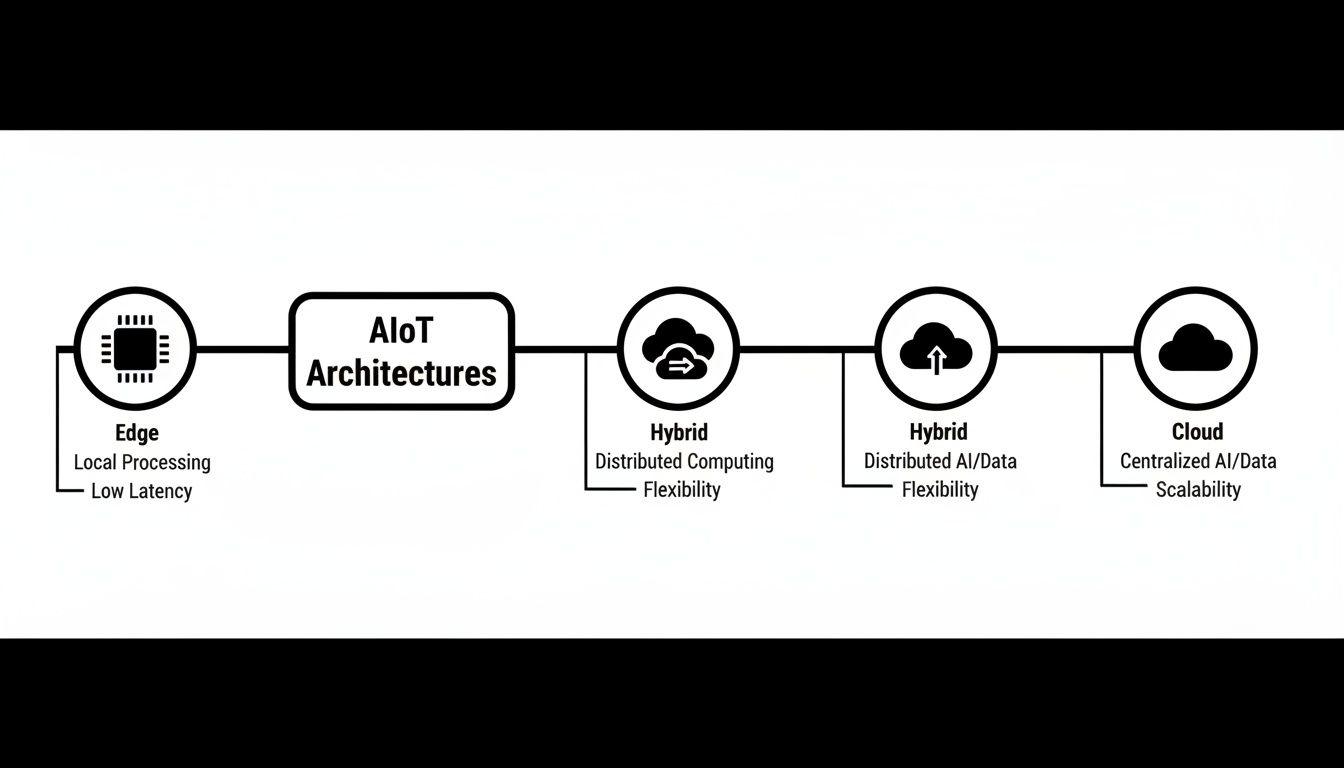

Choosing the Right AIoT Architectural Blueprint

Selecting the right architecture for your artificial intelligence IoT system is one of the most critical engineering decisions you will make. It directly determines the solution’s performance, operational cost, data security posture, and scalability. The fundamental question is: where does the data processing—the “thinking”—actually happen?

A superficial approach is to send all data from every device to a central cloud for processing. For many real-world scenarios, however, this is not just impractical; it can be dangerous. Imagine a factory using computer vision for real-time quality control on a production line. Waiting for a round-trip to the cloud to identify a defect is operationally untenable.

The Edge Computing Model

In an edge-first architecture, AI processing occurs directly on or near the IoT device. This could be a powerful sensor, a local gateway within a factory, or a small server in a retail store.

Data is analysed at its source. Only essential results—such as summaries, anomalies, or alerts—are transmitted to the cloud. This model is a necessity for any application requiring near-instantaneous response.

- Ultra-Low Latency: Decisions are made in milliseconds, independent of network delays. This is non-negotiable for autonomous vehicles, industrial robotics, or critical patient monitoring systems.

- Privacy by Design: Sensitive information, such as video feeds from a secure facility or personal health data, can be processed locally without leaving the premises. This is a foundational principle for building GDPR-compliant systems.

- Reduced Bandwidth Costs: Streaming high-resolution data from thousands of devices becomes prohibitively expensive. Edge processing dramatically reduces the volume of data transmitted over the network.

However, edge computing introduces its own trade-offs. Managing, updating, and securing a distributed fleet of intelligent devices is a complex operational challenge. Furthermore, the processing power available on an edge device is inherently limited compared to the vast resources of a cloud environment.

The Cloud Computing Model

A pure cloud model aggregates raw data from all IoT devices into a central data lake or warehouse. Here, you can apply powerful AI models to perform large-scale analysis, train new models on massive historical datasets, and generate high-level business intelligence.

This architecture is effective when immediate response is not the primary requirement. It is well-suited for a smart city project analysing long-term traffic patterns to optimise signal timing or for a utility company forecasting energy demand across an entire region.

The primary risk of a cloud-only model for an artificial intelligence IoT solution is its fragility. Complete reliance on cloud connectivity creates a single point of failure. If the network connection is lost, your “smart” devices become “dumb” data collectors, unable to function autonomously.

The Hybrid Model: A Pragmatic Default

For most enterprise-grade AIoT systems, neither a pure edge nor a pure cloud model is sufficient. The hybrid architecture provides a pragmatic balance, enabling you to execute processing tasks where they are most effective.

In this configuration, time-sensitive operations and initial data filtering happen at the edge. For instance, a device on a wind turbine might analyse vibration data in real time to detect an anomaly. If it identifies a potential fault, it can trigger an immediate local alert while sending a condensed data packet to the cloud for deeper forensic analysis. In the cloud, that specific event can then be correlated with data from thousands of other turbines to identify systemic trends or refine predictive maintenance models.

This balanced approach provides the low latency of the edge and the powerful analytical capabilities of the cloud, resulting in a system that is both resilient and efficient. It also helps mitigate vendor lock-in often associated with proprietary, all-in-one platforms by allowing for greater architectural flexibility.

Comparing AIoT Architectural Models

This table summarises the trade-offs between Edge, Cloud, and Hybrid architectures across key technical and business dimensions.

| Dimension | Edge Computing | Cloud Computing | Hybrid Model |

|---|---|---|---|

| Latency | Milliseconds; ideal for real-time control. | High; dependent on network connection. | Low latency for edge tasks, high for cloud analysis. |

| Data Privacy | High; sensitive data stays on-premise. | Lower; data is transmitted to a third party. | High; sensitive data can be processed locally first. |

| Bandwidth Cost | Low; only essential data is sent. | High; raw data streams are transmitted. | Moderate; balanced between local and cloud traffic. |

| Scalability | Complex to manage at large scale. | Highly scalable with cloud provider resources. | Best of both; scalable analytics with manageable edge fleet. |

| Offline Capability | High; core functions operate without a network. | None; device is useless without a connection. | High; critical functions run locally, sync when online. |

| Model Complexity | Limited by device hardware. | Virtually unlimited; supports large, complex models. | Simple models run at edge, complex ones in the cloud. |

| Upfront Cost | High; requires investment in capable hardware. | Low; pay-as-you-go cloud services. | Moderate; balanced hardware and cloud costs. |

The hybrid model is often the most practical starting point, offering a robust foundation that can adapt as your system’s requirements evolve.

From Anomaly Detection to Automated Workflows

Once you have a solid architecture, the next challenge is applying AI models within your IoT data streams to turn raw sensor readings into valuable actions. This is about selecting the right tool for the right job. For example, a computer vision model on an edge device can identify microscopic defects on a production line in real-time, while an acoustic model can detect a subtle change in machine noise that signals impending bearing failure. This is where artificial intelligence and IoT transitions from a concept to a powerful operational tool.

Using Embeddings for Contextual Anomaly Detection

One of the most practical techniques in AIoT is using embeddings—rich numerical representations of data—to identify subtle anomalies. A simple threshold alert might tell you a machine’s temperature is high. That’s a start, but embeddings provide deeper context.

By converting complex sensor data (vibration, temperature, power consumption) into a single vector, a model can learn the normal relationship between these metrics. When that relationship deviates, even if individual metrics remain within their own “normal” thresholds, it signals a developing problem that isolated alerts would miss.

Orchestrating Automated Agent Workflows

These insights become more powerful when integrated into an automated agent workflow. An AI agent is a system designed to execute a sequence of actions to achieve a goal, coordinating different tools and data sources.

Consider a real-world scenario: an AI agent is tasked with monitoring a fleet of industrial generators. Its workflow is not a simple “if-then” rule but a multi-step reasoning process designed for reliability and efficiency. A naive implementation could lead to false positives or unnecessary dispatches.

A robust process looks like this:

- Initial Alert: The agent receives a high-temperature alert from a generator’s sensors.

- Contextual Analysis: Instead of blindly dispatching a technician, the agent queries a vector database. It uses the alert’s data (temperature, load, runtime) to find similar historical events and cross-references them with maintenance logs.

- Pattern Recognition: The agent discovers that this pattern of alerts has preceded a coolant pump failure in 85% of past cases. It also confirms that no recent maintenance was performed on the coolant system.

- Automated Action: Armed with this context, the agent uses an API to check the on-call schedule and books the next available technician with the correct specialisation. It simultaneously creates a work order with a summary of its findings, highlighting the high probability of a coolant pump issue.

Engineering for Reliability and Cost Control

Building such workflows requires robust orchestration to ensure the system is reliable, auditable, and cost-effective. A superficial implementation can quickly lead to cascading failures or a runaway cloud bill. This is where architectural choice is critical.

As the diagram illustrates, a hybrid model often provides the optimal balance. It allows for immediate, local processing at the edge while enabling complex, large-scale analysis in the cloud—the exact combination required for sophisticated agentic workflows. To make it work, you must focus on several key engineering principles:

- Robust Observability: A unified monitoring solution is required to track the entire workflow, including device status, API call latency, AI model performance, and the cost of each step.

- Intelligent Caching: The agent in our example should not re-query the vector database for every minor temperature fluctuation. Smart caching reduces redundant model calls and API requests, which is essential for managing operational costs.

- Fail-safes and Human-in-the-Loop: What happens if the scheduling API is unavailable? The agent must have a fallback, such as sending a high-priority notification to a human operator. For irreversible or high-stakes decisions, a “human-in-the-loop” step is non-negotiable. For a deeper dive into structuring these systems, learn more about our pragmatic approach to AI system structure.

By orchestrating these components, you move from a collection of smart devices to a cohesive, intelligent system that actively improves operations.

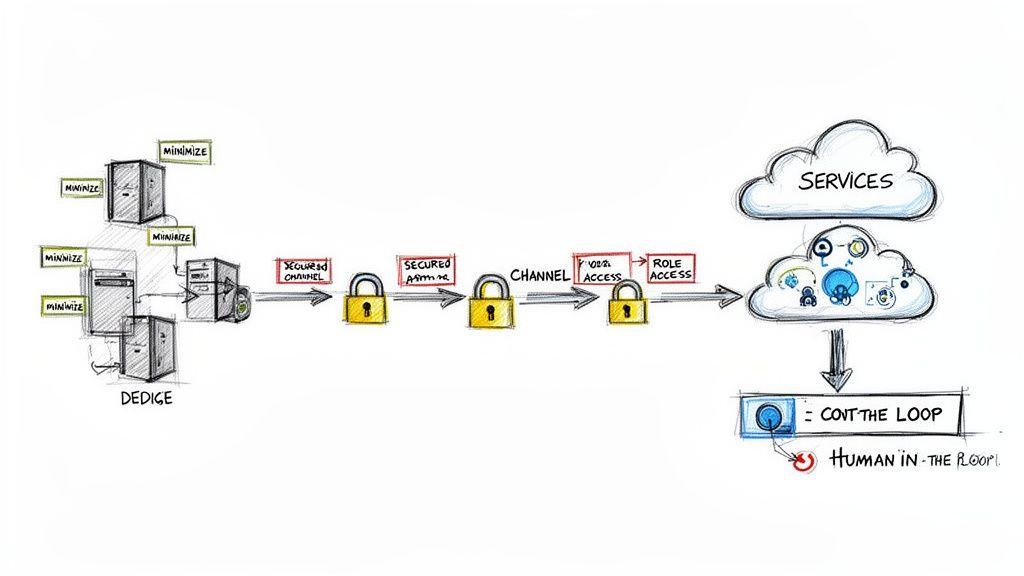

Architecting for Security and Data Governance

In any artificial intelligence IoT system, security and data governance are not features to be added later; they are architectural imperatives. Attempting to “bolt on” compliance at the end of a project inevitably leads to expensive redesigns, regulatory penalties, and a loss of customer trust. The proliferation of data and automated decision-making dramatically expands the attack surface and amplifies privacy risks.

Navigating the Regulatory Landscape as an Engineering Problem

Modern regulations are not abstract legal documents; they are concrete engineering requirements. Frameworks like the EU’s GDPR, NIS2 Directive, and the Digital Operational Resilience Act (DORA) have direct implications for how you build and operate AIoT solutions.

- GDPR (General Data Protection Regulation): This mandates “privacy by design.” In an AIoT context, this means practicing data minimisation at the source—for instance, processing data on an edge device to avoid transmitting unnecessary personal information to the cloud.

- NIS2 (Network and Information Security Directive): This requires robust security measures for critical infrastructure, translating to mandatory end-to-end encryption for all data in transit and at rest, along with strict access controls.

- DORA (Digital Operational Resilience Act): Focused on the financial sector, this demands that organisations can withstand and recover from ICT-related disruptions. It necessitates resilient architectures with built-in fail-safes and well-defined incident response plans.

Ignoring these frameworks exposes an organisation to significant legal and financial liability. The only defensible approach is to build systems that are secure and compliant by their very design.

Practical Risk Mitigation Strategies

An effective security posture is built in layers, directly into the AIoT architecture. A cornerstone strategy is data minimisation at the edge. For example, a smart camera monitoring foot traffic in a retail store does not need to stream high-definition video to the cloud. An edge device can analyse the feed locally, extract an anonymous count, and transmit only that integer. The raw footage can be immediately discarded, architecturally eliminating the risk of a personal data breach.

Another critical component is robust Role-Based Access Control (RBAC). This ensures that users and automated systems only have the minimum permissions necessary to perform their functions. A maintenance technician should not have credentials to access financial analytics, and an AI agent optimising energy consumption should not be able to alter production line configurations.

Security is an architectural choice, not a feature. In AIoT, this means designing systems with explicit controls that assume threats are not a matter of if, but when.

This proactive mindset is essential. With a reported 300% increase in IoT-related cybersecurity incidents last year, building resilient systems is a business imperative. The stakes are particularly high in manufacturing, where AIoT is helping reduce supply chain costs by 20-30%. This trend is especially pronounced in the Asia Pacific region, where the AIoT market is surging. Read more on the growing Asia Pacific AIoT market.

Implementing Guardrails and Human Oversight

Finally, for any system where an AI can trigger actions in the physical world, guardrails and human-in-the-loop (HITL) controls are mandatory. Guardrails are automated checks that prevent an AI model from taking actions that violate predefined safety or business rules.

For truly critical decisions, however, human confirmation is essential. Imagine an AIoT system detects a major anomaly on a factory’s main production line. Instead of automatically initiating a shutdown—a decision that could cost millions—the system should present its analysis and recommendation to a human operator. The final decision to halt production rests with a person who possesses contextual knowledge the AI lacks. This balanced approach combines the efficiency of automation with the wisdom of human judgment.

Managing Cost and Scalability in Your AIoT System

For any artificial intelligence IoT solution to be viable, it must be manageable both technically and financially. A proof-of-concept that becomes prohibitively expensive at scale is a failed project. Scalability is not just about handling more devices; it’s a financial problem. Every API call, gigabyte transferred, and second of cloud compute contributes to the operational bill. Without clear visibility and control, costs can escalate unpredictably.

Establishing Unified Observability

The first step toward control is comprehensive observability. The naive approach of monitoring individual components in isolation creates blind spots. A unified dashboard is needed to track the entire data pipeline from the edge device to the final insight.

- Device Health: Monitor CPU load, memory usage, and network connectivity on edge devices to pre-empt hardware failures.

- Data Pipeline Latency: Track how long it takes for data to move from sensor to insight to identify network bottlenecks or processing delays.

- AI Model Performance and Drift: Continuously monitor model accuracy. A model’s performance can degrade over time as real-world conditions change—a phenomenon known as model drift.

- Cost Per Transaction: Attribute costs to specific actions or devices. Knowing that a certain type of query costs £0.05 enables precise financial planning and ROI calculation.

Concrete Strategies for Cost Control

Once you have clear observability, you can implement specific tactics to manage spending. Runaway cloud spend is often a symptom of poor architectural choices, not an unavoidable cost.

A common mistake is treating AI model calls as a limitless resource. In a large-scale artificial intelligence IoT deployment, even a low-cost model can generate immense expense if called carelessly and redundantly.

Here are three effective strategies:

- Implement Intelligent Caching: Reduce redundant AI model calls by caching the results of common queries. If multiple sensors report similar data within a short window, the system should use a cached response instead of triggering a new, expensive inference.

- Use Rate Limits and Circuit Breakers: Protect your budget from a misconfigured device or a software bug. Implement strict rate limits on API calls and use circuit breakers to automatically halt processes that exceed cost thresholds, alerting an operator immediately.

- Choose the Right Model Mix: Not every task requires a large, proprietary model. Use a tiered approach: deploy small, efficient open-source models at the edge for initial data filtering. Reserve more powerful, expensive models for complex analyses in the cloud, but only after a potential issue has been identified. You can learn more about finding the right balance by reading our guide on on-premises vs. cloud computing.

By combining total system observability with disciplined cost-control tactics, you can build an AIoT solution that scales reliably and affordably.

Your Phased Roadmap to AIoT Implementation

Implementing an artificial intelligence IoT solution is a phased journey, not a single, high-risk “big bang” deployment. For founders and product leaders, the most effective approach is to move in stages, de-risking the investment and validating value along the way.

The journey starts with discovery. The first step is to identify a specific, high-value business problem that an AIoT system can solve. Vague goals like “improving efficiency” are insufficient. You need a concrete metric, such as “reducing unplanned machine downtime by 15%.”

Start Small with a Focused Pilot

With a clear business case, the next step is a small-scale pilot project. This serves as a reality check to validate your technical assumptions and confirm that the technology can deliver the desired outcome before committing significant resources. The pilot should have a tight scope, such as monitoring a handful of critical assets. The primary goal is to confirm that your sensors collect useful data and your initial AI models can generate meaningful insights. This is an opportunity to fail fast and learn cheaply.

This measured approach is vital. With global connected IoT devices set to hit 18.8 billion by the end of 2024 and enterprises increasing their IoT budgets, the temptation to rush is strong. A successful pilot validates your approach, allowing you to scale into a market projected to reach USD 158.1 billion by 2034 without taking on unmanageable risk. You can discover more about the growth in connected devices and its implications.

Adopt an Incremental Delivery Model

After a successful pilot, you can scale using an incremental delivery model. This means adding new capabilities in layers, ensuring each addition provides measurable value and builds on a stable foundation.

The core principle is iterative enhancement: start with a simple, robust system, then add complexity. This allows you to learn and adapt based on real-world feedback, ensuring the system evolves to solve actual business needs.

A typical implementation roadmap might look like this:

- Phase 1: Data Collection & Basic Alerts. Begin by collecting data from a wider set of devices and establishing a reliable data pipeline. Implement simple, threshold-based alerts to notify operators of immediate issues.

- Phase 2: Predictive Analytics. Once you have a solid baseline of historical data, introduce more sophisticated AI models to predict future events, shifting from a reactive to a proactive operational posture.

- Phase 3: Automated Responses. Finally, empower the system to take automated actions based on its predictions, such as generating a maintenance work order or adjusting machine parameters in real-time, with appropriate human oversight.

This methodical progression ensures your artificial intelligence IoT investment is grounded in practical results, not speculation. For organisations looking to build resilient systems, our guide on various Internet of Things solutions offers further practical insights.

Frequently Asked Questions About AIoT

When CTOs and product leaders begin evaluating artificial intelligence IoT, the same practical questions arise. Here are direct answers based on real-world implementation experience.

What Is the First Step to Implementing an AIoT Strategy?

The first step is not technological. It is identifying a high-value business problem that can be solved with intelligent data.

Start by defining a clear metric you want to influence—such as reducing unplanned downtime by a specific percentage or cutting energy waste by a set number of kilowatt-hours. Then, execute a small-scale pilot with a limited number of devices to validate that the data you collect can impact that metric. This approach de-risks the project and ensures you are building a solution for a real need, not just adopting technology for its own sake.

How Do We Manage AI Processing Costs for Thousands of IoT Devices?

You must implement a multi-layered architectural strategy. A naive implementation where every device freely calls cloud APIs is a recipe for uncontrolled spending.

First, process as much data as possible at the edge to reduce transmission and cloud processing costs. Second, implement intelligent caching so that identical queries do not trigger redundant AI inferences.

Third, use a tiered approach to AI models. A simple, low-cost model can run on the edge for initial screening, reserving a more powerful, expensive model for use only when a potential issue has been flagged. Finally, implement strict observability and rate limiting to monitor costs in real-time and prevent budget overruns.

Can We Integrate AIoT into Our Existing Legacy Industrial Equipment?

Yes, this is a common and practical scenario. You do not need to replace your entire infrastructure to gain the benefits of an artificial intelligence IoT system.

The most effective approach is to retrofit legacy machines with modern, non-invasive sensors (e.g., vibration, thermal, or acoustic sensors). These sensors connect to an edge gateway that collects the data, performs initial processing, and then securely transmits it for further analysis. This allows you to gain AI-driven insights, such as predictive maintenance, from your existing assets, extending their operational life and improving efficiency without the massive capital expenditure and disruption of a complete overhaul.

At Devisia, we specialise in building reliable, maintainable, and secure digital products. From scalable SaaS platforms to AI-enabled systems with robust governance, we provide a clear path from business vision to meaningful software. Learn how our product mindset can help you build your next solution at https://www.devisia.pro.