For founders, CTOs, and product leaders, consent management has evolved from a legal checkbox into a core architectural challenge. Article 7 of the GDPR sets strict, technical conditions for consent that directly impact system design. A superficial approach—treating consent as a mere UI element—creates significant technical debt, compliance risk, and erodes the user trust that modern software products depend on.

Why Consent Is Now a Core Engineering Problem

Many engineering teams still treat consent as a front-end task: a banner, a modal, and a checkbox. This is a fragile, surface-level approach that fails under regulatory scrutiny. The reality is that the conditions for valid consent under Article 7 GDPR have deep implications for your entire software stack, from UI components to backend services, data pipelines, and storage layers.

The problem with treating consent as an afterthought is the cost of rework. When a user withdraws consent, your systems must react immediately and verifiably. This isn’t just about flipping a boolean in a user profile. It requires propagating that change across microservices, severing data flows to third-party analytics tools, and ensuring data is either deleted or properly anonymized in all downstream systems, including AI model training datasets. A failure to do so is a compliance breach.

The Architectural Shift from Checkbox to Core Capability

The real challenge lies in Article 7’s requirement that you must be able to demonstrate that valid consent was obtained. This single obligation shifts consent from a simple UI element to a critical system capability that needs robust architectural support. A naive implementation that cannot produce an audit trail is non-compliant by design.

Privacy is an architectural choice, not a feature. A robust, auditable consent mechanism should be treated as a core system capability, on par with authentication, authorization, or logging.

This perspective reframes compliance from a regulatory burden into a sound engineering principle. A system designed for demonstrable consent is inherently more transparent, resilient, and trustworthy. Building these capabilities from the ground up avoids the technical debt and compliance risks associated with bolted-on, superficial solutions.

Key architectural questions your engineering team must answer include:

- Auditability: How can you prove, at any moment, that a specific user consented to a specific data processing activity at a specific time, under a specific policy version?

- Granularity: Does your architecture support separate consents for different purposes (e.g., analytics vs. marketing automation) and enforce them independently?

- Revocability: How easily can a user withdraw consent, and how does your system guarantee that withdrawal is honored across all services and data stores?

Answering these questions at the design stage builds a foundation for compliance that scales with your product. A well-architected consent management system isn’t just about meeting legal requirements; it’s a hallmark of engineering excellence and a crucial component in building long-term user trust.

Translating Article 7 Into Engineering Requirements

To build a compliant system, legal principles must be translated into concrete engineering tasks. Article 7 GDPR rests on four pillars for valid consent: it must be freely given, specific, informed, and unambiguous. For engineers and product leaders, these are not abstract legal terms; they are functional specifications that dictate UI design, backend logic, and data architecture.

The problem is that a failure to map these legal requirements to technical implementation leads to non-compliant systems. Ignoring this translation step is one of the fastest ways to accumulate technical and regulatory debt.

Freely Given Consent

The principle of ‘freely given’ consent means the user must have a genuine choice, without pressure or negative consequences for refusal. From an engineering perspective, this immediately invalidates common but non-compliant UI/UX patterns.

Your system must be designed to avoid:

- Pre-ticked boxes: The default for any consent option must be “no.” The user must take a clear, affirmative action to opt in. This is a hard requirement.

- Bundled consent: You cannot force a user to agree to non-essential processing (like marketing analytics) to use your core service. Your backend must be architected to handle these permissions separately.

- Deceptive UI (Dark Patterns): Interfaces that make it difficult to refuse consent, whether through confusing language or manipulative design, render the consent invalid. The “accept” and “reject” options should carry equal visual weight.

Specific and Informed Consent

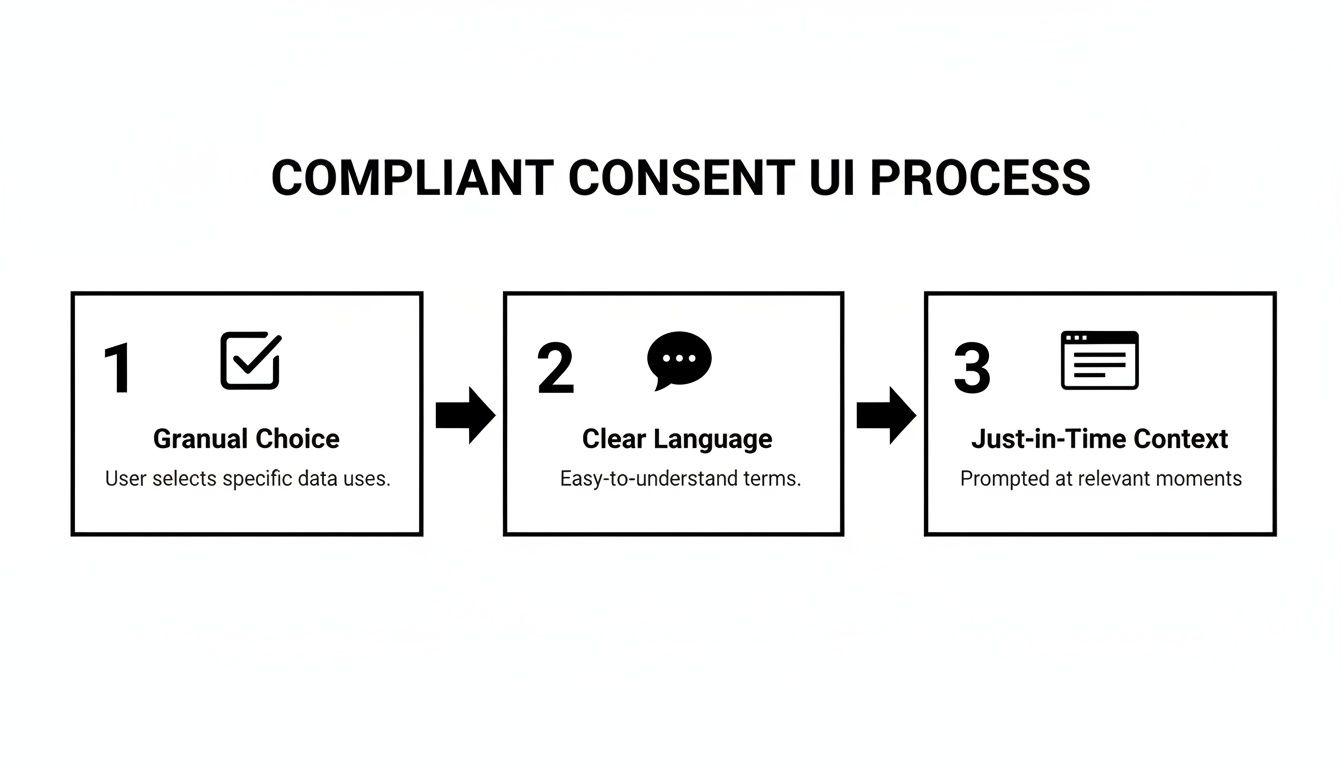

Consent must also be specific to a particular purpose and informed, meaning the user understands what they’re agreeing to before they consent. This demands both granularity in your system architecture and clarity in your user-facing language.

This translates into two key engineering requirements:

- Granular Permissions: Your consent management system must support separate, distinct permissions for different processing activities. For instance, a user might consent to transactional emails but not to sharing usage data with a third-party marketing partner. Your backend must be able to store and enforce these individual choices.

- Just-in-Time Information: Instead of linking to a lengthy privacy policy, you should request consent contextually. Use clear, simple language to explain what data you’re collecting and why, right at the moment it’s needed. This often means designing contextual modals or UI elements that deliver concise information within the user’s workflow. For more on information obligations, our guide on GDPR Article 14 provides useful context.

Unambiguous and Demonstrable Action

Finally, consent requires an unambiguous signal via a clear, affirmative action. A user must actively do something—like ticking a box or clicking a button—that your system can reliably record. This recordable event is the foundation of your audit trail.

The introduction of Article 7 GDPR forced a significant technical shift. When enforcement began, many organizations struggled to implement compliant mechanisms. By December 2018, only 50% of companies believed they were fully GDPR compliant, with some spending an average of $1.3 million on initial compliance efforts. This history underscores the importance of getting the engineering right from the start to avoid costly retrofitting.

An unambiguous action is a recordable event. Your system must be designed to log not just that a user consented, but precisely what they consented to and when.

This means your system’s logging capabilities must be robust. A simple has_consented boolean flag in a user table is dangerously inadequate. True compliance demands a detailed, immutable record that can withstand scrutiny from a Data Protection Authority (DPA).

Building a System That Can Prove Consent

Article 7(1) of the GDPR contains a simple but demanding requirement: the data controller must be able to demonstrate that consent was obtained. This shifts consent from a front-end checkbox to a critical back-end architectural challenge.

The problem is that many systems can’t provide this proof. A simple true/false field in a database is a claim, not evidence. To build a system that is compliant by design, you need to think like an auditor and create immutable, verifiable consent records. If a Data Protection Authority (DPA) investigates, this audit trail is your primary defense.

What a Defensible Consent Record Looks Like

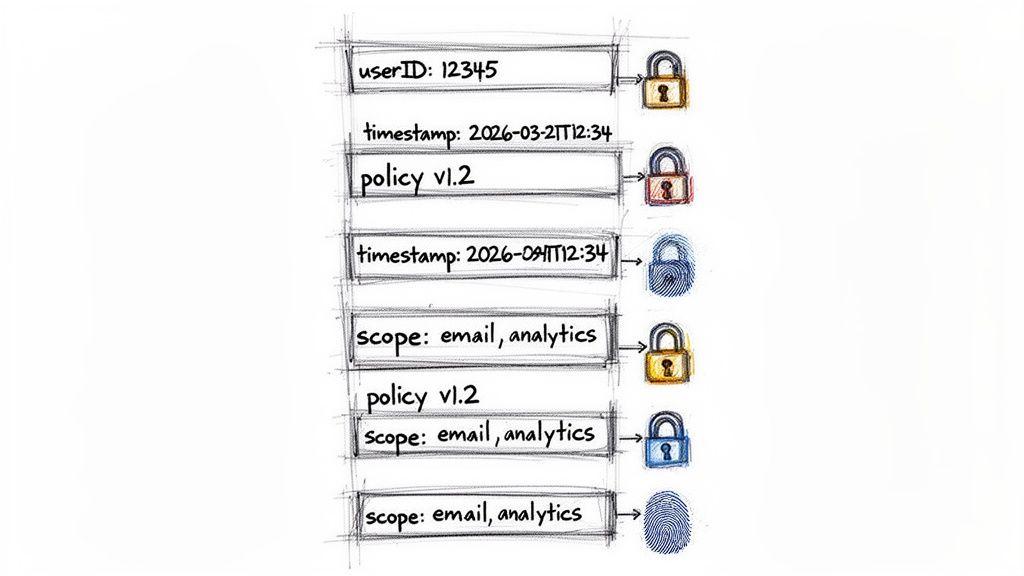

The core of a provable consent system is an immutable log. This log acts as the single source of truth for every consent-related action. Each time consent is given or withdrawn, you must capture a specific set of details to create a complete and defensible record.

The early years of GDPR demonstrated how seriously regulators take this. Authorities received 144,000 complaints and logged 89,000 data breaches, with a significant backlog of cases. These GDPR enforcement challenges on Varonis.com show that investigations can occur at any time, making robust records your best defense. A lack of proof is often treated as a lack of consent.

So, what data points are essential for a compliant consent log?

Essential Data Points for a GDPR-Compliant Consent Log

This table outlines the minimum required data fields for creating an auditable consent record, ensuring your system can demonstrate compliance with Article 7.

| Data Point | Description | Technical Implementation Example |

|---|---|---|

| User Identifier | A unique, persistent ID that unambiguously identifies the user. | user_uuid: "a1b2c3d4-e5f6-..." |

| Precise Timestamp | An ISO 8601 timestamp recording the exact moment consent was given or withdrawn. | timestamp: "2024-10-26T10:00:00Z" |

| Consent Scope | A clear, granular description of what the user agreed to. | scope: "analytics.tracking" |

| Legal Document Version | The specific version ID of the privacy policy or notice shown to the user. | document_id: "privacy_policy_v2.1" |

| Interface Context | Information about the UI where consent was captured. | context: "onboarding_modal" |

An event log containing these fields is the only way to accurately reconstruct a user’s consent journey and prove compliance.

Architectural Choices: The Right and Wrong Way to Store Logs

Knowing what to log is one thing; deciding how to store it is another. There are two main architectural paths, each with significant trade-offs for compliance.

A common but risky approach is to store consent logs in your main application database. While seemingly simpler to implement, it introduces a major flaw: data in standard relational tables can be altered, either accidentally or maliciously, undermining the integrity of your audit trail.

An immutable, append-only ledger is the gold standard for consent records. It provides the strongest proof that your records are a complete and unaltered history of a user’s consent lifecycle.

A more robust architecture uses a dedicated, append-only data store. This could be a specialized ledger database, a managed blockchain service, or even a properly configured, access-controlled log stream. This approach ensures that once a consent record is written, it cannot be altered or deleted. It can only be superseded by a new entry, such as a consent withdrawal event.

This architecture requires more upfront effort but delivers the data integrity a regulator would demand. Separating compliance records from application data also protects them from accidental changes during routine development. For modern systems, this is directly linked to managing user permissions, a topic we explore in our guide on Google Consent Mode v2.

Designing Consent Interfaces That Actually Work

A compliant backend is critical, but it’s only half the solution. The user interface is where consent is captured, and under Article 7 GDPR, its design is a legal requirement, not just a UX choice.

The problem is that a poorly designed interface can invalidate the consent it collects, regardless of how robust your backend logs are. If your UI is confusing, manipulative, or unclear, the consent is not considered valid. The goal is to build a flow that is both compliant and provides a clear, fair user experience.

This means translating the legal terms ‘informed’ and ‘unambiguous’ into practical UI/UX patterns. It requires moving away from burying consent in terms of service or using dark patterns to trick users into agreement.

Compliant Patterns vs. Dark Patterns

The line between a compliant and a non-compliant interface often comes down to key design choices. Compliant interfaces empower users with clear information and genuine choice. Dark patterns prioritize conversion over user rights and are explicitly non-compliant.

Here are the practical trade-offs:

-

Compliant Interface (The Right Way):

- Granular Checkboxes: Use separate, unticked checkboxes for each distinct processing purpose (e.g., “Analytics,” “Marketing Emails”). The user must actively opt in.

- Just-in-Time Modals: Ask for consent only when it’s needed, providing immediate context. For example, a modal requests location access only when a user opens a map feature.

- Equal Choice: Design “Accept” and “Reject” buttons with equal visual prominence. It should be just as easy to say no as it is to say yes.

-

Non-Compliant Dark Patterns (The Wrong Way):

- Pre-ticked Boxes: This defaults the user into consent, forcing them to take action to opt out. This is explicitly banned by the GDPR.

- Bundled Consent: Hiding consent for several unrelated activities behind a single “I Agree” button. This is neither specific nor granular.

- Confusing Language: Using legal jargon or vague phrases like “to improve your experience” without explaining what that means for their data.

Getting your opt-in and opt-out mechanisms right is fundamental. You can explore this further in our article on designing effective opt-in systems.

Writing Clear and Unambiguous Consent Text

The words you choose are as important as the UI design. The ‘informed’ requirement of Article 7 means people must understand what they are agreeing to. Your consent text must be a model of clarity.

Good consent text is simple, direct, and free of jargon. It should be understandable by a non-technical user, not a lawyer. The goal is clarity, not just legal cover.

Instead of writing, “We may leverage third-party data processors to analyse user behaviour for service optimisation,” say this:

“We use tools like Google Analytics to see how you use our product so we can improve it. This involves sharing anonymous usage data with Google. Do you agree?”

This is transparent, respects the user, and provides enough information for a genuine choice without causing overwhelm.

The Challenge of Consent for AI Personalization

Obtaining valid consent is particularly challenging for AI-driven systems, especially those used for personalization. The complexity of many AI models makes the ‘informed’ requirement difficult to meet. A blanket permission “to be profiled by our AI” is insufficient and non-compliant.

For any AI-powered feature, you must be specific about:

- What data is used for training and inference.

- What kind of decisions the AI makes (e.g., recommends products, sorts content).

- The logic behind the personalization (if it can be explained simply).

For example, when asking for consent to personalize a news feed, you could say: “Allow us to use your reading history to power an AI that recommends articles you might like. You can reset your personalization profile at any time in your settings.”

This level of honesty builds trust and ensures your implementation of Article 7 GDPR is defensible, even for your most advanced features.

The Engineering Challenge of Withdrawing Consent

Article 7(3) of the GDPR states that withdrawing consent must be as easy as giving it. This simple sentence is an architectural landmine for engineers. It demands a system where withdrawal is granular, immediate, and verifiably complete across your entire backend.

The problem is that many systems implement this superficially. A common but dangerous anti-pattern is to just flip a flag in a user’s profile from true to false. This doesn’t solve the problem; it creates a legal one. Data collected before the withdrawal cannot be processed further for the withdrawn purpose. A proper withdrawal must trigger a verifiable erasure or cessation-of-processing request that ripples through every service that holds that user’s data.

The consent process—granular, clear, and contextual—must essentially be run in reverse.

If a user can consent to analytics but not marketing, they must be able to withdraw from analytics while leaving other permissions intact.

Architecting for Propagation and Erasure

When a user clicks “withdraw,” your architecture must be prepared to act. In a modern microservices environment, this is a significant engineering challenge. The withdrawal instruction must be reliably propagated to every relevant service, from the main application to third-party integrations.

You need a clear map of your data flows. Key architectural patterns include:

- Event-Driven Propagation: A

ConsentRevokedevent, containing the user ID and the scope of withdrawal, can be published to a message queue. Downstream services subscribe to this event and trigger their own local cleanup or suppression processes. - Third-Party API Triggers: If you send data to a CRM or marketing tool, your system must call their respective APIs to trigger deletion or suppression on their end. This requires building and maintaining dedicated integrations for this purpose.

- Data Warehouse and Analytics Logs: This is a common point of failure. Data residing in a data warehouse or old logs must be either fully deleted or permanently anonymized for the withdrawn purpose. This often requires running surgical cleanup jobs that can remove or anonymize records without corrupting entire datasets.

The legal obligation to honor withdrawal of consent translates directly into an engineering requirement for a distributed transaction. The system must guarantee the withdrawal is completed across all services, or be able to report and retry failures.

The commercial stakes are high. When GDPR was introduced, EU firms saw an 8% reduction in profits and a 2% drop in sales. This financial impact, detailed in the GMU Law Review’s report on GDPR’s market impact, proves that compliance is not optional. Retrofitting these mechanisms is always more expensive and disruptive than building them correctly from the start.

Handling Difficult Edge Cases

Even with a solid architecture, you’ll face tricky edge cases. Your team needs a documented plan.

Two common implementation challenges are:

- Data in Backups: GDPR does not require you to alter immutable backups. However, your documented process must ensure that if a backup is restored, the user’s withdrawn consent status is immediately re-applied before any data is processed again.

- Orphaned Data: In complex systems, data can become “orphaned” in untracked services. Regular data mapping and discovery exercises are the only way to ensure your withdrawal process covers every data store, preventing “zombie data” from persisting after consent is withdrawn.

Building a compliant withdrawal system requires a deep, almost obsessive, understanding of your data landscape. Documenting the end-to-end process is as important as the code itself—it’s the evidence that proves you are meeting your obligations.

An Article 7 GDPR Checklist for Your Product Team

Legal principles are one thing; shipping compliant code is another. To bridge the gap between legal theory and engineering practice, product teams need a pragmatic way to audit their work.

This is not a legal document. It’s a hands-on checklist for CTOs, product managers, and engineers. Use it to audit how your consent architecture behaves against the core requirements of Article 7 GDPR.

Consent Collection and UI

The process starts at the point of consent capture. This is your most critical line of defense. If the user interface is ambiguous or misleading, any consent collected is invalid from the start.

- No Pre-Ticked Boxes. All consent checkboxes must be unchecked by default. The user must perform a clear, affirmative action to opt in.

- Granular Choices Offered. Can a user consent to analytics but reject marketing emails? Your system must support and enforce this. Bundling unrelated purposes is a common and avoidable mistake.

- Clear, Simple Language. Read your consent text. Does it use jargon? Rewrite it for clarity. It must be simple to understand what data is being collected and for what purpose.

- Separation from Terms. Your request for consent cannot be buried in the fine print of your Terms of Service. It must be a distinct, standalone choice.

Record-Keeping and Auditability

Your ability to prove compliance rests on the quality and integrity of your consent records. If you cannot prove a user consented, a regulator will assume they did not.

Under Article 7(1), the burden of proof is on you. If your logs are incomplete, alterable, or non-existent, you have no defense. A solid audit trail is your primary evidence.

- Essential Data Points Logged. For every consent event, your logs must capture: a unique user ID, a precise timestamp, the specific scope of consent, and the version of the privacy notice presented.

- Immutable Records. Are your consent logs tamper-proof? They should be stored in an append-only fashion. Records should never be modified—only superseded by a new event, like a withdrawal.

Consent Withdrawal Process

Finally, test what happens when a user changes their mind. Article 7(3) is clear: withdrawing consent must be as easy as giving it.

- Easy-to-Find Interface. Can a user find their consent management settings easily? It should not be buried deep in account settings.

- End-to-End System Test. When a user clicks “withdraw,” what happens next? Trigger a withdrawal and verify that processing stops across all relevant systems, including third-party tools.

Your Technical Implementation Checklist

Use this table during sprint planning, code reviews, and pre-release audits to ensure technical details align with GDPR requirements.

Article 7 Technical Implementation Checklist

| Requirement Area | Verification Check | Status (Compliant / Needs Review) |

|---|---|---|

| Consent Capture | All consent checkboxes are unchecked by default across all user-facing forms. | |

| Granularity | The system can store and enforce separate consent statuses for different processing activities (e.g., analytics, marketing). | |

| Record-Keeping | Consent logs capture user ID, timestamp, consent scope, and privacy notice version for every event. | |

| Audit Trail | Consent records are immutable; a new record is created for any change in status (e.g., withdrawal). | |

| Withdrawal UI | A user can access their consent settings and withdraw consent in three clicks or fewer from the main dashboard. | |

| Withdrawal Propagation | A test of the withdrawal function confirms that data processing stops in all connected internal and third-party systems. |

This checklist covers the most common failure points in real-world systems. Regularly reviewing these items will help you build and maintain a consent architecture that is not only compliant but also worthy of your users’ trust.

A Practical Path to Demonstrable Compliance

Viewing Article 7 GDPR as a one-time project is a common but costly mistake. It represents an ongoing architectural commitment. For technical leaders, demonstrable compliance is built on engineering excellence, not just legal checklists.

This means treating consent as a core system capability, as fundamental as authentication or logging.

The principles of Article 7 are your engineering specifications. Consent must be explicit, granular, provable, and easily revocable. Your architecture must be able to prove, at any moment, that a user gave specific, informed consent. It must also honor a withdrawal request with the same rigor.

From Roadblock to Framework

Viewing GDPR as a roadblock leads to technical debt and fragile, bolt-on solutions. Instead, treat it as a framework for building more resilient, transparent, and trustworthy digital products.

A system designed from day one with auditable consent in mind is inherently more robust and less prone to expensive rework.

Privacy by design isn’t a compliance burden; it’s a durable competitive advantage. It signals that you build with integrity, fostering the trust essential for long-term growth, especially in AI-driven systems.

This commitment translates into specific, recurring actions for your team:

- Audit Your Logs: Your consent records must be immutable and contain all critical data points: user ID, timestamp, the exact scope of consent, and the legal notice version.

- Test Your Interfaces: Scrutinize your UI/UX. Eliminate dark patterns. Ensure consent is unambiguous and freely given.

- Validate Withdrawal: Run end-to-end tests to confirm that revoking consent triggers a complete and verifiable stop to data processing across all connected services.

Embedding Privacy Into Your DNA

The key takeaway for founders, CTOs, and product leaders is this: embed these principles into your development lifecycle from the start.

Make privacy an architectural choice, not a feature tacked on before launch. By doing so, you are not just meeting the letter of the law; you are practicing superior engineering. This approach builds better products, strengthens user trust, and creates a more defensible business.

Building reliable, compliant, and AI-enabled systems is our expertise. Devisia partners with technical leaders to translate business vision into robust digital products with a focus on pragmatic architecture and long-term maintainability. Learn how we can help you build with confidence.