Article 14 of the GDPR specifies the information you must provide to data subjects when you process their personal data without obtaining it directly from them. This right to information is the cornerstone of transparency whenever you acquire data from third-party sources, such as partner APIs, data enrichment services, or public databases. It ensures individuals are aware of who is processing their data and for what purpose.

This guide is written for technical leaders—CTOs, architects, and product managers—who build and operate real-world software systems. We will move beyond legal theory and focus on the architectural patterns and operational realities of implementing Article 14.

The Problem: The Architectural Challenge of “Hidden Data”

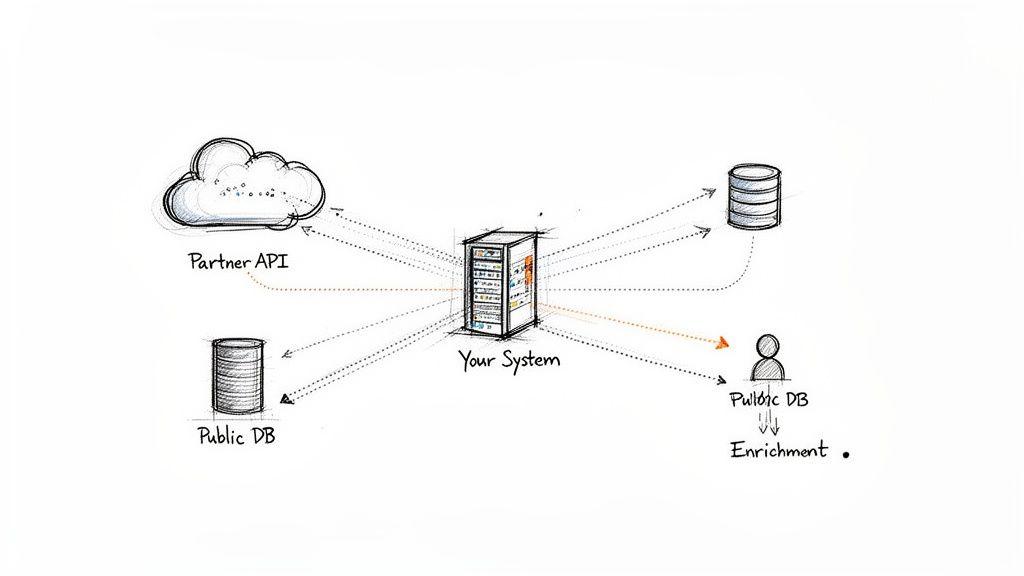

Consider a common scenario: your system ingests data from a partner’s API, scrapes a public database for research, or uses a third-party service to enrich user profiles. You now hold and process personal data for individuals with whom you have no direct relationship. This creates a significant transparency gap.

This is the exact problem Article 14 GDPR addresses. For modern B2B SaaS and AI platforms, this is not an abstract compliance edge case; it is a core operational challenge. Most complex systems are designed to aggregate data from multiple external sources to deliver value. Each time your application ingests data that a user did not provide directly, it triggers the obligations of Article 14.

Why Superficial Approaches Fail

Ignoring the provenance of indirectly collected data is a significant architectural and business risk. It goes beyond the threat of regulatory fines. From a technical standpoint, this “hidden data” introduces complexity and technical debt. Without a robust strategy for managing it, you build your system on an unstable foundation of untraceable and non-compliant data.

The risks of a naive or superficial approach are substantial:

- Compliance Penalties: Supervisory authorities can issue fines of up to €20 million or 4% of global annual turnover for transparency failures.

- Erosion of Trust: When users discover their data is being used without their knowledge, trust in your product and brand is immediately compromised. This is especially damaging for B2B relationships where data stewardship is paramount.

- System Brittleness: A lack of clear data lineage makes it nearly impossible to service data subject rights (DSRs) for access or erasure, debug data-related issues, or evolve your platform without introducing compliance vulnerabilities.

Treating Article 14 GDPR as a bureaucratic checklist is a common but dangerous mistake. A better approach is to view it as an architectural constraint that forces you to build more transparent, trustworthy, and maintainable software. Proactive compliance is a byproduct of sound engineering.

This guide reframes the Article 14 challenge for a technical audience. We will translate legal requirements into practical implementation patterns for systems that handle indirectly acquired data correctly from an architectural level.

What Article 14 GDPR Requires of Your System

When your system obtains personal data from a third party, Article 14 mandates that you close the resulting information gap. The individual must be informed that you now have their data and understand what you intend to do with it. Vague, incomplete, or missing notices are among the most common and costly GDPR compliance failures.

What Information You Must Provide

The GDPR is highly specific. The following items constitute a non-negotiable checklist for any privacy notice designed to comply with Article 14.

Every notice must be clear, concise, and include:

- Identity and Contact Details: Your company’s legal name and the contact information for your Data Protection Officer (DPO), if one is appointed.

- Purposes of Processing: A specific, unambiguous explanation of why you are using the data. “To enrich sales leads with professional affiliation data” is a valid purpose; “To improve our services” is not.

- Legal Basis for Processing: Your lawful ground for processing, such as ‘legitimate interest’. If using legitimate interest, you must also describe what that interest is.

- Categories of Personal Data: The specific types of data you have obtained (e.g., “professional contact information,” “publicly available employment history”).

A critical requirement unique to Article 14 is the obligation to disclose the source of the data. You must be transparent about where you obtained the information.

This means being as precise as possible, such as stating “data obtained from Partner X’s lead generation API.” If this is not feasible, you must at least describe the type of source, such as “from publicly accessible professional networking sites.” Failure to specify the source is a direct violation of transparency principles and a frequent target for regulatory scrutiny.

Article 14 GDPR Information Disclosure Checklist

This table translates the legal requirements of Article 14 into technical and operational considerations for your software system. Use it as a blueprint for auditing your data flows and designing your privacy notices.

| Information Requirement | Description | System Implementation Considerations |

|---|---|---|

| Controller’s Identity & Contact | Your company’s legal name and contact details, plus DPO contact if applicable. | Hard-code or store this in a configuration file, ensuring it is easily updatable. |

| Purposes of Processing | A clear, specific explanation of why you are using the data. | Your system’s back-end processes must align with these stated purposes. Mismatches create compliance risk. |

| Legal Basis for Processing | The specific lawful ground under GDPR (e.g., legitimate interest). | This is a strategic product and legal decision that dictates system obligations. |

| Categories of Personal Data | The types of data being processed (e.g., contact info, professional data). | Your data models and schemas must accurately reflect these declared categories. Avoid processing undeclared data types. |

| Source of the Personal Data | The specific third party or the nature of the source (e.g., public sources, data brokers). | This is the core of Article 14. Your architecture must support data provenance tracking to report this accurately. |

| Recipients of the Data | Any third parties you plan to share the data with. | Document all outbound API calls, data shares, and integrations that transmit personal data to external systems. |

| International Data Transfers | If data is sent outside the EEA, you must mention it and the safeguards in place. | A critical consideration for cloud-native architectures using global infrastructure. Requires careful service selection and configuration. |

| Data Retention Period | How long you will store the data, or the criteria used to determine that period. | Your system must have mechanisms to enforce these policies, such as automated deletion jobs or TTL (Time-To-Live) settings on records. |

| Data Subject Rights | A statement informing individuals of their rights (access, rectification, erasure, etc.). | Your application’s back-end and any internal admin tools must be equipped to service these requests efficiently. |

| Right to Lodge a Complaint | Information on how to complain to a supervisory authority. | This is a mandatory, standardized statement demonstrating awareness of the regulatory framework. |

| Automated Decision-Making | If you use the data for automated profiling, you must provide meaningful information about the logic involved. | For AI/ML systems, this is critical. You must be able to explain—at a high level—how your models operate and their potential impact. |

Timing Requirements: An Architectural Constraint

Article 14 imposes strict deadlines for providing this information. Your system architecture must be designed to meet these time-based triggers automatically. A manual, end-of-month batch process is insufficient.

You must provide the information by the earliest of the following events:

- Within a reasonable period after obtaining the data, and in any case, no later than one month.

- At the time of the first communication with the data subject, if you use the data to contact them.

- No later than when the personal data are first disclosed to another recipient.

For an engineering team, this means your architecture must be event-driven. It needs to generate notifications based on specific system events, such as a new record being ingested from a third-party API or the first time an automated email is sent to an enriched sales lead.

Building these triggers into your architecture is a core tenet of Privacy by Design. It ensures compliance is automated, auditable, and reliable—not an afterthought. This is also essential for maintaining accurate records of processing activities, as detailed in our guide on Article 30 GDPR.

Knowing When Article 14 Does Not Apply

While the default is to provide a notice, Article 14 includes a few narrow exemptions. Relying on an exemption is a high-stakes decision that requires careful justification and documentation. The burden of proof is always on you to demonstrate why an exemption applies.

The primary exemptions include:

- The individual already has the information: You must be able to prove that the data subject has already received all the required information from another source.

- Provision is impossible or involves disproportionate effort: “Impossible” is an absolute standard. “Disproportionate effort” is a high bar that requires balancing the effort of notification against the impact on the individual’s rights. This almost always requires a formal Privacy Impact Assessment (PIA) to justify. You are also required to make the information publicly available through other means, like your website.

- Obtaining or disclosure is laid down by law: The obligation is superseded by a legal requirement to obtain or disclose the data.

- The data must remain confidential under an obligation of professional secrecy: This typically applies to professions like law or medicine and is rarely applicable to most software companies.

Misinterpreting these exemptions is a common compliance failure. When in doubt, the default action should always be to provide the notice.

Architectural Patterns for Article 14 Compliance

Effective compliance cannot be achieved through manual processes. It must be an automated, inherent property of your system architecture. This is the essence of implementing Privacy by Design. The goal is to architect data pipelines where transparency notices are a natural and unavoidable output of data ingestion.

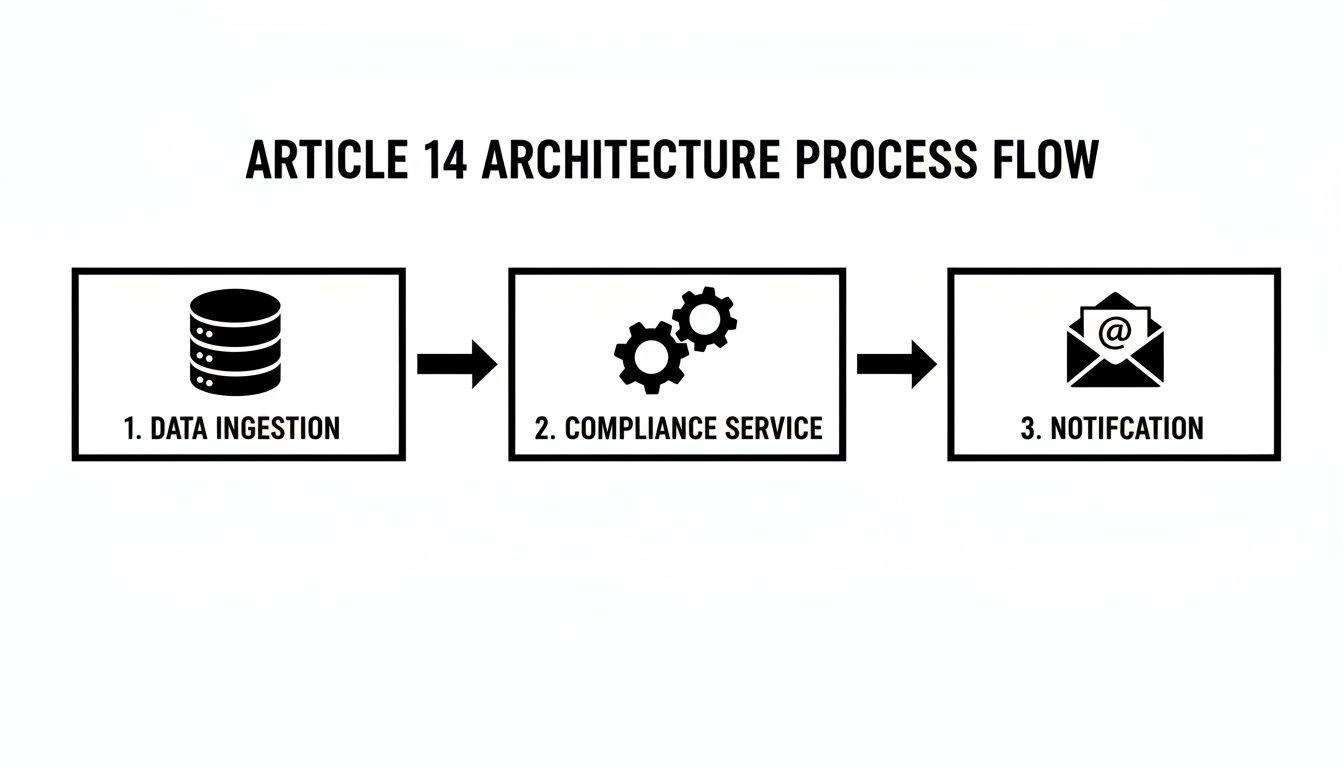

Event-Driven Architecture for Automated Notifications

An event-driven architecture is a robust pattern for automating Article 14 notifications. It decouples the data ingestion process from the compliance notification logic, creating a more resilient, scalable, and auditable system.

The workflow is as follows:

- Data Ingestion Service: A service responsible for obtaining data from third-party sources (e.g., an API client, a web scraper).

- Event Bus: Upon successfully ingesting indirectly acquired personal data, the service publishes an event (e.g.,

IndirectDataAcquired) to a central message bus like RabbitMQ or Apache Kafka. The event payload must contain the data subject’s contact information, the categories of data acquired, and critically, the data source. - Compliance Notification Service: A dedicated microservice subscribes to the

IndirectDataAcquiredevent. Its sole responsibility is to consume this event, construct the legally required Article 14 notice, and dispatch it via the appropriate channel (e.g., email API).

This decoupled pattern ensures that the core data ingestion pipeline is not blocked by the notification process. If the email service is temporarily unavailable, the event can be retried without affecting data acquisition. This achieves compliance without sacrificing system performance or reliability.

The Compliance Microservice Pattern

Implementing a dedicated Compliance Microservice is a practical way to realize this architecture. This service acts as a centralized hub for executing compliance-related logic. It subscribes to various business events across your system and enforces privacy rules programmatically.

For instance, when a “Lead Enrichment” service fetches new data, it emits an event. The Compliance Microservice, listening for this event, determines that an Article 14 notice is required and initiates the notification workflow.

A Compliance Microservice transforms abstract privacy requirements into a single, auditable, and maintainable software component. It centralizes compliance logic, simplifies updates in response to regulatory changes, and establishes clear ownership within the codebase.

This pattern is particularly effective for complex platforms that ingest data from numerous third-party sources, ensuring that Article 14 obligations are met consistently and automatically. You can read more about this philosophy in our article on integrating GDPR principles through Privacy by Design.

Immutable Audit Logs for Provability

Proving that you sent a notification is as important as sending it. In the event of a regulatory audit, you must provide a verifiable record of your compliance actions. The most robust technical solution is an immutable, append-only audit log.

When the ComplianceNotificationService dispatches a notice, it should simultaneously write a record to a secure log. Each log entry must contain:

- A unique identifier for the data subject.

- The precise timestamp of the notification attempt.

- The delivery channel used (e.g.,

email). - A hash of the notice content to prove what was sent.

- The delivery status returned by the provider (e.g.,

sent,delivered,bounced).

Storing these logs in a system like an append-only database or a write-once cloud storage bucket (e.g., AWS S3 Object Lock) ensures their integrity. This architectural choice provides the technical proof required to substantiate your compliance claims. A study on GDPR’s impact on tracking technologies found that after GDPR, the number of trackable consumers via cookies dropped by 12.5%, demonstrating that compliance architecture has measurable business consequences.

Real-World Scenarios and Implementation

Theory is useful, but compliance is achieved in code. Let’s examine how to apply these architectural patterns to common B2B software scenarios.

Scenario 1: Sales Intelligence SaaS Platform

Problem: A SaaS platform enriches sales leads by pulling professional data (job title, company) from a third-party data provider’s API. This is a clear Article 14 trigger.

Implementation:

- Event Trigger: The data enrichment service, upon receiving a successful API response from the provider, publishes a

ProfileEnrichedevent. The payload includes the lead’s email and a specific identifier for the data provider (e.g.,provider_name: "Clearbit"). - Queued Notification: A compliance service consumes this event and places a notification task into a dedicated queue. This decouples the systems and ensures resilience.

- Timed Dispatch: A worker process sends an email notice within the one-month deadline (ideally much sooner). The email template explicitly states your company’s identity, the purpose (sales lead enrichment), the categories of data, and names the third-party provider as the source.

Scenario 2: AI-Powered Recruiting Tool

Problem: An AI platform scrapes public sources like GitHub and professional networking sites to identify potential job candidates. The “source” is not a single API but a distributed set of public web pages.

Implementation:

- Log Data Provenance: The scraping engine must be designed to log the specific source URL for each piece of data it collects. Storing a

source_urlattribute alongside the data record is non-negotiable for traceability. - Automate First Contact Notice: The system is configured so that the very first communication sent to a candidate (e.g., an outreach email) automatically includes the Article 14 notice. This satisfies the “at the time of first communication” timing requirement.

- Provide Clear Source Information: The notice must be direct: “We identified your professional profile on publicly available sources, including platforms such as GitHub and professional networking sites, to assess suitability for roles with our clients.” This transparency is key. Documenting this process is also a critical input for a Privacy Impact Assessment.

Scenario 3: B2B Analytics Platform

Problem: A business intelligence platform integrates customer data from a partner company via API to provide joint analytics. This is a classic controller-to-controller data share that falls under Article 14.

Implementation:

- Contractual Clarity: The data-sharing agreement with the partner must explicitly define roles and responsibilities for providing Article 14 notices.

- Automated Ingestion Workflow: The data ingestion pipeline is designed to handle this. As data arrives from the partner API, it is tagged with the partner’s identity as the source.

- Targeted Notification: The system triggers a notification to the data subjects. The notice clearly states that their data was received from the specified partner for the purpose of joint analytics.

In all scenarios, the unifying principle is architectural traceability. Your system must be able to track where each piece of indirectly acquired data originated and trigger a documented, automated notification. A system that cannot do this is not only a compliance risk but is also architecturally deficient.

Conclusion: Privacy as an Architectural Choice

For founders, CTOs, and product leaders, the complexities of Article 14 GDPR distill down to a core principle: privacy is an architectural choice, not a feature.

A reactive, manual approach to compliance is unsustainable. It creates technical debt, introduces risk, and erodes the user trust that is essential for long-term success. The only scalable and defensible strategy is to embed transparency and accountability into the very fabric of your software systems.

Key Takeaways for Technical Leaders

- Map All Indirect Data Inflows: You cannot manage what you do not measure. The first step is to create a comprehensive inventory of all third-party data sources. If you cannot trace data to its origin, you have a critical architectural flaw.

- Automate Compliance Workflows: Implement event-driven systems that automatically trigger and log Article 14 notices upon data ingestion. Manual processes are unreliable and do not scale.

- Prioritize Data Provenance: Design your data models and pipelines to track the source of every piece of personal data. This is the foundation of provable compliance.

- Document Everything: Meticulously document your legal basis for processing, your data flows, and your justifications for any exemptions. This documentation is your first line of defense in an audit.

Viewing Article 14 not as a regulatory burden but as a set of engineering constraints leads to better software. Systems designed for transparency and accountability are inherently more robust, maintainable, and trustworthy—the hallmarks of any product built to last.

Frequently Asked Questions About Article 14

Do we need to send a notice for publicly available data?

Yes, in almost all commercial contexts. The fact that data is publicly available (e.g., on a professional networking site) does not exempt you from your obligations as a data controller. The moment you collect and process that data for your own purposes, you trigger the information duties of Article 14. You must inform the individual that you are processing their data and state that you obtained it from a public source.

How specific must we be about the data source?

As specific as possible. The goal is transparency.

- Best practice: Name the specific company or platform (e.g., “We obtained your professional contact information from Data Provider X”).

- Acceptable minimum: If naming the specific source is not feasible, you must describe the type of source (e.g., “from publicly accessible professional networking platforms”).

A generic statement like “from third-party sources” is insufficient and demonstrates a failure to meet the transparency requirement. Your system architecture should be designed to track provenance, making specific attribution possible.

Can we combine Article 14 notices with marketing emails?

Yes, this can be an effective way to meet the “at the time of first communication” deadline. However, the notice must be clear, prominent, and distinct from the marketing content. Burying the information in fine print within a promotional message is non-compliant. A best practice is to use a clearly labeled section (e.g., “Information About Your Data”) at the beginning of the email to present the required disclosures before the marketing content.

What if we have no contact information for the data subject?

This is one of the few scenarios where the “impossibility” exemption may genuinely apply. If you have obtained personal data but have no email address, phone number, or other means of contacting the individual, it may be impossible to provide a direct notice. However, this is not a complete waiver. You are still required to make the Article 14 information publicly available, typically through a detailed privacy notice on your website that describes this specific processing activity. You must be prepared to rigorously document and justify this decision.

How does Article 14 relate to the upcoming AI Act?

Article 14 GDPR and the EU AI Act share the goal of transparency but operate at different layers. The GDPR’s right to information, particularly the right to “meaningful information about the logic involved” in automated decision-making (Article 15), already creates a foundation. The AI Act will build on this by imposing more specific transparency obligations on providers of high-risk AI systems. A robust Article 14 implementation, which clearly documents data sources and processing purposes for training and operating AI models, is a critical and necessary step toward future-proofing your systems for AI Act compliance.

Building robust, compliant, and scalable software requires a partner that understands that privacy is an architectural principle. Devisia specializes in developing high-stakes digital products and AI-enabled systems where reliability and trust are paramount.

Learn how we help companies transform their vision into resilient, market-ready software at https://www.devisia.pro.