For a long time, travel artificial intelligence felt more like a future concept than a present-day necessity. That’s changed. Today, it represents a critical architectural upgrade—one that’s essential for survival and growth.

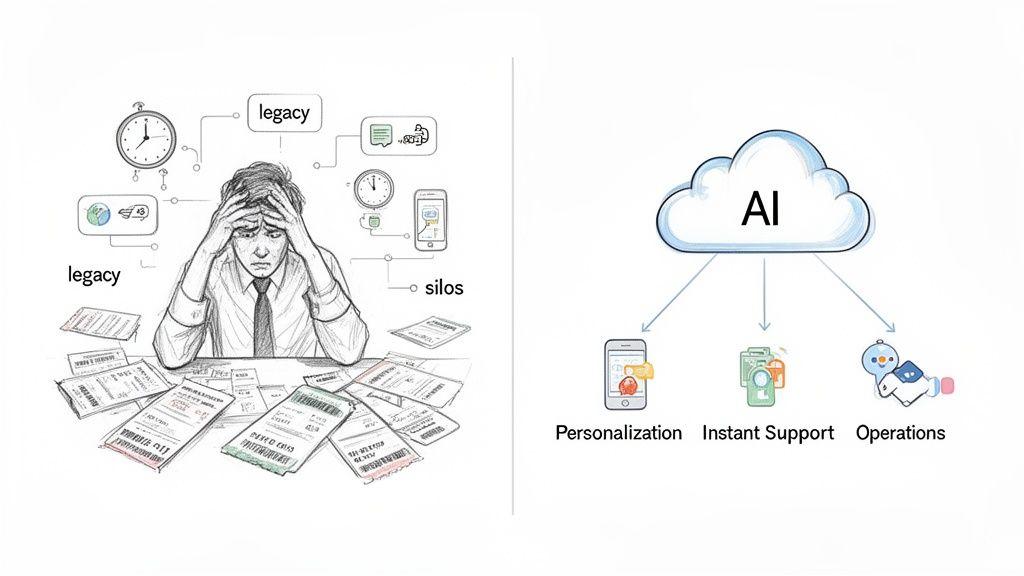

For technical leaders, this isn’t just about adding a new feature. It’s about fundamentally rethinking how travel services are engineered and delivered to solve the industry’s core problems: data overload, fragmented user experiences, and immense operational pressure that legacy systems simply can’t handle anymore.

The Problem: Legacy Systems vs. Modern Travel Demands

The travel industry operates on a foundation of massive, unrelenting complexity. Technical systems must juggle vast, volatile datasets—flight schedules, hotel availability, pricing that changes by the second, and a constant stream of user preferences. All of this happens against a backdrop of razor-thin margins and fierce competition.

The traditional approach, with siloed and rigid legacy systems, is no longer viable. These systems were never designed for the real-time, hyper-personalised service that modern travellers expect.

This architectural gap creates significant business challenges that directly impact revenue and operational efficiency. For founders and CTOs, the problem isn’t a lack of data; it’s the inability to process and act on that data in real-time. This leads directly to missed revenue opportunities, poor customer experiences, and bloated operational overhead.

Key Business Drivers for AI Adoption

Artificial intelligence offers a pragmatic architectural pattern for processing information and automating decisions at scale. Adopting it is not about hype; it is a direct response to tangible business pressures.

- Operational Overload: Manual processes for customer support, disruption management (like rebooking passengers after a cancellation), and resource planning are slow, costly, and prone to error. AI-driven automation executes these tasks at scale, 24/7, with high reliability.

- The Demand for True Personalisation: Generic offers are insufficient. Travellers now expect services that understand their specific needs—from dietary restrictions on a flight to accessibility requirements at a hotel—and build itineraries that reflect that understanding. Naive segmentation no longer meets user expectations.

- Competitive Necessity: Agile competitors are already using AI to optimise pricing, enhance user engagement, and streamline operations. In a market where efficiency is a primary driver of profitability, architectural stagnation means losing ground.

Market data validates this shift. For example, a significant majority of travellers now use AI for trip planning, a dramatic increase from just a few years ago. This trend is particularly strong among younger demographics, with reports from 2026 showing that a large percentage of millennials and Gen Z leverage generative AI for travel discovery. You can explore underlying data on platforms like Statista.com.

Building with travel artificial intelligence is not about adding a “smart” feature. It is a strategic decision to re-architect systems for agility, efficiency, and a fundamentally better customer experience—treating privacy and reliability as architectural choices, not afterthoughts.

Understanding the high-level strategy is the first step. However, the real value of travel artificial intelligence is realized not in presentations, but in the specific, practical applications that solve real-world operational problems.

For a technical leader, this means moving beyond generic terms like “chatbots” to identify high-impact use cases that fix a broken process or unlock new revenue. These are not speculative concepts; they are pragmatic solutions being built now that deliver a measurable return on investment.

Pragmatic AI Use Cases for the Travel Industry

Dynamic Pricing and Revenue Optimisation

The Problem: Static, rules-based pricing systems cannot keep pace with real-time market dynamics. They fail to account for sudden demand spikes, competitor actions, or local events, resulting in lost revenue—either by pricing too low when demand is high or too high during a lull.

The Solution: A dynamic pricing engine built on machine learning models offers a proactive approach. It ingests and analyzes a continuous stream of data to optimize pricing strategy.

- Input Signals: The engine processes competitor prices, historical booking patterns, flight and hotel availability, local event schedules, and macroeconomic indicators.

- Predictive Output: It forecasts demand and recommends optimal price points for flights, hotel rooms, or car rentals to maximize both revenue and occupancy.

This represents a fundamental shift from reacting to the market to proactively shaping revenue strategy based on predictive data analysis.

Advanced Personalisation with Embeddings

The Problem: Generic recommendations like “people who booked this also booked…” are outdated and ineffective. The challenge is to move beyond crude segmentation to true hyper-personalisation that understands the nuanced intent of each traveller. A superficial approach is a primary reason many AI personalisation projects fail, a topic we analyze in our guide to AI in the retail sector.

The Solution: The key technical enabler is embeddings. An embedding is a vector (a list of numbers) that represents a complex entity like a user’s travel history, a hotel’s amenities, or a destination’s reviews. By converting disparate items into a shared mathematical space, you can calculate the “distance” or semantic similarity between them.

For example, a user who consistently books boutique hotels with specific amenities and visits cities known for their art scenes has a distinct profile vector. An embedding-based system can match this vector to the vectors of undiscovered destinations or new hotels that share those exact latent characteristics. This moves beyond keyword matching to capture the semantic essence of a traveller’s preferences, enabling uniquely insightful recommendations.

Agentic Workflows for Operational Automation

The Problem: Many critical operational tasks in travel are complex, multi-step processes performed manually. Consider a corporate travel agent booking a trip with specific compliance constraints, or an airline employee rebooking hundreds of passengers after a flight cancellation. These workflows are slow, expensive, and a breeding ground for costly human error.

The Solution: An agentic AI provides a powerful architectural pattern for automation. An AI agent is an autonomous system capable of executing actions to achieve a goal. Using a capability known as tool calling, the agent can interact with other internal and external systems via APIs to:

- Query live flight availability.

- Check a hotel’s booking system.

- Verify a customer’s loyalty status.

- File a request with a third-party service provider.

By chaining these actions, an agent can automate an entire workflow, such as finding and booking an alternative flight, reserving a hotel, and notifying the passenger—all without human intervention. This delivers significant operational efficiency and frees up human experts to manage true edge cases.

Core Architectural Patterns for AI Integration

Implementing AI into a travel business requires a deliberate architectural approach. Simply connecting to a Large Language Model (LLM) API without a robust plan leads to unreliable, insecure, and prohibitively expensive systems.

The value lies in selecting the right architectural pattern for the right business problem. This involves designing a resilient system that orchestrates LLMs, proprietary data, external APIs, and the user interface into a cohesive, manageable whole. Failure to do so is a primary reason many AI pilots never reach production.

Powering Chatbots With Retrieval-Augmented Generation (RAG)

The Problem: A standard, off-the-shelf LLM has no knowledge of your specific business operations. It cannot answer questions about your hotel’s pet policy or flight cancellation rules. Without grounding, it is prone to “hallucination”—inventing plausible but incorrect information.

The Solution: Retrieval-Augmented Generation (RAG) is the essential architectural pattern that grounds an LLM in your company’s proprietary data, ensuring factual accuracy.

RAG is a system designed to inject relevant, real-time context into an LLM’s prompt before it generates a response. It transforms a generic model into a knowledgeable company expert.

The implementation involves a clear, multi-step process:

- Data Ingestion: Proprietary documents (policy PDFs, knowledge base articles, booking rules) are collected, segmented into smaller text chunks, and processed.

- Vectorisation: Each chunk is converted into a numerical embedding and stored in a specialized vector database optimized for semantic search.

- Real-time Retrieval: When a user asks a question (e.g., “What is the fee for an extra bag?”), the query is converted into a vector. The system then searches the vector database for the most semantically relevant text chunks from your documents.

- Augmented Prompting: These retrieved chunks are injected into the prompt alongside the original question. The instruction to the LLM is effectively: “Using only this provided information, answer the user’s question.”

This pattern is critical for building trustworthy customer-facing assistants but requires careful engineering to maintain data freshness, ensure retrieval accuracy, and manage the costs of embedding and API calls.

Agentic Workflows With Tool Calling

The Problem: Answering questions is insufficient for complex tasks like booking a multi-leg trip or rebooking a cancelled flight. These processes require the AI to take action, interact with other systems, and make sequential decisions.

The Solution: Agentic workflows that leverage tool calling are designed for this purpose. An AI agent is engineered to achieve a goal by executing a sequence of actions. It is given access to a predefined set of “tools,” which are functions that interface with your internal or external APIs.

Consider a user request: “Find and book a pet-friendly hotel in London for next weekend under £200.” An AI agent would decompose this task:

- Tool 1 (Search API): Call the internal hotel search API with parameters

city=London,dates=next_weekend, andpet_friendly=true. - Tool 2 (Filtering Logic): Process the API response, filtering out options that exceed the £200 price constraint.

- Tool 3 (Booking API): Upon user confirmation, call the booking API with the correct hotel ID and reservation details to complete the transaction.

Building a reliable agent is a significant architectural undertaking. It requires robust error handling, security guardrails to prevent unintended actions, and comprehensive observability. A poorly implemented agent could double-book a room or fail silently, creating a severe service disruption. The key is to design agents that are auditable and maintain a human-in-the-loop for final approval of critical actions.

AI Integration Patterns: A Comparative Overview

| Integration Pattern | Primary Use Case | Key Components | Implementation Trade-offs |

|---|---|---|---|

| Simple LLM Call | Creative content generation, text summarisation, simple classification. | LLM API (e.g., OpenAI, Anthropic), prompt templates. | Pros: Simple, fast to prototype. Cons: No access to private data, prone to hallucinations, lacks real-time context. |

| Retrieval-Augmented Generation (RAG) | Factual Q&A, customer support chatbots, internal knowledge search. | LLM API, vector database (e.g., Pinecone, Chroma), embedding model, data ingestion pipeline. | Pros: Grounded in facts, reduces hallucinations, can use proprietary data. Cons: More complex, requires data pipeline management, retrieval quality is critical. |

| Agentic Workflow (Tool Calling) | Complex multi-step tasks like booking, trip planning, and reservation management. | LLM with tool-calling ability, a set of defined “tools” (API functions), state manager, planning/reasoning logic. | Pros: Can perform actions and interact with external systems. Cons: High complexity, significant security risks, requires robust error handling and guardrails. |

| Fine-Tuning | Specialised tasks requiring a specific style, tone, or format that is hard to achieve with prompting alone. | Base model, curated training dataset, training infrastructure. | Pros: Highly specialised model behaviour. Cons: Expensive, time-consuming, requires a large high-quality dataset, and risks “catastrophic forgetting.” |

Choosing the right pattern involves matching the solution’s complexity to the problem’s complexity. A simple LLM call is sufficient for summarising hotel reviews, but you would use an agentic workflow for flight rebooking. RAG is ideal for a help-desk bot, but an agent is necessary for a full-service travel planner. A clear understanding of these patterns ensures you build a system that is powerful, reliable, and secure.

A CTO’s Roadmap: From AI Concept to Production

Taking a travel AI project from concept to a reliable production system requires a structured, incremental approach. Chasing the newest model or attempting a “big bang” launch is a common anti-pattern that leads to scope creep, budget overruns, and technical debt.

This five-phase roadmap is designed for technical leaders responsible for building and operating real software systems. It focuses on delivering tangible value while managing risk at each stage.

Phase 1: Discovery and Scoping

The first step is to identify a high-value, low-risk pilot project that solves a genuine business problem. Avoid building for technology’s sake. A successful pilot builds momentum and secures stakeholder buy-in for future, more ambitious projects.

Focus on a process that is currently manual, repetitive, and costly. Strong candidates include:

- Automating responses to the top 10 most frequent customer support queries.

- Classifying incoming guest reviews to identify recurring service issues.

- Generating first drafts of destination descriptions for marketing content.

Define clear success metrics, such as reducing average agent response time by 20% or cutting content creation costs. For the initial iteration, avoid complex, multi-system integrations that increase risk.

Phase 2: Pragmatic Architecture Design

With a well-defined scope, you can design a pragmatic architecture. This means selecting the right models and tools for the job, not over-engineering for a hypothetical future.

The decision to use a proprietary API versus an open-source model should be based on a clear analysis of performance, cost, and control. A detailed comparison of on-premises versus cloud deployments can provide context for this infrastructure choice. For many tasks, a smaller, faster model is more cost-effective and provides sufficient quality. Architect for modularity to enable swapping models or data sources later without a complete system rewrite.

Phase 3: Incremental Delivery and Testing

Adopt an agile, sprint-based delivery model. Build and test the system in small, measurable increments. Each sprint should deliver a functional component that can be validated with real data and, ideally, a small group of users.

For example, when building a RAG-based chatbot, the first sprint might focus solely on the data ingestion pipeline. The next could build the retrieval mechanism, and a third could integrate the LLM. This iterative process allows you to identify architectural flaws and performance bottlenecks early, when they are less costly to fix.

Phase 4: Human-in-the-Loop (HITL) Integration

For any process with real-world consequences—such as booking a non-refundable flight or handling a sensitive customer complaint—a human-in-the-loop (HITL) design is non-negotiable. This is a core principle of responsible AI engineering.

An HITL system is architected to allow human experts to validate, override, or correct AI-generated decisions before they are finalized. This creates a critical safety net, reduces operational risk, and provides a valuable feedback mechanism for continuous model improvement.

For example, an AI agent might propose a new itinerary for a customer whose flight was cancelled, but a human agent provides final approval before any tickets are issued. This combines the speed of AI with the judgment and accountability of a human expert.

Phase 5: Go-Live and Observability

Deploy the validated system to a limited user group first. The work does not end at go-live. Robust monitoring and observability must be in place from day one to track performance, cost, and user satisfaction against the initial business case.

Continuously track metrics such as API latency, token consumption, and the rate of incorrect responses (“hallucinations”). For instance, with reports suggesting that AI-driven personalisation can lead to a significant uplift in bookings—as detailed in these insights on AI travel planning—system reliability is paramount. The data gathered from monitoring validates the roadmap and provides the business case for future investment and expansion.

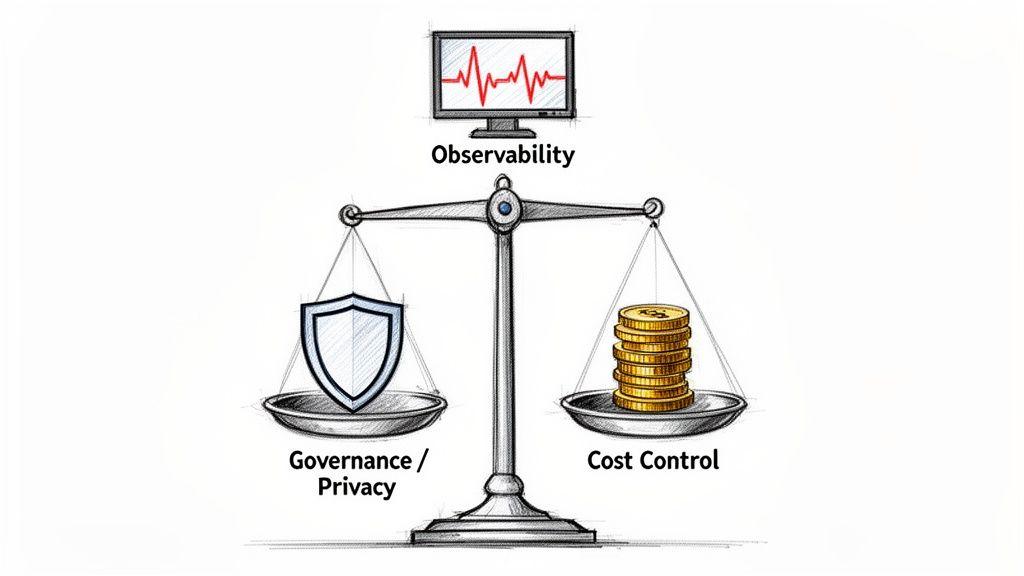

Governance, Cost, and Reliability in AI Systems

Launching a travel AI system without robust governance, cost controls, and reliability measures is an unacceptable business risk. For technical leaders, these non-functional requirements are as critical as the AI model itself. A naive approach leads to runaway API bills, compliance failures that erode user trust, and unreliable services that create more problems than they solve.

Governance and Compliance by Design

Travel companies are custodians of highly sensitive personal data, including names, passport numbers, payment details, and travel histories. This makes compliance with regulations like GDPR an absolute architectural requirement, not an optional feature. A data breach or a biased AI model represents a direct threat to business continuity.

Effective governance means embedding privacy and fairness into the system’s design from the outset. This translates to concrete engineering practices:

- Data Minimisation: Collect and process only the data that is strictly necessary for the task at hand. If an AI does not need a user’s entire travel history to suggest a hotel, do not provide it with that data.

- Anonymisation and Pseudonymisation: Implement processes to de-identify user data before it is sent to a third-party model or used for training.

- Bias Mitigation: Actively audit models for hidden biases. A model that consistently offers higher prices or worse options to certain demographics is not just unfair—it’s a significant liability.

The scale of AI adoption makes this urgent. With AI-driven travel planning now mainstream—as you can discover in these key insights for 2026—vast amounts of data flow through these systems. Upholding trust is paramount.

Implementing Practical Cost Controls

AI costs, particularly from LLM APIs, can escalate rapidly without proactive management. Every API call is metered in tokens, and a poorly optimised system can generate thousands in unexpected expenses.

Controlling costs requires a layered architectural strategy:

- Right-Sized Model Selection: Use the smallest, most efficient model that can accomplish the task effectively. Using an expensive, large-scale model for a simple classification task is an inefficient use of resources.

- Intelligent Caching: For frequent and repetitive requests, cache the AI-generated response. This simple technique reduces redundant API calls, latency, and cost.

- Request Batching: Where applicable, group multiple user requests into a single API call. This is often more efficient than numerous small, individual calls.

Ensuring Observability and Reliability

AI systems introduce unique failure modes, including hallucinations, performance degradation, and dependencies on external services. The system must be architected for resilience.

Robust observability is the foundation of reliability, providing the visibility needed to diagnose issues before they impact customers. To build a reliable travel AI system, you must implement:

- Comprehensive Logging: Log all critical interactions, including prompts, responses, latency, token counts, and errors.

- Performance Monitoring: Track key metrics in real time, such as response times, error rates, and cache hit ratios.

- Fallback Mechanisms: Design clear escape hatches. If the AI model fails or returns an error, the system must degrade gracefully, for example, by redirecting the user to a human agent or a standard search interface.

Many teams fail to build in these safety nets. A reliable system anticipates failure and is designed to handle it, ensuring a consistent and trustworthy user experience. For a deeper dive, consider our guide on governance, risk, and compliance software.

Conclusion: Key Architectural Takeaways for Leaders

For founders, CTOs, and product leaders, successful implementation of travel artificial intelligence is not about chasing hype. It is achieved by methodically applying proven technical patterns to solve specific, high-value business problems. The most effective systems are products of clear strategy and disciplined engineering.

Fundamentally, successful travel AI is a product of architectural discipline. Privacy, reliability, and cost control are not features you add later. They are core decisions you make on day one. This mindset is what separates lasting, valuable systems from expensive, brittle experiments.

As you move from concept to production, these principles provide a reliable compass for technical and strategic decisions.

-

Start with the Business Problem, Not the Tech: Pinpoint a concrete operational issue, such as reducing manual rebooking effort or improving personalisation for new customers. A well-defined problem dictates the appropriate architecture and provides clear metrics for success.

-

Choose the Right Architectural Pattern: Do not default to the most complex solution. A simple LLM call is effective for summarising reviews. A RAG pattern is ideal for factual Q&A. Reserve complex agentic workflows for multi-step tasks like itinerary planning. Over-engineering leads to higher costs and maintenance burdens.

-

Build-in Governance and Cost Controls from Day One: Architect for compliance by minimising data handling. Implement practical cost controls through smart caching and right-sized model selection. These are not afterthoughts; they are essential for long-term viability.

-

Prioritise Human-in-the-Loop for Critical Processes: For any action with a significant financial or reputational impact, design a human-in-the-loop (HITL) system. This approach blends AI’s speed with human judgment, creating a crucial safety net and a feedback loop for continuous improvement.

Adhering to these pillars will help you build travel AI systems that are not only powerful but also robust, secure, and cost-effective—turning a technological capability into a durable business asset.

Frequently Asked Questions About Travel AI

How do we choose between LLM APIs and open-source models?

This is a critical architectural decision with no single correct answer; it is a trade-off between speed-to-market, control, and cost.

-

Proprietary LLM APIs (e.g., OpenAI, Anthropic): These models offer state-of-the-art performance and enable rapid prototyping. The trade-offs are cost, which can become significant at scale, and data privacy. You are sending data to a third party, which requires robust governance and clear user consent, particularly under regulations like GDPR.

-

Self-Hosted Open-Source Models: Hosting your own model provides complete control over data, which can be a significant advantage for privacy and security. For high-volume, specialised tasks, it can also be more cost-effective in the long run. However, this approach demands significant in-house MLOps expertise, incurs infrastructure costs, and may not match the general reasoning capabilities of top-tier commercial models.

A hybrid approach is often optimal. Use powerful APIs for complex, creative, or low-volume tasks, and deploy smaller, fine-tuned open-source models for high-volume, repetitive functions like classification or data extraction.

What is the most common mistake in a first travel AI project?

The most common and costly mistake is starting with a technology and then searching for a problem to solve with it. It is easy to get caught up in the latest AI trend, but this “solutionism” often leads to over-engineered systems that provide little business value.

The most successful first projects are often focused on “boring” problems. They identify a painful, measurable, and repetitive manual task—such as answering the same ten customer questions repeatedly or manually triaging feedback—and automate it with the simplest effective AI solution. Securing a clear, quantifiable win on a small-scale project builds the trust and momentum needed to tackle more ambitious initiatives.

How can we ensure AI personalisation respects privacy and GDPR?

This is a non-negotiable architectural requirement. Failure to address privacy by design erodes user trust and creates significant legal and reputational risk.

A system that respects privacy is not just a legal obligation; it’s a competitive advantage. It demonstrates a commitment to your users that builds loyalty far more effectively than any personalisation feature alone.

Essential practices include:

- Data Minimisation: Architect your system to collect and use only the data absolutely necessary for the task. If a user’s last three searches are sufficient for a recommendation, the AI does not need their entire travel history.

- Anonymisation by Design: Build automated processes to strip Personally Identifiable Information (PII) before any data is sent to a third-party service or used in a way that is not strictly necessary.

- Explicit Consent and Transparency: Be radically clear with users about what data you are using and for what purpose. Provide simple, accessible controls for them to opt out.

Implementing these practices ensures that AI features enhance the customer experience without violating the trust you have worked to build.

At Devisia, we specialise in turning complex business requirements into reliable, maintainable software and AI-enabled systems. We provide a clear path from pragmatic architecture to production-ready solutions that deliver measurable value.

Learn more about our approach to building robust digital products