A privacy & cookies policy isn’t just a legal document. For a modern software company, it is a public-facing specification of how your system handles data. It’s a declaration of your technical architecture and a critical component for building trust with customers, particularly in a B2B context.

Why Your Privacy Policy Is an Architectural Choice

Many founders and CTOs treat the privacy policy as a final legal chore—a template to be filled out just before launch. This approach is a significant strategic error. It introduces technical debt, regulatory risk, and architectural inflexibility into the product from day one. A well-crafted policy is not a legal add-on; it is a foundational blueprint for your system’s design.

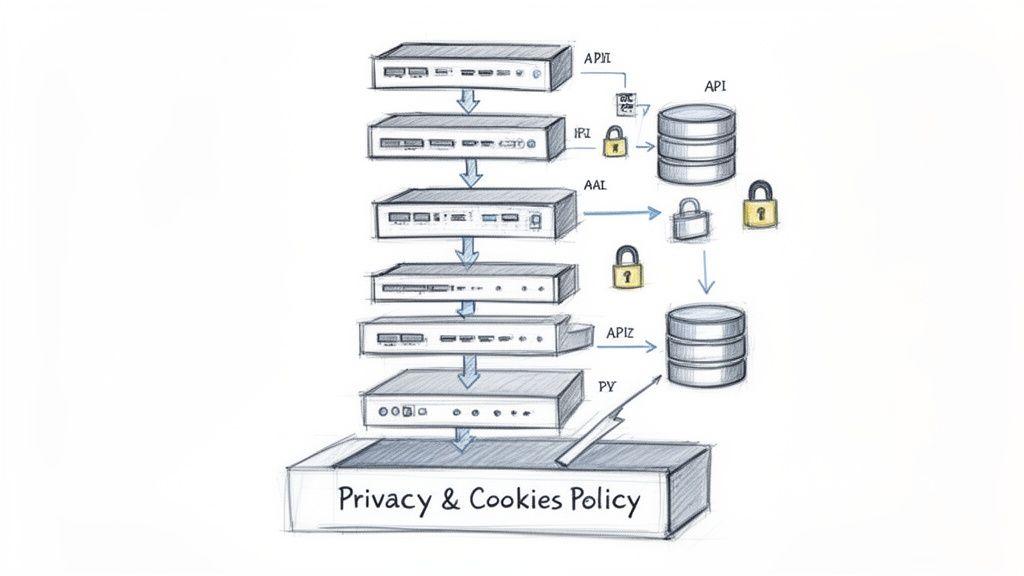

Every clause in that policy translates directly into a technical requirement. A commitment to data minimization dictates your database schemas and API payloads. A statement on data retention periods defines your data lifecycle management scripts and cron jobs. Disclosures about third-party sub-processors—like AWS, Stripe, or OpenAI—constrain service integrations and data flows.

The Problem: Policy as an Afterthought

When privacy is treated as a final checkbox, engineering teams build systems without clear data handling constraints. This leads to common anti-patterns:

- Over-collection of data “just in case.”

- Indefinite data retention in logs and backups.

- Ad-hoc integration of third-party services without a formal review.

- Inability to fulfill user rights requests (e.g., data deletion) efficiently.

This creates a dangerous gap between what the policy promises and what the system actually does, exposing the business to legal penalties and reputational damage.

The Solution: Policy as a Design Document

A robust privacy & cookies policy should be treated as a public-facing specification for your data architecture. If your code doesn’t match what your policy claims, you have a critical bug—one with legal and financial consequences.

Consider these direct links between policy and architecture:

- Data Collection: If your policy states you only collect data necessary for user authentication, your registration API and database tables must reflect that. Collecting “nice-to-have” data points without a stated purpose is a direct breach of your own policy and regulations like GDPR.

- User Rights: Honoring a user’s “right to be forgotten” is not a simple

DELETEcommand. It demands an architecture that can propagate that request across microservices, logs, backups, and third-party analytics platforms. A policy written without considering implementation makes this an engineering nightmare. - AI and Telemetry: For AI-driven systems, your policy must be explicit about what data is used for model training and how automated decisions are made. This transparency requires architectural patterns like data anonymization pipelines and auditable opt-out mechanisms.

Treating privacy as an afterthought forces expensive refactoring and exposes your company to compliance penalties. Approaching your policy as an architectural document from the start ensures your system is built on a foundation of trust and signals engineering excellence. For a deeper dive, see our article on data protection and privacy.

Navigating the Global Regulatory Maze

Building software for a global market means navigating a complex web of overlapping privacy laws. For SaaS and AI companies, this is a technical reality that shapes product design, build, and deployment. Attempting to address compliance one jurisdiction at a time is inefficient and introduces significant risk. A unified, principles-based architectural approach is essential.

The financial stakes are rising. Recent data shows the increasing costs of privacy compliance, with a growing number of firms spending millions annually. This is driven by the patchwork of U.S. state laws and assertive enforcement from international regulators.

Core Regulatory Principles for Engineering Teams

While specific laws vary, they are built on common principles that translate directly into technical requirements. Understanding these is the first step toward building a compliant architecture.

- Personal Data: Any information that could identify a living person. This includes not just names and emails, but also IP addresses, device IDs, location data, and behavioral patterns collected via telemetry. Your system must be able to identify and classify all instances of personal data it processes.

- Lawful Basis for Processing: You cannot collect or use personal data without a legitimate legal reason. For most SaaS companies, this is either user consent (e.g., for marketing cookies) or contractual necessity (e.g., processing an email to provide service access). Your architecture must be able to link every data processing activity to its lawful basis.

- User Rights: Regulations like GDPR grant individuals specific rights over their data, including the right to access, correct, and delete it. This requires robust technical workflows to locate and manage data across all systems, not just a manual support ticket process.

A common mistake is assuming a regulation doesn’t apply because your company isn’t physically located there. If individuals in the EU or California can use your product, their laws almost certainly apply to how you handle their data.

Key Regulations and Their Technical Implications

A few key regulations set the global standard. Their core demands are non-negotiable for any modern software business.

- GDPR (General Data Protection Regulation): The EU’s landmark law requires clear, opt-in consent for non-essential data collection, mandates data protection by design, and has strict rules for transferring data outside the EU. For engineers, this means implementing granular consent controls and ensuring any data sent to a non-EU service (e.g., a US-based cloud provider) is covered by a valid transfer mechanism like Standard Contractual Clauses (SCCs).

- CCPA/CPRA (California Consumer Privacy Act / California Privacy Rights Act): This framework gives Californians the right to know what data is collected and to opt out of its “sale” or “sharing”—a broad term that can include activities like targeted advertising. Technically, this requires systems that can reliably track and honor opt-out requests across your entire data landscape.

The engineering challenge is to translate these varied legal rules into a cohesive set of technical controls. A practical solution is to abstract common functions—like consent management and data deletion requests—into a unified compliance layer within your architecture. Our guide on the practical means of compliance explores this architectural approach.

Crafting the Essential Clauses for Your Policy

Using a generic template for your privacy & cookies policy creates a dangerous gap between your legal promises and how your SaaS or AI product actually operates. A boilerplate policy is a liability. For a technical audience—engineers, product owners, and discerning enterprise buyers—the policy should be an honest blueprint of your data architecture.

Data Collection and Purpose Specification

This is the core of your policy: telling users what data you collect and precisely why you collect it. Vague statements like “to improve our services” are insufficient. You must map every data point to a specific, legitimate purpose.

For a B2B SaaS platform, this means being granular. Instead of “contact information,” break it down:

- User Account Data: We collect

name,email address, andhashed passwordto manage authentication and provide access to the service (Lawful Basis: Contractual Necessity). - Billing Information: This includes

company name,address, andpayment details, processed by our sub-processor (Stripe) to fulfill our service contract. We do not store full credit card numbers on our servers. - System Telemetry: We gather anonymized or aggregated data such as

API response timesandfeature usage frequencyto monitor performance and resolve technical issues (Lawful Basis: Legitimate Interest).

This level of detail forces your engineering team to justify every field in your database and every event in your analytics pipeline. If you cannot articulate a clear purpose for collecting a piece of data, you should question whether it should be collected at all.

Third-Party Sub-processors and Data Transfers

No modern software exists in isolation. Your policy must provide a transparent list of all third-party services that process user data on your behalf. These sub-processors are a critical disclosure under GDPR and a focal point of enterprise security reviews.

For each sub-processor, you must name the service, explain its function, and link to its privacy policy.

A common blind spot is omitting infrastructure providers (e.g., AWS, Google Cloud), CI/CD tools that process source code, or customer support platforms like Zendesk. If they handle personal data, they are sub-processors.

If these services operate outside your users’ jurisdiction (e.g., using a US-based service for EU data), you must explain the legal and technical safeguards for international data transfers, typically by referencing mechanisms like Standard Contractual Clauses (SCCs).

Data Retention and User Rights

Your policy must state precisely how long you retain personal data. A defensible data retention period is tied directly to the purpose for which the data was collected.

- Active User Data: Retained for the duration of an active customer subscription.

- Post-Subscription Data: Anonymized or deleted within 90 days following contract termination, except for billing records, which are retained for 7 years to comply with financial regulations.

- Server Logs: Automatically rotated and deleted after 30 days.

Finally, your policy must describe the operational process for handling user rights requests—such as access, correction, or deletion (“right to be forgotten”). This is a technical workflow, not just a legal promise. Explain how a user can submit a request (e.g., via a specific email address or a button in their account settings) and the steps your system takes to fulfill it. Demonstrating an operationalized process is a strong signal of engineering maturity and trustworthiness.

Architecting Your Cookie Consent Strategy

A cookie banner is not a strategy. For a modern B2B SaaS platform, implementing a generic, non-functional banner is a direct path to regulatory fines and erosion of user trust. A proper cookie management system must be architected into your platform, reflecting both legal requirements and your application’s actual behavior.

This requires moving beyond superficial UI elements and thinking like an engineer. Every cookie your application sets must be identified, classified, and justified based on its function. This foundational work is what makes a privacy & cookies policy meaningful and defensible.

Classifying Cookies From an Engineering Standpoint

From a technical perspective, cookies serve different roles, and their legal status depends entirely on their function. A compliant architecture begins with their correct classification.

- Strictly Necessary: These are essential for the application to function, handling core tasks like session management or authentication. Under GDPR, they do not require user consent because the service would be broken without them.

- Performance: These cookies collect anonymous, aggregated data about system usage—page load times, feature adoption, error rates. They are the data source for engineering monitoring dashboards used to identify performance bottlenecks.

- Functional: These remember user choices to provide a more personalized experience, such as language preference or a UI theme (dark/light mode). They improve usability but are not essential for core service operation.

- Targeting: These cookies track user activity across websites to build interest profiles for advertising. While less common in B2B SaaS, they carry the highest regulatory risk and always require explicit, opt-in consent.

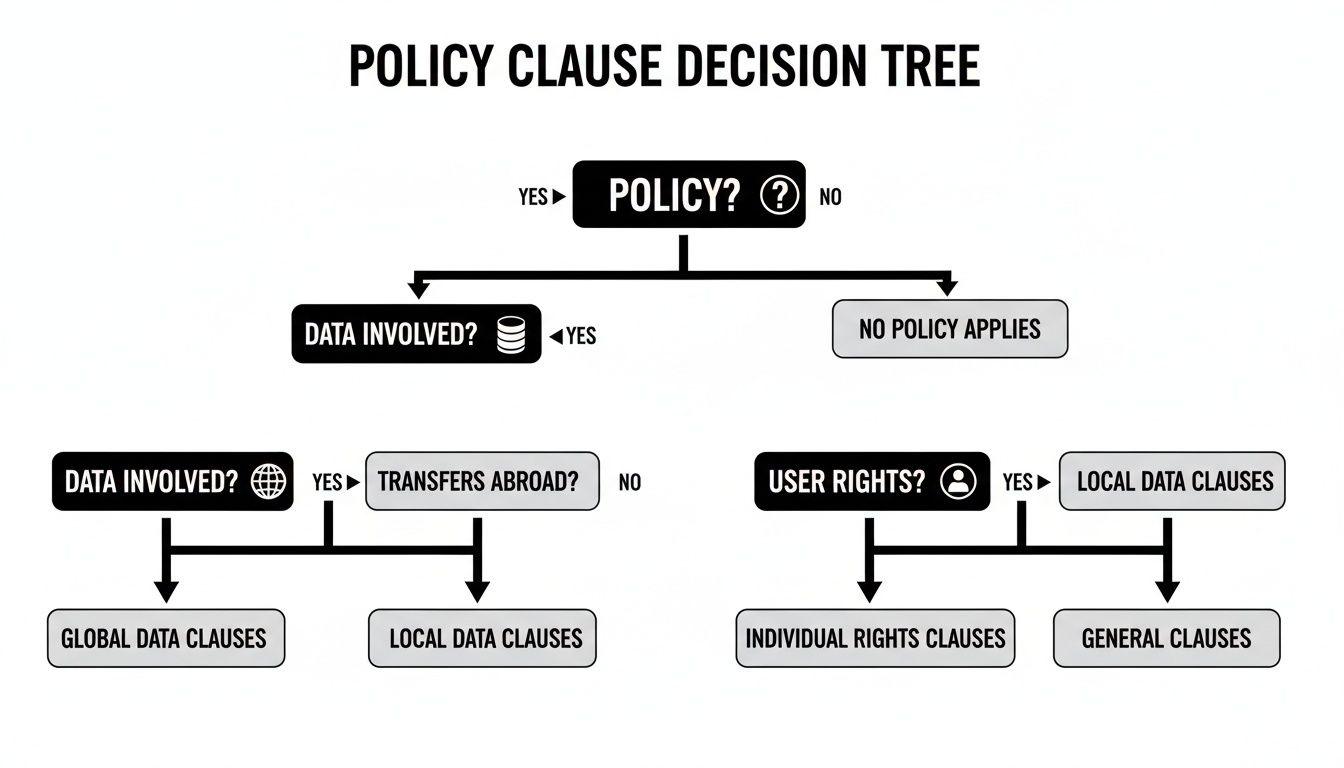

This decision tree helps visualize how to map these classifications to your data handling policies, user rights, and global data transfers.

The path each data type takes—from collection to transfer—demands specific policy clauses and technical controls.

Cookie Classification and Consent Requirements

The following table maps cookie categories to their consent requirements under a strict framework like GDPR.

| Cookie Category | Primary Purpose | Examples | GDPR Consent Model |

|---|---|---|---|

| Strictly Necessary | Core application functionality | Session IDs, authentication tokens, cart data | Exempt (No consent needed) |

| Performance | System monitoring and analytics | Page view counts, load time metrics, error logs | Opt-in (Explicit consent required) |

| Functional | User experience personalisation | Language settings, theme choice, UI preferences | Opt-in (Explicit consent required) |

| Targeting | Advertising and cross-site tracking | Ad network IDs, user interest profiles | Opt-in (Explicit consent required) |

This table should be the starting point for any technical discussion about implementing consent. Misclassifying cookies is a common and costly mistake.

Implementing Geographic Consent Models

A one-size-fits-all consent architecture is no longer viable. Your system must adapt based on the user’s location. The two dominant models, GDPR’s opt-in and CCPA’s opt-out, have fundamentally different technical requirements. See a detailed breakdown in our guide on designing opt-in and opt-out systems.

Correct implementation typically involves a Consent Management Platform (CMP). A robust CMP integrates with your frontend, blocks non-essential scripts and cookies until consent is granted, and maintains an auditable log of consent decisions. Naive implementations like “cookie walls” that deny service without consent are non-compliant in many jurisdictions. As browsers phase out third-party cookies, a first-party data strategy managed with a compliant consent architecture is no longer optional; it is a core component of your data architecture.

Addressing Privacy in AI and Telemetry Systems

Standard privacy policies often fail to address the unique challenges of modern AI, LLMs, and large-scale telemetry systems. For any team building with AI or using extensive telemetry, transparency is a core engineering function, not just a legal checkbox. A boilerplate privacy & cookies policy is inadequate for these data-intensive systems.

The rise of AI has forced a broad rethinking of data governance. Research on how AI is expanding data privacy scopes shows that a significant majority of organizations are expanding their privacy programs specifically due to AI. Your policy must now cover AI governance, technical guardrails, and human-in-the-loop controls to manage risks like data leakage and biased outputs.

Transparency in AI-Driven Automated Decision-Making

When your product uses AI to make decisions that have a meaningful impact on a user—such as qualifying leads, flagging content, or setting pricing—you have a legal and ethical obligation to be transparent. Regulations like GDPR (Article 22) and California’s rules on Automated Decision-Making Technology (ADMT) grant users specific rights regarding these processes.

Your privacy policy must explicitly state:

- That automated decision-making is occurring.

- The logic involved: A high-level, meaningful explanation of the factors influencing the outcome.

- The potential consequences for the user.

- The right to a human review: The exact process a user can follow to contest an automated decision and have it reviewed by a person.

This requires an architecture built to support such transparency, with capabilities to flag AI-driven decisions and route them for human review when challenged.

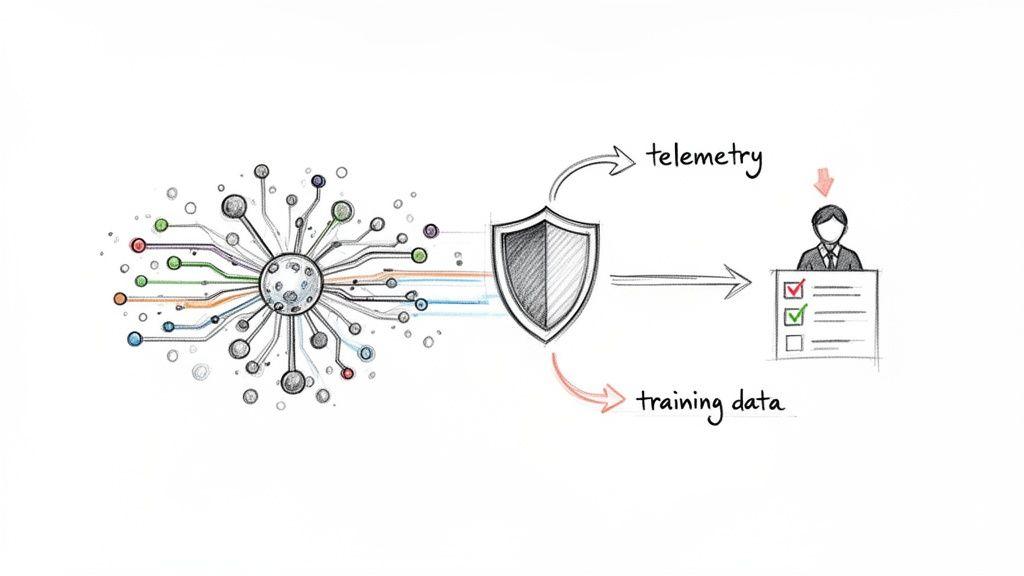

Drawing the Line Between Telemetry and Tracking

All modern SaaS products use telemetry for performance monitoring, debugging, and product analytics. However, a critical line exists between legitimate product telemetry and invasive user tracking. A vague policy claiming data is collected “to improve our services” erodes trust.

- Legitimate Telemetry focuses on system behavior and aggregate user actions (e.g., anonymized API response times, feature usage counts).

- Invasive Tracking focuses on an individual’s granular behavior for purposes beyond the core service (e.g., building detailed marketing profiles).

To maintain compliance and trust, your architecture must respect this distinction. By default, telemetry data should pass through anonymization pipelines that strip or aggregate personal identifiers before the data reaches analytics tools.

Architectural Patterns for Privacy-Preserving AI

If you are integrating third-party AI APIs or training proprietary models, privacy must be built in by design. Your privacy policy should then serve as the public record of these technical safeguards.

Consider incorporating these privacy-by-design patterns:

- Data Anonymization Pipelines: Before any customer data is used for model training, it must pass through a pipeline that redacts, hashes, or pseudonymizes all personally identifiable information (PII).

- Input/Output Guardrails: Implement filters for LLM-based features to prevent sensitive data from being included in prompts and to block harmful or inappropriate content in responses.

- Human-in-the-Loop (HITL) Controls: For high-stakes decisions or operations involving sensitive data, design workflows that require human verification and approval.

- Granular Opt-Out Mechanisms: Provide users with a clear, functional way to opt out of having their data used for AI training. This must be a real flag in your user database that your data pipelines respect.

Your privacy & cookies policy is the technical promise you make to users, partners, and regulators, demonstrating that you have engineered privacy into the foundation of your AI and telemetry systems.

Putting Your Privacy Policy into Practice

A privacy policy is a liability if it doesn’t reflect reality. Operationalizing your privacy & cookies policy means converting legal promises into durable, repeatable engineering and product workflows. It is about making compliance a sustainable practice, not a series of reactive fire drills.

This requires a cultural shift where privacy becomes an integral part of the software development lifecycle (SDLC). A policy is a living document; without robust internal processes, it will quickly diverge from your technical reality. The goal is to move from reactive box-checking to a proactive system of governance, where every new feature, third-party tool, or change in data handling triggers a privacy review by default.

Translating Policy into Technical Reality

Operationalizing a policy requires a clear playbook that connects legal text to engineering action. Start by treating your legal documents with the same discipline as your code: use a version control system like Git, with commit messages explaining what was changed and why.

This process makes every update deliberate and auditable. Key technical implementation steps include:

- Build a DSAR Workflow: A Data Subject Access Request (DSAR) is a formal request from a user to access or delete their personal data. Manual fulfillment is slow and error-prone. You need a semi-automated workflow to locate, collect, and securely deliver or erase a user’s data across all systems, including microservices and backups. This is a core engineering problem.

- Automate Policy Reviews in CI/CD: Integrate privacy checks into your development pipeline. For example, a pull request that adds a new third-party dependency or alters a database schema should automatically flag the need for a privacy impact assessment. This directly links code changes to policy commitments.

- Maintain a Sub-processor Inventory: Your policy must list all third-party services that process user data. This inventory requires a clear owner and a formal review process for onboarding any new tool, from an analytics platform to an AI API.

Establishing a Cadence for Review and Maintenance

A static policy is an outdated policy. You must establish a regular review cadence to keep your privacy & cookies policy accurate and compliant, triggered by specific events. A privacy policy review should be a mandatory gate in your release cycle for any major feature. If it touches user data in a new way, the policy must reflect that before deployment.

Mandatory triggers for a policy review include:

- New Product Features: Especially those collecting new data types or using data for new purposes.

- New Regulations: Such as emerging AI laws or updates to frameworks like GDPR or CCPA.

- Changes in Business Operations: Including expansion into new geographic markets.

By integrating these practices, you build privacy into your team’s muscle memory. This transforms your policy from a static legal document into a dynamic guide that governs how you build and maintain trustworthy software.

Frequently Asked Questions

Here are pragmatic answers to common questions from founders, CTOs, and product leaders about privacy and cookie policies.

Do We Really Need a Privacy Policy for an Internal B2B Tool?

Yes. If the tool processes any information that can identify a person—a name, work email, or IP address—it is processing personal data. Regulations like GDPR apply regardless of whether the application is public-facing or an internal B2B tool.

More importantly, enterprise customers will scrutinize your policy during vendor security reviews. A missing or vague policy is a major red flag, signaling a lack of engineering maturity that can terminate a procurement process. Your policy serves as a technical specification for how you handle their data.

How Detailed Does Our Cookie Disclosure Need to Be?

Vague statements like “we use cookies” are non-compliant. To obtain informed consent, you must at a minimum categorize the cookies you use:

- Strictly Necessary Cookies: Explain their function (e.g., “for user authentication and session management”).

- Performance/Analytics Cookies: State what they track (e.g., “to monitor application performance and identify bugs”).

- Functional Cookies: Describe what they enable (e.g., “to remember your language or UI preferences”).

- Targeting Cookies: If used, be direct (e.g., “for personalizing ads on other platforms”).

Best practice, particularly for B2B SaaS, is to provide a granular list of specific cookies, their purpose, and their expiration. This is typically managed in a dedicated cookie policy linked from your main privacy policy and consent management tool.

What’s the Biggest Mistake Engineering Teams Make?

The most common and costly mistake is treating the privacy policy as a static legal document, completely disconnected from the software development lifecycle (SDLC).

When a policy is written and forgotten, it immediately begins to drift from the application’s actual behavior. The engineering team adds a new analytics tool, a data retention script is modified, or an API starts collecting an extra field—all while the policy remains unchanged. This gap between your legal promise and your technical reality is a compliance failure waiting to happen, creating significant technical debt and regulatory risk.

The solution is to build privacy reviews directly into your engineering workflow. A pull request that modifies data schemas or adds a third-party service should automatically trigger a check: “Does this change align with our privacy policy?” This keeps the policy a living, accurate reflection of your system.

Can We Use a Single Policy for Global Users?

Yes, but it must be architected to meet the highest standard of the jurisdictions in which you operate. The most robust approach is to create a global baseline policy that adheres to the strictest principles, typically those of GDPR. This means embedding practices like data minimization, purpose limitation, and strong user rights into your core architecture.

On top of this baseline, you can add specific clauses or addendums for regional laws, such as the CCPA/CPRA’s “Do Not Sell or Share My Personal Information” requirement. Your consent management platform must also be architected to present the correct consent model (e.g., opt-in for EU users, opt-out for Californian users) based on geolocation.

At Devisia, we believe that a strong privacy posture is the result of deliberate architectural choices, not legal afterthoughts. We build scalable SaaS platforms and AI systems with privacy, security, and compliance integrated from the ground up. If you need a technical partner to turn your vision into a reliable digital product, see how we work at https://www.devisia.pro.