When a CTO hears “GIS software,” the legacy image of a digital map-making tool often comes to mind. This view is obsolete. A modern geospatial information system is an analytical engine, designed to integrate with your core software architecture. It connects disparate data to a specific location, uncovering operational patterns that remain invisible in spreadsheets and standard databases.

For a technology-driven business, the goal is to transform raw location data into automated, operational intelligence—not just static reports.

The Problem: Legacy GIS Architecture vs. Modern Software Demands

Many organizations possess high-value geospatial data but are constrained by legacy systems. These platforms—often monolithic, on-premise, and built for specialist analysts—are fundamentally misaligned with the needs of modern, scalable digital products. The core problem isn’t a lack of data; it’s an architectural bottleneck that prevents real-time use.

This misalignment creates tangible business friction and technical debt. A logistics company cannot dynamically reroute assets around live traffic because its routing and tracking systems are siloed. An insurance firm is unable to accurately price risk in real-time because its property database cannot be integrated with live weather feeds. These are not data science problems; they are system integration, performance, and architectural failures.

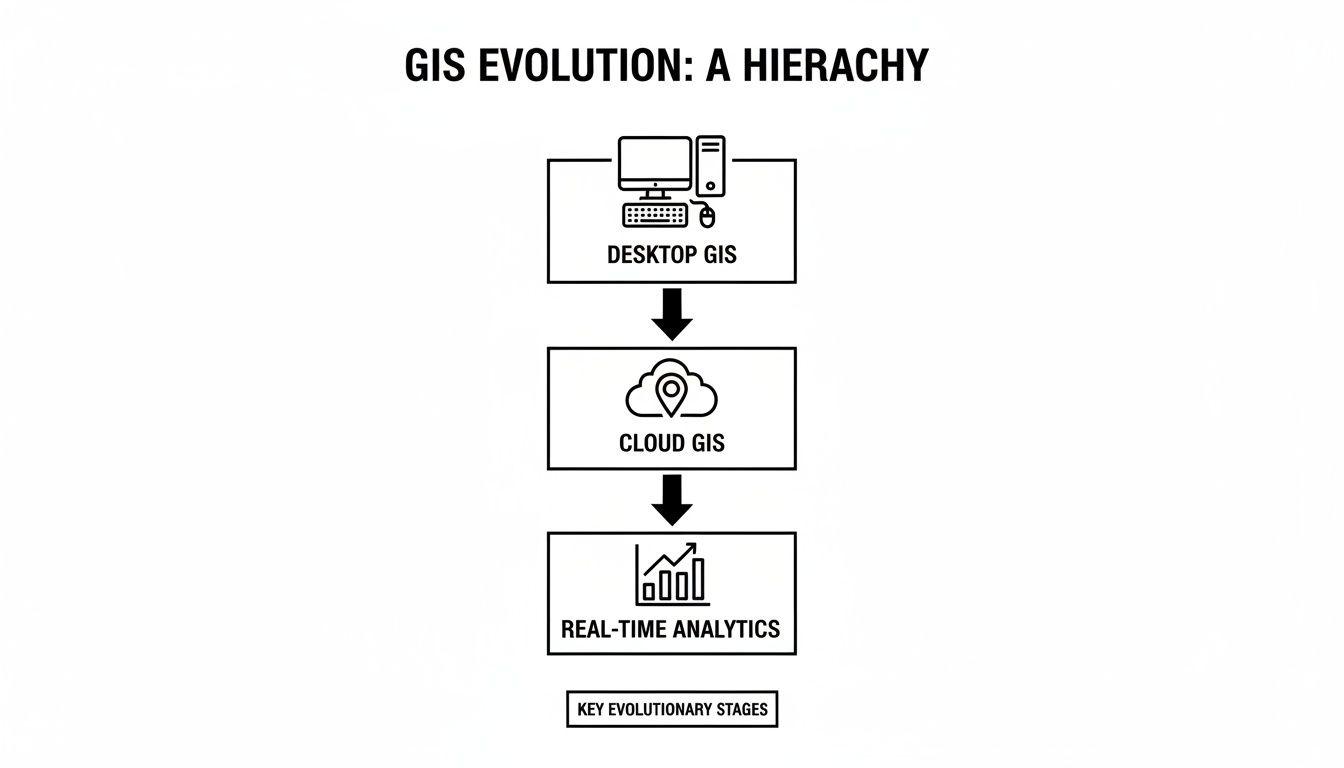

The solution has been a fundamental re-architecting of how geospatial information systems software is designed and deployed. The industry has moved decisively from monolithic desktop applications toward distributed, cloud-native platforms.

This shift is driven by three key architectural principles:

- API-First Integration: Modern GIS platforms are built to be components within a larger system. They use well-defined APIs (Application Programming Interfaces) to embed location intelligence directly into operational workflows, SaaS products, and customer-facing applications.

- Cloud-Native Scalability: By leveraging cloud infrastructure, these systems can elastically scale compute and storage resources on demand. This is a non-negotiable requirement for processing large, dynamic datasets from sources like IoT sensors, satellite imagery, and mobile devices without performance degradation.

- Real-Time Analytics: The focus has shifted from historical, batch-processed reports to real-time spatial analytics. This capability enables dynamic, automated decision-making, whether optimizing a delivery fleet or managing a smart energy grid under load.

A modern geospatial system is not a standalone tool. It is a critical data layer within a company’s digital architecture. Its primary function is to serve location-based insights to other services and applications, not just to a human analyst.

This evolution enables the solution of complex business challenges that were previously intractable. A modern GIS architecture can power a SaaS product that helps farmers optimize irrigation based on real-time soil moisture data. It can enable a smart city platform to autonomously manage emergency service dispatch during a flood event. By understanding this architectural shift, CTOs and product leaders can move beyond simple data visualization and begin engineering systems that automate complex, location-dependent workflows, creating reliable, maintainable software and a durable competitive advantage.

Understanding Core Architectural Patterns

Building a geospatial system that delivers long-term value requires sound architectural decisions from the outset. These choices directly impact performance, total cost of ownership (TCO), and the system’s ability to adapt to future business requirements. A naive or superficial architectural approach often results in a brittle system that is expensive to maintain and actively inhibits innovation.

At the heart of any robust GIS is a spatial database. This is a standard database engine that natively understands geography. A conventional database can store a list of addresses, but a spatial database like PostGIS (a widely-used extension for PostgreSQL) understands the physical and topological relationships between them.

It can execute queries like, “Which of these 10,000 assets are within a five-kilometer radius of this location and not within a designated flood plain?” efficiently, without performing a full table scan. This native capacity for complex spatial queries is a key differentiator between a high-performance system and a sluggish one.

Monolithic vs. Microservices Architecture

Historically, GIS applications were often built as monoliths. All components—data processing, visualization, and analytical tools—were bundled into a single, tightly-coupled application. While this approach can simplify initial development, it becomes a significant liability as the system scales. A failure in a minor component can cascade, bringing down the entire application. Updating a single feature requires a full re-deployment, creating a slow and risky release cycle.

The modern paradigm favors a microservices architecture. The system is decomposed into a collection of small, independent services, each responsible for a single business capability.

- Ingestion Service: Responsible for consuming and validating data from various sources (e.g., IoT sensor feeds, satellite imagery).

- Geocoding Service: Translates textual addresses into geographic coordinates (latitude and longitude).

- Routing Service: Calculates optimal paths between multiple points, considering constraints like traffic or vehicle type.

- Analysis Service: Executes complex spatial logic, such as identifying all properties within a potential wildfire risk zone.

This distributed model offers superior resilience and scalability. If the routing service experiences a surge in load, it can be scaled independently of other system components. For a more detailed examination of this pattern, refer to our guide on service-oriented architecture.

The diagram below illustrates this architectural evolution, from isolated desktop tools to dynamic, cloud-native platforms capable of real-time analytics.

This is not merely a technical preference; it is the enabling factor for businesses to transition from static reporting to extracting real-time, actionable insights from their location data.

The following table provides a pragmatic comparison of the trade-offs associated with these architectural patterns.

GIS Architectural Pattern Comparison

| Architectural Pattern | Best For | Key Benefits | Implementation Considerations |

|---|---|---|---|

| Monolith | Small-scale projects, MVPs, and teams with limited operational capacity. | Simple to develop, deploy, and test initially. Unified codebase. | Becomes difficult to scale, update, and maintain. A single point of failure. Technology lock-in. |

| Microservices | Complex, large-scale systems requiring high scalability, resilience, and agility. | Independent scaling, deployment, and development. Fault isolation. Technology diversity. | Higher operational complexity (deployment, monitoring, inter-service communication). Requires mature DevOps practices. |

| Event-Driven | Real-time applications like logistics tracking, IoT monitoring, and alert systems. | Highly scalable and resilient. Decoupled services. Excellent for responsive, asynchronous workflows. | Can be complex to debug and test. Requires careful design of event schemas, idempotency, and state management. |

The optimal pattern is contingent on specific project goals, team maturity, and the complexity of the problem domain. There is no universally correct answer.

Embracing Event-Driven Patterns

For applications that must react to changes in the physical world—such as logistics tracking, fleet management, or emergency response systems—an event-driven architecture is the superior model. Instead of inefficiently polling a database with queries like “What is new?”, the system is designed to react to events as they occur.

An event-driven GIS does not ask, “What is the current status of the delivery fleet?” It is architected to react to a stream of events like, “Truck #451 has departed the depot” or “Traffic incident reported on highway A1.”

When a delivery vehicle sends a location update, it publishes an “event.” Other services subscribe to these events and react accordingly. A notification service might alert the customer with an updated ETA, while an analytics service logs the trip’s progress for performance analysis.

This pattern is highly scalable and effectively decouples services, ensuring that a failure in one component does not cascade and halt the entire workflow. It is a foundational element for building responsive geospatial systems that deliver immediate, tangible business value.

Navigating the GIS Software Market Landscape

To make informed architectural and investment decisions, technical leaders must understand the structure of the geospatial market. It is not a fragmented space of small, competing tools but an ecosystem dominated by a few major platforms that dictate the industry’s pace and technical direction.

While the broader GIS market includes hardware and professional services, it is the software segment that captures the most value and drives innovation. This space is anchored by a few giants, most notably Esri’s ArcGIS platform, alongside powerful enterprise offerings from companies like Hexagon and Trimble.

The Dominance of Core Platforms

These platforms function as the operating systems of the geospatial world. They are vast, feature-rich environments developed over decades, supported by immense and sustained R&D investment. A market leader like Esri, for instance, reportedly reinvests around 28% of its revenue back into research and development.

This generates a high feature velocity—a continuous stream of new tools, APIs, and analytical capabilities. For companies building custom software, this presents both a challenge and an opportunity. Competing directly with the breadth of these platforms is rarely a viable strategy. However, their extensive and well-documented APIs provide a robust foundation for building specialized applications that solve specific, high-value business problems.

The core platforms provide the foundational engine and APIs. The strategic opportunity lies in building specialized applications and custom dashboards that connect this powerful engine to your unique business logic and operational needs.

This market structure fosters a vibrant ecosystem where thousands of developers and consultants build businesses by extending and customizing these core platforms. A competitive advantage can be carved out not by reinventing the geospatial engine, but by building targeted solutions that the large, generalized platforms do not address natively.

A Market Driven by Software and Growth

Market data confirms the primacy of software. The geospatial information systems software component is projected to command a dominant 53.60% share of the global market in 2026. This allows product leaders to build robust, maintainable systems that integrate with established platforms, leveraging their stability and feature sets. You can explore the complete market dynamics and projections for a comprehensive analysis.

This growth is not uniform globally. While North America remains a mature market, the Asia Pacific region is expanding rapidly, projected to grow at an 18% CAGR from 2026. This is driven by massive investments in urban planning, cloud adoption, and increased availability of high-resolution satellite imagery—all creating immense demand for spatial analysis.

For technical leaders, this landscape indicates clear strategic opportunities:

- Bespoke Web Applications: Develop custom tools that integrate with core GIS platforms via API to automate specific workflows in logistics, agriculture, or environmental compliance.

- AI-Enhanced Dashboards: Build dashboards that employ machine learning models to analyze spatial data, providing predictive insights for smart city management or portfolio risk assessment.

- Mobile-Integrated GIS: Create mobile applications that deliver location intelligence and data collection capabilities directly to field teams, improving operational efficiency and data accuracy.

Understanding this market structure is crucial. It reframes the “build versus buy” decision from a simple cost comparison to a strategic exercise. The operative question becomes: where can our engineering effort create the most durable value? The answer—whether leveraging existing platforms, building on top of them, or filling a niche with a fully custom solution—will shape your investment, architecture, and long-term competitive position.

Integrating AI and LLMs into Geospatial Systems

Historically, geospatial analysis was a bottleneck, performed by a small number of specialists through a slow, manual process. The central problem has always been extracting meaningful, real-time intelligence from complex spatial data—such as satellite imagery or IoT sensor networks—at scale. This required immense human effort.

The convergence of Artificial Intelligence (AI) and geospatial systems, a field now known as GeoAI, addresses this fundamental problem. This is not about cosmetic improvements to maps; it’s about using machine learning (ML) and large language models (LLMs) to automate analysis, identify predictive patterns, and enable non-specialists to ask complex spatial questions using natural language.

From Manual Analysis to Agentic Automation

The real-world impact of GeoAI lies in solving tangible business problems with automation. Instead of a GIS analyst manually identifying features on a map, a trained AI model can perform the task continuously and at scale.

- Automated Feature Extraction: A computer vision model can be trained to scan satellite imagery to automatically catalogue assets (e.g., solar panels, oil wells), detect illegal deforestation, or perform competitive analysis by counting vehicles in a competitor’s parking lot.

- Predictive Spatial Modeling: ML models can ingest historical weather patterns, vegetation indices, and topographic data to forecast wildfire risk, predict agricultural yields, or identify optimal sites for new infrastructure.

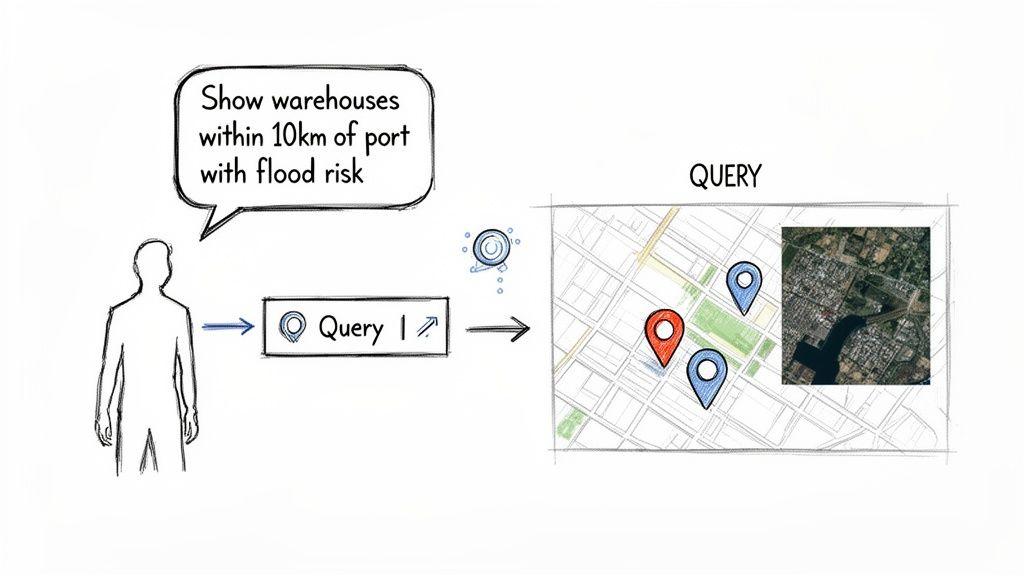

- Natural Language Interaction: This represents a paradigm shift. LLMs act as a “universal translator,” connecting a non-technical user to a complex geospatial database. This dramatically lowers the barrier to entry for accessing powerful spatial insights.

An LLM-powered agent can translate a natural language query like, “Show me all our warehouses within 10 kilometers of a major port that are in a high-risk flood zone,” into the precise spatial database queries required to generate an answer on a map, instantly.

This shift is a primary driver of market growth. The North American geospatial information systems software market is projected to expand by USD 14.57 billion between 2025 and 2030, at an accelerated CAGR of 25%. This is not just organic growth; it is an acceleration fueled by the transition from legacy desktop tools to cloud-native, AI-powered platforms. You can read more about the market forces driving GeoAI adoption.

Architectural Patterns for GeoAI Integration

A naive integration of AI models into a geospatial stack is a high-risk strategy. It introduces unacceptable risks such as model bias, data drift, and “hallucinations” (confident but incorrect outputs), which can lead to flawed business decisions. A robust architecture must include clear controls and maintain a human-in-the-loop for high-stakes decisions.

A pragmatic and maintainable architectural pattern is a multi-stage pipeline:

- Data Ingestion: Raw geospatial data (e.g., drone imagery, GPS tracks) is fed into the system.

- AI/ML Processing: A dedicated, isolated service runs the appropriate AI model (e.g., object detection) on the raw data. The output is not a visual map but structured data, such as the coordinates and classification of detected objects.

- Spatial Database Storage: This structured, AI-generated data is stored in a robust spatial database like PostGIS. Here, it can be queried, indexed, and cross-referenced with other business data.

- LLM Agent Interface: An LLM-powered agent acts as the user interface. It utilizes tool-calling capabilities to interact with the spatial database via a secure, well-defined API, translating human requests into formal queries and presenting the results.

This architecture decouples the AI models from the core database, making the entire system more modular, maintainable, and easier to upgrade. Crucially, it provides a clear chokepoint to implement human-in-the-loop (HITL) guardrails. For any high-stakes decision—such as approving an insurance claim based on AI-detected roof damage—the model’s output should be flagged for mandatory human verification before any action is taken. This ensures the AI functions as a reliable co-pilot, not an unsupervised actor prone to generating confidently incorrect results.

Implementing Security, Governance, and Operational Resilience

Treating data privacy as a feature to be added later is a critical and costly architectural error in any geospatial system. Location data is uniquely sensitive, linked to individuals, assets, and proprietary business operations. A naive “collect everything, secure it later” approach creates significant compliance risks under regulations like GDPR and NIS2 and is a direct invitation for data breaches.

Effective security and privacy are not features; they are architectural choices made at the design stage. This means embedding data protection into the fundamental structure of your geospatial information systems software.

Designing for Data Privacy and Compliance

The primary challenge with location data is the risk of re-identification. A series of anonymized location points can often be correlated to trace back to a specific individual or asset. A pragmatic, “privacy by design” approach focuses on data minimization and anonymization before the data is stored in a primary analytical database.

This involves several practical architectural techniques:

- Geospatial Aggregation: Instead of storing precise GPS coordinates, data can be aggregated into larger geographic units, such as postal codes or hexagonal grids (e.g., H3). This preserves analytical value for trend analysis while obscuring exact point locations.

- Data Anonymization and Pseudonymization: Apply cryptographic techniques to strip direct identifiers and replace them with irreversible hashes or rotating tokens. This is a mandatory step for complying with regulations that include the “right to be forgotten.”

- Granular, Location-Aware Access Control: Access control policies must understand geography. A regional manager should only be able to query data within their designated territory. This rule must be enforced at the database query level, not merely in the client-side application logic.

Privacy in a GIS is not about preventing data use; it is about enabling its use safely by reducing the “resolution” of personal data to the minimum level required for a specific business function.

By architecting these controls into the system from the beginning, compliance becomes an intrinsic property of the system, significantly reducing legal exposure and reputational risk.

Ensuring Operational Resilience and Cost Control

Beyond security, a modern geospatial system must be reliable and cost-effective. These architectures are often complex distributed systems composed of internal services and third-party APIs for functions like mapping, geocoding, and routing. A failure in any one dependency can trigger a cascading failure that impacts the entire application.

The foundation of operational resilience is system observability. This extends beyond simple uptime monitoring to include the collection of detailed logs, metrics, and distributed traces from every component in the GIS stack. This deep visibility enables engineering teams to rapidly diagnose performance bottlenecks, predict failures, and debug issues with precision.

To manage dependencies and prevent system-wide outages, you must engineer for failure.

- Implement Fallbacks: If the primary geocoding API fails, does the system automatically and gracefully failover to a secondary provider? Without this, a third-party outage becomes your outage.

- Use Caching Strategies: Repeatedly fetching the same static map tiles or geocoded addresses is both inefficient and expensive. A well-designed caching layer dramatically reduces API calls, lowering operational costs and improving system responsiveness.

- Set Rate Limits and Budgets: API calls to mapping and data services can become a significant and unpredictable operational expense. Implementing strict rate limits and automated budget alerts is crucial to prevent runaway costs from software bugs or denial-of-service attacks.

Building a resilient system requires a proactive, defensive mindset. Teams seeking to strengthen their operational posture should explore established frameworks for designing a robust disaster recovery plan for cloud systems. These principles are directly applicable to the distributed nature of modern GIS, ensuring the platform remains available and performant even when individual components fail.

Making the Strategic Build vs. Buy Decision

When incorporating a geospatial capability, the “build vs. buy” decision is one of the most critical strategic choices a technology leader will make. It is not merely a budgetary line item but a fundamental decision that impacts long-term control, total cost of ownership (TCO), and competitive differentiation. A superficial analysis can lock an organization into a path that either stifles innovation or becomes a financial black hole.

Purchasing an off-the-shelf SaaS platform is often the correct choice for standard, non-differentiating use cases. If business needs are well-defined and an existing product meets them, subscribing is almost always faster and more direct than building from scratch. Here, speed to market is the primary driver.

However, when a unique geospatial process is core to the business model, building a custom solution becomes a strategic imperative. If value is derived from a proprietary analytical model, a specialized data processing pipeline, or a user experience that generic tools cannot replicate, building is the only way to create and protect a durable competitive advantage.

A Decision Framework for Technical Leaders

To make a sound decision, one must look beyond the initial license fees or development costs. A choice based purely on upfront pricing often leads to unforeseen limitations and spiraling costs. This requires a trade-off analysis tied to long-term strategic goals.

For a deeper analysis of the infrastructure trade-offs involved, you can explore the considerations between on-premises vs. cloud deployment models.

The operative question is not “Which is cheaper upfront?” but “Which path grants us the most control over the factors critical to our success?” If your geospatial workflow is your product, you must own its architecture.

This framework helps evaluate the factors that truly determine the long-term success of the decision.

Build vs. Buy Decision Framework for GIS Software

| Decision Factor | Choose ‘Buy’ (Off-the-Shelf) When… | Choose ‘Build’ (Custom) When… |

|---|---|---|

| Strategic Importance | Geospatial is a supporting feature, not a core business differentiator. | The workflow gives you a unique competitive advantage or is your product. |

| Speed to Market | You need a working solution immediately for a standard, well-defined problem. | You have the time and resources to invest in a solution tailored for long-term strategic goals. |

| Total Cost of Ownership | Your needs fit neatly within standard subscription tiers without costly add-ons or custom development. | You need to optimize performance at scale, which will reduce long-term operational costs (e.g., API calls, compute). |

| Scalability & Control | The platform’s scaling limits and architectural constraints are acceptable for your projected growth. | You require absolute control over performance, architecture, data residency, and resource utilization. |

| Integration Needs | The platform provides pre-built connectors for your existing technology stack. | You require deep, custom integrations with proprietary or legacy systems that standard APIs do not support. |

Ultimately, the choice depends on where you must focus your engineering resources and what components of your technology stack you need to own versus rent.

The Hybrid Approach: A Pragmatic Compromise

For many organizations, the optimal solution is neither a pure build nor a pure buy. A hybrid model often provides the best balance of speed, cost, and control.

This strategy involves leveraging a robust commercial or open-source geospatial information systems software platform for foundational, commodity tasks—such as data storage, map tile rendering, and basic spatial indexing. On top of this foundation, you build your unique, high-value applications and services.

This approach allows you to focus your limited engineering resources where they create the most differentiation, on the custom logic that defines your business advantage, while avoiding the need to reinvent commodity features.

Partnering with a technical consultancy can accelerate this process, ensuring your custom layers are built on a robust, maintainable architecture that integrates cleanly with the underlying platform. This preserves your ability to innovate where it matters most while leveraging the stability of established technology.

Frequently Asked Questions

What Is the Most Significant Trend in Geospatial Software for 2026?

The most significant trend is the democratization of advanced spatial analysis through AI-powered agents. This is moving the use of GIS beyond a small group of expert analysts. Now, non-technical decision-makers can ask complex spatial questions in natural language.

For example, a supply chain manager can ask their system, “Which of our delivery routes scheduled for tomorrow face the highest risk of delay from projected weather and traffic?” An AI agent can interpret this request, query multiple APIs (weather services, live traffic data, internal routing systems), perform the spatial analysis, and provide a direct, actionable answer. This transforms geospatial information systems software from a tool for visualization into an engine for direct, automated action.

How Do I Ensure Location Data Security and Privacy?

Privacy must be built into the system architecture from the beginning; it cannot be effectively “bolted on” after the fact. The most robust approach begins with data minimization, employing techniques like geospatial aggregation to store data in larger grid cells (e.g., H3 hexagons) instead of precise coordinates. This is the essence of privacy by design.

Your objective should be to reduce the “resolution” of personal data to the minimum level necessary for a given business function. For macro-level trend analysis, for example, use postcode-level data instead of exact residential addresses.

In addition to minimization, you must implement granular, location-aware access controls at the database level. This is non-negotiable. It ensures a user in one sales territory cannot query sensitive data from another, enforcing security and data residency rules as a fundamental property of the system.

What Are the First Steps to Integrating GIS Capabilities?

Identify a single, high-value business problem that location intelligence can solve. Resist the common impulse to design a comprehensive, enterprise-wide GIS overhaul from the outset.

Start with a tightly-scoped pilot project to prove the concept and generate internal buy-in. An effective first step is often to simply enrich an existing dataset, such as a customer list, with geographic coordinates—a process known as geocoding. This small architectural enhancement unlocks immediate value, enabling basic but powerful spatial queries like, “Find all customers within a 10-kilometer radius of a proposed new store location.”

Building reliable, maintainable, and secure geospatial systems is a long-term engineering discipline. At Devisia, we partner with technology leaders to design and build custom GIS and AI solutions that function as durable business assets. Find out how we can help you build your next digital product at https://www.devisia.pro.