A data retention policy is more than a legal document defining how long your organization keeps data. It is a core architectural constraint that directly impacts system performance, security, compliance, and operational cost. A well-designed policy is the rulebook that prevents your systems from accumulating an endless, expensive, and high-risk data liability.

Failing to define and automate data retention is a direct path to crippling technical debt, security vulnerabilities, and non-compliance penalties.

The Problem: Data Hoarding as Technical Debt

Many engineering teams treat data retention as a compliance task delegated by the legal department—a checkbox to be ticked and forgotten. This perspective is dangerously incomplete. In modern software architecture, your approach to data retention is a fundamental product and engineering decision.

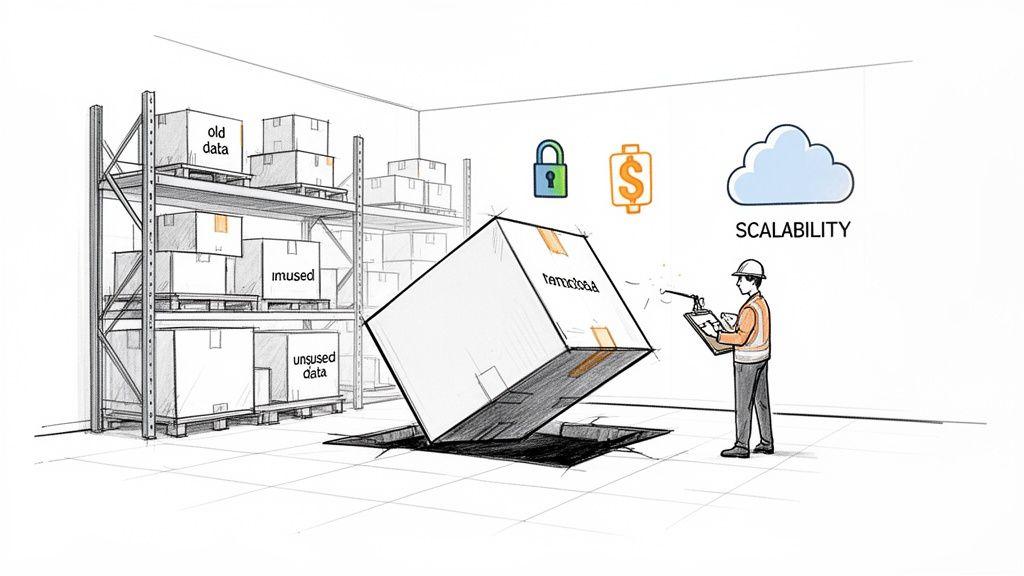

Think of your system’s storage as a warehouse. Without a clear policy, every piece of data ever generated—user logs, old transaction records, inactive user profiles, system metrics—is piled up indefinitely. Over time, that warehouse becomes cluttered, expensive to operate, and an attractive target for attackers. This state of unmanaged data accumulation creates a significant and growing liability.

The True Cost of Indefinite Data Storage

The consequences of failing to implement a proactive retention strategy grow exponentially with your business. When you hold onto data without a clear purpose, the information you store becomes more of a threat than an asset.

This liability manifests in several critical ways:

- Escalating Storage Costs: Cloud storage is a direct operational expense. As data volumes grow, so do your monthly bills for databases, object storage (Amazon S3, Azure Blob Storage), and backups. This eats directly into your gross margins.

- Increased Breach Impact: The more data you hold, the more valuable a target you become. Old, forgotten customer data is a goldmine for attackers, and a breach can lead to devastating financial penalties and reputational damage.

- Degraded System Performance: Bloated databases and massive tables lead to slower query execution, longer backup and restore times, and more complex schema migrations. This sluggishness directly harms the user experience and reduces engineering velocity.

- Severe Compliance Penalties: Regulations like GDPR, NIS2, and DORA mandate data minimization and purpose limitation. Retaining data without a clear business or legal justification can result in fines reaching millions of euros.

A proactive data retention policy transforms data from a potential liability into a managed asset. It is a cornerstone of building scalable, secure, and compliant software systems. A well-defined policy demonstrates a mature understanding of risk and a genuine commitment to privacy by design.

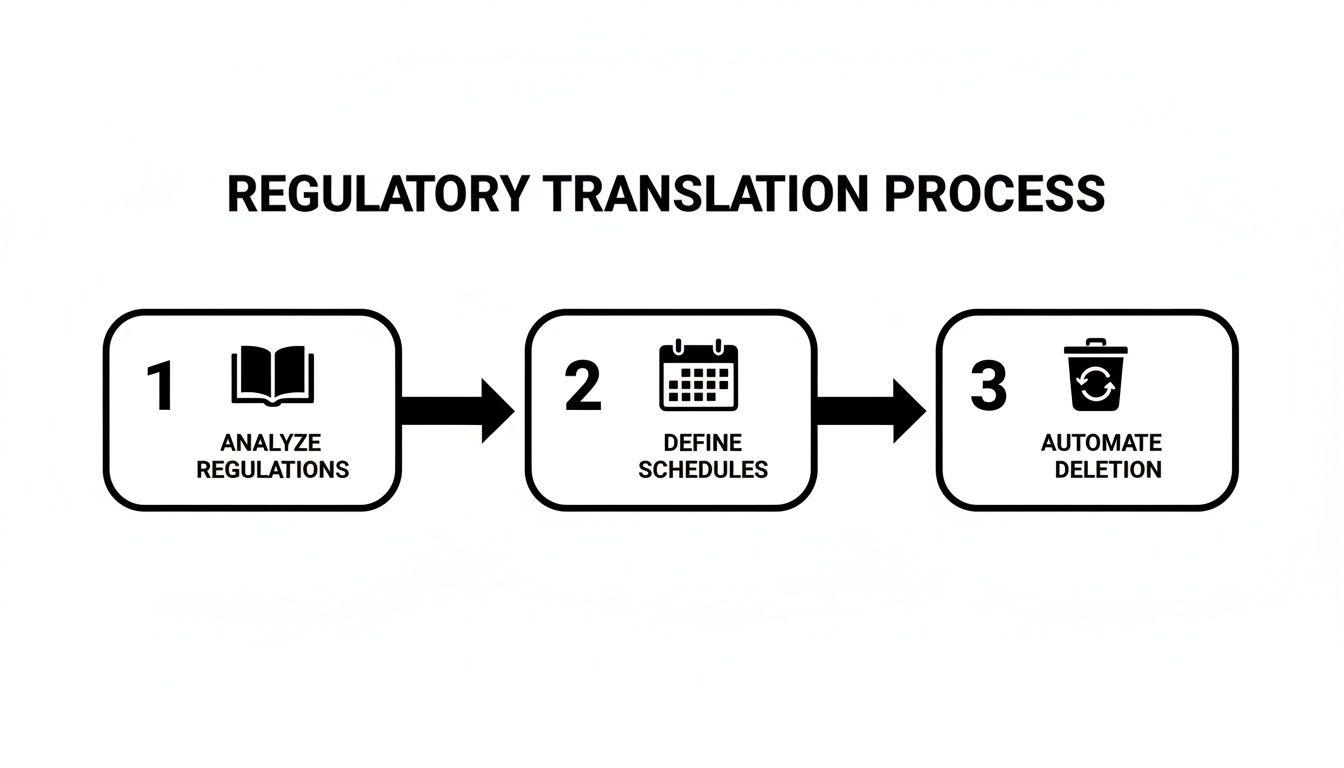

Translating these principles into automated technical workflows is a core challenge of modern software architecture.

Translating Global Regulations Into Retention Schedules

Developing a functional data retention policy requires translating complex legal requirements into specific, actionable schedules. This is a shared responsibility for engineering, product, and compliance leaders. Applying a single, generic retention period to all data is a naive approach that leads to either compliance breaches or the accidental destruction of critical business records.

The objective is to create a set of defensible schedules for each category of data your system handles. For every data type, you need a documented justification for why you retain it for a specific period. This process begins by mapping legal principles to the data flowing through your systems.

Core Principles Guiding Retention Periods

Most data protection laws, from GDPR to CCPA, are built on a handful of common-sense principles. Understanding these allows you to make sound architectural decisions even when a regulation does not specify a precise timeframe.

- Purpose Limitation: You can only retain data for the specific, legitimate purpose disclosed to the user at the time of collection. Once that purpose is fulfilled, the data’s retention period expires.

- Data Minimization: You should only collect and retain data that is strictly necessary for that stated purpose. This principle directly challenges the legacy practice of keeping data “just in case.”

- Storage Limitation: Personal data must be kept in a form that permits identification of individuals for no longer than is necessary. Afterward, it must be securely deleted or irreversibly anonymized.

The fundamental question your retention schedule must answer for every data type is: “Why do we still need this?” If you lack a clear, documented business or legal justification, the default action under regulations like GDPR is to dispose of it.

Mapping Regulations to Data Types

In practice, these principles require you to classify your data and assign distinct retention periods. For example, over-retaining data is a major compliance risk; studies show that a high percentage of data kept beyond its necessary lifecycle contains sensitive personal information, creating a liability for both legal penalties and increased storage costs.

Here is a practical breakdown of common data types and their typical retention schedules.

Regulatory Retention Period Cheat Sheet

This table provides a baseline for creating your own detailed retention schedule, mapping common data types to their governing regulations and typical retention periods.

| Data Type | Governing Regulation(s) | Common Retention Period | Key Consideration |

|---|---|---|---|

| User Account Data | GDPR, CCPA/CPRA | Active account + 30-90 days | The grace period allows for account reactivation and prevents accidental data loss. Post-grace period, data must be anonymized or permanently deleted. |

| Financial & Tax Records | Tax Laws, AML, SOX | 7-10 years | This is often a non-negotiable legal requirement that overrides a user’s “right to be forgotten” for these specific records. |

| Application & Audit Logs | SOC 2, ISO 27001, NIS2, DORA | 90-365 days | This balances security investigation needs with the high storage volume of logs. Critical security event logs may require longer retention based on risk assessment. |

| Customer Support Data | Contractual Obligations, GDPR | 1-3 years after resolution | Avoids indefinite retention of potentially sensitive conversations while enabling quality review and historical context for recurring issues. |

This table is a starting point, not a definitive legal guide. Your specific business contracts, industry standards (like HIPAA for healthcare), and local laws will introduce additional requirements.

Let’s examine these categories with an engineering lens:

1. User Account Data This includes core profile information like name, email, and authentication details. You need it as long as the account is active. Upon a user-initiated deletion, a grace period of 30-90 days is a reasonable practice to handle accidental deletions. After this period, the data must be either permanently deleted or fully anonymized.

2. Transaction & Financial Records This covers invoices, payment histories, and subscription records. Here, financial and tax laws dictate retention. Many jurisdictions require these records to be kept for 7-10 years for audit purposes. This is a legal obligation that takes precedence over a user’s right to erasure for this specific data class.

3. Application & Audit Logs These system-generated logs track user actions, API calls, and security events. They are vital for debugging and incident response but accumulate rapidly. A retention period of 90-365 days is a common and defensible standard, giving security teams a sufficient window for forensic analysis while managing storage costs.

4. Customer Support Communications Support tickets, emails, and chat transcripts often contain a mix of technical details and personal data. Retaining them for 1-3 years after an issue is resolved is a practical compromise. It allows your team to reference past interactions for context and quality assurance but avoids indefinite retention of sensitive conversations. For teams focused on compliance, see our deep dive into GDPR’s Article 30 requirements.

Architectural Patterns for Automated Data Retention

A data retention policy on paper is useless without technical implementation. Moving from manual cleanups to a fully automated system is where the real engineering work begins. The goal is to build auditable, resilient workflows that enforce retention rules as a background process.

A successful strategy translates legal rules into defined schedules and robust automation.

Automating Data Lifecycles in Cloud Storage

Modern platforms rely heavily on cloud object storage like Amazon S3 or Azure Blob Storage. These services offer powerful, native features for automating data retention. Instead of writing custom cron jobs to scan and delete old files, use built-in lifecycle policies.

For example, an AWS S3 Lifecycle policy allows you to define rules to:

- Transition data automatically: Move objects from a standard (hot) storage class to an infrequent access (warm) or archive (cold) tier after a specified period (e.g., 90 days). This significantly reduces costs for data that must be retained but is rarely accessed.

- Schedule object expiration: Define a rule to permanently delete objects after their retention period ends, such as purging application logs after 365 days.

This offloads the mechanics of retention to the storage layer, simplifying your application code and increasing reliability.

Designing Robust Deletion Workflows for Databases

While lifecycle policies work well for unstructured files, removing structured data from a database requires a more sophisticated approach. Running a direct DELETE FROM users WHERE... query in production is risky, can cause performance degradation, and is difficult to audit.

A robust deletion workflow must be idempotent and graceful.

Idempotency ensures that executing a deletion job multiple times for the same data yields the same result as running it once. This is a critical property for building resilient systems that can recover from failures without corrupting data or causing unintended deletions.

A superior pattern is a multi-step, asynchronous process:

- Flagging: A scheduled job runs periodically (e.g., daily) and identifies records that have passed their retention date. Instead of deleting them immediately, it flags them for deletion by setting a

deletion_pending_attimestamp. - Processing: A separate, idempotent worker process consumes these flagged records in small, manageable batches and performs the actual deletion. This prevents long-running

DELETEqueries from locking tables and impacting application performance. - Auditing: Every step—from flagging to final deletion—is recorded in an immutable audit log. This provides a verifiable record of what was deleted, when, and under which policy rule.

Hard Deletion vs. Soft Deletion vs. Anonymization

When removing data, you have three primary techniques. The choice depends on your compliance requirements, technical constraints, and business needs.

- Hard Deletion: The data is permanently and irretrievably removed from the database. This is the cleanest option from a privacy perspective and is the standard for fulfilling “right to be forgotten” requests.

- Soft Deletion: A record is marked as deleted by setting a flag (e.g.,

is_deleted = true). The data remains in the database, which simplifies recovery but can create compliance risks if the “deleted” data is not eventually purged according to a defined policy. - Anonymization: Personally identifiable information (PII) is removed or obfuscated, but the remaining anonymized record is kept for analytical purposes. For example, a user’s name and email are replaced with a random ID, but their transactional history is retained for trend analysis. True anonymization is technically challenging but offers a powerful way to balance compliance with business intelligence.

Implementing Legal Holds

A critical but often overlooked requirement is the legal hold—a directive to suspend deletion for specific data related to litigation or an investigation. Your architecture must support this without halting your entire retention system.

The naive approach is to disable all deletion jobs, which would put you in breach of your retention policy for all other data.

A better implementation involves adding a “legal hold” attribute to your data models. Before your workflow deletes any record, it must first check for this flag. If a hold is active, deletion is skipped for that specific record or user, while all other data continues through its normal lifecycle. This targeted suspension ensures you meet legal obligations without creating a wider compliance failure.

Navigating Data Retention in AI and LLM Systems

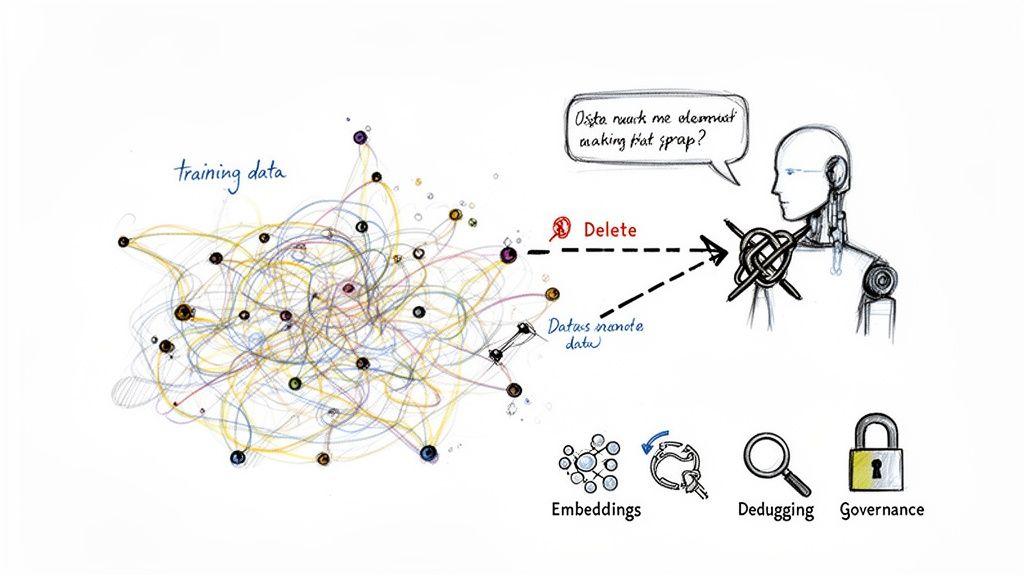

When you introduce AI and Large Language Models (LLMs), conventional data retention policies are insufficient. The way these systems learn from and retain information is fundamentally different from a relational database. Applying traditional database retention rules to an AI pipeline is a dangerously naive approach.

The core problem is data entanglement. Once a model learns from a piece of data, that information is embedded within the model’s parameters. Surgically removing a single user’s input after training is often technically impossible without retraining the entire model—an expensive and impractical process.

The Lifecycle of Data in AI Systems

To build a workable policy, you must classify the different types of data your AI system uses and generates. Each type has a unique value and risk profile.

- Training and Fine-Tuning Datasets: The foundational data used to build or specialize your model. This data is often retained as long as the model version is active to allow for auditing, debugging, and future retraining.

- User Prompts and Inputs: The queries and commands users provide to the system. This data is invaluable for monitoring misuse and improving the product, but it is also laden with privacy risks.

- Model Outputs and Responses: The content generated by your AI. Retaining this data is useful for quality control and safety monitoring, but it creates a massive, fast-growing storage footprint.

- Embeddings in Vector Databases: These numerical representations of data power similarity searches. While not human-readable, they are derived from source data and can contain echoes of sensitive information. They must be managed as part of the data lifecycle.

This is where a robust approach to data protection and privacy by design becomes non-negotiable.

Practical Retention Strategies for AI Data

Managing this landscape requires a targeted, pragmatic approach that balances model performance, user privacy, and operational cost.

The central challenge is that the ‘right to be forgotten’ collides with the technical reality of how models learn. A responsible AI governance model acknowledges this conflict and implements controls to manage it.

Instead of aiming for the impossible goal of perfect data deletion from a trained model, focus on controlling inputs and rigorously managing outputs.

This requires a few key controls:

- Purpose-Built Data Lakes: Isolate AI training data in a dedicated environment with strict access controls. Tag every dataset entering this environment with its purpose and an explicit expiration date.

- Distinct Policies for Interaction Logs: Treat LLM interaction logs (prompts and responses) differently from foundational training data. A much shorter, more aggressive retention period—such as 30-90 days—is often appropriate. This provides a window for quality review and abuse monitoring while minimizing privacy exposure.

- Human-in-the-Loop for Data Pipelines: Implement checkpoints where a human reviews data before it is used for fine-tuning. This control is effective at preventing sensitive or inappropriate information from being permanently incorporated into your model.

- Linked Deletion for Embeddings: Your system must have a process to delete the embeddings in your vector database whenever the original source data is deleted. The two are inextricably linked and must be managed together.

By implementing these granular controls, you build AI systems that are not only powerful but also compliant and trustworthy.

Building a Practical Governance Framework

A data retention policy without clear ownership is merely a document. To be effective, it requires a practical governance framework that assigns clear accountability for enforcement, review, and updates. A common mistake is treating policy creation as a one-time project. In reality, data retention policies must be living documents that evolve with your product, contracts, and the regulatory landscape.

Defining Stakeholder Roles and Responsibilities

Effective governance starts with a cross-functional team that can balance business needs, technical feasibility, and legal obligations.

- Product Leaders: Define the legitimate business purpose for retaining data. They justify why specific information is necessary for product features to function and for delivering user value.

- CTOs and Engineering Leads: Own the technical implementation. They are responsible for designing automated deletion workflows, selecting appropriate storage patterns, and implementing tools for legal holds and archival.

- Compliance Managers or Data Protection Officers (DPOs): Act as the legal guardians. They interpret regulations (like GDPR, CCPA, or HIPAA) and ensure retention schedules are compliant and defensible under audit.

A policy becomes real when these three perspectives—product, engineering, and compliance—are in constant dialogue. Without this collaboration, engineering will build systems that don’t meet legal needs, and product goals will be at odds with both.

The Data Governance Committee Model

For growing organizations, formalizing a data governance committee or assigning a Data Steward is a crucial step. This creates a central point of accountability for reviewing and updating the policy periodically. This model is particularly important for frameworks like SOC 2, where auditors look for evidence of established governance. You can read more about data retention’s role in SOC 2 compliance on linfordco.com.

A strong governance structure ensures your policy remains aligned with business reality and legal mandates. For companies navigating these complexities, exploring governance, risk, and compliance software can provide critical support. A lean, agile governance process is what turns your policy from a static document into a dynamic tool for managing risk.

Your Actionable Data Retention Implementation Plan

This checklist breaks down the key takeaways from this guide into a concrete project plan for translating your data retention requirements into robust, automated software.

Phase 1: Initial Discovery and Planning

This foundational phase is about understanding what data you have and your obligations related to it. Rushing this step leads to flawed policies and technical debt.

- Assemble Your Cross-Functional Team: Bring leaders from Product, Engineering, and Legal/Compliance together. A policy built in a silo is doomed to fail.

- Conduct a Data Inventory: Map every type of data your system collects, processes, and stores. This includes user data, system logs, transaction records, support chats, and AI training sets.

- Classify Your Data: For each data type, classify its sensitivity (e.g., public, confidential, PII) and its business purpose. This classification is the bedrock of your retention schedule.

Phase 2: Policy Definition and Design

With a clear inventory, you can now define the rules of engagement for your data.

A well-designed policy is your organization’s single source of truth for the data lifecycle. It’s a defensible document that answers the critical question: “Why do we still have this data?”

- Map Regulatory Requirements: Link each data category to its governing laws (GDPR, CCPA, HIPAA, etc.) and any contractual obligations.

- Define Retention Schedules: For every data category, define a specific retention period. Document the start trigger (e.g., account closure), the duration (e.g., 90 days, 7 years), and the end-of-life action (e.g., delete, anonymize).

- Choose Technical Solutions: Select architectural patterns that fit your stack. Will you use cloud lifecycle rules? Build asynchronous deletion workflows? How will you handle legal holds?

Phase 3: Implementation and Ongoing Governance

This is where your policy becomes a functional part of your software.

- Assign Ownership and Implement: Assign clear responsibility for building the technical controls. A designated Data Steward or governance committee should own the process and its outcomes.

- Test and Validate: Rigorously test your deletion and anonymization workflows. Ensure they are idempotent, auditable, and can handle edge cases like legal holds correctly.

- Document and Train: Update internal documentation and train relevant teams—especially engineering and customer support—on their roles and responsibilities.

- Monitor, Audit, and Evolve: Schedule annual reviews of your policy to adapt to new regulations, product features, and business needs. Implement logging to provide an auditable trail of all data disposal actions.

Common Questions (and Practical Answers) on Data Retention

Here are answers to common questions we hear from technical and product leaders during implementation.

What’s the Real Difference Between Archiving and Deleting?

Archiving and deleting serve different purposes and have different cost and compliance implications.

- Data Archiving is moving inactive data to a cheaper, slower storage tier for long-term retention. The data is still available for legal or audit purposes.

- Data Deletion is the permanent, irreversible removal of data.

Your data retention policies must clearly define when to archive (to save costs) and when to delete (to meet legal obligations).

How Do We Actually Handle a ‘Right to Be Forgotten’ Request?

A “Right to be Forgotten” request under GDPR is a legal mandate that requires a reliable technical process. Your architecture must be able to:

- Locate all of a user’s personal data across all services and databases.

- Trigger a workflow to permanently delete or irreversibly anonymize it.

- Respect legal holds and other obligations that prevent deletion of certain data (e.g., financial records).

This is a non-trivial engineering challenge that tests the maturity of your data architecture.

Can We Keep Anonymized Data Forever?

In principle, yes. If data is genuinely and irreversibly anonymized, it is no longer personal data and falls outside the scope of most privacy regulations. However, truly anonymizing data is difficult. Many teams mistakenly implement pseudonymization, where data can be re-identified. Pseudonymized data is still considered personal data and remains subject to retention schedules.

How Often Should We Review Our Data Retention Policy?

Review your policy at least annually, or immediately following a significant change such as:

- Launching a new product or major feature.

- Expanding into a new jurisdiction with different laws.

- A change in the regulatory environment (e.g., a new privacy law).

Treating your policy as a living document is the only way to manage risk effectively and ensure your compliance efforts remain relevant.

At Devisia, we build reliable software with privacy as an architectural choice, not an afterthought. We provide a clear path to meaningful, maintainable software. Learn more about our approach.