For too long, data protection and privacy have been treated as compliance checkboxes. This view is a technical and strategic error. For B2B leaders, they are deliberate engineering choices that define modern, resilient software systems.

Getting this right is not primarily about avoiding fines; it is about safeguarding customer trust, reducing architectural debt, and building a durable competitive advantage.

Why Data Protection Is a Foundational Architectural Concern

The conversation around data protection has matured. It is no longer a last-minute task for the legal department or a feature tacked on before launch. It is now a core principle of sound software engineering and a pillar of business strategy.

For founders, CTOs, and product leaders, viewing privacy through a purely compliance-focused lens is a strategic mistake. Regulations like GDPR are not just bureaucratic hurdles; they codify fundamental user rights that translate directly into architectural requirements. A system not built with privacy in mind is, by definition, a flawed system. It carries latent risks that eventually surface as crippling fines, reputational damage, and expensive, painful refactoring.

The Architectural Mandate

Treating privacy as an architectural choice means it shapes decisions from day one of the development lifecycle. This philosophy is about building systems that are secure and compliant by design, not by accident.

Privacy by design is a proactive, preventative approach that begins before a single line of code is written. It is about making fundamental architectural choices that minimise data exposure by default. Security asks, “Is the data protected?” Privacy by design asks, “Do we even need this data in the first place?”

This guide offers a pragmatic, engineering-first approach to data protection. We will cut through the legal jargon and provide CTOs and product managers with actionable guidance on:

- Core Principles: Translating legal requirements into concrete engineering constraints.

- Architectural Patterns: Implementing data minimisation, pseudonymisation, and robust access controls.

- Modern Threats: Securing your tech stack against contemporary cybersecurity risks.

- AI-Specific Challenges: Navigating the unique privacy complexities of AI and LLM systems.

This proactive stance is not just about managing risk. A robust data protection framework is a powerful market differentiator. It signals a deep respect for customer data that builds long-term loyalty and trust. Understanding the role of a data protection manager can also offer key insights into how to make these principles operational.

Navigating the Global Data Protection Landscape

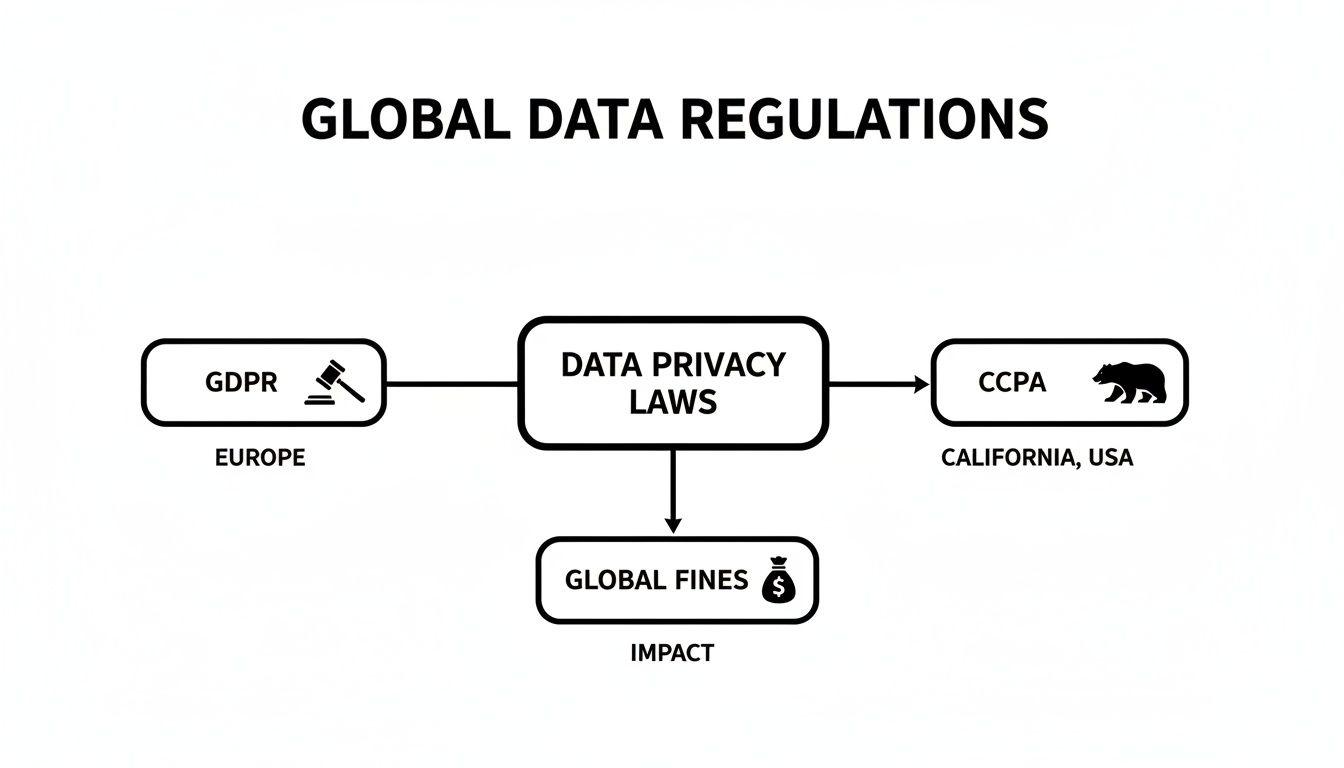

For engineering and product leaders, the global web of data protection regulations—GDPR, CCPA, NIS2, DORA—can feel like an intimidating morass of acronyms.

The key is not to memorise every clause, but to understand the core engineering principles that connect them. These laws are not just legal documents; they are technical specifications that dictate how modern software must be built. They translate abstract legal ideas like lawful basis, data minimisation, and fundamental user rights into hard engineering constraints. For a system to be compliant, its architecture must be built on these pillars. A policy-based approach alone is insufficient.

From Legal Principles to Engineering Constraints

A lawful basis means you must have a clear, documented reason for every piece of personal data you process. This is not a box-ticking exercise; it demands a direct, demonstrable link between the data collected and the service provided. If you collect a user’s location, your system must have a feature, like local delivery tracking, that requires it.

Data minimisation is the engineering discipline of collecting only what is absolutely essential and, just as importantly, deleting it when it’s no longer needed. A naive approach is to create a single, monolithic “users” table with dozens of nullable columns, “just in case.” A privacy-first architecture, however, would partition data into distinct, purpose-driven services. This ensures a system for behavioural analytics, for instance, has zero access to a user’s payment details.

Finally, user rights like access, rectification, and erasure are not features you can bolt on later. They demand specific architectural choices from day one.

A Data Subject Access Request (DSAR), or the ‘right to know,’ is not a support ticket; it is an architectural stress test. It requires your systems to locate, aggregate, and export every piece of a user’s data across all your microservices, databases, and third-party logs. Without a robust data map and automated workflows, this becomes a frantic, manual, and nearly impossible task.

This reality forces engineering teams to design systems with data retrieval and deletion capabilities from the very beginning.

The Real-World Consequences of Non-Compliance

The motivation to implement these principles correctly is increasingly financial. The compliance landscape has matured, and regulators are applying unprecedented pressure. Under frameworks like GDPR, companies face fines of up to €20 million or 4% of their global annual turnover for significant failures.

The United States is following a similar trajectory. By 2026, 20 states will be enforcing their own consumer privacy laws, creating a complex patchwork for any company operating nationwide. You can explore more about these evolving figures and what they mean for business leaders.

This shift proves that regulators are no longer just reading policy documents; they’re inspecting the code and the infrastructure. A weak hashing algorithm, an insecure API, or the inability to honour a deletion request are all technical failures with very real financial consequences.

Key Data Protection Regulations at a Glance

Viewing these regulations through an engineering lens makes them far less intimidating. Instead of getting lost in legal text, we can focus on what they mean for our architecture and product features. The table below translates key regulations from legalese into engineering tasks.

| Regulation | Geographic Scope | Core Principle for Engineers | Example Implementation |

|---|---|---|---|

| GDPR (General Data Protection Regulation) | European Union | Consent & Lawful Basis | Building a granular consent management platform where users can opt-in to specific data uses (e.g., analytics vs. marketing) and easily revoke that consent. |

| CCPA/CPRA (California Privacy Acts) | California, USA | Right to Opt-Out & Transparency | Implementing a “Do Not Sell or Share My Personal Information” link and engineering the backend to respect this flag across all advertising and data-sharing integrations. |

| NIS2 Directive | European Union | Cybersecurity & Incident Reporting | Creating robust, centralised logging and automated alerting systems that can detect a breach and gather necessary report data within the strict 24-hour notification window. |

| DORA (Digital Operational Resilience Act) | EU Financial Sector | Third-Party Risk & Resilience | Architecting systems with clear failover mechanisms and performing mandatory penetration tests on critical third-party software dependencies. |

Ultimately, navigating the global data protection landscape is an engineering challenge. By translating principles into architecture, technical leaders can build systems that are not only compliant but also more robust, trustworthy, and resilient by design.

Implementing Privacy by Design in Your Software Architecture

The legal theory is clear. But how do we translate data protection and privacy from a compliance checklist into actual, working software? This is where Privacy by Design ceases to be a buzzword and becomes a set of engineering principles.

The goal is to make privacy the default state, not an optional add-on. It means building systems where data exposure is structurally minimised from day one. This is not about appeasing lawyers; it is about creating a durable, maintainable architecture. A common but dangerous shortcut is to create monolithic user data stores, hoarding information “just in case.” This approach creates a massive liability. A single breach of that central database could expose every piece of user data you hold.

A privacy-first architecture rejects this model. Instead, we treat data collection with surgical precision. Every component in the system should only access the absolute minimum of information it needs to perform its function. That is the foundation of trustworthy and maintainable software.

Core Architectural Patterns for Privacy

To put Privacy by Design into practice, engineers can rely on several battle-tested patterns. These are the building blocks for systems that are both compliant and resilient.

-

Data Minimisation: This is the most critical principle. Before collecting any data, ask: is this absolutely essential for the feature to function? For example, instead of storing a user’s exact date of birth to verify their age, you can store a simple boolean:

is_over_18. The requirement is met without holding sensitive Personally Identifiable Information (PII). -

Data Segregation: Separate different types of data, both logically and physically. A user’s profile information (name, email) should not live in the same database—or even the same schema—as their behavioural analytics data (clicks, page views). This containment ensures that a compromise in one system doesn’t automatically bleed into another. Your analytics service should query an event log with anonymous IDs, not the main customer database.

-

Pseudonymisation: Replace direct identifiers with reversible, non-identifying tokens. A user with ID

123in the main database might becomeE7A8B9in the analytics system. This allows you to track user journeys and aggregate behaviour without directly handling their personal data, dramatically reducing your risk profile.

These patterns are not abstract ideas; they have a direct impact on how you design services and databases.

From Data Storage to Access Control

Once the data is stored securely, the next critical layer of data protection and privacy is controlling who can access it. A robust access control model is non-negotiable.

Role-Based Access Control (RBAC) is a standard pattern that is incredibly powerful for enforcing the principle of least privilege. An engineer’s role should not grant them carte blanche access to production databases. A customer support agent’s role, for instance, might only grant read-only access to a specific customer’s non-sensitive data, and only when an active support ticket justifies it.

Implementing RBAC correctly means that no single person or system holds the keys to the entire kingdom. Access should be temporary, audited, and strictly limited to a specific, justifiable task. This drastically reduces the risk of both insider threats and the damage from a compromised account.

Adopting these patterns requires a long-term mindset. The initial engineering investment is higher than taking shortcuts, but the ROI is significant. You build a system that is easier to audit, simpler to maintain, and far less risky. Compliance with regulations like GDPR becomes a natural side effect of good architecture, not a frantic, last-minute scramble.

This flowchart makes it clear: regional laws like GDPR and CCPA now have a direct, global financial impact. Strong privacy architecture is not just a best practice—it is a business necessity, regardless of where you operate.

Securing Your Tech Stack Against Modern Threats

A robust privacy architecture is only half the story. Without equally strong security controls, it is a house with glass walls. True data protection and privacy depend on the ability to defend your systems against a threat landscape that is more complex and faster-moving than ever.

Relying on legacy models like perimeter defense is dangerously outdated. Today, a single weakness—a misconfigured API, a vulnerability in your software supply chain, or one successful social engineering attack—can escalate into a catastrophic data breach. For technical leaders, the challenge is to shift from a reactive posture to embedding security throughout the entire software development lifecycle (SDLC). This means building a defense-in-depth strategy where multiple, overlapping controls work in concert. If one layer fails, others are ready to detect, contain, and neutralise the threat.

Understanding the Evolving Threat Landscape

Today’s attackers are not just launching brute-force attacks; they are strategic, efficient, and they exploit common weaknesses with alarming speed. The numbers paint a stark picture: you can review key data privacy statistics to see the full scope of the challenge.

The key takeaway is that attackers are relentlessly targeting the weakest links in your infrastructure and software supply chain, with a laser focus on stealing data for extortion. A reactive, perimeter-based security model is no longer a viable defense.

Actionable Mitigation Strategies for Engineering Leaders

Securing your tech stack means moving from abstract principles to concrete, technical controls. A pragmatic approach focuses on high-impact areas that directly shrink your attack surface and improve your response time.

-

Secure API Design: Many breaches originate from poorly secured APIs. You must enforce strict rate-limiting, use robust authentication like OAuth 2.0, and implement fine-grained authorisation. Every single API endpoint handling sensitive data must validate user permissions on every request—never trust a check made on the client side.

-

Automated Dependency Scanning: Your application is only as secure as its weakest dependency. Integrate automated tools like Dependabot or Snyk directly into your CI/CD pipeline. These tools continuously scan for known vulnerabilities in your open-source libraries. A critical vulnerability in a third-party package is a critical vulnerability in your product.

-

Robust Logging and Anomaly Detection: You cannot stop an attack you cannot see. Implement centralised, structured logging across all your services and infrastructure. These logs are not just for debugging; they are a vital security tool for spotting unusual activity, like a sudden spike in failed login attempts or unexpected data access patterns from a service account.

An effective incident response plan is not a document that gathers dust. It is a battle-tested playbook that your team drills regularly. It must define clear roles, communication channels, and technical steps for isolating systems, preserving evidence, and recovering services. When a breach occurs, confusion is your enemy.

Implementing these controls demands a fundamental shift in mindset. Security is not a separate phase tacked on at the end of development; it is an integral part of writing high-quality, reliable code. If you need expert guidance, engaging with specialised cybersecurity consultancies can bring in the expertise needed to assess your risks and implement the right controls. A secure tech stack is the bedrock upon which genuine data protection and privacy are built.

Practical Data Protection for AI and LLM Systems

Integrating AI and Large Language Models (LLMs) into your products introduces entirely new data protection and privacy challenges. These are not just bigger versions of old problems; they are fundamentally different risks that demand new architectural thinking.

Treating an LLM as just another API call is a critical mistake. It is a shortcut that can—and often does—lead to serious data leaks and regulatory trouble. Unlike traditional software, AI models have complex, often unpredictable behaviors. Their outputs are probabilistic, not deterministic. This means they can accidentally leak sensitive information absorbed during training or from a user’s prompt. This creates unique failure modes that your standard security measures are not built to handle.

For engineering and product leaders, this means designing systems with a clear-eyed view of how data flows to, through, and from the model. Every prompt containing customer data is a potential privacy incident waiting to happen.

Unique Risks in AI and LLM Integration

The risks here go far beyond simple data storage. The very nature of how these models process information opens up new attack surfaces and the potential for unintended data exposure.

-

Training Data Contamination: If personal data makes its way into a model’s training set, the model can “memorise” and later regurgitate that data in its responses. This is not a bug; it is a persistent, hard-to-fix data leak that violates core privacy principles.

-

Prompt Injection and Data Leakage: A malicious actor can craft a prompt to trick the model into ignoring its safety instructions to reveal sensitive system information or data from other users’ sessions. This is a new class of injection attack specific to LLMs.

-

Inadvertent PII Exposure: A model tasked with summarising a customer support ticket might include the customer’s name, email, or other personal details in its summary, even if instructed not to. This probabilistic behaviour makes simple output filtering an unreliable defense.

These risks are not theoretical. They are practical engineering problems that demand specific, architectural solutions.

Architectural Patterns for Safer AI Systems

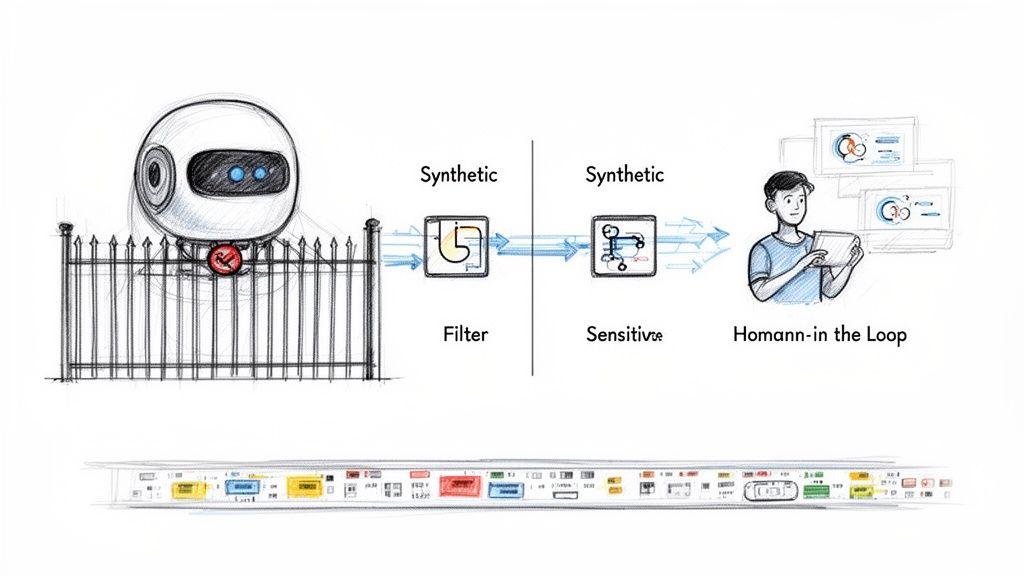

To mitigate these challenges, you must architect your system on the core assumption that the model is a potential point of failure and design explicit safeguards around it. This means creating layers of control to protect data before it ever reaches the model and to sanitise outputs before they are shown to a user.

A critical architectural mistake is allowing direct communication between your core application databases and a third-party LLM. The correct approach is to architect a ‘buffer’ or ‘proxy’ service that intercepts all requests, sanitises the data, and manages interaction with the model.

This intermediate service becomes your crucial control point for implementing privacy-enhancing patterns.

Implementing Practical Mitigation Strategies

Building a robust privacy posture for AI involves a mix of data-centric and model-centric controls. These strategies work together to form a layered defense.

-

Input Sanitisation and Anonymisation: Before any data is sent to an LLM, a dedicated service should scrub it for PII. Use techniques like Named Entity Recognition (NER) to find and replace names, emails, and phone numbers with generic placeholders (e.g.,

[CUSTOMER_NAME]). -

Implementing Guardrails: A “guardrail” is a separate process or even another model that validates both the user’s prompt (input guardrail) and the model’s response (output guardrail). It acts as a security checkpoint, blocking harmful prompts, checking for PII in responses, and ensuring the output aligns with your policies.

-

Human-in-the-Loop (HITL) Workflows: For high-stakes decisions, such as those in healthcare or finance, AI outputs should not be fully automated. You must architect a workflow where a human expert reviews and approves the model’s suggestion before any action is taken. This is your final, critical check against model errors and biases.

-

Robust Logging for Auditability: Every prompt sent to the model and every response received must be logged with associated metadata (e.g., user ID, session ID). This audit trail is non-negotiable for debugging, monitoring for misuse, and demonstrating compliance to regulators.

By adopting these patterns, teams can build powerful AI-enabled features without compromising their commitment to data protection and privacy. It is a necessary investment to manage the complex risks that come with this powerful technology.

Building a Culture of Lasting Data Protection

Real data protection and privacy are not one-off projects or sprints to check a compliance box. They are an ongoing, strategic commitment. A successful approach goes beyond technical fixes; it demands a cultural shift where every engineer, product manager, and leader sees privacy as their responsibility.

This shift starts by rejecting the tired idea that privacy is a roadblock to innovation. Instead, you must frame it as a core ingredient of product excellence. A pragmatic, engineering-first approach is the only sustainable way to build software that is secure, compliant, and trustworthy by design.

From Architecture to Organisational DNA

Embedding privacy into your company’s DNA makes it a shared priority. This means continuous education, transparent processes, and the right incentives. When privacy is treated as an architectural choice from the beginning, it naturally grows into a cultural one.

To foster this environment, leadership must champion privacy as a pillar of the company’s success. This requires investment in training, tools, and giving teams the time to build things the right way.

Lasting data protection is the result of deliberate, consistent effort. It is achieved when privacy-conscious decisions become the default for everyone involved in building and running your software, from the system architect to the junior developer.

This cultural foundation is what turns good intentions into reliable, maintainable systems that customers can trust.

A Practical Checklist for Leaders

Here is an actionable checklist to help you assess your current posture and identify priorities:

- Review Your Data Map: Do you have a living document that tracks all personal data, its purpose, its location, and its retention period? If not, this is your first and most critical task.

- Assess Access Controls: Are your RBAC policies actually enforcing the principle of least privilege? Conduct regular audits to ensure no one has more access than they absolutely need.

- Evaluate Vendor Risk: Have you thoroughly vetted the privacy and security practices of all your third-party services? Pay special attention to AI and analytics platforms.

- Test Your Incident Response Plan: When was the last time you conducted a tabletop exercise or drill? A plan that only exists on paper is useless under pressure.

- Prioritise Privacy in Roadmaps: Are you allocating engineering time for privacy work? This includes refactoring code to minimise data collection or implementing new Privacy-Enhancing Technologies (PETs).

Ultimately, building a culture of data protection transforms privacy from a cost center into what it should be: a competitive advantage that builds customer trust and drives long-term growth.

Frequently Asked Questions

When it comes to data protection and privacy, B2B leaders often face the same practical questions. Here are direct answers to common issues.

Where Should a Startup Begin with Data Protection and Privacy?

Start with a data map. Before writing code for a new feature, document exactly what personal data you need, why you need it, and how long you plan to keep it. This simple exercise is the core of the data minimisation principle and the foundation of modern regulations like GDPR. It forces clarity from day one.

From there, bake privacy into your architecture. Build consent mechanisms into your UI, hash passwords correctly, and implement basic access controls. Most importantly, do not collect sensitive data unless it is absolutely critical for the product to function. A simple privacy policy and an internal data map are your most valuable first assets.

How Does Privacy by Design Differ from a Standard Security Review?

A standard security review typically happens late in the development cycle. Its purpose is to find and fix vulnerabilities, like an SQL injection risk. It is a reactive process.

Privacy by Design is a proactive discipline that starts before development begins, shaping the architecture to minimise data exposure by default.

For example, instead of storing a user’s date of birth, you might only store a boolean flag indicating if they are over 18. Security asks, ‘Is the data we store protected?’ Privacy by design asks, ‘Do we even need to store this data in the first place?‘

What Is the Single Biggest Mistake Companies Make with AI and Privacy?

The biggest mistake is treating a third-party AI model as just another API call, without architecting the data flow.

Too often, companies send sensitive customer data directly in prompts, not realising that this data may be logged by the provider or even used for future model training. This creates a significant privacy risk and a potential compliance nightmare.

The correct approach is to architect a “buffer” layer. This is an intermediate service that anonymises or pseudonymises data before it ever reaches the external model. For highly sensitive use cases, running your own on-premise or private cloud models offers a much higher degree of control and safety.

Building reliable digital products with a clear focus on privacy requires an architectural mindset from the start. Devisia helps companies turn their vision into maintainable software, embedding security and compliance into the core of every system. Learn more about our pragmatic approach to custom software and AI development at https://www.devisia.pro.