A familiar pattern plays out in early-stage software teams. Launch is close, procurement is waiting, and someone drops a generic terms of service template into the repo so the site can go live.

Nothing breaks that day. The product signs users up, invoices go out, prompts flow through the AI layer, and everyone moves on.

The trouble starts later. A customer asks whether your team can use uploaded documents to improve the product. Another wants an export after termination, but the contract never defined format, timing, or what counts as customer data. A support dispute turns into an SLA dispute because “maintenance” was left vague. An AI feature produces a harmful output and the disclaimer turns out to be broad, but technically useless.

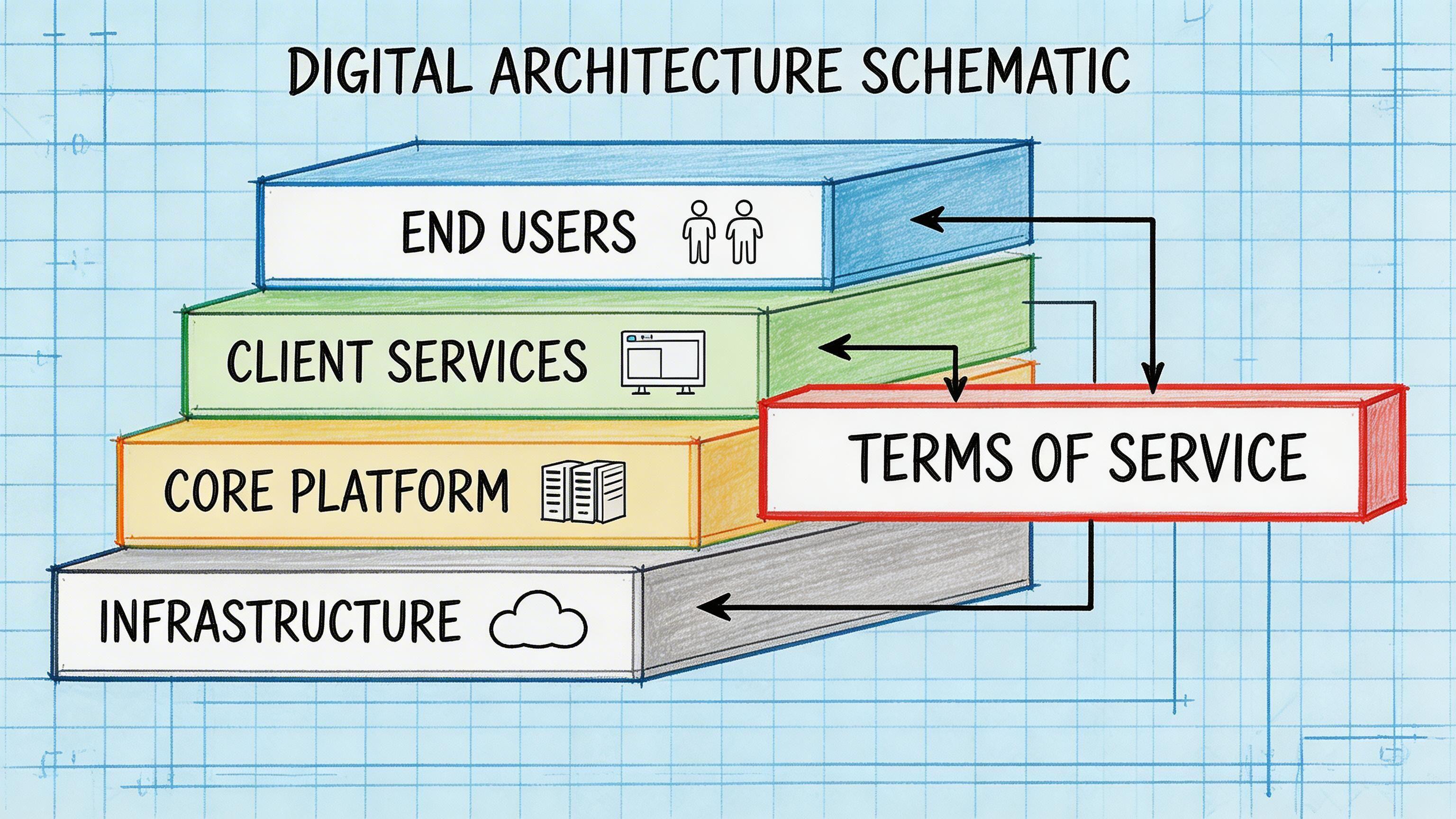

That’s why technical leaders should stop treating terms of service as footer text. In practice, they act more like an operating contract for the system. They shape what data you may process, how you log consent, which controls you need in production, and where liability sits when something fails.

Why Your Terms of Service Are a Product Not Just a Policy

The fastest way to create long-term risk is to treat terms of service as paperwork. Teams copy a template because it feels harmless. Legal will “clean it up later”. Engineering assumes it has no bearing on architecture.

That assumption fails in real products.

A SaaS platform’s terms of service define who may use the product, what they may upload, what happens if they abuse the service, when access can be suspended, which party owns outputs, and what the provider can do with customer data. Those aren’t abstract legal issues. They affect account models, moderation controls, storage design, support workflows, procurement conversations, and incident response.

A 2017 Deloitte study reported that 91% of U.S. consumers accept terms of service without reading them, as summarised by the Berkeley School of Information blog at https://blogs.ischool.berkeley.edu/w231/2021/07/09/do-we-agree-to-these-terms-and-conditions/. For a technical team, that isn’t permission to bury aggressive clauses. It’s a warning. If most users consent blindly, your product is depending on a mechanism that may not hold up well when data use, AI behaviour, or service limits are challenged.

Where copy-paste terms usually fail

Three problem areas show up repeatedly:

- Data rights drift. The product starts with analytics, then adds embeddings, retrieval, or model evaluation. The contract still uses broad language written for a simple web app.

- Operations and support ambiguity. “Commercially reasonable efforts” appears everywhere, but nothing maps to monitoring, escalation, response windows, or customer communication.

- Liability mismatches. The platform relies on third-party APIs, model providers, cloud storage, and subprocessors, but the terms still assume a monolithic application with predictable failure modes.

Terms of service become dangerous when they describe a product you no longer operate.

What a stronger view looks like

Treat the document like part of the product surface.

If your roadmap includes usage-based billing, admin roles, AI-generated content, human review queues, retention controls, or customer-specific integrations, the terms should reflect those realities. They should also be written plainly enough that product, engineering, security, and legal can all test whether the system can honour them.

That is the practical shift. Terms of service aren’t there to look complete. They’re there to align behaviour, architecture, and risk.

The Architectural Role of Terms of Service

Many CTOs think of terms of service as a legal appendix. A better mental model is an API contract between provider and user. It declares the allowed inputs, the boundaries of use, the error conditions, and the consequences of misuse.

When viewed that way, clauses stop looking like boilerplate. They start looking like system requirements.

Clauses that map directly to architecture

A few examples make this concrete.

| Clause area | What it means technically | What often goes wrong |

|---|---|---|

| Data retention | Scheduled deletion jobs, storage lifecycle rules, backup policies | The contract promises deletion, but archives or logs keep the data indefinitely |

| Acceptable use | Rate limiting, abuse detection, moderation queues, admin review tooling | The terms ban abuse, but the system has no way to detect or enforce it |

| Account responsibility | Identity, access control, session management, audit trails | Shared accounts and weak role separation undermine the clause |

| Service changes | Versioning, deprecation policy, feature flags, migration windows | Product teams make material changes with no notice workflow |

| Suspension and termination | Disablement logic, export workflows, evidence preservation | Access is cut off before billing, security, and legal records are coordinated |

The point isn’t that the document replaces engineering design. It doesn’t. The point is that weak legal text often hides weak operational design.

Privacy is an architectural choice

A lot of teams still separate privacy work from system design. They write a privacy policy after implementation, add a consent checkbox, and assume they’re done.

That breaks down quickly in SaaS and AI products because the legal promise has to be enforceable in production. If the terms say customer content won’t be used for training, you need a real control boundary around that data. If the terms distinguish service telemetry from customer content, your observability stack has to preserve that distinction. If the terms reserve a right to suspend abuse, your platform needs signals, workflows, and access controls to do it consistently.

Practical rule: Never publish a clause that your logging, storage, permissions, and support processes can’t verify.

This is especially important when product teams use tools like OpenAI, Anthropic, vector databases, Datadog, S3-compatible storage, Stripe, or workflow engines. Each tool introduces data movement. The terms should match the actual movement, not the architecture diagram from six months ago.

A short explainer is useful if your team needs a common baseline before rewriting terms:

Read the terms like an engineer

A useful review exercise is to put legal text next to system behaviour.

Ask questions like these:

- Can we prove consent? If challenged, can we show the exact version accepted, timestamp, user identity, and presentation method?

- Can we enforce the rule? If the terms ban scraping, credential sharing, or unsafe prompts, which controls enforce that?

- Can we honour the promise during failure? If a customer terminates, can we export data cleanly and delete it according to the contract?

- Can we explain data use plainly? Not just to a regulator. Also to procurement, security reviewers, and enterprise buyers.

Teams that do this early usually write better terms and build cleaner systems. Teams that don’t often discover the mismatch during sales diligence, an incident, or a dispute.

Essential Clauses for Modern SaaS Platforms

Most SaaS terms of service cover the same broad categories. The difference between a strong document and a risky one is whether each clause matches how the platform operates.

User accounts and responsibility

This clause is usually drafted as a simple access rule. In practice, it defines your identity model.

If the platform supports SSO, delegated administration, team workspaces, or service accounts, the terms should say who is responsible for account activity and what counts as authorised use. A single-user clause copied from a consumer app won’t fit an enterprise environment with role-based access control.

For engineering teams, this should line up with:

- Authentication paths such as password login, SSO, or magic links

- Authorisation models such as admin, editor, analyst, and viewer roles

- Audit logging for login events, privilege changes, and destructive actions

A mismatch here creates support pain. Customers dispute who approved a change. Internal users share credentials. Offboarding fails because the contract assumed one account per human when the system allows broader access patterns.

Payment, renewal, and service suspension

Billing language often gets less attention than privacy language, but it drives product behaviour.

A subscription clause should fit the billing engine you use. If you support usage-based fees, annual commitments, trials, overages, or workspace-level billing, write that clearly. Don’t borrow terms written for a fixed monthly app if your product charges on events, seats, or API consumption.

Operationally, this connects to:

- invoicing and tax handling

- dunning workflows

- partial suspension versus full termination

- access to historical data during billing disputes

The riskiest mistake is blunt suspension logic. If a payment failure automatically disables the whole workspace, you may interrupt legitimate operations, trigger data access disputes, and create avoidable escalation.

Acceptable use and abuse controls

This clause is where legal expectations meet production controls.

For a SaaS product, acceptable use isn’t only about offensive content. It can include API abuse, scraping, credential sharing, reverse engineering, automated extraction, model probing, and misuse of collaboration features.

Good drafting names behaviours that matter to your system. Good engineering provides proportionate controls.

Examples include:

- Rate limits to slow abusive automation

- Queue-based review for flagged content or suspicious outputs

- Administrative suspension tools with evidence capture

- Appeal and review paths so enforcement isn’t arbitrary

If the product includes community or shared data features, this section should also connect to moderation practice. Otherwise the clause exists only on paper.

Intellectual property and customer content

Here, many SaaS templates become dangerous.

A basic clause should separate at least four categories:

- the provider’s software and documentation

- customer-uploaded data and content

- system-generated metadata and logs

- outputs created by the platform

Without that separation, ownership disputes become inevitable. This is even more important when features generate reports, summaries, classifications, or other derived artefacts.

A practical drafting rule is to avoid vague phrases like “all data generated through the service”. That wording tends to collapse customer content, telemetry, and outputs into one bucket.

For teams that are also refining contractual boundaries around processing roles, a related explanation in https://devisia.pro/en/blog/data-protection-agreement is useful because many SaaS disputes arise from mixing terms of service with data processing obligations.

Maintenance, support, and service levels

In this area, generic SaaS terms often fail procurement and create avoidable conflict after launch.

According to the 2025 ISTAT Digital Economy Survey, 42% of Italian SMBs have faced terms of service disputes related to maintenance and service level agreements, as cited at https://mikeconlow.substack.com/p/unserved-and-underserved-is-a-distinction. The practical lesson is simple. Don’t write “ongoing maintenance” unless you define what that includes.

What to specify instead of vague support language

- Uptime expectations. Define whether this belongs in the terms, an order form, or a separate SLA.

- Support scope. State whether support covers bug fixing, onboarding, training, integration help, and configuration requests.

- Planned maintenance. Explain notice expectations and whether maintenance windows affect availability commitments.

- Version support. If APIs or integrations are versioned, say how long deprecated versions remain supported.

- Exit mechanics. Define export format, request path, and what happens after termination.

If support promises aren’t technically measurable, they won’t survive a dispute.

This matters even more in custom or semi-custom SaaS. Many teams operate a product that still includes customer-specific connectors, bespoke reports, and incremental releases. Their terms should acknowledge that reality instead of pretending the platform is a static off-the-shelf service.

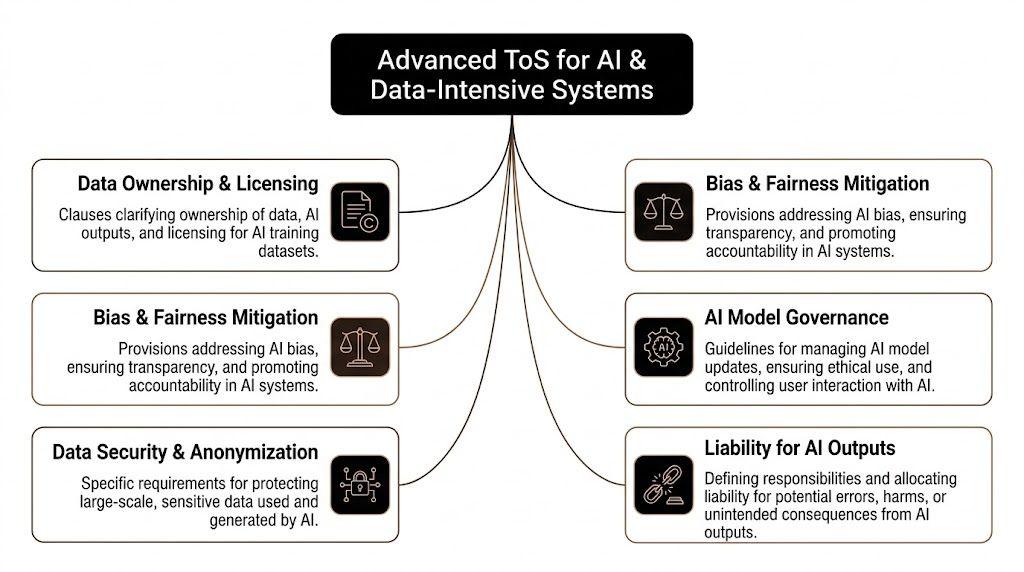

Advanced Clauses for AI and Data-Intensive Systems

Standard SaaS terms don’t cover the hard parts of AI systems. They don’t tell customers whether prompts are stored, whether uploaded files may be used for training, who owns embeddings, what happens when an agent calls external tools, or who is accountable when generated output is wrong.

That gap is no longer a niche issue. A 2025 ENISA report revealed a 35% increase in AI Act enforcement actions against companies with non-compliant terms of service, referenced at https://kb.bimpactassessment.net/en/support/solutions/articles/43000739491-understanding-impact-business-models-serving-underserved-populations. The practical message is that generic AI disclaimers won’t carry much weight if the product performs automated processing without specific, transparent terms.

Data use for training and product improvement

This clause should be precise. “We may use data to improve the service” is too broad for AI products.

For systems using LLMs, embeddings, ranking models, or evaluation datasets, you need to separate:

- customer content submitted to the service

- operational metadata needed to run the feature

- de-identified analytics, if you use them

- content that may be reused for training or benchmarking, if you allow that at all

A useful pattern is to default to a narrow licence for service delivery and make any broader use a separate, explicit choice. That choice should be reflected in the data pipeline, not only in the text. If the contract says training is opt-in, your storage and routing architecture should prevent accidental reuse.

Ownership of outputs and derived artefacts

AI products generate new categories of material. Summaries, classifications, tags, embeddings, recommendations, code suggestions, extracted entities, and workflow actions all raise different ownership questions.

Don’t bundle them under one sentence.

A stronger structure distinguishes between:

| Item | Contract question | Technical implication |

|---|---|---|

| User input | Who owns it and who may process it | Storage scope, encryption, retention, export |

| AI output | Who may use it and under what limits | Download, sharing, downstream use, auditability |

| Embeddings and indexes | Are they customer data, provider data, or a derived artefact | Deletion logic, tenancy separation, retraining boundaries |

| Feedback signals | Can thumbs-up, edits, or labels be reused | Logging design and product analytics controls |

For retrieval systems in particular, embeddings deserve specific treatment. They may not be readable like raw text, but they are still operationally sensitive because they encode customer information and often remain in vector indexes long after source files are deleted unless cleanup is deliberate.

Liability for AI output and automated actions

Many teams try to handle this with one disclaimer saying AI output may be inaccurate. That’s rarely enough.

The contract should reflect how the AI feature is used.

If the product only drafts suggestions for a user to review, that is different from a system that classifies claims, triggers workflows, scores people, or dispatches actions through tool-calling. The more automation you add, the weaker a generic “use at your own risk” sentence becomes.

A practical approach is to separate three states:

- Assistive output that requires human review before action

- Semi-automated workflows where the system proposes actions but a user approves them

- Automated execution where the service acts without immediate review

Each state should have its own terms, control requirements, and internal approval standard. Here, legal drafting and system design need to meet. If your terms say some functions require human review, then the product must have an actual review gate.

A disclaimer can describe uncertainty. It can’t replace a missing control.

Acceptable use for model behaviour

AI systems need narrower acceptable use clauses than ordinary SaaS products.

The clause should address misuse patterns that are specific to model-enabled systems, such as attempts to extract system prompts, bypass safety controls, generate prohibited content, or use the service to process data categories the provider doesn’t support safely.

This can also cover use of external tools by agents. If an agent can send emails, update records, call internal APIs, or query third-party services, the terms should reserve the right to apply stricter controls, suspend risky workflows, or require additional configuration by the customer.

Model changes, fallbacks, and service evolution

A hidden AI contract issue is model volatility. Providers change model versions, latency shifts, output quality varies, and fallback paths may alter behaviour.

The terms should leave room to update underlying models and safety systems, but not so broadly that customers lose predictability. If your product relies on multiple providers or allows provider switching for resilience, say so in a way that matches the architecture.

Useful topics to address include:

- model substitutions for reliability or safety

- feature availability by plan or region

- temporary disabling of high-risk functions

- logging and review of agent actions

- customer responsibilities when connecting external tools or APIs

What works better than a broad AI disclaimer

- Narrow data licences tied to service delivery

- Explicit opt-in language for training, evaluation reuse, or public benchmarking

- Defined output ownership rules instead of vague “generated content” wording

- Human review requirements for high-impact actions

- Clear operational rights to suspend unsafe workflows or abusive prompt patterns

Teams that adopt these clauses early usually avoid the worst mismatch in AI contracting. They don’t describe the AI as magic, and they don’t pretend legal wording can compensate for weak governance.

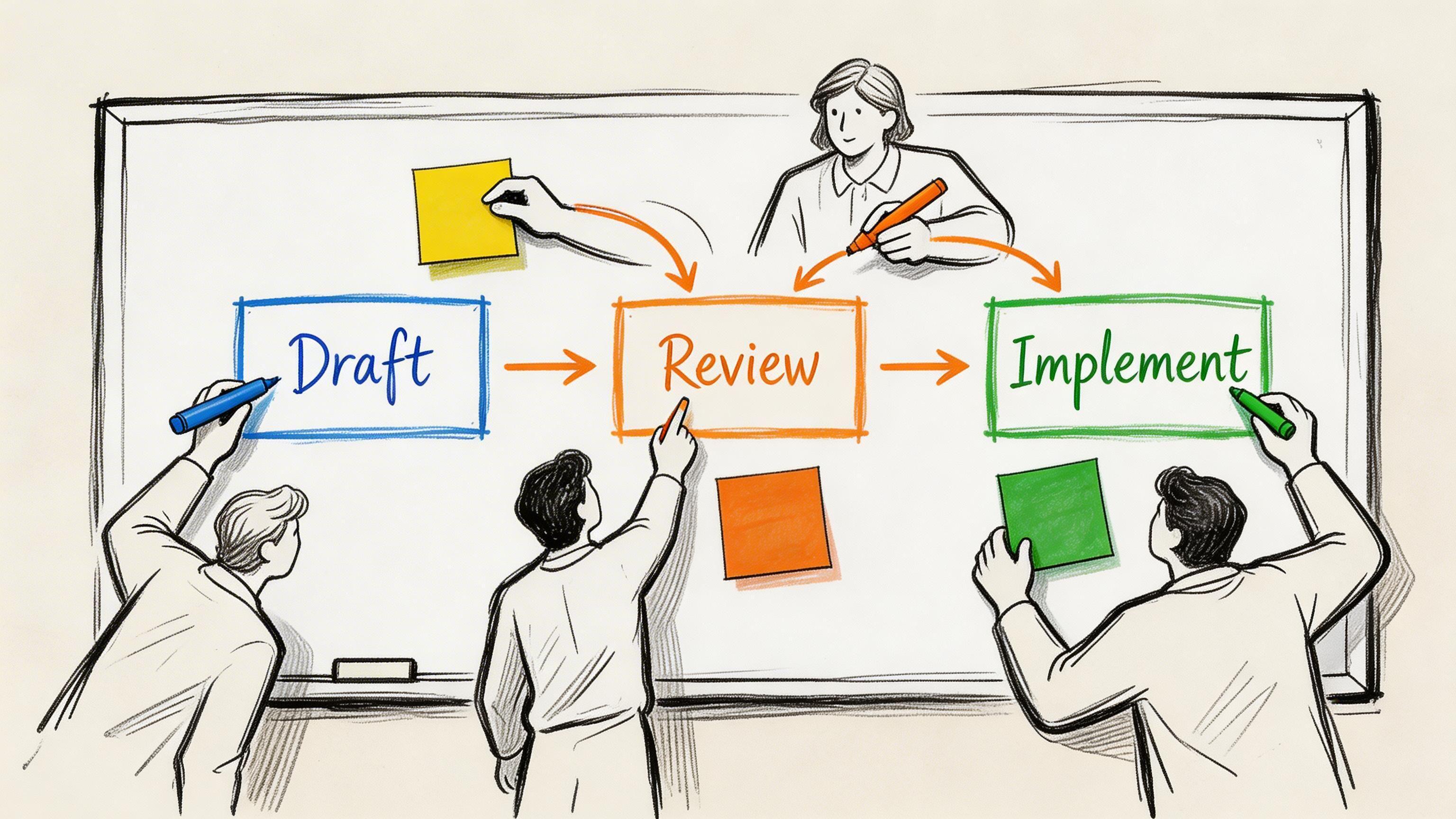

Best Practices for Drafting and Implementation

A solid terms of service process is collaborative. Legal can’t do it alone, and neither can product or engineering. The document only works when all three functions test it against reality.

Start with the system, not the template

The first drafting pass should come from a product and architecture inventory.

List what the service does:

- who can create accounts

- what data enters the system

- where that data flows

- which subprocessors or providers are involved

- what outputs the system generates

- how billing, suspension, and deletion work

- where AI features introduce additional decisions or automation

Only then should legal drafting begin. If you start from a template, the team tends to preserve assumptions that aren’t true in your environment.

Use a structured review workflow

A practical workflow usually looks like this:

-

Product review

Product owners map features, plans, customer promises, and known roadmap changes. -

Engineering review

Engineers validate whether the contract maps to actual controls such as retention jobs, access controls, consent logging, export tools, and abuse handling. -

Security and privacy review

This pass checks data flows, subprocessors, retention promises, and incident-related clauses. -

Legal consolidation

Counsel turns the operational decisions into enforceable language and removes contradictions. -

Implementation review

The team tests sign-up flows, acceptance capture, admin interfaces, and update notices before release.

This is also where policy boundaries matter. If your team is refining how terms of service interact with privacy and cookie notices, https://devisia.pro/en/blog/privacy-cookies-policy is a useful companion because many implementation failures come from presenting overlapping documents in a confusing way.

Don’t ignore data use restrictions

This is one of the most important negotiation points in modern software contracts.

TechContracts Academy analysis found that 70% of cloud providers claim broad rights to use customer data for “product improvement”, and that negotiating specific “no third-party sharing or AI training” clauses can reduce data-related legal disputes by 40% during enterprise audits, according to https://www.techcontracts.com/2022/11/03/ten-key-data-terms-in-it-contracts-an-issue-spotter-updated/.

For implementation, this means the contract should be paired with technical boundaries such as scoped storage, tenancy separation, and reviewable data paths. Otherwise the clause may be narrower than the system.

Capture consent like an audit event

Browsewrap in a footer is weak for anything material. If the terms matter, acceptance should be captured through a deliberate interaction tied to the user or authorised workspace representative.

What to log:

- Version identifier of the exact terms accepted

- Timestamp and account identity

- Presentation context such as sign-up, renewal, or updated terms prompt

- Jurisdictional or language variant where relevant

Store this as a proper audit event. Don’t leave it in ephemeral front-end logs.

The enforceability of acceptance often depends less on the PDF and more on whether your system can prove the user actually saw and accepted the relevant version.

Versioning and change management

Terms of service should change when the product changes materially. That requires a process.

Create a version history with effective dates, internal approval records, and release notes for major changes. If you add AI features, usage-based billing, new regions, or external integrations, assess whether that requires updated acceptance.

A simple operational checklist helps:

- Check product drift against the current document every release cycle

- Review customer-facing settings so controls match written promises

- Test termination flows including export and deletion

- Validate support language against the actual service desk process

- Coordinate customer communication when changes affect rights or obligations

The best drafting process isn’t the most elaborate one. It’s the one that keeps the contract and the system aligned over time.

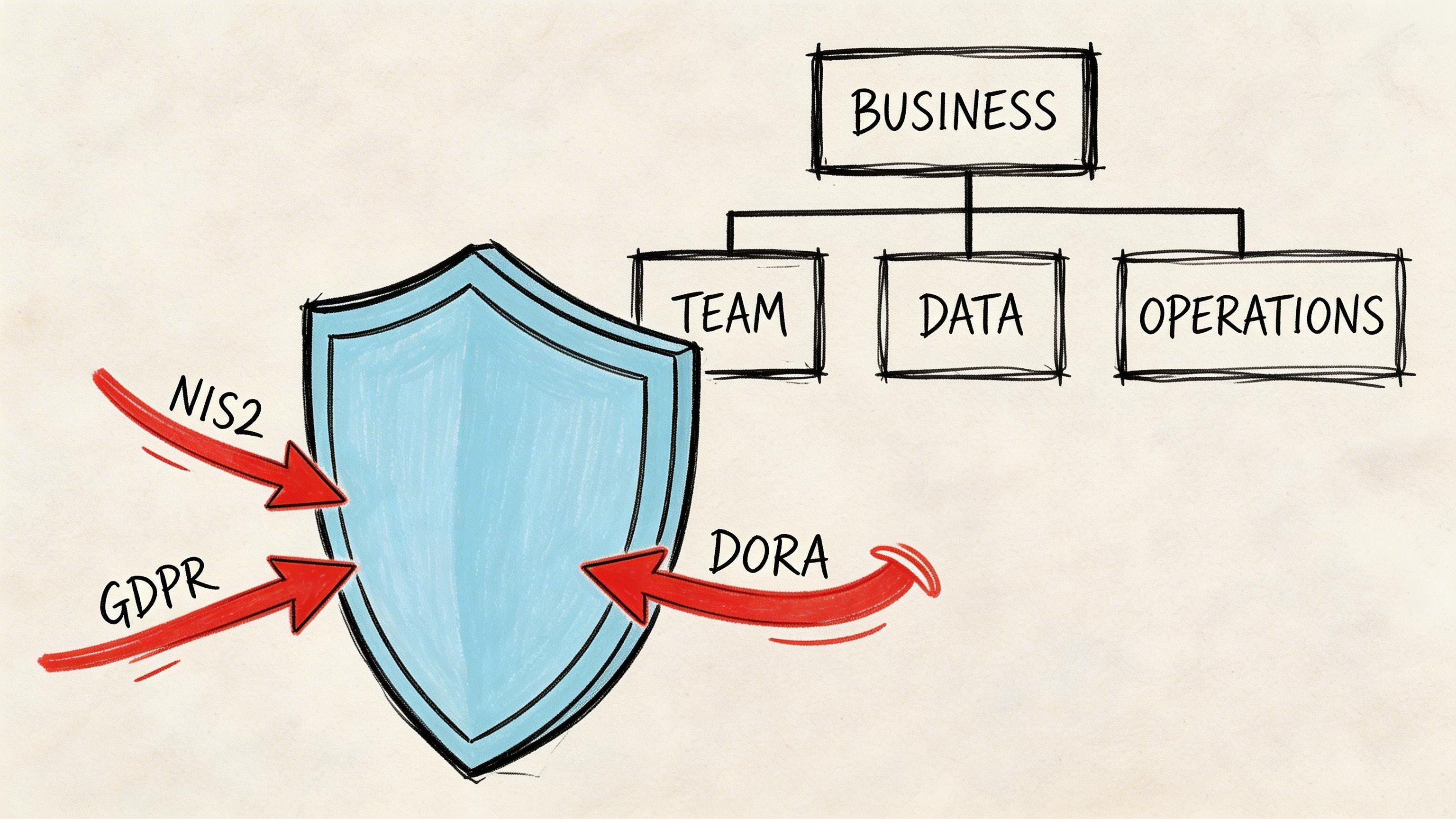

Managing Compliance Risk and Liability

Terms of service become a board-level issue when something goes wrong. An outage, security incident, failed integration, or disputed AI output turns the document from background text into a live risk instrument.

Liability caps are only the start

Many SaaS templates include a limitation of liability clause and stop there. That misses the harder question, which is what sits outside the cap.

According to JD Supra, standard liability caps in SaaS terms of service often limit provider liability to 2 to 6 months of fees, and failing to create specific carve-outs for data security breaches or IP infringement can shift up to 80% of the financial risk to the customer. The cited analysis appears at https://www.jdsupra.com/legalnews/eight-data-related-provisions-that-8864737/.

That matters because not all failures are commercially equivalent.

A minor service interruption and a confidentiality breach shouldn’t always be treated the same way. Nor should a dispute over delayed support be treated like unlawful reuse of customer IP. If the cap applies too broadly, enterprise buyers will push back. If it is drafted carelessly, the provider may absorb more exposure than intended.

Typical risk areas that need carve-outs

- Security incidents involving customer data or confidential information

- IP infringement claims tied to software, content, or generated outputs

- Wilful misconduct or gross negligence, where the parties usually expect different treatment

- Payment obligations, which usually shouldn’t disappear behind a general cap

The exact shape depends on the product and market. The key point is that the cap must reflect the specific failure modes of the service.

Terms of service and regulatory obligations

A contract won’t make GDPR, NIS2, or DORA obligations disappear. What it can do is support a defensible operating model.

For example, terms of service can:

- define customer and provider responsibilities around account security

- reserve rights to suspend unsafe behaviour or compromised integrations

- describe service boundaries so customers understand what the product does and doesn’t guarantee

- direct incident communications and escalation paths

- align service commitments with the controls you can maintain

That alignment becomes more important as software estates grow more interconnected. A SaaS platform with cloud hosting, external identity, payment processors, observability tooling, and AI providers has multiple operational dependencies. Terms that pretend the provider controls every layer create false expectations. Terms that disclaim everything create procurement friction and trust problems.

A more balanced view of legal and technical obligations in regulated environments appears in https://devisia.pro/en/blog/mezzi-di-conformita, especially for teams translating control frameworks into operational responsibilities.

Real scenarios that expose weak drafting

Consider three common cases.

Scenario one. A prolonged outage

If the terms don’t distinguish between maintenance, third-party dependency failures, and provider negligence, the customer and provider will argue from different assumptions. Support may promise one thing while legal text implies another.

Scenario two. An integration-triggered data incident

A customer connects a third-party workflow tool. Data is synced improperly. If your terms don’t define integration responsibility, access rights, and customer configuration obligations, liability gets murky quickly.

Scenario three. AI-generated content causes downstream harm

If the product’s AI output is used in decision-making, broad disclaimers won’t help much unless they are paired with clear use restrictions, review requirements, and an architecture that supports those controls.

Strong terms don’t remove risk. They make risk legible, bounded, and easier to govern.

A practical way to review liability language

Use these questions:

| Review question | Why it matters |

|---|---|

| What is the cap tied to | Fees paid under the relevant order, all fees, or something else |

| Which claims sit outside the cap | Security, confidentiality, IP, misconduct, and payment often differ |

| Are warranties realistic | Promising more than the system can support creates direct exposure |

| Does the clause fit the delivery model | Multi-tenant SaaS, managed services, and AI workflows have different risk profiles |

| Can operations support the promise | Monitoring, export, security, and incident processes must match the text |

Weak terms of service usually fail in one of two ways. They are so broad that enterprise customers won’t accept them, or so careless that they don’t protect the provider when the hard case arrives. The answer isn’t more aggressive wording. It’s tighter alignment between architecture, operations, and legal allocation of risk.

Conclusion From Document to Asset

Terms of service are often treated like the least interesting document in a software business. That’s a mistake.

For SaaS and AI products, they do real work. They define data boundaries, govern user behaviour, shape support expectations, allocate responsibility, and influence what controls engineering must build. If the document is vague, copied from the wrong product, or left unchanged while the platform evolves, it creates friction in sales, confusion in operations, and unnecessary exposure in disputes.

The most useful shift is simple. Stop thinking of terms of service as passive legal text. Treat them as part of the product architecture.

What technical leaders should do next

- Audit the current document against the actual system. Check account models, AI features, data flows, retention logic, billing rules, and termination mechanics.

- Remove broad language that hides real risk. Phrases like “product improvement”, “ongoing maintenance”, or “generated content” usually need tighter definitions.

- Map clauses to enforceable controls. If the terms promise deletion, human review, suspension rights, or restricted data use, the platform should support that operationally.

- Review liability structure with real failure modes in mind. Outages, data incidents, IP disputes, and automated decisions don’t belong in one undifferentiated bucket.

- Version and maintain the document. New regions, new AI workflows, new subprocessors, and new pricing models should trigger a review, not an afterthought.

A good terms of service document won’t make a weak product safe. But a precise one can strengthen a mature product considerably because it forces the company to state what the system does, what it doesn’t do, and how responsibility is shared when conditions are less than ideal.

That is why strong teams revisit terms of service whenever they revisit architecture. Both are expressions of the same thing. The system you run, and the risks you are prepared to own.

If your team is building or operating software where privacy, security, AI governance, and long-term maintainability all need to align, Devisia can help translate those requirements into reliable product decisions, delivery architecture, and practical compliance-ready implementation.