The search for software development companies often begins after the same pattern repeats a few times. A core workflow no longer fits the SaaS product the company bought two years ago. Teams export data into spreadsheets to finish work the system can’t handle. Product, operations, compliance, and finance all want different things from the same tool, and every workaround makes the process harder to support.

At that point, the decision isn’t really about buying code. It’s about choosing whether your business will keep adapting itself to software, or whether software will finally adapt to the business.

That choice matters more now because the market is crowded with vendors that look similar from the outside. Their websites promise speed, AI, scalability, and smooth delivery. What matters is less visible: architectural judgement, delivery discipline, security posture, privacy by design, and whether the partner can still support the system after the launch excitement is gone.

When Off-the-Shelf Software Is Not Enough

A lot of businesses don’t outgrow software in one dramatic moment. They outgrow it slowly.

A CRM needs a manual export before billing can happen. A support team logs customer issues in one system, while engineering tracks fixes in another. A dashboard shows yesterday’s numbers because no one wants to risk touching the brittle integration that feeds it. The organisation is still functioning, but every new requirement costs more time, more coordination, and more tolerance for hidden failure.

That’s where software development companies become relevant. Not as coding vendors, but as partners that can model how the business works and turn that into a system people can operate without constant improvisation.

The real gap is operational, not cosmetic

The problem usually isn’t that the existing software looks dated. It’s that the underlying workflow no longer matches how the company earns money, serves customers, or manages risk.

Mid-market companies are often the hardest hit. They don’t have enterprise budgets for heavyweight platforms, but they’ve outgrown generic SaaS. BrainSell’s analysis of underserved mid-market companies notes that cost, change management, and data migration risks are primary barriers, and that this gap is rarely discussed because most content targets either startups or large enterprises.

That observation matches what shows up in practice. Teams don’t need software with more tabs. They need fewer handoffs, clearer ownership, and systems that fit existing operations without creating a maintenance trap.

Practical rule: If your team needs a recurring spreadsheet export to complete a core process, the issue probably isn’t user training. It’s system design.

What good software partners actually do

A serious development partner translates business constraints into technical decisions. That includes questions like:

- Workflow fit: Which steps should be automated, and which should stay manual because they require judgement or approval?

- Data boundaries: Where should sensitive data live, who can access it, and what needs an audit trail?

- Long-term ownership: Can your team understand and extend the system a year from now without rewriting it?

- Integration discipline: Which systems are the source of truth, and which ones should only consume data?

These decisions define whether custom software becomes an asset or a future liability.

Off-the-shelf tools are still useful. They’re often the right answer for standard processes such as payroll, commodity ticketing, or basic internal collaboration. Custom development becomes the better choice when the business depends on a workflow, control model, or product experience that generic software can’t support cleanly.

That’s also why a company should think beyond launch-day requirements. Building software around today’s workaround usually creates tomorrow’s legacy problem. A better approach is to shape the system around stable business capabilities and keep delivery incremental. Devisia’s article on building custom software without creating future problems is useful reading if you’re trying to avoid that common mistake.

Decoding Provider Types and Engagement Models

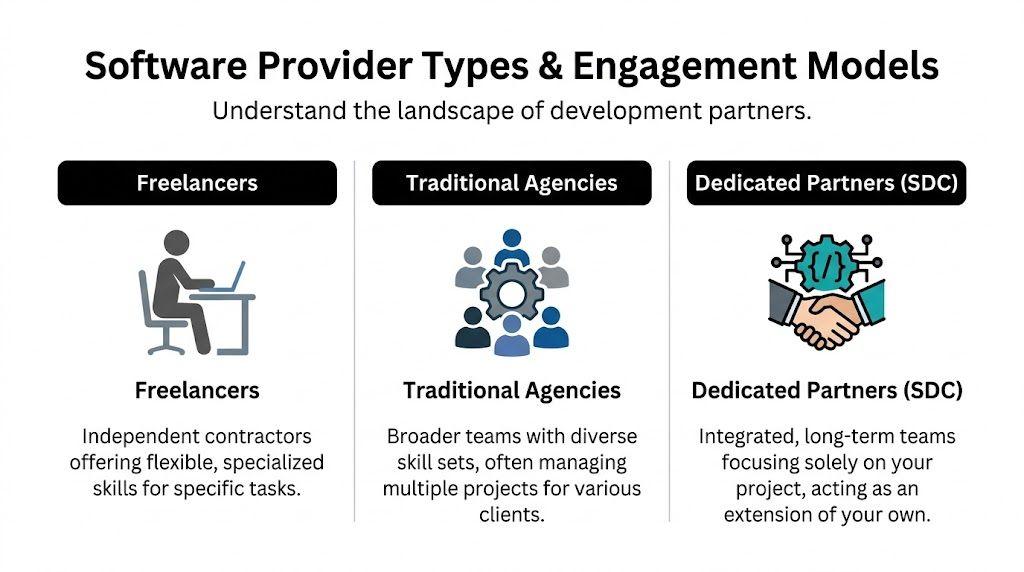

Not all software development companies solve the same problem. Some supply hands for a task. Some package design and development as a project. A smaller group acts like a long-term engineering partner and takes responsibility for delivery, architecture, and maintainability.

That difference matters because most failed engagements don’t fail on code alone. They fail on unclear ownership, weak process, and a mismatch between project uncertainty and commercial model.

Freelancers, agencies, and dedicated partners

A freelancer can be the right choice when you already have product leadership, architecture, QA, and operational ownership in-house. They’re useful for narrow tasks, specialist input, or extra delivery capacity.

The downside is concentration risk. If one contractor disappears, the context often disappears with them. That’s a serious issue when the system includes integrations, security obligations, or operational dependencies.

Traditional agencies usually provide broader coverage. You can get design, frontend, backend, and project management in one package. That works reasonably well for defined scopes such as a marketing platform, a customer portal, or an internal dashboard.

Dedicated partners are different. They’re better suited to software that evolves with the business. Instead of treating the work as a campaign with a finish line, they structure teams, backlog, architecture, and governance for ongoing delivery. That matters even more in a talent-constrained market. Entrepreneur’s coverage of the developer shortage notes a projected global shortfall of four million developers by 2025, which shifts the problem from hiring individuals to securing a reliable team with proven processes.

Engagement model matters as much as provider type

A good provider under the wrong commercial model still creates friction. The model needs to reflect how much uncertainty exists in the work.

| Model | Best For | Cost Structure | Risk Profile |
|---|---|---|---|
| Fixed Price | Well-defined builds with limited ambiguity | Predetermined budget for agreed scope | Lower budget uncertainty, higher change friction |
| Time & Materials | Evolving products, unclear scope, technical discovery | Pay for actual time spent | More flexible, needs active oversight |
| Dedicated Team | Ongoing product development or platform ownership | Monthly team cost based on agreed capacity | Best for continuity, requires internal alignment |

Fixed price works when scope is genuinely stable

Fixed price is useful for contained projects where inputs and outputs are known. Think of a narrow integration, a migration utility, or a relatively standard portal with few unknowns.

It breaks down fast when stakeholders are still discovering what they need. In those cases, vendors either absorb risk and rush quality, or they protect margin by saying every useful change is out of scope.

Time and materials is honest about uncertainty

For products with moving requirements, Time & Materials is often the cleanest arrangement. It lets the team adjust based on discovery, user feedback, or technical constraints without pretending the path was fully knowable on day one.

The trade-off is governance. If you don’t have clear priorities, acceptance criteria, and decision-makers, this model can drift.

The best Time & Materials engagements feel tightly managed, not loosely defined.

Dedicated teams suit software that won’t stop changing

If you’re building a SaaS product, replacing legacy operations, or introducing AI into core workflows, a dedicated team usually fits best. You’re buying continuity, shared context, and a system of delivery rather than isolated output.

That doesn’t mean outsourcing responsibility. It means extending your capability with a team that can carry architecture, QA, DevOps, and release discipline together. If you’re comparing this route with staff augmentation or classic outsourcing, Devisia’s guide to IT outsourcing models and trade-offs gives a practical framework.

Core Services of Modern Development Partners

A modern development partner shouldn’t just offer “web development” or “AI integration” as labels. The real question is whether they can design systems that stay operable under real business conditions: changing requirements, access control, compliance obligations, traffic spikes, poor source data, and the awkward edge cases that appear after launch.

Bespoke web applications and operational dashboards

Custom web applications are most valuable when they eliminate repeated manual coordination.

A well-designed internal platform can route approvals, expose role-based views, enforce business rules, and keep a proper audit trail. A dashboard worth paying for doesn’t just visualise data. It gives teams one place to act on it.

Good partners pay attention to the boring details that determine whether these tools help:

- Access and identity: Single sign-on, role mapping, session management, and least-privilege permissions.

- Source-of-truth discipline: Clear ownership of master data, with controlled synchronisation to connected systems.

- Operational resilience: Error handling, retry logic, background jobs, and visibility into failed tasks.

- Privacy by design: Sensitive fields minimised where possible, separated when necessary, and handled according to retention requirements.

When these basics are skipped, teams get a polished interface sitting on top of fragile logic.
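To make the operational resilience point concrete, here is a minimal sketch of retry logic with exponential backoff and a visible record of failed tasks. The names `JobRunner` and `FailedTask`, and the default attempt count and delay, are illustrative assumptions, not part of any specific framework.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class FailedTask:
    """A task that exhausted its retries, kept so operators can see it."""
    name: str
    attempts: int
    error: str


@dataclass
class JobRunner:
    max_attempts: int = 3          # illustrative default
    base_delay: float = 0.5        # seconds; doubles after each failed attempt
    sleep: Callable[[float], None] = time.sleep  # injectable for testing
    failed: List[FailedTask] = field(default_factory=list)

    def run(self, name: str, task: Callable[[], object]):
        """Run a task, retrying with exponential backoff on any exception."""
        for attempt in range(1, self.max_attempts + 1):
            try:
                return task()
            except Exception as exc:
                if attempt == self.max_attempts:
                    # Record the failure instead of swallowing it silently.
                    self.failed.append(FailedTask(name, attempt, repr(exc)))
                    return None
                self.sleep(self.base_delay * 2 ** (attempt - 1))
```

In a real system the `failed` list would more likely be a database table or a dead-letter queue with alerting attached. The design point is the same: a failed background job should be visible to someone, not lost.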

Scalable SaaS platforms

Building SaaS properly is different from assembling a web app with subscription billing. Product architecture has to account for tenant boundaries, release management, observability, supportability, and how features evolve over time.

The design choices start early. Will each tenant share infrastructure with strong logical isolation, or do some customers need stricter separation? How are permissions modelled? How do you introduce new plans and entitlements without hardcoding exceptions? Can the team release weekly without breaking customer-specific configurations?

These are the questions that separate maintainable platforms from expensive rewrites.
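One way to avoid hardcoded exceptions is to treat plans and entitlements as data rather than as conditionals scattered through the codebase. The sketch below assumes hypothetical plan names, feature names, and a `Tenant` type; a production system would usually load these from a database or configuration service.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet

# Hypothetical plan catalogue: adding a plan means adding a row, not an if-statement.
PLANS: Dict[str, FrozenSet[str]] = {
    "starter": frozenset({"dashboard"}),
    "business": frozenset({"dashboard", "audit_log"}),
    "enterprise": frozenset({"dashboard", "audit_log", "sso"}),
}


@dataclass(frozen=True)
class Tenant:
    tenant_id: str
    plan: str
    # Customer-specific additions are stored as data on the tenant,
    # so they stay auditable instead of hiding in code branches.
    extra_features: FrozenSet[str] = frozenset()


def has_feature(tenant: Tenant, feature: str) -> bool:
    """Return True if the tenant's plan or explicit grants include the feature."""
    return feature in PLANS[tenant.plan] or feature in tenant.extra_features
```

For example, `has_feature(Tenant("t1", "starter"), "sso")` is false, while a starter tenant created with `extra_features=frozenset({"sso"})` gets the feature without any change to application code.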

One sensible place to compare service scope is Devisia’s custom software and AI delivery services, which outline a product-minded approach to bespoke applications, SaaS platforms, dashboards, and AI-enabled systems. That kind of framing is useful because it treats architecture, implementation, and ongoing maintenance as one continuous responsibility.

AI and LLM integration

AI is no longer a niche add-on for software development companies. Clutch’s 2025 software development research reports that 84% of developers are using or planning to use AI tools, and 71% of organisations use generative AI. That changes client expectations. A modern partner should understand how to integrate LLMs, embeddings, and AI workflows as part of normal product delivery.

But practical AI work is not “call an API and hope for the best”.

A reliable implementation usually needs several controls working together:

- Prompt and orchestration design: Separate task instructions, domain context, and tool access instead of putting everything in one giant prompt.

- Guardrails: Constrain outputs, validate formats, and block unsafe actions.

- Human-in-the-loop controls: Route uncertain, high-impact, or regulated decisions to a person.

- Observability: Log prompts, tool calls, latencies, failures, and model outputs appropriately for debugging and governance.

- Fallback strategy: Decide what the product should do when a model times out, returns low-confidence output, or exceeds cost thresholds.

AI features fail in production when teams optimise for demo quality and ignore control flow, data quality, and operational accountability.
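The guardrail, human-in-the-loop, and fallback controls above can be sketched as a single validation step that sits between the model and any action. This example assumes the model was asked to return JSON with `category` and `confidence` fields; the allowed categories, the `0.8` threshold, and the route names are all illustrative assumptions.

```python
import json
from dataclasses import dataclass
from typing import Optional

ALLOWED_CATEGORIES = {"billing", "technical", "account"}  # illustrative
REVIEW_THRESHOLD = 0.8  # below this, a person confirms the result


@dataclass
class Decision:
    route: str  # "auto", "human_review", or "fallback"
    category: Optional[str] = None


def triage(raw_output: Optional[str]) -> Decision:
    """Validate raw model output and decide how the product should proceed."""
    if raw_output is None:  # e.g. the model call timed out
        return Decision("fallback")
    try:
        data = json.loads(raw_output)
        category = data["category"]
        confidence = float(data["confidence"])
    except (ValueError, KeyError, TypeError):
        return Decision("fallback")  # malformed output goes to a deterministic path
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        return Decision("fallback")  # the model invented a value outside the contract
    if not 0.0 <= confidence <= 1.0:
        return Decision("fallback")  # also rejects NaN
    if confidence < REVIEW_THRESHOLD:
        return Decision("human_review", category)
    return Decision("auto", category)
```

The function never calls a model itself, which keeps it easy to test. Whatever the model returns, only three outcomes are possible, and two of them do not depend on the model being right.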

This is especially important in regulated environments. If a system processes personal data, generates customer-facing decisions, or assists staff in sensitive workflows, privacy and compliance can’t sit in a late-stage review. They need to shape architecture from the start.

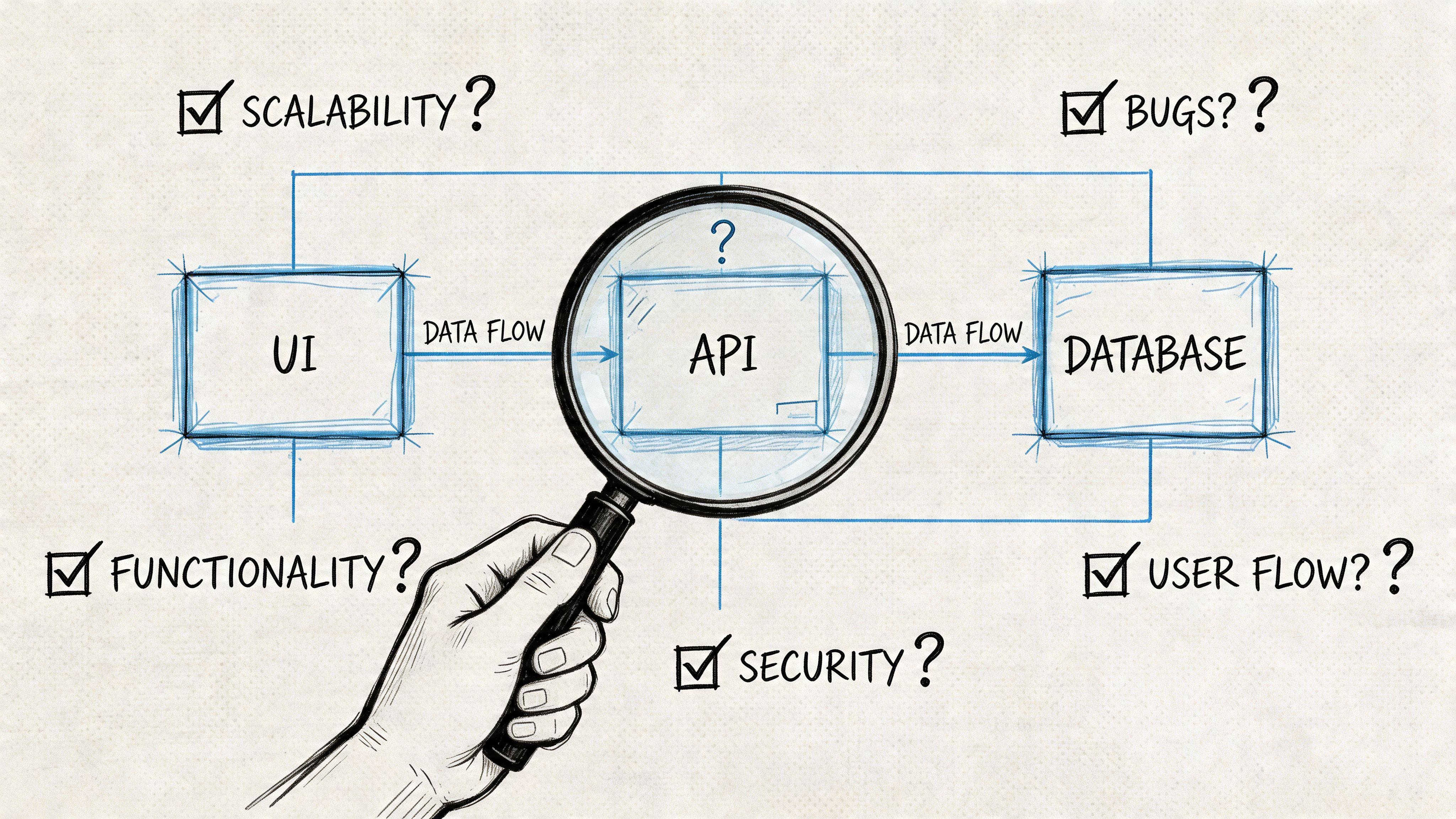

The Vetting Process: How to Evaluate Potential Partners

Most buyer mistakes happen before the contract is signed. Teams evaluate portfolios, design polish, and hourly rates, but skip the questions that reveal how the partner builds software.

That’s risky because a weak process can look efficient in a proposal. The problems usually appear later, when releases slow down, defects recur, or no one can explain why a system behaves the way it does.

Look for process maturity, not just technical vocabulary

Any vendor can list React, Node.js, Python, Kubernetes, or OpenAI on a sales page. That tells you almost nothing.

A stronger signal is delivery maturity. Wildnet Edge’s analysis of firms with mature processes notes that top-tier software firms with CMMI Level 3 appraisal can reduce deployment risks by up to 40% and achieve 25-30% faster time-to-market for complex AI and ML projects compared with non-appraised firms. Even if you’re not selecting on certification alone, the underlying message is practical: repeatable engineering process reduces avoidable risk.

Ask for specifics on how they ship software:

- Testing strategy: What is covered by unit, integration, and end-to-end tests?

- Release process: How do they promote code across environments and handle rollback?

- Code review discipline: Who reviews what, and how are architectural decisions challenged?

- Operational readiness: What monitoring, logging, and alerting are standard?

- Security hygiene: How are secrets managed, dependencies reviewed, and access rights controlled?

If the answers stay abstract, assume the process is weaker than advertised.

Evaluate architecture through trade-offs

You don’t need vendors to agree on every stack choice. You do need them to explain trade-offs clearly.

A credible partner should be able to discuss why they’d choose a modular monolith over microservices for an early-stage product, or when they’d reverse that decision. They should be able to explain how they’d isolate sensitive data, manage asynchronous workflows, or structure a multi-tenant SaaS permission model without hand-waving.

Useful questions include:

- How do you prevent technical debt from accumulating during fast delivery?

- What happens if one of your senior engineers leaves mid-project?

- How do you handle scope changes without turning every discussion into a commercial dispute?

- How would you document the architecture so an internal team can take it over later?

- What do you consider a reason not to use AI in a workflow?

The last question is especially revealing. Mature teams know where deterministic logic is safer than generative output.

Privacy, security, and compliance need concrete answers

If your system touches customer data, financial operations, or internal business controls, ask about privacy by design, GDPR, NIS2, and DORA in operational terms.

A capable partner should talk about data minimisation, retention boundaries, auditability, access control, incident handling, and environment separation. They should also explain how these requirements affect architecture, delivery speed, and cost.

What you want to hear is not “we’re compliant”. What you want to hear is how they design systems so compliance requirements are easier to meet and easier to prove.
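As a small illustration of compliance that is easier to prove, retention rules can live in one declared policy that code enforces and auditors can read. The record types and periods below are made-up example values, not recommendations, and `is_expired` is a hypothetical helper.

```python
from datetime import date, timedelta
from typing import Dict

# Illustrative values only; real periods come from legal and business requirements.
RETENTION_DAYS: Dict[str, int] = {
    "support_ticket": 365,
    "access_log": 90,
}


def is_expired(record_type: str, created: date, today: date) -> bool:
    """Return True if a record has outlived its declared retention period."""
    if record_type not in RETENTION_DAYS:
        # Fail loudly: data without a retention rule is a design gap.
        raise KeyError(f"no retention rule defined for {record_type!r}")
    return today - created > timedelta(days=RETENTION_DAYS[record_type])
```

Raising on an unknown record type is a deliberate choice in this sketch. It forces every new kind of data to get a retention decision before it can be stored and forgotten.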

Red flags that should slow you down

Some warning signs show up consistently:

- Evasive ownership: They can describe what developers do, but not who owns architecture, QA, release quality, or security review.

- Speed-first promises: They emphasise how fast they can start, but not how they prevent rework.

- No clear handover model: They assume you’ll stay dependent on them, or they have no documentation standard.

- Shallow discovery: They jump to implementation before understanding workflows, constraints, or existing systems.

- AI theatre: They position AI as mandatory everywhere, instead of explaining where it’s useful and where it introduces risk.

If a vendor can’t explain how they recover from mistakes, they probably haven’t built a delivery process that expects reality.

Understanding Project Costs and Timelines

The first quote you receive for a software project is usually less informative than it looks.

Buyers often compare providers as if they’re pricing the same thing. They usually aren’t. One quote may assume discovery is complete, integrations are straightforward, and security requirements are standard. Another may include architecture work, QA automation, deployment setup, and operational safeguards. The cheaper number can hide the more expensive outcome.

What actually drives cost

Cost is shaped by uncertainty as much as by feature count.

A workflow with several third-party integrations is more expensive to build than a standalone interface, even if the screen count looks similar. A platform with role-based access, audit requirements, and data retention constraints will cost more than a visually comparable internal tool. AI features add another layer because you’re paying not only for user experience, but also for orchestration, evaluation, fallback behaviour, and monitoring.

The same logic applies to data work. InData Labs’ review of mature data engineering practices notes that DataOps and data-mesh approaches can prevent cascading failures that cost enterprises over $100K in downtime. That’s why data architecture isn’t a side concern. If your product depends on analytics, automation, or AI, poor data foundations create expensive instability later.

Why timelines slip

Timelines usually stretch for one of four reasons:

- Requirements keep changing: Not because stakeholders are careless, but because they learn from seeing the product.

- Legacy systems are messier than expected: The integration point in the diagram rarely matches the actual system behaviour.

- Decision-making is slow: Teams wait for approvals on scope, UX, legal review, or security exceptions.

- Quality work was omitted from the estimate: Testing, migration rehearsal, observability, and deployment hardening often get under-scoped.

A more realistic way to estimate is to define a narrow first release with clear acceptance criteria, then sequence later capabilities behind it.

A practical way to discuss timeline ranges

For planning purposes, think in release shapes rather than promises.

A proof of concept is meant to answer a technical or product question. It shouldn’t carry production expectations. An MVP should support a real workflow for a limited audience with sufficient stability to learn from live use. A broader production release needs stronger non-functional work such as security controls, support tooling, logging, backup strategy, and controlled rollout.

Cheap software is often just expensive software with the first invoice reduced.

The useful conversation with software development companies is not “How fast can you build this?” It’s “What level of reliability, change tolerance, and operational readiness are we buying in each phase?”

Sample Workflows and Case Study Insights

The best way to assess a partner is to imagine the work in motion. Not as a sales deck, but as a sequence of decisions under constraint.

Startup journey from idea to validated MVP

A founder has a credible product concept, but the feature set is still fluid. The wrong move is to commission a full platform immediately.

A better workflow starts with focused discovery. The team identifies the core user action, maps the smallest end-to-end journey that proves value, and designs only the flows needed to support that journey. Architecture stays intentionally modest. A modular monolith, straightforward hosting, and a narrow data model are usually enough.

Delivery then moves in short increments:

- Problem framing: Clarify user, workflow, and success criteria.

- Prototype and validation: Test assumptions before building deep infrastructure.

- MVP implementation: Build only what is required for a controlled live release.

- Usage review: Observe what users do, then re-order the roadmap.

This approach works because it preserves optionality. The startup learns without committing to unnecessary complexity.

SME modernisation and legacy replacement

An established company has a legacy internal system that staff understand but no one wants to maintain. Data quality is uneven. Reporting is inconsistent. The business can’t tolerate a hard cutover.

The right pattern is phased replacement. Start by mapping the existing process, especially the hidden manual steps users never mention in initial interviews. Then define a target workflow and decide which parts can move first without breaking operations.

A sensible sequence often looks like this:

- Parallel analysis: Understand old data structures, business rules, and exception handling.

- Incremental replacement: Introduce new modules around the old core instead of replacing everything at once.

- Migration rehearsal: Test imports, reconciliation, and rollback before the final move.

- Controlled rollout: Move one team or business unit first, then widen adoption once edge cases are understood.

A provider that pushes for a “big bang” replacement without strong operational reasons is increasing risk, not reducing it.
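The migration rehearsal step depends on reconciliation: checking that what arrived in the new system matches what left the old one. A minimal sketch, assuming both datasets can be loaded as dictionaries keyed by a shared record ID (the `reconcile` function and its field names are hypothetical):

```python
from typing import Dict, List, NamedTuple


class Reconciliation(NamedTuple):
    missing: List[str]      # in the legacy system, absent from the new one
    unexpected: List[str]   # in the new system, absent from the legacy one
    mismatched: List[str]   # present in both, but field values differ


def reconcile(legacy: Dict[str, dict], migrated: Dict[str, dict]) -> Reconciliation:
    """Compare two record sets by ID and report every discrepancy."""
    missing = sorted(legacy.keys() - migrated.keys())
    unexpected = sorted(migrated.keys() - legacy.keys())
    mismatched = sorted(
        key for key in legacy.keys() & migrated.keys()
        if legacy[key] != migrated[key]
    )
    return Reconciliation(missing, unexpected, mismatched)
```

A rehearsal passes only when all three lists are empty, or when every remaining entry has an agreed explanation. Large datasets would need this done in batches or in the database, but the three categories of discrepancy stay the same.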

Enterprise AI integration into a core process

An enterprise wants to automate part of a high-volume workflow such as document triage, support classification, or internal knowledge retrieval. The business case is real, but so are the control requirements.

The workflow should begin with boundaries, not models. Define what the AI is allowed to do, what it may suggest, and what always requires human confirmation. Then design retrieval, prompt structure, validation rules, and logging so the system is explainable enough to operate.

A robust implementation usually includes:

- Task decomposition: Split retrieval, reasoning, formatting, and action execution into separate stages.

- Human review gates: Keep approval in place for high-risk or ambiguous outcomes.

- Fallback behaviour: Route failures or uncertainty to deterministic paths.

- Monitoring and refinement: Review outputs, failure cases, and drift in live conditions.

The strongest AI systems in business operations don’t pretend to be autonomous. They’re designed to be supervised.

These examples differ in scale, but the pattern is consistent. Good software development companies reduce uncertainty early, make architectural commitments gradually, and protect the business from fragile shortcuts.

Your Actionable Checklist for Selecting a Partner

Choosing among software development companies gets easier when you reduce the decision to verifiable signals. A strong partner doesn’t need perfect answers to every question. They do need coherent answers that connect product goals, engineering practice, and operational reality.

Technical and architectural due diligence

- Architecture fit: Can they explain why the proposed architecture suits your current stage, not just your aspirational future state?

- Maintainability plan: Do they describe code organisation, documentation, testing boundaries, and how future changes will be made safely?

- Integration thinking: Have they identified source systems, failure modes, and operational dependencies early?

Process and communication clarity

- Delivery rhythm: Do they have a clear cadence for planning, demos, QA, releases, and issue escalation?

- Decision ownership: Is it obvious who owns product decisions, technical direction, and acceptance of completed work?

- Scope handling: Can they explain how changes are assessed, prioritised, and costed without creating constant conflict?

Commercial and contractual alignment

- Model fit: Does the engagement model match the uncertainty level of the project?

- Visibility: Will you get transparent reporting on progress, risks, and budget burn?

- Exit readiness: Can your team take over the codebase, infrastructure, and documentation if needed?

Security and compliance posture

- Privacy by design: Do they talk about data minimisation, access boundaries, and retention as design choices?

- Operational security: Can they explain secrets handling, environment separation, audit logging, and incident response expectations?

- Regulatory awareness: Do they understand how requirements such as GDPR, NIS2, or DORA affect architecture and operations?

A final test is simple. Ask the partner what could go wrong in your project. The better answer is rarely comforting, but it is usually specific. That’s the partner you can work with.

If you’re assessing software development companies and want a technical second opinion, Devisia helps teams evaluate product scope, architecture, AI fit, privacy implications, and delivery trade-offs before expensive mistakes are locked in.