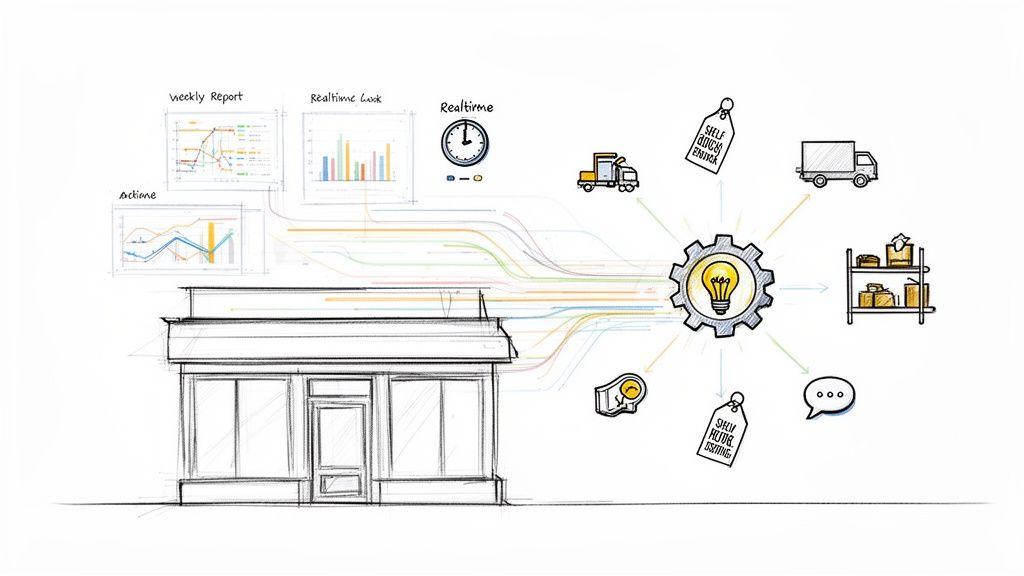

Integrating “Retail AI” is not about purchasing a single, futuristic product. It is about engineering a collection of specialized software systems to solve specific business problems, from mitigating inventory waste to personalizing customer interactions. These systems are not magic; they are decision-making engines designed to convert slow, manual data analysis into real-time operational actions that drive efficiency and revenue.

For founders, CTOs, and product leaders, the opportunity lies in treating retail artificial intelligence as a core capability built into the business architecture, not as an isolated experiment. This shift in mindset is critical for moving from reactive analysis of historical sales reports to proactive, data-driven actions, such as a system flagging a potential stockout at a specific store this afternoon. The objective is to augment human decisions with computational speed and precision.

The Problem: Moving Beyond Hype to Practical Implementation

Many retail businesses struggle with operational inefficiencies and undifferentiated customer experiences. The problem is not a lack of data, but a failure to process it at a speed and scale that can inform immediate action. Traditional methods rely on manual analysis, which is slow, error-prone, and incapable of handling the complexity of modern retail operations. This leads to persistent issues that erode margins and customer loyalty.

From Manual Analysis to Automated Action

The primary function of an AI system in retail is to automate the process of turning vast datasets—customer behavior, inventory levels, supply chain logistics, market trends—into actionable outputs. This capability is why the global AI retail market, valued at USD 3.2 billion in 2023, is projected to reach USD 13.86 billion by 2026, reflecting a compound annual growth rate of approximately 52%. With 88% of executives planning to increase AI spending, the focus is on compressing decision-making cycles from weeks to minutes.

At its core, implementing retail AI is an architectural choice. It prioritizes the construction of scalable, maintainable, and governable systems for data-driven operations over reliance on intuition-based reactions. This engineering approach makes the business more resilient and responsive.

Core Problems Solved by Retail AI Systems

A pragmatic approach to AI implementation focuses on solving persistent business challenges rather than adopting technology for its own sake. This lens makes the value tangible and provides a clear path to generating ROI.

Key problems addressed by well-architected AI systems include:

- Impersonal Customer Journeys: Rule-based recommendation engines often fail because they cannot account for individual user intent. AI systems can analyze browsing history, purchase data, and semantic context to deliver relevant product recommendations.

- Inventory Inefficiency: Traditional forecasting leads to stockouts and overstock. Machine learning models can predict demand with greater accuracy by analyzing multiple variables, reducing both waste and lost sales.

- Operational Blind Spots: Physical store operations are often a black box. Computer vision can automate the monitoring of shelf availability or analyze foot traffic patterns, providing quantitative data to optimize store layouts and staff allocation.

Focusing on these concrete issues is the foundation for a successful digital transformation in retail. It enables technical leaders to build systems that solve current problems while being architecturally prepared for future requirements.

Matching AI Solutions to Core Retail Challenges

Effective implementation of retail AI requires mapping specific business challenges to the appropriate technical capabilities. This approach demystifies the technology stack and clarifies the path from problem to solution, which is essential for founders and CTOs making strategic architectural decisions. The goal is to understand precisely which AI tools solve which problems and how they are engineered.

Here is a breakdown of common applications.

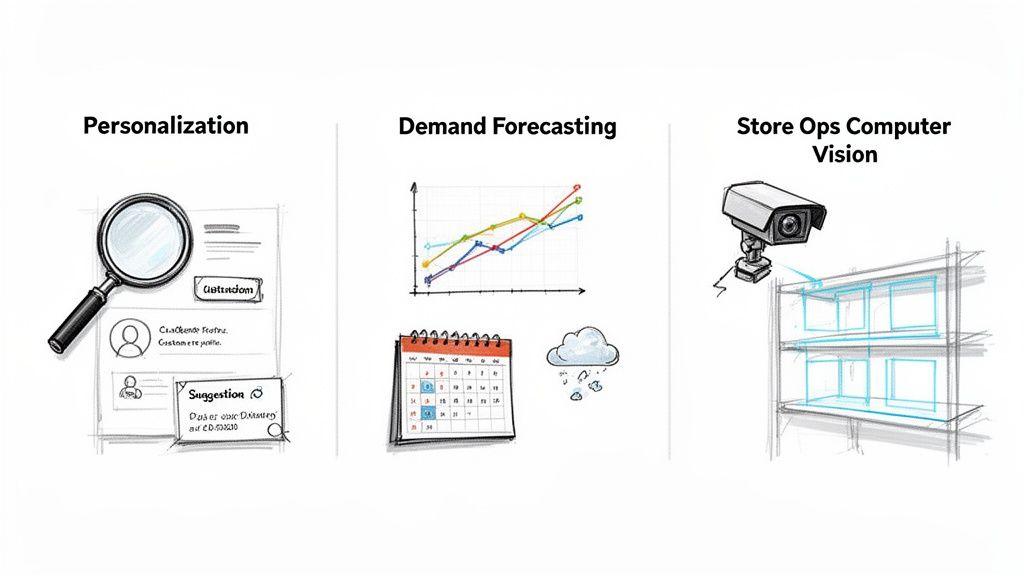

Enhancing Personalization with LLMs and Embeddings

Problem: Generic, rule-based product recommendations frustrate customers and reduce conversion rates. These systems lack an understanding of user intent and the semantic relationships between products.

Solution: Modern retail artificial intelligence systems use Large Language Models (LLMs) and embeddings to understand nuance and intent.

- Embeddings: These are numerical vector representations of unstructured data like product descriptions, images, or user browsing paths. They enable the system to grasp “semantic similarity.” For example, the model learns that a “lightweight jacket for spring” is conceptually closer to a “windbreaker” than to a “winter parka,” even if the keywords don’t match. This allows for more contextually relevant recommendations.

- LLMs: These models excel at interpreting natural language queries. A customer can type “outfit for a summer dinner party,” and an LLM can translate that ambiguous request into a structured query that the product catalog can process. It can also parse complex, multi-faceted requests like “durable, waterproof hiking boots for wide feet under £200” into a precise set of filters.

This combination shifts the recommendation engine from suggesting what other people bought to what this specific user is most likely to want.
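To make "semantic similarity" concrete, here is a minimal sketch of ranking products by cosine similarity between embedding vectors. The three-dimensional vectors and product names are hand-written assumptions for illustration only; in a real system the vectors would come from an embedding model and have hundreds of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; values near 1.0 mean 'very similar'."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical toy embeddings. The dimensions loosely stand for
# "lightness", "warmth", and "weather protection".
catalog = {
    "windbreaker": [0.9, 0.2, 0.6],
    "winter parka": [0.1, 0.95, 0.7],
    "rain poncho": [0.8, 0.1, 0.9],
}

# Stand-in for the embedding of the query "lightweight jacket for spring".
query = [0.85, 0.3, 0.5]


def rank(query_vec: list[float], items: dict[str, list[float]]) -> list[str]:
    """Return product names ordered from most to least similar to the query."""
    return sorted(
        items,
        key=lambda name: cosine_similarity(query_vec, items[name]),
        reverse=True,
    )


ranked = rank(query, catalog)
print(ranked)  # the windbreaker ranks first, the winter parka last
```

No keyword in the query matches "windbreaker", yet it ranks first because its vector points in nearly the same direction as the query's.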

Optimizing Inventory with Predictive Forecasting

Problem: Traditional inventory management is a high-stakes balancing act. Overstocking ties up capital, while understocking leads to lost sales and customer dissatisfaction. Forecasting based on historical sales alone is insufficient to handle market volatility.

Solution: Machine learning (ML) models can generate more accurate demand forecasts by analyzing dozens of variables simultaneously.

The key architectural shift is from reactive ordering to predictive replenishment: stock is ordered against anticipated demand instead of after a shortfall appears. This directly impacts the bottom line by minimizing both waste and missed opportunities.

A well-architected forecasting system synthesizes data from multiple sources:

- Historical Sales Data: Analyzing trends and seasonality down to the individual SKU level.

- External Factors: Integrating real-world signals like weather forecasts, local events, or social media trends.

- Promotional Schedules: Factoring in how planned discounts or marketing campaigns will influence demand for specific items.

This multi-faceted analysis enables the precise placement of products across the retail network, optimizing stock levels system-wide.
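As a rough illustration of how those three sources come together, the sketch below assembles one model-input row per SKU and day. The field names, the 7-day and 4-week windows, and the input shapes are all assumptions made for this example; the forecasting model that would consume the rows is out of scope.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class FeatureRow:
    sku: str
    day: date
    units_last_7_days: int    # historical sales signal
    same_weekday_avg: float   # simple seasonality signal (last 4 weeks)
    forecast_temp_c: float    # external factor
    on_promotion: bool        # promotional schedule


def build_feature_row(sku, day, daily_sales, temp_forecast, promo_days):
    """Join sales history, a weather forecast, and the promo calendar into one row.

    daily_sales:   dict mapping (sku, date) -> units sold
    temp_forecast: dict mapping date -> forecast temperature in Celsius
    promo_days:    set of (sku, date) pairs with a planned promotion
    """
    last_week = sum(
        daily_sales.get((sku, day - timedelta(days=n)), 0) for n in range(1, 8)
    )
    same_weekday = [
        daily_sales.get((sku, day - timedelta(weeks=w)), 0) for w in range(1, 5)
    ]
    return FeatureRow(
        sku=sku,
        day=day,
        units_last_7_days=last_week,
        same_weekday_avg=sum(same_weekday) / len(same_weekday),
        forecast_temp_c=temp_forecast[day],
        on_promotion=(sku, day) in promo_days,
    )


# Synthetic history: 10 units on most days, 20 on the target's weekday.
target = date(2024, 6, 29)
history = {}
for n in range(1, 29):
    d = target - timedelta(days=n)
    history[("SKU-1", d)] = 20 if d.weekday() == target.weekday() else 10

row = build_feature_row(
    "SKU-1", target, history, {target: 24.5}, {("SKU-1", target)}
)
print(row)
```

Rows like this can be fed to whichever regression or time-series model the team chooses. The point of the sketch is that each data source becomes an explicit, testable column.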

Improving Store Operations with Computer Vision

Problem: For brick-and-mortar retailers, the physical store environment is often a data-poor black box. Monitoring shelf availability, customer flow, and queue lengths is typically a manual, slow, and expensive process.

Solution: Computer vision automates in-store monitoring and analysis. By connecting AI models to existing in-store cameras, retailers can gain real-time operational intelligence. A fine-tuned vision model can be trained to detect empty shelves, identify misplaced products, or recognize long checkout queues, triggering automated alerts to staff via inventory and management APIs.
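The alerting step can be sketched independently of the vision model. The example below assumes a hypothetical upstream model that emits a fill ratio (0.0 for empty, 1.0 for full) per shelf for each camera frame. The threshold and the three-frame rule are illustrative assumptions, chosen so that a shopper briefly blocking the camera does not trigger a restock alert.

```python
from collections import defaultdict
from typing import Optional


class ShelfMonitor:
    """Turns per-frame shelf fill estimates into de-duplicated restock alerts."""

    def __init__(self, fill_threshold: float = 0.2, frames_required: int = 3) -> None:
        self.fill_threshold = fill_threshold
        self.frames_required = frames_required
        self._low_streak = defaultdict(int)  # consecutive low-fill frames per shelf
        self._alerted = set()                # shelves with an open alert

    def observe(self, shelf_id: str, fill_ratio: float) -> Optional[str]:
        """Record one observation; return an alert message or None."""
        if fill_ratio >= self.fill_threshold:
            # Shelf looks stocked again: reset state so a future gap re-alerts.
            self._low_streak[shelf_id] = 0
            self._alerted.discard(shelf_id)
            return None

        self._low_streak[shelf_id] += 1
        sustained = self._low_streak[shelf_id] >= self.frames_required
        if sustained and shelf_id not in self._alerted:
            self._alerted.add(shelf_id)
            return f"Restock needed: shelf {shelf_id} at {fill_ratio:.0%} fill"
        return None


monitor = ShelfMonitor()
frames = [0.1, 0.1, 0.1, 0.1, 0.8, 0.1]
alerts = [monitor.observe("A1", ratio) for ratio in frames]
print(alerts)  # only the third frame produces an alert
```

In production, the returned message would be posted to a staff-facing task or inventory API instead of printed.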

AI adoption is no longer a niche strategy. Up to 90% of retailers are implementing AI, driven by customer expectations—71% of shoppers now anticipate AI as part of their experience. The trend is accelerating, with Adobe reporting a 1,950% year-over-year increase in retail traffic from chat interactions during Cyber Monday 2024. You can explore more data on these retail technology trends.

Architecting for Scalability, Maintenance, and Security

Moving retail artificial intelligence from a proof-of-concept to a core business capability depends entirely on its architecture. A model that performs well in a development environment is useless if it cannot be reliably and securely integrated into production systems.

For CTOs and engineering leaders, the challenge is not just building a model but designing a system that is maintainable, scalable, and cost-effective. This requires proven architectural patterns that connect AI services with a complex web of existing systems, including Enterprise Resource Planning (ERP), Product Information Management (PIM), and Point of Sale (POS).

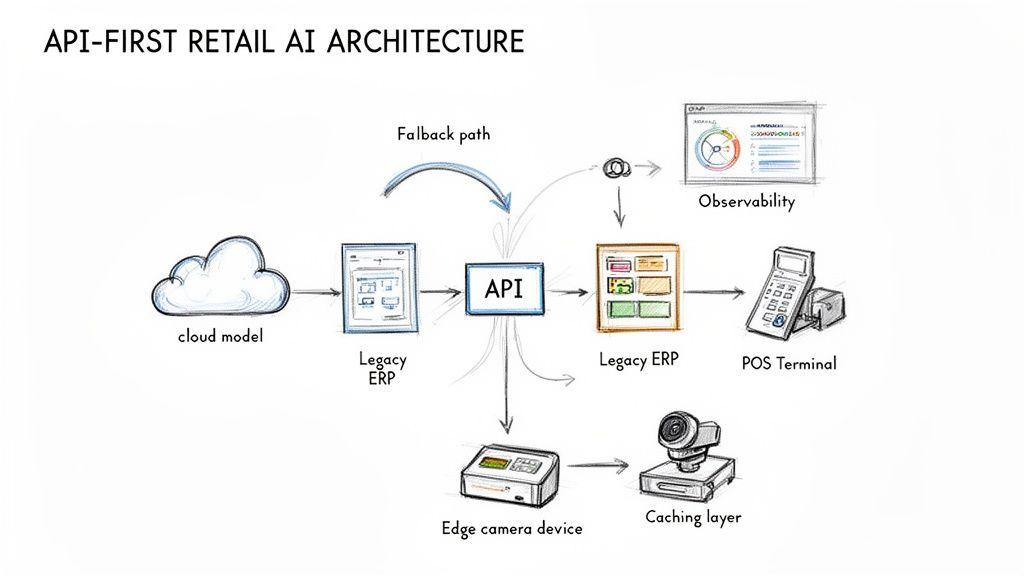

An API-First Integration Strategy

The most robust pattern for integrating AI into a retail environment is an API-first approach. This strategy treats AI capabilities—whether custom-built models or services from providers like OpenAI—as modular, independent services consumed via well-defined APIs.

This architectural choice provides several advantages:

- Decoupling: Core systems (e.g., e-commerce platform, inventory software) are not hardwired to a specific AI model. This allows for updating, replacing, or A/B testing different models without re-engineering the entire backend.

- Interoperability: It provides a standard interface for modern AI tools to communicate with legacy monolithic systems. An API gateway can act as a translator, converting a request from a legacy ERP into a format a cloud-based LLM can process.

- Controlled Access: APIs serve as a natural chokepoint for implementing security, authentication, and rate-limiting. This ensures services are used correctly and helps manage operational costs.

This approach treats AI as just another service within a modern, microservices-oriented architecture—a familiar and manageable paradigm for engineering teams.

An API-first design is fundamental to operationalizing AI in retail. It transforms a model from a standalone asset into a reusable, governable component that can be securely integrated across multiple business functions, from customer-facing apps to back-office workflows.
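The decoupling benefit can be shown in a few lines. In this sketch, the calling code depends only on a small interface, so a rule-based implementation and a model-backed one are interchangeable. The class names and the in-memory score table are hypothetical; a real model-backed implementation would call a model-serving endpoint behind the same method.

```python
from typing import Protocol


class Recommender(Protocol):
    """The contract consumers depend on, regardless of what sits behind it."""

    def recommend(self, customer_id: str, limit: int) -> list[str]:
        ...


class BestSellerRecommender:
    """Rule-based implementation: everyone gets the same best sellers."""

    def __init__(self, best_sellers: list[str]) -> None:
        self._best_sellers = best_sellers

    def recommend(self, customer_id: str, limit: int) -> list[str]:
        return self._best_sellers[:limit]


class ScoredRecommender:
    """Stand-in for a model-backed service; scores are precomputed here."""

    def __init__(self, scores_by_customer: dict[str, dict[str, float]]) -> None:
        self._scores = scores_by_customer

    def recommend(self, customer_id: str, limit: int) -> list[str]:
        scores = self._scores.get(customer_id, {})
        return sorted(scores, key=scores.get, reverse=True)[:limit]


def homepage_products(recommender: Recommender, customer_id: str) -> list[str]:
    """Storefront code: knows the interface, not the implementation."""
    return recommender.recommend(customer_id, limit=2)


rule_based = BestSellerRecommender(["tote bag", "white tee", "sneakers"])
model_backed = ScoredRecommender(
    {"c-42": {"rain jacket": 0.91, "white tee": 0.40, "hiking boots": 0.77}}
)

print(homepage_products(rule_based, "c-42"))    # ['tote bag', 'white tee']
print(homepage_products(model_backed, "c-42"))  # ['rain jacket', 'hiking boots']
```

Because `homepage_products` never names a concrete class, swapping or A/B testing models becomes a configuration decision instead of a backend rewrite.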

The Trade-Off: Real-Time Inference vs. Batch Processing

Not all retail AI tasks have the same performance requirements. A common architectural mistake is applying a one-size-fits-all processing model, leading to either excessive costs or poor user experience. The key decision is choosing between real-time inference and batch processing.

Real-time inference is required for customer-facing applications where low latency is critical.

- Use Cases: Personalized product recommendations, dynamic pricing, chatbot interactions.

- Architectural Needs: Low-latency APIs, GPU-accelerated endpoints, and potentially edge computing for in-store scenarios. The infrastructure must be provisioned for peak demand.

Batch processing is more suitable and cost-effective for back-office operations where decisions are not as time-sensitive.

- Use Cases: Demand forecasting, bulk generation of product descriptions, customer segmentation analysis.

- Architectural Needs: These jobs can be scheduled during off-peak hours using CPU-based instances. The focus is on throughput and efficiency, not immediate response time.

Selecting the right processing model for each use case is a critical trade-off. Attempting to run a massive forecasting model in real time is prohibitively expensive, while forcing a customer to wait minutes for a product recommendation will destroy conversion rates.

Designing for Resilience, Governance, and Cost Control

AI systems, particularly those dependent on third-party APIs, can fail. An API may become unavailable, a model might return an unexpected result, or latency could spike. A resilient architecture anticipates these failures and includes mechanisms to handle them gracefully. A naive implementation that ignores governance and security is not just technical debt—it’s a business liability.

Key non-functional requirements include:

- Robust Observability: You cannot manage what you do not measure. The system requires comprehensive logging and monitoring to track model performance (accuracy, drift), operational metrics (latency, error rates), and cost. Dashboards that correlate API calls to specific business functions are essential for financial governance.

- Strategic Caching: Many AI-driven queries are repetitive. Caching responses to common requests—such as recommendations for a popular product or a summary of its reviews—dramatically reduces API calls, latency, and operational costs.

- Resilient Fallbacks: When an AI-powered service fails, the system must degrade gracefully, not crash. For example, if an AI-powered search goes offline, the system should automatically revert to a simpler, rule-based search algorithm.

- Privacy by Design: This principle mandates that privacy is an architectural choice, not a bolt-on feature. Techniques like data anonymization (stripping identifiers before processing) and data minimization (collecting only necessary data) are fundamental for compliance with regulations like GDPR. Building on a sound first-party data strategy is crucial.

- AI-Specific Security: The models themselves are assets that can be attacked. Your architecture must defend against threats like model inversion attacks (where an attacker reverse-engineers training data) and data poisoning (where malicious data is injected to corrupt model behavior). This requires strict access controls on training data and continuous monitoring of model outputs for anomalies.

Building these principles into your AI architecture from day one creates a system that is not only powerful but also practical, secure, and financially sustainable.
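Two of these requirements, strategic caching and resilient fallbacks, fit naturally into one thin wrapper around the AI call. The sketch below is a simplified, single-process illustration with hypothetical function names. A production system would more likely use a shared cache and a circuit breaker, but the control flow is the same.

```python
import time
from typing import Callable


class CachedWithFallback:
    """Wraps a primary (AI-backed) search with a TTL cache and a simpler fallback."""

    def __init__(
        self,
        primary: Callable[[str], list[str]],
        fallback: Callable[[str], list[str]],
        ttl_seconds: float = 300.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._primary = primary
        self._fallback = fallback
        self._ttl = ttl_seconds
        self._clock = clock
        self._cache: dict[str, tuple[float, list[str]]] = {}

    def __call__(self, query: str) -> list[str]:
        now = self._clock()
        cached = self._cache.get(query)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]  # fresh cache hit: no API call, no added latency

        try:
            result = self._primary(query)
        except Exception:
            # Degrade gracefully. Fallback results are deliberately not cached,
            # so the primary service is retried on the next request.
            return self._fallback(query)

        self._cache[query] = (now, result)
        return result


calls = {"primary": 0}


def flaky_ai_search(query: str) -> list[str]:
    """Hypothetical AI search that fails for one specific query."""
    calls["primary"] += 1
    if query == "boom":
        raise TimeoutError("upstream timed out")
    return [f"ai:{query}"]


def keyword_search(query: str) -> list[str]:
    """Hypothetical rule-based search used when the AI service is down."""
    return [f"keyword:{query}"]


search = CachedWithFallback(flaky_ai_search, keyword_search)
print(search("boots"), search("boots"), calls["primary"])  # second call is cached
print(search("boom"))  # primary fails, fallback answers
```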

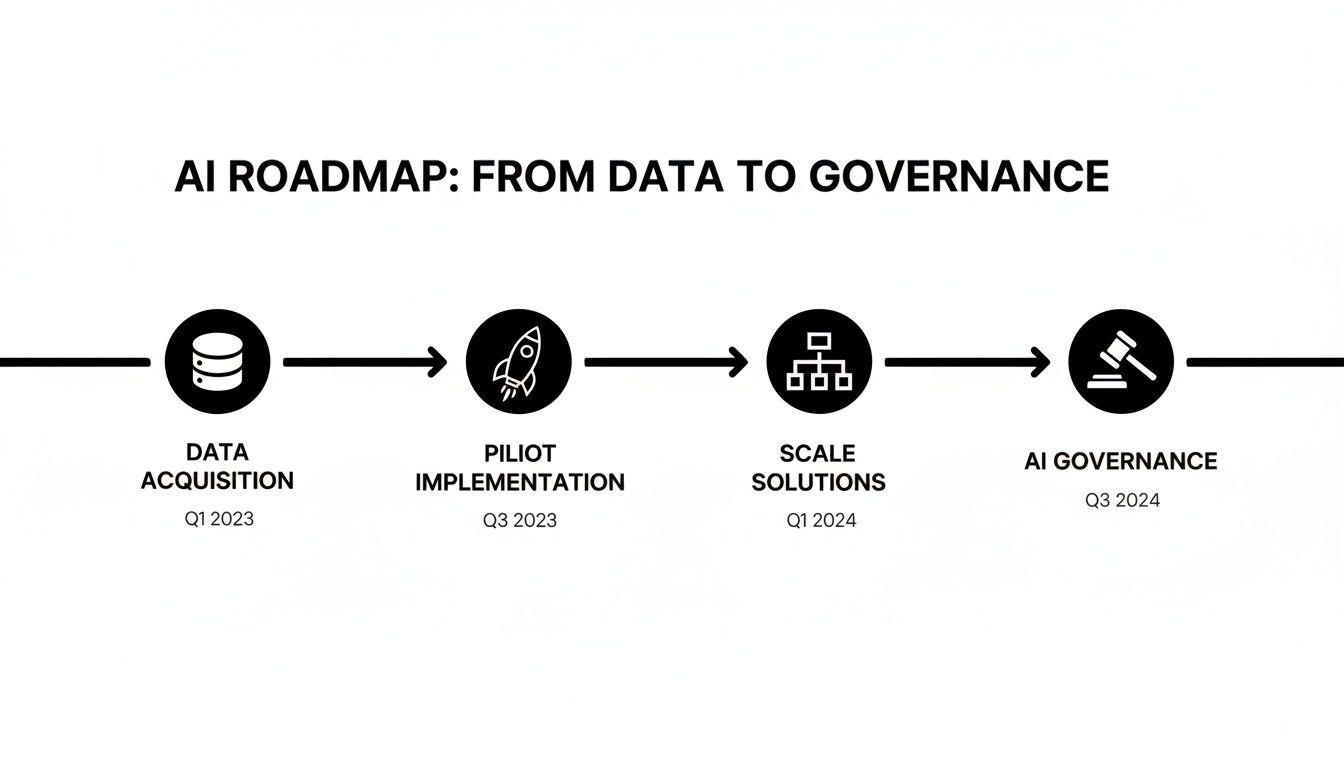

A Phased Implementation Roadmap for Retail AI

Attempting to implement retail artificial intelligence across an entire organization at once is a common cause of failure. It leads to budget overruns, operational disruption, and solutions that do not meet user needs. A phased, incremental roadmap is the more pragmatic approach. It delivers measurable value at each step, reduces risk, and builds the internal momentum needed to justify future investment. This approach treats AI implementation like product development: start small, prove value, and scale systematically.

Phase 1: Build the Data Foundation

No AI model can function without clean, accessible, and reliable data. This initial phase is the most critical and involves building the data infrastructure that will fuel all subsequent work. The primary objective is to unify information from disparate systems like POS, ERP, and e-commerce platforms. This requires standardizing formats, cleaning data, and establishing a central repository (e.g., a data lake or warehouse). Success is measured not by AI outputs, but by data accessibility and reliability.
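A small sketch of what "standardizing formats" can look like in practice: two source systems describe the same kind of sale with different field names, types, and date formats, and each gets an adapter into one shared schema. The record layouts below are invented for illustration and do not reflect any particular POS or e-commerce product.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class SaleEvent:
    """Unified schema that every downstream pipeline and model reads."""
    sku: str
    quantity: int
    sold_at: datetime
    channel: str


def from_pos(record: dict) -> SaleEvent:
    """Adapter for a hypothetical POS export (ISO timestamps, 'item_code', 'qty')."""
    return SaleEvent(
        sku=record["item_code"].strip().upper(),
        quantity=int(record["qty"]),
        sold_at=datetime.fromisoformat(record["ts"]),
        channel="pos",
    )


def from_ecommerce(record: dict) -> SaleEvent:
    """Adapter for a hypothetical web-shop export (day/month/year, string quantities)."""
    return SaleEvent(
        sku=record["sku"].strip().upper(),
        quantity=int(record["quantity"]),
        sold_at=datetime.strptime(record["ordered_at"], "%d/%m/%Y %H:%M"),
        channel="ecommerce",
    )


events = [
    from_pos({"item_code": "SKU-1 ", "qty": 2, "ts": "2024-06-01T10:15:00"}),
    from_ecommerce({"sku": "sku-1", "quantity": "1", "ordered_at": "01/06/2024 10:20"}),
]

# After normalization, the same product is recognizable across channels.
total_units = sum(e.quantity for e in events if e.sku == "SKU-1")
print(total_units)  # 3
```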

Phase 2: Pilot a Single, High-Impact Internal Use Case

With a data foundation in place, the next step is a tightly scoped pilot project designed to solve one specific, high-impact internal problem. This approach proves value quickly and allows for testing the architecture in a low-risk environment. Avoid customer-facing applications at this stage. A strong candidate is an AI-powered search tool for internal product and merchandising teams. This allows staff to query the product catalog using natural language, drastically reducing the time required for tasks like finding comparable products or building collections for marketing campaigns.

The success of this pilot is measured by internal adoption and efficiency gains. When product managers use the tool daily because it saves them hours of manual work, the business case for AI is validated, building credibility for the next phase.

This timeline illustrates the four stages, showing how a successful AI roadmap progresses from foundational infrastructure to enterprise-wide governance.

As the visual shows, each phase builds on the last, ensuring complexity is introduced incrementally and governance is a continuous thread.

Phase 3: Scale the Winner and Pilot a Complementary Use Case

Once the first pilot has proven its value, Phase 3 proceeds on two tracks: scaling the initial solution and introducing a second, complementary use case. Scaling involves hardening the pilot’s architecture, improving its performance, and formally embedding it into daily workflows, transitioning it from an experiment to a production system. A suitable second use case is automated product description generation. By fine-tuning an LLM on the company’s brand voice, the system can generate solid first drafts of product copy, freeing up the content team for more strategic work. You can read more about other applications of AI in retail on our blog.

Phase 4: Establish Enterprise-Wide Governance and Integration

The final phase focuses on establishing a central AI governance framework. The goal is to ensure that all future AI projects are built securely, ethically, and cost-effectively, leveraging reusable components and best practices. This is where a center of excellence is formed to standardize tools and oversee compliance.

Key activities include:

- Building Reusable Components: Creating a library of proven models, APIs, and architectural patterns.

- Implementing Centralized Monitoring: Deploying dashboards to track cost, performance, and model drift across all AI services.

- Formalizing Governance Policies: Documenting clear rules for data handling, model validation, and human-in-the-loop workflows to ensure compliance with regulations like GDPR and NIS2.
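One way centralized monitoring can quantify drift is the Population Stability Index (PSI), which compares how a feature or model score was distributed at training time with how it is distributed now. The sketch below is one possible approach, not a prescribed one. The bin counts and the 0.2 alert threshold are assumptions to be tuned against your own data.

```python
import math


def population_stability_index(
    expected_counts: list[int],
    actual_counts: list[int],
    epsilon: float = 1e-6,
) -> float:
    """PSI = sum over bins of (actual_share - expected_share) * ln(actual_share / expected_share).

    Both lists must use the same bins. Shares are floored at `epsilon` so that
    an empty bin does not cause a division by zero or log of zero.
    """
    if len(expected_counts) != len(actual_counts):
        raise ValueError("expected and actual must have the same number of bins")

    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)

    psi = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        expected_share = max(expected / expected_total, epsilon)
        actual_share = max(actual / actual_total, epsilon)
        psi += (actual_share - expected_share) * math.log(actual_share / expected_share)
    return psi


ALERT_THRESHOLD = 0.2  # assumption for this example, not a universal constant

# Basket-value distribution at training time vs. this week (four price bands).
training_bins = [250, 250, 250, 250]
this_week_bins = [100, 200, 300, 400]

psi = population_stability_index(training_bins, this_week_bins)
print(f"PSI = {psi:.3f}, drift alert: {psi > ALERT_THRESHOLD}")
```

A value of zero means the two distributions match exactly, and larger values indicate a bigger shift. Tracking this per model on a shared dashboard gives the center of excellence a single, comparable drift signal.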

Success at this stage is measured by the organization’s ability to deploy new, safe, and effective AI solutions quickly. Retail AI ceases to be a series of disparate projects and becomes a core strategic capability.

Retail AI Implementation Maturity Model

| Phase | Primary Objective | Key Activities | Success Metrics |
|---|---|---|---|
| 1: Foundation | Establish a clean, accessible data infrastructure. | Data unification, pipeline creation, central repository setup. | Data availability, reliability, query speed. |
| 2: Pilot | Prove value with a single, low-risk internal use case. | Develop and deploy a focused solution (e.g., internal search). | Team adoption, time saved, efficiency gains. |
| 3: Scale | Scale the first solution and introduce a second use case. | Harden pilot architecture, deploy a new pilot (e.g., content generation). | Positive ROI on scaled solution, successful new pilot deployment. |
| 4: Enterprise | Integrate AI as a governed, enterprise-wide capability. | Create a center of excellence, build reusable components, formalize governance. | Speed of new deployments, cost efficiency, compliance adherence. |

By following this phased approach, you transform AI from a collection of isolated experiments into a core engine for continuous improvement across the entire business.

Measuring the Business ROI of Retail AI

An AI project is successful only if it delivers a measurable business impact. While technical metrics like model accuracy are important for engineering teams, the definitive measure of success for founders and CTOs is its effect on business Key Performance Indicators (KPIs). The objective of implementing retail artificial intelligence is to improve outcomes that directly affect the bottom line.

The first step is to establish a clear performance baseline before deploying any AI solution. Without this, it is impossible to quantify the lift provided by the investment.

Connecting AI Use Cases to Business KPIs

The KPIs tracked must be directly tied to the specific problem the AI system was designed to solve. A generic dashboard is insufficient. You must focus on the metrics that matter for each use case to build clear evidence of financial return.

Different AI applications will have different measures of success:

- Inventory Optimization: KPIs include stockout rate (percentage of time a product is unavailable) and inventory carrying costs. The goal is to reduce both.

- E-commerce Personalization: Success is measured by increases in conversion rate, average order value (AOV), and customer lifetime value (CLV).

- AI-Powered Customer Service: The focus is on operational efficiency and customer satisfaction. Key metrics include average agent response time, first-contact resolution rate, and Customer Satisfaction (CSAT) scores.

Measuring the ROI of AI requires a disciplined shift from tracking technical performance to monitoring business outcomes. By linking every AI initiative to a specific financial or operational metric, you can build a clear, data-driven case that the technology investment is creating tangible value.

From Technical Metrics to Financial Return

Once baselines are established and the correct KPIs are being tracked, improvements can be translated into a clear financial return. For example, a 5% reduction in stockouts can be directly linked to a specific amount of recaptured revenue. Similarly, a 10% increase in conversion rate from a new personalization engine has a clear monetary value. This framework provides a robust business case, demonstrating that AI is not just another IT project but a strategic investment contributing to profitability.
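The arithmetic behind those two examples can be made explicit. The sketch below reads both percentages as relative improvements, and every input figure (demand value, baseline stockout rate, share of demand lost during a stockout, traffic, order value) is an illustrative assumption, not a benchmark.

```python
def stockout_revenue_recaptured(
    annual_demand_value: float,
    stockout_rate: float,
    relative_reduction: float,
    lost_sale_share: float,
) -> float:
    """Revenue recaptured when the stockout rate falls by `relative_reduction`.

    lost_sale_share is the fraction of demand during a stockout that is truly
    lost (not deferred or substituted); it must be estimated per business.
    """
    lost_before = annual_demand_value * stockout_rate * lost_sale_share
    lost_after = lost_before * (1 - relative_reduction)
    return lost_before - lost_after


def conversion_uplift_revenue(
    annual_sessions: int,
    conversion_rate: float,
    relative_uplift: float,
    average_order_value: float,
) -> float:
    """Extra revenue when the conversion rate rises by `relative_uplift`."""
    baseline_revenue = annual_sessions * conversion_rate * average_order_value
    return baseline_revenue * relative_uplift


# 5% fewer stockouts on 10M of annual demand, 8% baseline stockout rate,
# half of stockout demand assumed lost.
recaptured = stockout_revenue_recaptured(10_000_000, 0.08, 0.05, 0.5)

# 10% higher conversion on 1M sessions, 2% baseline conversion, order value 60.
uplift = conversion_uplift_revenue(1_000_000, 0.02, 0.10, 60.0)

print(f"Recaptured revenue: {recaptured:,.0f}")  # about 20,000
print(f"Conversion uplift:  {uplift:,.0f}")      # about 120,000
```

Comparing these figures against the system's total cost of ownership turns a technical improvement into a return-on-investment statement. Both results depend heavily on the measured baseline, which is why that baseline has to exist before deployment.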

Common Questions on Retail AI Implementation

When leadership plans their first retail artificial intelligence projects, several practical questions consistently arise. Clear, pragmatic answers are essential for navigating the technical and business trade-offs inherent in building effective AI systems.

Here are the most common concerns we hear from CTOs, founders, and IT managers.

Should we build custom models or use third-party APIs?

This is not an either/or decision; it is about selecting the right tool for the specific job, considering the use case and data sensitivity. For generic tasks like summarizing product descriptions, an API from a provider like OpenAI is often faster and more cost-effective. However, for specialized functions that involve proprietary data—such as fraud detection or demand forecasting based on unique sales history—a custom-trained model creates a significant competitive advantage. A hybrid strategy is often optimal: use APIs for general capabilities and invest in custom models for core, differentiating business functions.

What is the biggest hidden cost in an AI project?

The most common budgeting error is underestimating the costs that follow initial model development. The largest hidden costs are in ongoing data management, infrastructure maintenance, and governance. This includes maintaining data pipelines, storage, continuous monitoring for model drift, and the human resources required for oversight and human-in-the-loop workflows.

Focusing solely on the initial build without budgeting for these ‘Day 2’ operational costs is a primary reason AI projects fail to deliver long-term ROI. Plan for operational expenses to be a substantial part of the total cost of ownership.

How do we ensure our AI systems comply with regulations like GDPR?

Compliance cannot be an afterthought; it must be designed into the system’s architecture from the outset. Attempting to bolt on privacy features later is a common and critical failure point.

Key architectural strategies include:

- Data Minimization: Collecting and processing only the data that is absolutely essential for the model’s function.

- Anonymization: Stripping personal identifiers from data before it is used in any training process.

- Access Controls: Implementing strict, role-based access controls for both data and models.

- Data Deletion: Engineering systems to effectively and permanently remove data to honor a ‘Right to be Forgotten’ request.
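The first two strategies can be sketched as a single preprocessing step applied before data reaches any training pipeline. The field names and allow-list below are hypothetical. Note that replacing an identifier with a keyed hash is pseudonymization, not full anonymization: such data is generally still treated as personal data under GDPR, so this step reduces exposure but does not remove the data from scope.

```python
import hashlib
import hmac

# Data minimization: only these fields are allowed to reach the model pipeline.
ALLOWED_FIELDS = {"sku", "quantity", "store_id"}


def pseudonymize_id(customer_id: str, secret_key: bytes) -> str:
    """Replace a customer ID with a keyed HMAC-SHA256 token.

    The same ID and key always yield the same token, so purchase histories can
    still be linked, but the token cannot be reversed without the key.
    """
    return hmac.new(secret_key, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record: dict, secret_key: bytes) -> dict:
    """Drop every non-essential field and swap the customer ID for a token."""
    cleaned = {key: value for key, value in record.items() if key in ALLOWED_FIELDS}
    cleaned["customer_token"] = pseudonymize_id(record["customer_id"], secret_key)
    return cleaned


# In practice the key would come from a secrets manager, never from source code.
key = b"example-key-for-illustration-only"

raw = {
    "customer_id": "C-1001",
    "email": "jane@example.com",
    "full_name": "Jane Doe",
    "sku": "SKU-1",
    "quantity": 2,
    "store_id": "S01",
}

safe = minimize_record(raw, key)
print(sorted(safe))  # ['customer_token', 'quantity', 'sku', 'store_id']
```

Keeping the key in a separate, access-controlled system means that the link between tokens and real customers is held outside the model pipeline.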

At Devisia, we build AI-enabled systems with a focus on pragmatic architecture, long-term maintainability, and robust governance. If you are ready to turn your business vision into a reliable digital product, explore our approach at https://www.devisia.pro.