A data management platform is a centralised system for collecting, organizing, and activating large volumes of data from disparate sources. Its primary function is to combat data fragmentation, transforming a chaotic collection of information into structured, actionable audience segments.

For technical and business leaders, a DMP is not merely another tool; it is a strategic architectural decision to impose order on data chaos.

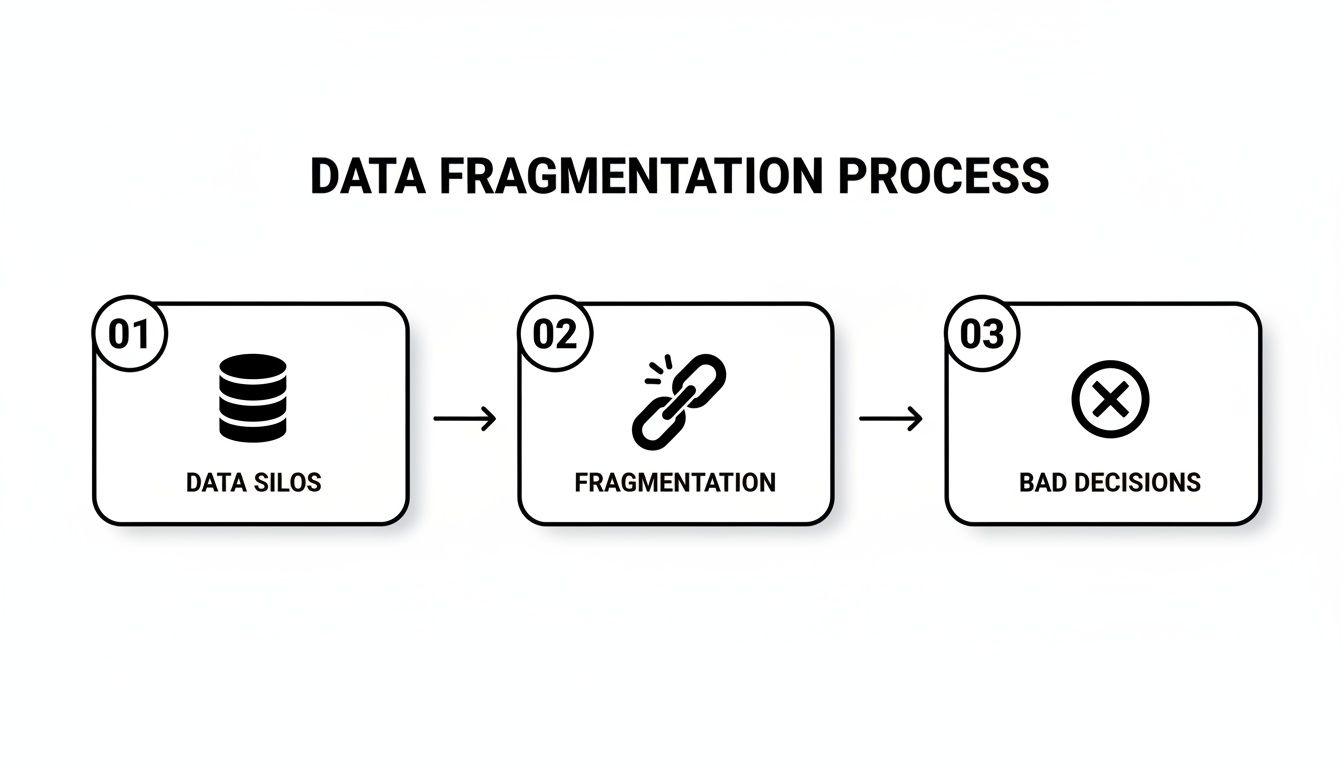

The Problem: Data Fragmentation and Its Consequences

Most organisations operate on fragmented data. Customer information is often distributed across disconnected systems: CRMs, marketing automation tools, web analytics, transactional databases, and third-party applications.

This creates isolated data silos, each with its own structure, identifiers, and access rules. This isn’t just an IT management headache; it’s a systemic weakness that directly undermines core business objectives.

When data is disconnected, creating a coherent view of the customer journey becomes impossible. This directly impacts everything from marketing personalisation to high-level strategic planning. Marketing teams cannot deliver consistent user experiences, product teams lack a unified view of user behavior, and compliance managers struggle with data lineage and consent enforcement.

The Risks of a Naive Approach

Ignoring data fragmentation introduces significant technical and business risks. Without a central system for data governance, organisations inevitably face these challenges:

- Inconsistent Customer Experiences: A user unsubscribing from emails via one system might continue receiving promotions from another. This not only creates a disjointed experience but also erodes user trust and can violate consent.

- Compliance and Security Failures: Fulfilling data subject requests under regulations like GDPR becomes a manual, error-prone process. A fragmented architecture makes it nearly impossible to enforce data retention policies or demonstrate compliance during an audit, exposing the organisation to significant financial penalties.

- Inefficient AI and Analytics: Training effective AI models or deriving meaningful business intelligence requires clean, unified data. When data is siloed, engineering teams spend more time on data cleansing and entity resolution than on value creation, resulting in poor model performance and unreliable analytics.

A data management platform isn’t just another tool to add to the stack. It is a foundational architectural choice that addresses the root cause of data chaos, enabling organisations to build reliable, scalable, and compliant digital systems.

A Strategic Architectural Response

Viewing a data management platform as a strategic architectural component shifts the conversation from marketing tactics to core infrastructure. It establishes a unified layer where data is ingested, standardized, and enriched before being distributed to downstream systems.

For example, by resolving user identities from different touchpoints, a DMP ensures a single customer profile is recognized across platforms—whether a user is browsing the website, using a mobile application, or interacting with a support chatbot.

This centralisation enables effective data integrity and governance. Universal policies for data access, consent management, and retention can be applied system-wide, embedding compliance into the architecture by design. For technical leaders, this represents a shift from a reactive, patchwork approach to a proactive, structured one. For a deeper analysis of the legal framework, our guide to understanding the Data Processing Agreement offers valuable context.

Ultimately, a well-architected data management platform becomes the backbone for all data-driven activities within an organization.

What Is a Data Management Platform Under the Hood

A data management platform (DMP) is not a general-purpose database. It is more accurately described as a data refinery—a purpose-built system designed to process raw, high-volume data from numerous sources and transform it into structured, actionable audience segments.

Its primary technical function is to construct a coherent picture of anonymous user behavior. It ingests data, cleanses it, resolves different identifiers, and groups users based on behavioral or demographic attributes. This process turns chaotic data streams into a strategic asset.

Without this centralised processing, organisations are left with fragmented data, which almost invariably leads to flawed analysis and poor strategic decisions.

As the diagram illustrates, disconnected data silos create a cycle of poor decisions. A DMP is engineered specifically to break this cycle by enforcing data unification at a central point.

The Engineering Blueprint of a DMP

A DMP relies on several core components working in concert. Each plays a critical role in transforming raw data points into actionable insights.

Here is a breakdown of the essential architecture:

| Component | Technical Function | Business Purpose |

|---|---|---|

| Data Ingestion Connectors | API endpoints and connectors for batch files (e.g., CSV) and real-time streams (e.g., event data from websites). | To centralise data from all sources, whether a nightly CRM export or live user clickstreams. |

| Identity Resolution Engine | Deterministic and probabilistic matching algorithms for anonymous identifiers like cookies and device IDs. | To unify user profiles, recognizing that a user on a laptop is the same individual using a mobile app. |

| Segmentation Tools | A user interface or API for building audiences with rule-based logic (e.g., (event = 'page_view' AND url CONTAINS 'pricing') > 3). | To allow marketers or analysts to define specific user groups for targeting or analysis. |

| Activation Endpoints | APIs and integrations that push audience data to external platforms (e.g., DSPs, marketing automation tools). | To make newly defined audience segments available for immediate use in advertising or personalisation campaigns. |

These components make a DMP more than a data store; they form a data processing pipeline optimized for audience management at scale.

The Technical Data Flow from Collection to Activation

The data journey begins with collection, typically via tag managers on a website or SDKs in a mobile application. These tools capture user events and transmit them to the DMP’s ingestion endpoints.

Server-to-server integrations are another common method, allowing systems like a CRM to pass data directly and securely to the DMP. This is a more reliable approach than relying on client-side (browser) tracking.

Once ingested, the identity resolution engine begins its work. It uses a user ID matching process to associate incoming data with existing profiles. For example, if a website visitor’s cookie ID can be deterministically linked to a mobile app’s device ID through a login event, their profiles are merged. This creates a richer, more complete view of their behavior across time and devices.

From an architectural standpoint, a DMP is an event-driven system built for aggregation and segmentation at scale. Its design prioritises handling high-volume, anonymous data streams for near-real-time audience building, which distinguishes it from other data systems.

The market for these platforms is mature, with significant investment in regions with advanced digital infrastructure. North America, for instance, holds a dominant 48.55% revenue share, driven by a strategic shift to first-party data in response to regulations like GDPR and CCPA. You can find further details in reports on data management market trends.

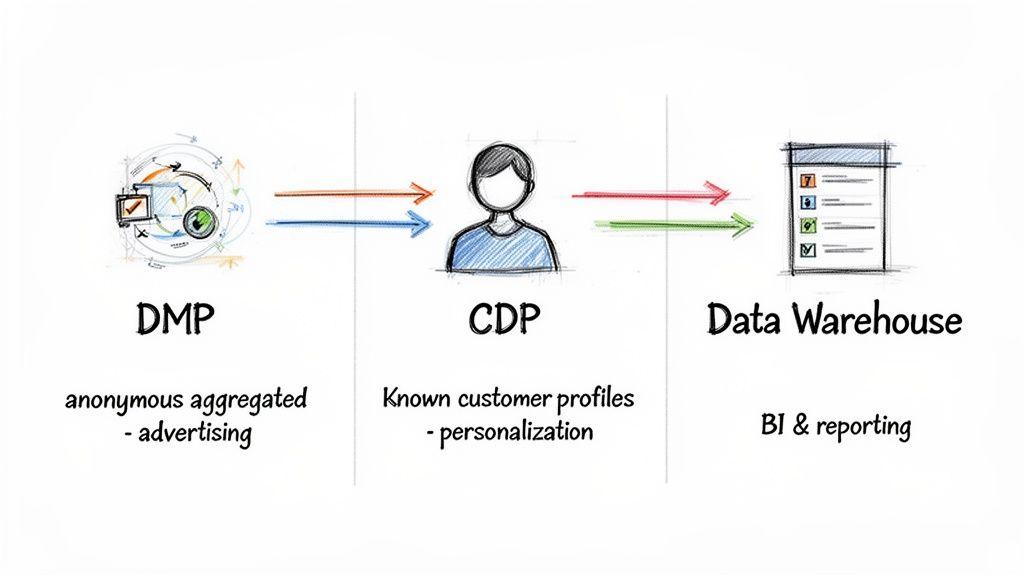

DMP vs. CDP vs. Data Warehouse: An Engineer’s Guide

In discussions about data infrastructure, the terms DMP, CDP, and Data Warehouse are often used interchangeably. From an engineering perspective, this conflation is problematic. While all three manage data, their underlying architectures, data models, and core purposes are fundamentally different.

Selecting the wrong tool for a given use case leads to architectural friction, creates technical debt, introduces security vulnerabilities, and ultimately results in failure to meet business objectives. The marketing language may sound similar, but their internal mechanics are distinct.

A Data Management Platform (DMP) is architected for the anonymous web—its primary function is large-scale audience building for advertising. A Customer Data Platform (CDP) is focused on known individuals, creating a single, persistent profile for personalisation. A Data Warehouse is a different class of system entirely, designed for historical business intelligence (BI) and complex analytical reporting.

Understanding these distinctions is the first step toward building a data stack that is coherent and fit for purpose.

Core Architectural Distinctions

A DMP is engineered to handle massive volumes of third-party and anonymous first-party data. Its job is to ingest behavioral signals—ad impressions, clicks, site visits—from millions of anonymous users.

This data is inherently short-lived, typically tied to cookies or mobile Ad-IDs with a retention period of around 90 days. This makes DMPs effective for programmatic advertising but unsuitable for building long-term customer relationships. The system is optimized for high-speed ingestion and segmentation, not for storing sensitive Personally Identifiable Information (PII).

A CDP, by contrast, is architected around a persistent, unified customer profile. It is designed to ingest first-party data from every touchpoint where an organization interacts with a known customer—CRM, e-commerce platform, mobile app, support desk.

This data explicitly includes PII and is intended to be durable, evolving over the entire customer lifecycle. Architecturally, CDPs are optimized for real-time data synchronization and activation to enable one-to-one interactions, such as triggering a specific email immediately after a user performs an action in an application.

The classic Data Warehouse is a relational database designed for complex, heavy-duty analytical queries, not real-time customer engagement. It serves as the historical system of record for structured and semi-structured data from across the business—finance, operations, sales, and more. Its architecture is optimized for query performance for BI and reporting, typically fed by batch ETL/ELT processes that run on a scheduled basis (e.g., nightly).

Here’s the fundamental architectural trade-off: DMPs are built for anonymous scale, CDPs for individual identity, and Data Warehouses for historical analysis. Trying to force one to do another’s job is a recipe for expensive failure.

A Practical Comparison for Technical Leaders

Misaligning the tool with the use case is a common cause of costly re-platforming projects. To make an informed decision, leaders must look past marketing materials and focus on the engineering realities.

This table provides a pragmatic comparison for technical evaluation.

DMP vs. CDP vs. Data Warehouse: Technical Comparison

| Attribute | Data Management Platform (DMP) | Customer Data Platform (CDP) | Data Warehouse |

|---|---|---|---|

| Primary Data Type | Anonymous, third-party, and aggregated first-party data (cookies, device IDs). | Known, first-party PII (email, phone, user ID) and associated behavioral data. | Structured and semi-structured historical business data (transactions, logs, etc.). |

| Primary Use Case | Digital advertising, audience segmentation, and lookalike modeling. | Personalised marketing, cross-channel journey orchestration, unified customer view. | Business intelligence, historical reporting, and deep strategic analytics. |

| Data Retention | Short-term (typically 30-90 days). Data is transient by design. | Long-term and persistent, covering the entire customer lifecycle. | Long-term and permanent for historical trend analysis. |

| Real-Time Capability | Near-real-time for audience activation; often batch-based for ingestion. | Architected for real-time data ingestion, profile unification, and activation. | Primarily batch-oriented (ETL/ELT). Not designed for real-time interaction. |

| Key Users | Advertisers, media buyers, marketing analysts. | Marketers, product managers, customer service teams. | Data analysts, business intelligence teams, data scientists, executives. |

These platforms are often complementary, not mutually exclusive, in a modern data stack.

An organization might use a DMP to acquire new audiences, a CDP to nurture them once they become known customers, and a Data Warehouse to analyze their long-term value and other business metrics. The correct choice depends on the specific problem being solved.

How to Evaluate a Modern Data Management Platform

Selecting a data management platform is a significant architectural commitment, not just a software procurement exercise. A successful implementation creates a core strategic asset; a poor choice results in technical debt, compliance risks, and operational friction.

A thorough evaluation must extend beyond feature checklists to scrutinize the platform’s underlying architecture, scalability, and governance capabilities. A system’s true value is revealed not in a sales demo but under production-level data loads and strict compliance constraints.

Scalability and Performance

A modern DMP must be capable of ingesting high-volume data streams and activating segments with low latency. Evaluating its underlying architecture is critical to understanding its real-world performance.

Key questions to ask vendors include:

- Ingestion Throughput: Can the platform handle large batch files (e.g., a multi-gigabyte CRM export) and a high-velocity stream of real-time events (e.g., millions of website clicks per hour) without creating processing bottlenecks? Demand specific performance metrics, such as events per second.

- Query and Segmentation Latency: What is the time required to build a complex audience segment and propagate it to activation endpoints? A system that requires hours to sync is ineffective for timely, responsive campaigns.

- Infrastructure and Elasticity: Is the platform built on a scalable cloud architecture (e.g., Kubernetes, serverless functions)? It must be able to automatically scale resources based on demand to manage costs and maintain performance during traffic spikes.

A platform that cannot scale efficiently will become a liability, leading to missed opportunities and escalating infrastructure costs.

Data Governance and Privacy by Design

In a regulatory environment shaped by GDPR, NIS2, and DORA, data governance is not an optional feature but a foundational requirement. A platform with a superficial approach to privacy represents a significant business risk. A robust data management platform must provide granular controls to enforce compliance by design.

From an architectural perspective, privacy is not a feature you bolt on. It must be woven into the platform’s core, dictating how data is collected, processed, stored, and shared. This is a non-negotiable principle for any system handling user data today.

Your evaluation must confirm the platform has strong capabilities for:

- Consent Management: The ability to ingest, store, and act upon user consent signals in real time, respecting individual choices across all channels.

- Data Lineage Tracking: A clear, auditable trail documenting data provenance, transformations applied, and downstream distribution. This is essential for compliance audits under regulations like the EU Data Governance Act.

- Data Anonymisation and Pseudonymisation: The platform must provide built-in tools to remove or obscure personally identifiable information (PII) to minimize risk exposure and adhere to data minimization principles.

Integration, Extensibility, and Total Cost of Ownership

A DMP’s value is directly proportional to its ability to integrate with other systems. A platform with a closed, limited ecosystem will create new data silos, defeating its primary purpose. Prioritize platforms with a rich, well-documented API and a comprehensive library of pre-built connectors for both your MarTech stack and internal systems.

This need for better-integrated data systems is fueling significant market growth. For instance, global DMP market projections show a rise from US$ 3.8 billion in 2026 to US$ 9.7 billion by 2033, driven by the shift toward first-party data and stricter data governance regulations.

Finally, assess the Total Cost of Ownership (TCO), which extends far beyond the license fee. Factor in the costs of implementation, data migration, engineering resources for maintenance, and any usage-based fees (e.g., data volume, API calls). Only a comprehensive TCO analysis provides a realistic picture of the long-term investment.

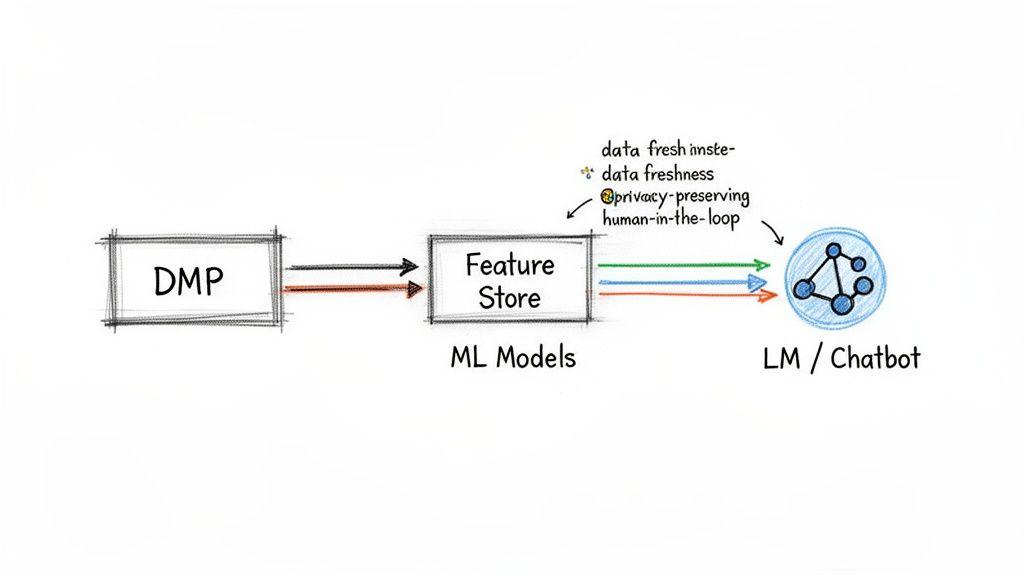

Integrating Your DMP with AI and LLM Workflows

A data management platform is more than an advertising tool; it is a source of structured, high-quality data for training and operating modern AI systems.

The audience segments and user attributes curated within a DMP are precisely the kind of features that improve the predictive power of machine learning models. They also provide the necessary context for Large Language Models (LLMs) to generate relevant and personalized outputs.

By moving from raw, unstructured data to a well-governed DMP, organizations can feed clean, pre-segmented audience attributes into their AI workflows. This can dramatically improve the performance of recommendation engines, predictive models, and generative AI applications like customer service chatbots.

The market is responding to this synergy. In the Asia-Pacific IT sector, for instance, the DMP market is projected to grow at a 26.85% CAGR, largely driven by AI adoption. Investments in AI-driven audience analytics are a key factor in these forecasts, as detailed in reports on the growing DMP market in APAC at mordorintelligence.com.

An Architectural Pattern for AI Integration

A robust architectural pattern for connecting a DMP to an AI workflow involves a feature store. A feature store is a centralized repository for machine learning features that stores, versions, and serves them to models. This architecture decouples the data preparation pipeline from model training and inference.

The data flow follows this pattern:

- DMP to Feature Store: An automated pipeline extracts structured audience data (e.g., segments like “high-intent buyers” or attributes like “lapsed user”) from the DMP. This data is transformed into features—the numerical or categorical variables understood by ML models—and loaded into the feature store.

- Model Training: Machine learning models access the feature store to pull consistent, versioned training data. This ensures that the features used for training are identical to those used for real-time inference, preventing training-serving skew.

- Real-Time Inference: When an LLM-powered chatbot or a recommendation engine needs to make a real-time decision, it queries the feature store for the current user’s attributes. This provides immediate context, enabling a more relevant and personalized response.

This pattern enhances system reliability, maintainability, and governance.

Practical Implementation Considerations

Integrating a DMP with AI is not a trivial task. A naive approach can lead to inaccurate models, privacy violations, or brittle systems that are difficult to debug and maintain.

Careful planning is required around several key constraints:

- Data Freshness and Latency: Define the data recency requirements for each use case. A real-time recommendation engine may require sub-second data freshness, while a predictive churn model might function effectively with daily data updates. The architecture must be designed to meet these latency requirements.

- Privacy Preservation: Never feed raw DMP data directly into a model without appropriate safeguards. Employ techniques like pseudonymisation, aggregation, and differential privacy to train models without exposing sensitive user information and to ensure compliance with regulations like GDPR.

- Prompt Engineering with DMP Insights: For generative AI, DMP segments can be used to dynamically construct more effective prompts. For example, a chatbot prompt for an e-commerce site could be augmented with a tag like

[user_segment: high_value_customer]to guide the LLM’s tone, style, and product recommendations.

Building AI systems without robust guardrails is a significant operational and reputational risk. Integrating DMP data requires strong observability to monitor model behavior, human-in-the-loop controls for sensitive decisions, and clearly defined fallback mechanisms for when the AI fails or produces an unsafe response.

The Build vs. Buy Decision for Your DMP

Deciding whether to build a custom data management platform or buy a commercial SaaS solution is a pivotal architectural choice with long-term consequences. A hasty decision can lead to vendor lock-in, technical debt, or a system that fails to meet unique business requirements.

The optimal path depends on an organization’s technical maturity, available resources, and strategic objectives.

Buying a commercial DMP offers a primary advantage: speed to market. A platform can be operational in weeks, with predictable costs and outsourced maintenance. However, this speed comes with trade-offs: you are bound by the vendor’s product roadmap, data model, and integration capabilities, which may not align with your specific logic or future needs.

Building a custom DMP provides complete control and flexibility. Every component, from identity resolution to data activation, can be engineered to exact specifications. This enables deep integration with proprietary systems and can become a significant competitive advantage. This control, however, requires a substantial and ongoing investment in specialized engineering talent, infrastructure, and operational support.

Key Questions to Guide Your Decision

To make an informed decision, leaders must conduct an honest assessment of internal capabilities and strategic priorities.

Your team should address the following questions:

- Core Competency: Is building and operating complex data infrastructure a core competency of your engineering team, or is their time better invested in developing your primary product?

- Time-to-Market: How quickly do you need to activate your data to achieve business goals? If the need is urgent, buying is almost always the faster path to value.

- Uniqueness of Requirements: Is your data strategy based on standard marketing use cases, or do you have unique data sources, identity models, or activation channels that off-the-shelf platforms cannot accommodate?

- Long-Term Strategic Flexibility: Do you anticipate your data needs will evolve in ways that require a highly adaptable platform, or are your requirements stable and predictable?

- Resource Availability: Can you realistically recruit, retain, and fund a dedicated team of data engineers, security specialists, and infrastructure experts to build and maintain a custom DMP?

The build vs. buy question is fundamentally about where you want to invest complexity. Buying a DMP outsources infrastructure complexity. Building a DMP allows you to invest that complexity into creating a unique business advantage.

Tailoring the Decision to Your Organisation

The right choice varies significantly based on company size and maturity.

For Startups and SMBs: Buying is almost always the pragmatic first step. Engineering resources are scarce and should be focused on the core product. A SaaS DMP delivers immediate value, allowing the organization to validate its data strategy without a large capital investment. A custom or hybrid model can be explored later as the company scales. Deployment models are a key consideration, and our guide on on-premises vs. cloud solutions provides further context.

For Enterprises: The decision is more nuanced. An enterprise may have the resources to build a custom solution that becomes a major strategic asset. However, the maintenance burden and opportunity cost are substantial. This must be weighed against the feature maturity and speed of commercial SaaS platforms. Often, a hybrid approach is the most practical path: leveraging a commercial DMP for core functionality and augmenting it with custom-built components for specific, high-value tasks.

Key Takeaways for Technical Leaders

For a technical leader, a data management platform is an engineering and architectural concern. It represents a strategic shift from a state of data chaos to one where data is a predictable, governed, and valuable asset. It is not a tool to be delegated to marketing but a foundational component of the technical architecture that impacts reliability, compliance, and competitive capability.

To implement a DMP successfully, focus on these core principles.

A Unified Strategy Is Non-Negotiable

The era of disconnected data silos is a liability. A unified data strategy is essential for any modern digital business. This requires architecting a central system to ingest, standardize, govern, and enrich data from every touchpoint—from CRMs and analytics tools to proprietary backend systems.

A DMP provides this cohesive layer, transforming disparate data points into a single, actionable view of the audience. Without this unified foundation, efforts in personalisation, compliance, and AI will be unreliable and difficult to scale.

A data management platform is an architectural choice for taming data complexity. It enforces structure and governance by design, ensuring data integrity is a systemic property, not an afterthought.

Know Your Tools Architecturally

DMP, CDP, and data warehouse are not interchangeable terms. Confusing their architectural purpose leads to expensive mistakes that are difficult to remediate. The engineering distinctions are critical:

- DMPs are built for scale with anonymous, aggregated, and transient data, primarily for advertising use cases.

- CDPs are architected around known, persistent customer profiles containing PII, designed for one-to-one personalisation.

- Data Warehouses are optimized for historical BI and complex analytical reporting, not real-time activation.

Choosing the right tool requires a deep understanding of its core data model, retention policies, and intended use case. Fighting a tool’s innate architecture is a losing battle.

Evaluate on Governance and Integration

When evaluating a data management platform, look beyond marketing features. The most critical criteria are architectural and operational. Prioritize platforms with robust data governance capabilities, including granular consent management and transparent data lineage. This is non-negotiable for complying with regulations like GDPR and NIS2.

Equally important is integration and extensibility. A modern DMP must interoperate with a broad ecosystem. It requires rich, well-documented APIs to connect with your existing MarTech stack and, crucially, your bespoke internal systems. A platform with a closed, walled-garden architecture simply creates another expensive silo.

Prepare for Modern AI Workflows

Finally, view the DMP as a strategic enabler for AI and LLM workflows. The structured, segmented audience data it produces is ideal for training more accurate predictive models. It also provides the necessary context for generative AI applications to deliver truly useful and personalized interactions.

By integrating your DMP with a feature store, you can build a reliable data pipeline that feeds clean, governed data directly into your ML models and LLM prompts. This is where the long-term strategic value of a unified data platform is fully realized.

At Devisia, we believe that privacy and performance are architectural choices. We specialise in building reliable digital products and AI-enabled systems with a focus on long-term maintainability and clear business value. If you need a technical partner to help you build or modernise a robust data architecture, visit us at https://www.devisia.pro to learn how we can help.