An Advanced Driver Assistance System (ADAS) is not a single technology but a complex assembly of functions engineered to improve vehicle safety and reduce driver workload. These systems operate as a digital co-pilot, using sensors like cameras and radar to monitor the vehicle’s environment and compensate for the split-second delays in human reaction where most accidents originate.

Their purpose is not to replace the driver but to assist. This distinction is critical for defining product scope, managing liability, and making sound engineering decisions.

Deconstructing The Advanced Driver Assistance System

For a technical leader, an ADAS must be understood as a deeply integrated system of sensors, processors, and software that interprets the vehicle’s surroundings in real-time. The objective is to provide an additional layer of awareness and mitigate the cognitive load on the driver.

The fundamental engineering problem is to bridge the gap between human perception and the physics of a moving vehicle. This involves building a robust safety net, not achieving full autonomy—a crucial boundary for setting realistic product goals and managing technical trade-offs.

Core Functional Categories

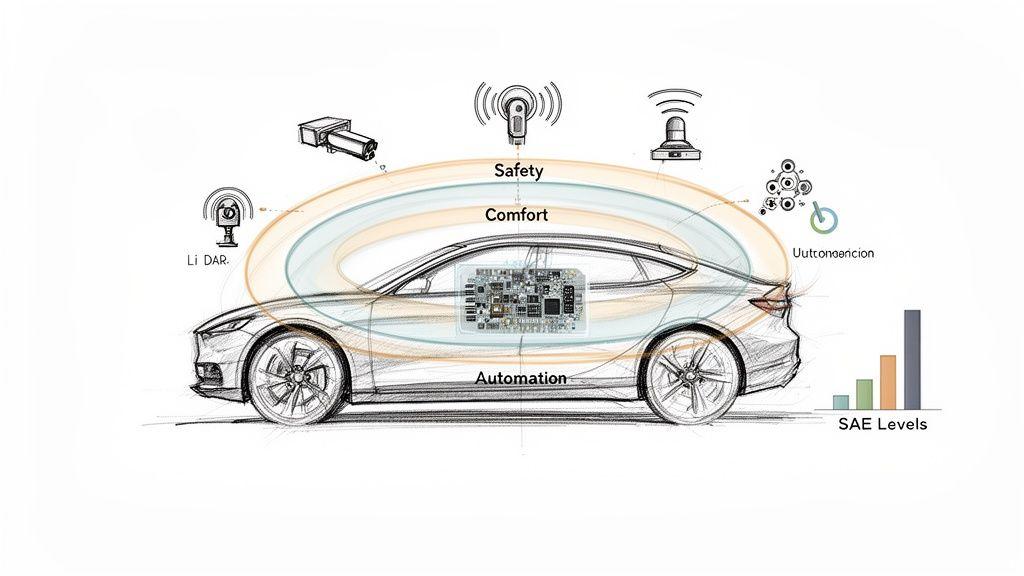

To structure development and align market demands with regulatory constraints, ADAS functions can be classified into three primary categories.

- Safety Functions: These features are designed to prevent or mitigate collisions. Examples include Automatic Emergency Braking (AEB), which applies the brakes to avoid an imminent crash, and Blind Spot Detection, which alerts the driver to unseen vehicles.

- Comfort and Convenience Functions: These systems aim to reduce driver strain, particularly during long-distance travel or in dense traffic. Adaptive Cruise Control (ACC), which maintains a set distance from the vehicle ahead, and Lane Keeping Assist (LKA) are prominent examples.

- Automation Functions: These features assume specific driving tasks under well-defined operational conditions. Traffic Jam Assist, for instance, can manage steering, braking, and acceleration in low-speed, congested environments.

Market adoption reflects the value of these systems. By 2026, features like forward collision warning and automatic emergency braking have surpassed 90% market penetration in new vehicles, transitioning ADAS from a luxury option to a standard, software-defined vehicle component.

At its core, an ADAS is an exercise in applied risk mitigation. The system continuously calculates the probability of adverse events—a collision, a lane departure—and acts to reduce that risk. Its effectiveness is determined not by a single algorithm, but by the reliability of the entire hardware and software chain.

This rapid integration is a key aspect of the Internet of Things in the automotive sector, where connected systems are now integral to vehicle function and safety.

SAE Levels of Driving Automation

To provide a common language for automation capabilities, the industry relies on the SAE J3016 standard, which defines six levels of driving automation. This framework clarifies the division of responsibility between the system and the human driver.

For engineers and product leaders, these levels are not just labels; they represent distinct architectural, validation, and liability thresholds.

SAE Levels Of Driving Automation At A Glance

| SAE Level | System Responsibility | Driver Responsibility |

|---|---|---|

| Level 0 | Provides warnings only (e.g., forward collision warning). | Full-time driving, steering, braking, and acceleration. |

| Level 1 | Provides either steering or speed control (e.g., adaptive cruise control). | Supervises the system and performs all other driving tasks. |

| Level 2 | Provides both steering and speed control (e.g., lane centring + ACC). | Must remain engaged, monitor the environment, and be ready to take over. |

| Level 3 | Handles all driving tasks under specific, limited conditions. | Can disengage but must be ready to take back control when requested. |

| Level 4 | Handles all driving tasks within a defined operational area (geofenced). | Can fully disengage; no expectation to take back control within the area. |

| Level 5 | Handles all driving tasks on all roads and under all conditions. | Becomes a passenger. No driver needed. |

The vast majority of ADAS features deployed today operate at Level 1 and Level 2. The transition to Level 3 represents a significant technical and legal hurdle, as it marks the first point at which the system, not the driver, is responsible for monitoring the driving environment.

An advanced driver assistance system is a complex ecosystem of hardware and software. For product and engineering teams, understanding the components and their trade-offs is the first step toward building a system that is not only effective but also commercially viable and safe.

This begins with the sensor suite, which serves as the vehicle’s sensory interface to the world.

Choosing the right sensor mix is a high-stakes architectural decision, balancing cost, performance, and reliability across all potential operating conditions. Each sensor type has inherent strengths and weaknesses, making redundancy a core design requirement, not an optional extra. A poorly architected sensor suite introduces unacceptable systemic risks.

The Sensory Input Layer

A modern ADAS constructs its environmental model by fusing data from a heterogeneous mix of sensors. No single sensor can provide a complete and reliable picture, especially in adverse weather or complex urban environments.

-

Cameras: As the primary visual sensors, cameras are excellent for color recognition, reading road signs, and identifying lane markings. Their primary limitation is degraded performance in low light, heavy precipitation, or direct glare. They provide rich 2D data crucial for object classification.

-

Radar: Using radio waves, radar is a robust solution for measuring the distance and relative velocity of objects, even in weather conditions that impair cameras. It is the workhorse for functions like Adaptive Cruise Control (ACC) and Forward Collision Warning. Its main trade-off is lower resolution, making it difficult to classify the type of object detected.

-

LiDAR (Light Detection and Ranging): LiDAR constructs a high-precision 3D point cloud of the environment by emitting laser pulses. This provides an unmatched ability to detect objects and discern their shape. While its performance is excellent, heavy fog, snow, or rain can cause scattering and attenuation. Historically, it has also been a more expensive component.

-

Ultrasonics: These are simple, low-cost sensors used for low-speed, short-range applications like parking assist and blind-spot monitoring. They are effective for proximity detection but have a very limited operational range.

The sensor-first perspective has significant market implications. The global ADAS market is projected to be worth over USD 40 billion by 2026, with sensors comprising a major share. However, the true differentiator is increasingly shifting toward software. AI-driven improvements in real-time data processing can enhance system capabilities, sometimes reducing reliance on the most expensive hardware. For product leaders, this signals a strategic focus: software and AI are the defensible assets. You can dive deeper into these market dynamics and sensor trends for a closer look.

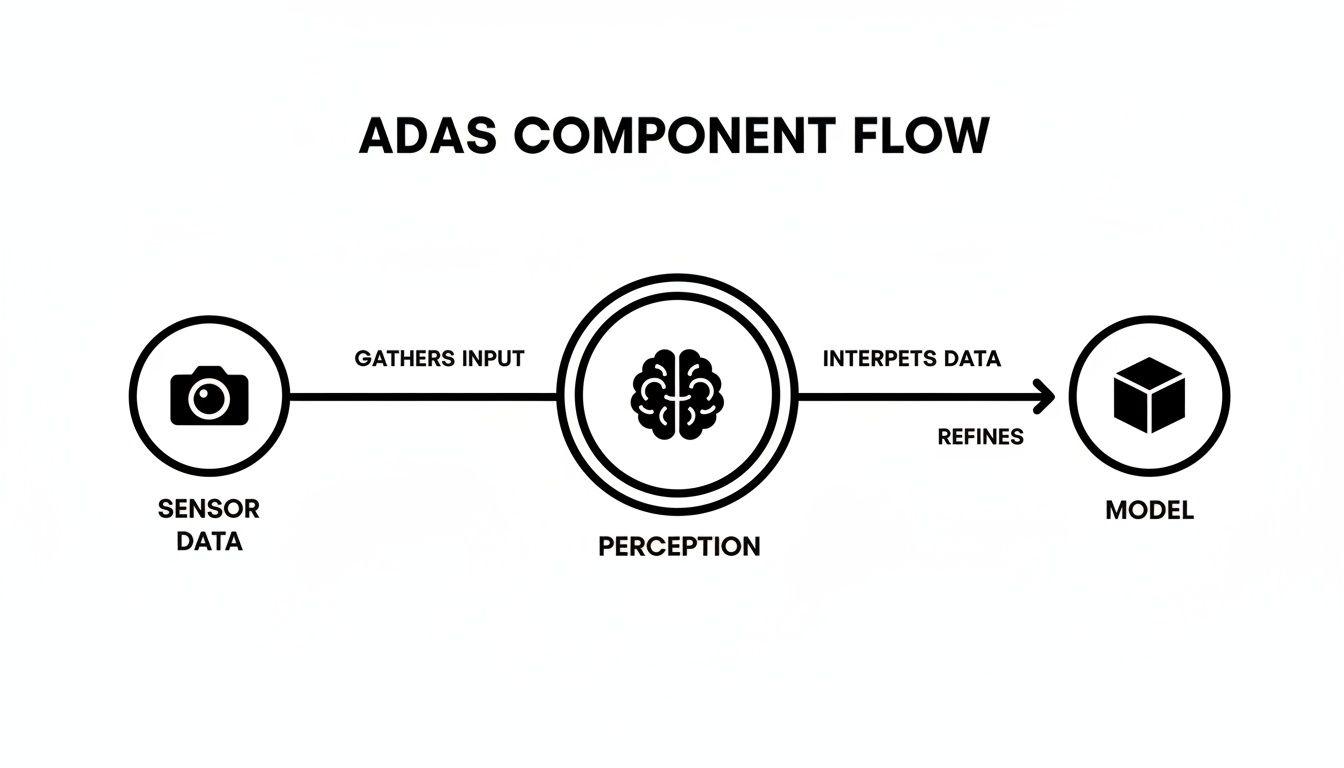

The Software Perception and Fusion Layer

Raw sensor data is a stream of unstructured noise. The critical engineering task of the perception layer is to process this data and build a coherent, actionable model of the vehicle’s environment. This is where sensor fusion is paramount.

Sensor fusion is the process of combining data from multiple sensors to generate a more accurate and robust understanding than any single sensor could achieve alone. For example, a camera may identify an object as a pedestrian, while a radar simultaneously confirms its precise distance and velocity. Fused together, they create a high-confidence assessment upon which the system can act.

Sensor fusion isn’t just about layering data; it’s about architecting for resilience. When one sensor is compromised—a camera blinded by sun glare—the system must gracefully rely on other inputs, such as radar, to maintain a safe operational state. This is a fundamental principle of functional safety design.

When designing the fusion system, teams face a critical architectural choice:

-

Early Fusion (or Tight Coupling): This approach combines raw, low-level data from different sensors at the beginning of the processing pipeline. By feeding this unified data stream into a single complex algorithm, it is possible to detect features that might be missed by processing sensor data independently. The trade-off is significant complexity and tight coupling between sensors and the fusion algorithm, making the system harder to test and maintain.

-

Late Fusion (or Loose Coupling): This is the more common and modular approach. Each sensor’s data is processed independently to generate high-level object lists (e.g., “camera detects a car at 50m,” “radar detects an object at 51m”). These object lists are then combined (“fused”) in a subsequent step. While potentially less nuanced than early fusion, this architecture is far simpler to build, test, scale, and maintain, allowing for independent development of each sensor stack.

The choice between early and late fusion has profound implications for system architecture, development velocity, and validation strategy. For most teams, a late fusion strategy offers a more pragmatic path to delivering value incrementally while ensuring long-term maintainability.

The Role Of AI And Machine Learning In ADAS

With a fused environmental model, the system’s core intelligence—driven by Artificial Intelligence (AI) and Machine Learning (ML)—comes into play. These models are responsible for transforming a flood of sensor data into predictive insights and, ultimately, driving decisions.

For an engineering leader, the challenge is not just theoretical. It involves understanding the practical trade-offs required to deploy these models reliably on power- and resource-constrained automotive-grade hardware.

The ADAS decision-making process funnels raw sensor inputs through a perception model, which attempts to build a comprehensive understanding of the environment.

This pipeline—from raw data to perception to an AI model’s interpretation—is the foundation for every action the system takes.

From Object Detection To Scene Understanding

The workhorse AI models in many ADAS platforms today are Convolutional Neural Networks (CNNs). A CNN is a specialized pattern-recognition model, trained to detect and classify objects like vehicles, pedestrians, and traffic signs from camera imagery. It excels at answering the question, “What objects are in this scene?”

However, identifying objects is insufficient for safe navigation. A robust ADAS must also understand context. This requirement is driving the industry toward more sophisticated architectures like Vision Transformers (ViTs).

Unlike a CNN that processes an image in localized patches, a Transformer can assess the entire scene holistically. This global attention mechanism allows it to model the relationships between objects, providing a more comprehensive grasp of the situation—an analogue to how a human driver perceives a dynamic road environment.

The shift from CNNs to Transformers represents a move from simple object recognition to genuine scene comprehension. It is the difference between identifying a ball and predicting that a child might run into the road after it. This predictive capability is what elevates an ADAS from a reactive warning system to a proactive safety partner.

The Engineering Reality Of Edge AI Deployment

Developing a high-performance AI model is one challenge; deploying it reliably inside a moving vehicle is another entirely. Automotive systems are highly constrained environments, and the engineering lead’s role is to balance model performance with the physical limitations of the hardware.

Key deployment constraints include:

- Latency: ADAS decisions must be made in milliseconds. Any delay in model inference—the time it takes to process live data—can have catastrophic consequences. This necessitates that all safety-critical processing occurs on the vehicle’s edge hardware, as the latency of a round trip to the cloud is unacceptable.

- Power Consumption: A vehicle’s electrical system has a finite power budget. Computationally intensive AI processing consumes significant energy, which can directly impact an EV’s range or a conventional vehicle’s efficiency. Model efficiency is a hard engineering requirement.

- Model Size and Quantization: Large, high-performance models trained in data centers are often too large for the specialized, cost-effective processors used in vehicles. This requires techniques like quantization, which reduces the numerical precision of the model’s weights (e.g., from 32-bit floating-point to 8-bit integers) to shrink its footprint and accelerate inference. The trade-off is a potential loss of accuracy that must be rigorously quantified and validated.

Deploying AI in an automotive context is an exercise in ruthless optimization. It requires a deep, practical understanding of how software performance is coupled with hardware constraints to ensure the system’s intelligence is not only powerful but also efficient and dependable.

Software Architecture And Orchestration For Reliability

Building a reliable ADAS is an exercise in disciplined software engineering. The architecture chosen at the outset determines the system’s robustness, its fault tolerance, and its maintainability over the vehicle’s lifecycle.

For any CTO or product leader, reliability is not a feature to be added later; it is an emergent property of the system’s architecture. The design must assume that components will fail and ensure the system degrades gracefully to a safe state rather than collapsing catastrophically.

Monolithic Versus Microservices Architectures

The architectural debate between monolithic and microservices approaches has critical safety implications in the automotive domain. A traditional monolithic architecture, where all functions are tightly coupled in a single executable, may seem simpler to deploy on a single Electronic Control Unit (ECU).

However, this approach is brittle. A bug in a non-critical function, such as traffic sign recognition, could destabilize the entire system and compromise essential safety features like emergency braking. Furthermore, updates are perilous, as a minor change requires re-validating the entire monolith.

A microservices-inspired design, adapted for automotive constraints, offers a more resilient alternative. This approach decouples functions into independent services that communicate over a well-defined internal bus.

- Isolation: A fault in the lane-keeping service is contained and will not affect the forward-collision warning service. This fault isolation is a cornerstone of functional safety.

- Independent Updates: A new pedestrian detection model can be deployed without modifying or re-validating the adaptive cruise control logic. This enables faster, safer iteration cycles.

- Scalability: Computational resources on high-performance computers (HPCs) can be allocated dynamically based on task criticality, rather than treating all functions as equal.

An architectural mindset focused on decoupling and fault tolerance is the only viable path. A reliable ADAS must be designed for failure, not just functionality. The system must be architected to remain in a safe state even when individual components are compromised.

For teams new to this paradigm, exploring the principles of Service-Oriented Architecture (SOA) can provide a solid foundation for building modular and resilient systems.

Real-Time Operating Systems And Data Transport

The software services themselves require a deterministic execution environment. An ADAS depends on a Real-Time Operating System (RTOS) to guarantee that critical tasks complete within their specified deadlines. Unlike a general-purpose OS, an RTOS provides the predictable scheduling necessary for safety-critical functions. A missed deadline is not a performance issue; it is a system failure.

The communication fabric between these components is equally critical. The traditional Controller Area Network (CAN bus) remains a reliable, battle-tested solution for low-bandwidth commands like “apply brakes.”

However, CAN bus lacks the bandwidth to handle the massive data streams from modern cameras, radar, and LiDAR. For this, Automotive Ethernet serves as the high-speed backbone, transporting raw sensor data to central processing units where AI models run.

Maintainability Through OTA Updates And Observability

Finally, a modern ADAS architecture must be designed for the vehicle’s entire operational life. Over-the-Air (OTA) updates are no longer a luxury but an essential mechanism for delivering security patches, bug fixes, and feature enhancements after the vehicle is sold. A well-designed modular architecture makes OTA updates significantly safer by allowing for targeted updates to individual services instead of flashing the entire system.

This entire lifecycle must be supported by robust observability. The system must continuously log performance metrics, errors, and operational data. This provides engineering teams with a real-time view of fleet health, facilitates remote diagnostics, and enables validation that an OTA update has performed as intended in the field. This feedback loop is the hallmark of a mature and maintainable ADAS product.

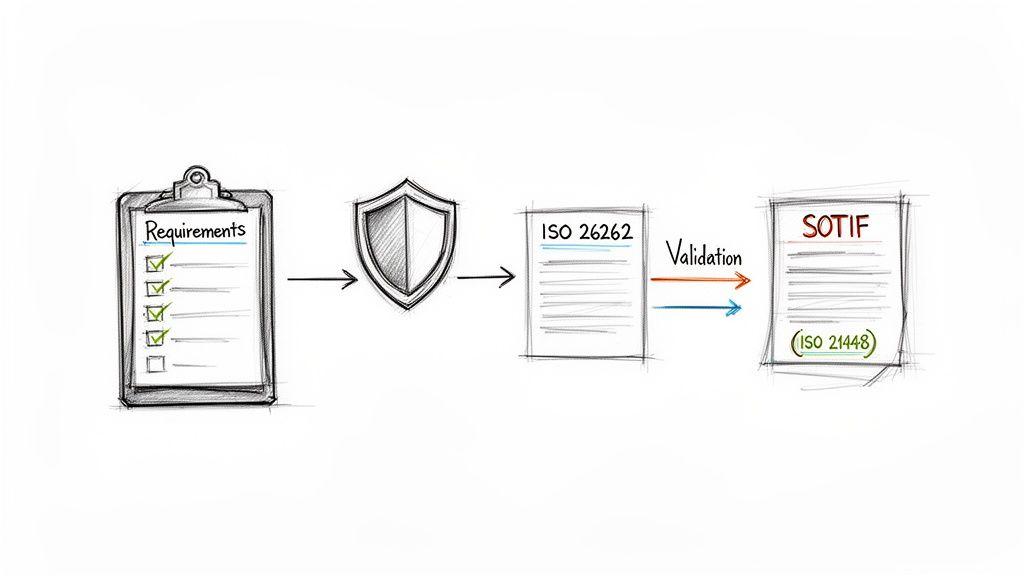

Navigating Safety Standards And Compliance

If safety compliance is treated as a final checklist item, the project is already on a path to failure. For any ADAS, safety must be an integral part of the system’s architecture from the initial concept. Neglecting this leads to costly redesigns, validation bottlenecks, and significant launch delays.

The automotive industry operates under strict safety standards for good reason—the stakes are immense. Two standards are central to any ADAS development: ISO 26262 (Functional Safety) and ISO 21448 (Safety of the Intended Functionality, or SOTIF). While related, they address fundamentally different types of risk.

This is not merely a regulatory burden. The ADAS market is on a trajectory to exceed USD 200 billion by 2034, driven by a global demand to reduce traffic fatalities. This demand is for intelligent, AI-powered systems that meet the highest safety and reliability standards. You can explore the market projections here.

ISO 26262: Managing System Malfunctions

ISO 26262 is the foundational standard for automotive functional safety. Its focus is on mitigating risks arising from malfunctioning electrical and electronic systems. It provides a framework for building systems that do not cause harm when a sensor fails, a software bug occurs, or a processor faults.

The standard mandates a systematic process of hazard analysis and risk assessment, classifying potential failures using Automotive Safety Integrity Levels (ASILs), and implementing corresponding safety measures. This process spans the entire development lifecycle, from concept to production.

ISO 26262 is about managing the known unknowns—foreseeable hardware and software malfunctions. The goal is to anticipate these failures and design robust countermeasures, such as redundant components or fail-safe operational modes, to ensure the system fails gracefully.

For a software team, this requires demonstrating code robustness, fault detection, and the ability to transition to a safe state. Methodologies like the V-model, which integrate verification and validation throughout development, are essential. For a deeper look, see our guide on how the V-model supports rigorous software development.

SOTIF (ISO 21448): When a Functioning System Behaves Unsafely

While ISO 26262 addresses system failures, SOTIF (ISO 21448) confronts a more modern and nuanced problem: unsafe behavior arising from a system that is functioning correctly according to its design, but is faced with a real-world scenario that exceeds its performance limitations.

These are the unknown unknowns. The system’s sensors perceive the world, and its code executes without error, but the logic still leads to a hazardous outcome.

A classic SOTIF scenario:

- A camera correctly detects an unusual, stationary object in the road.

- The perception model, despite being trained on vast datasets, has never encountered this specific object and misclassifies it as a harmless plastic bag.

- The vehicle proceeds at speed, when the object was in fact a large, solid piece of fallen cargo.

SOTIF is focused on identifying and mitigating these performance limitations and corner cases. It requires a massive investment in validation, including millions of miles of simulation and real-world driving, to expose the edge cases where the system’s algorithms may prove insufficient.

ISO 26262 vs SOTIF: A Practical Comparison

For product teams, understanding this distinction is critical for allocating resources and managing risk. This table summarizes the core differences.

| Aspect | ISO 26262 (Functional Safety) | ISO 21448 (SOTIF) |

|---|---|---|

| Core Focus | Preventing hazards from E/E system malfunctions. | Preventing hazards when the system is functioning without faults. |

| The Problem | ”The component broke." | "The system worked as designed, but the outcome was unsafe.” |

| Example | A radar sensor fails and stops sending data, causing the ACC to disengage. | A camera misinterprets a shadow as an obstacle, causing unnecessary braking. |

| Root Cause | Random hardware failures, systematic software bugs. | Performance limitations, sensor insufficiencies, edge cases, foreseeable misuse. |

| Primary Solution | Redundancy, fault detection, fail-safe mechanisms, robust code. | Extensive testing, simulation, data collection, and algorithm refinement. |

| Keywords | Fault, failure, hazard, ASIL, safe state. | Performance limitation, triggering event, unsafe scenario, validation. |

In summary, ISO 26262 ensures the system is built correctly, while SOTIF ensures it behaves correctly in a messy, unpredictable world. A safe and commercially successful ADAS product requires mastery of both disciplines.

From Blueprint to Reality: A Guide for Product and Engineering Teams

Building an advanced driver assistance system is a significant undertaking. Treating it as just another software project is a direct path to failure. Success requires a pragmatic strategy that balances innovation with the hard realities of safety, technical debt, and long-term maintainability.

For product and engineering leaders, the primary challenge is to develop a realistic roadmap. This begins with prioritizing foundational safety functions. A team must master capabilities like reliable Automatic Emergency Braking (AEB) before pursuing more complex comfort or automation features.

Each new function adds layers of complexity and novel failure modes. This introduces technical debt that must be managed proactively, not deferred until it triggers a costly recall.

The Build vs. Buy Decision

The “build vs. buy” question is central to any ADAS strategy. Attempting to build every component in-house is rarely feasible. The degree of specialization required for sensors, perception models, and safety validation is immense. A hybrid approach is almost always the most prudent path.

- Buy: Commodity components like cameras and radar, or established software modules for basic functions like lane detection, should be sourced from specialized vendors. These suppliers have invested heavily in validation and offer economies of scale that are difficult to replicate.

- Build: Your team’s core intellectual property and competitive advantage lie in the integration and orchestration layers. This is where you create value: the sensor fusion logic, the decision-making algorithms, and the software architecture that defines your system’s unique behavior.

Your core IP isn’t the individual sensors or algorithms. It’s the intelligence that weaves them into a cohesive, dependable system. Focus your engineering firepower on that architectural glue and the decision logic. That is how you build a defensible product.

A Pragmatic Checklist for Vendors and Teams

Whether evaluating a third-party vendor or assessing your own team’s readiness, the fundamental questions remain the same. Look beyond marketing demonstrations and assess long-term viability. Any potential partner or internal project lead must provide concrete answers to these points:

-

Data and Validation Strategy: Where will the massive datasets for training and validation be sourced? How will you acquire, label, and manage this data? What is your simulation strategy for testing dangerous edge cases that cannot be safely replicated on public roads?

-

Architectural Integrity: Can they provide a clear walkthrough of the system architecture? How does it handle fault tolerance and fail-safe conditions? How are software components isolated to prevent cascading failures?

-

Long-Term Maintenance and OTA: What is the plan for delivering Over-the-Air (OTA) updates safely and reliably? How will you monitor the health and performance of the deployed system across the entire vehicle fleet?

-

Embedded Safety and Compliance: How are ISO 26262 and SOTIF principles integrated into the development process from day one? What documentation and evidence can be provided to substantiate safety claims?

Answering these questions with technical rigor provides a framework for building an ADAS that is viable, safe, and maintainable. It distinguishes durable market players from short-lived experiments.

Frequently Asked Questions from Technical Leaders

When developing an advanced driver assistance system, bridging the gap between technical possibility and operational reality is where projects succeed or fail. Here are critical questions that product and engineering leaders must address, with a focus on real-world trade-offs.

What Is The True Cost of Building an ADAS?

The initial procurement cost for hardware and software licenses is only a fraction of the total investment. The most significant expenses are embedded in the long-term lifecycle of development, validation, and maintenance.

This should be viewed not as a one-time product purchase, but as a long-term operational commitment. The primary cost drivers include:

- Data Operations: Acquiring, cleaning, labeling, and storing petabytes of data for model training and validation is a continuous and substantial operational expense.

- Validation and Simulation: The engineering hours and compute infrastructure required to run millions of virtual miles—in addition to extensive real-world testing—to satisfy standards like SOTIF are enormous.

- Compliance and Certification: Achieving and maintaining certifications like ISO 26262 requires rigorous documentation, strict process controls, and specialized expertise that must be integrated into the team’s daily workflow.

A budget that only accounts for sensors and initial software development is incomplete and will lead to failure. A realistic financial model must treat these long-term operational activities as core project costs.

The true investment in an ADAS isn’t the code written today. It is the infrastructure built to validate, deploy, and safely maintain that code for the next decade. You are committing to a process, not just a product.

How Should Data Privacy and GDPR Be Handled in an ADAS?

An ADAS is a prolific data-gathering device, capturing video of public spaces, driver behavior, and vehicle telemetry. This makes compliance with regulations like GDPR and other data protection laws a foundational architectural requirement, not a feature to be added later.

To embed privacy by design, your architecture must incorporate:

- Processing at the Edge: Whenever feasible, process sensor data directly on the vehicle. This minimizes the volume of raw, potentially identifiable data transmitted to the cloud.

- Systematic Anonymization: Before any data leaves the vehicle for fleet-wide analysis, it must be systematically scrubbed of all personally identifiable information (PII). This includes automated blurring of faces and license plates in video frames.

- Clear Data Governance: A robust data governance policy must be established, defining precisely what data is collected, its purpose, its retention period, and who has access. Ambiguity is a significant legal and reputational risk.

Attempting to retrofit privacy controls onto an existing architecture is a path to legal exposure and a catastrophic erosion of public trust.

Can ADAS Features Prevent All Accidents?

No, and it is imperative to be transparent about this limitation. An ADAS is engineered to assist a human driver and mitigate risk, not eliminate it. It functions as a sophisticated safety net, but one that has inherent limitations.

System effectiveness is constrained by sensor performance in adverse weather, unavoidable algorithmic edge cases, and the boundless unpredictability of the real world.

Managing expectations is critical. The driver is—and must remain—ultimately responsible for the vehicle’s safe operation. Positioning the technology correctly is non-negotiable for managing both legal liability and user trust.

Building a safe and reliable Advanced Driver Assistance System is a complex engineering marathon that demands a deep, pragmatic understanding of architecture, safety, and long-term maintenance. At Devisia, we specialize in turning ambitious technical visions into dependable, supportable digital products. We provide the end-to-end expertise to build and integrate the robust software systems that power the future of mobility.

Learn more about our approach at https://www.devisia.pro.